It wasn’t the overtly pornographic nature of the computer-generated image of Coralie Alison that most disturbed her. It was how, on a fake body contorted into a degrading pose she hadn’t performed, the small freckles on her arms were replicated. Each in its exact spot.

Alison, the operations manager at Collective Shout, an Australian lobby organisation dedicated to the prevention of the exploitation of women and girls, says the AI image wasn’t an isolated incident.

Alison and her colleagues have borne the brunt of online attacks since 2019, when they decided to combat artificial intelligence deepfakes and “nudify” apps – platforms where users can upload anybody’s face to be morphed into sexually explicit or vulgar content.

But now, a terrifying new way to use AI has emerged. Pictures and videos depicting Alison and her colleagues being raped or tortured, using excruciating details from their social media profile pictures, are being shared on social media, produced by anonymous online predators armed with AI tools.

“There were pictures of me with my face all sliced up with a knife, pictures of me with my throat slit and a dark figure behind me with the knife, and those photos were in my own bedroom at home,” Alison says.

“There were ones of me decapitated as well, like a man holding an axe in one hand and my head dripping with blood in the other, as well as me going through a human mincer, being minced alive … Those specific ones, I would say, were the worst that have ever been done of me.”

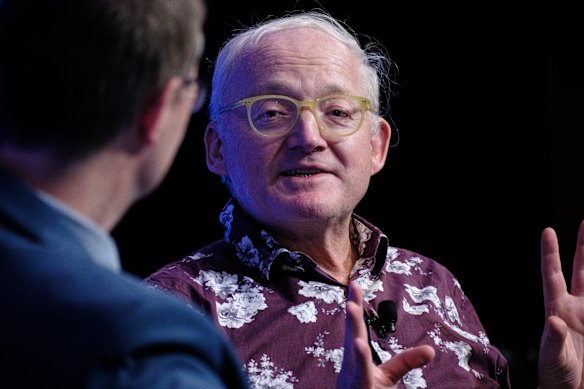

According to Toby Walsh, a professor of AI at the University of New South Wales, the use of AI to produce violent threats of death, rape and torture is nascent but likely to filter rapidly into the mainstream.

“These tools are now becoming widely, very cheaply available,” Walsh says. “The technology is now very accessible. It doesn’t require any expertise. Often doesn’t even require you to pay any money. Many of the services are free.”

UNSW professor Toby Walsh says violent AI-generated abuse material has the potential to threaten democracy. Credit: Oscar Colman

‘Threat to democracy’

Violent AI-generated abuse material has the potential to threaten democracy, warn Walsh and Melinda Tankard Reist, movement director of Collective Shout.

“You could easily classify this as a national security threat,” Walsh says. “If people outside Australia are making threats, and quite explicit, violent, death threats, to Australians, you could certainly see that if I were the security services, I would be incredibly worried.”

He said people within the armed forces, or with access to classified information, were open to significant blackmail threats using such content.

“Governments could be thrown this way by the strategic release of material like this … It’s even possibly our democracy could be compromised by people using these sorts of tools in malicious ways.”

Tankard Reist agrees. She and her colleagues have created a dossier of all the rape and torture deepfakes predators have made of them and shared on social media platform X.

“We’re gathering as much data as we can with engaged forensic experts and we’re going to use the evidence about the abuse, the cyber-terror hate campaign against us, to call for reform in Australia and on a global level,” Tankard Reist says.

Melinda Tankard Reist has been campaigning against the use of deepfakes. Credit: Janie Barrett

“No woman should be subjected to what we’ve been subjected to. It’s beyond us, what we’ve suffered individually … This has ramifications for democracy.”

The Australian Federal Police (AFP) prosecutes cases where a sexualised deepfake depicting a child is produced in Australia. The federal agency’s powers stop, however, if a deepfake involves a person over 18; those cases are investigated by the states and territories.

Meanwhile, the federal eSafety commissioner says that, depending on the nature of the deepfakes, the regulator could have powers under various complaint schemes to wrest distressing material from the internet.

“But while our complaint schemes can offer meaningful relief to Australians in the midst of an online crisis, eSafety is not empowered to scan or police the entire internet,” eSafety commissioner Julie Inman Grant says.

“We are already receiving reports containing ‘synthetic’ AI-generated child sexual abuse material, terror and extremist content, as well as deepfake images and videos created by teens to bully their peers.”

eSafety commissioner Julie Inman Grant. Credit: Edwina Pickles

The rapid deployment, increasing sophistication and popular uptake of generative AI mean deepfakes are becoming more convincing and harder to distinguish from reality, Inman Grant says. “Which is why a greater burden must fall on the purveyors and profiteers of AI to take a more robust approach, so they are engineering out misuse at the front end,” she says.

Last Monday, independent federal senator David Pocock introduced the My Face, My Rights bill before federal parliament, aiming to strengthen the eSafety commissioner’s powers to issue removal notices and formal warnings to technology companies and individuals.

What to do if a deepfake is made of you

eSafety strongly encourages Australians who are targeted by serious online abuse to report it to the platform where it appears.

If the platform fails to act, report it to eSafety.gov.au/report. If you are concerned for your safety as a result of any online abuse, you should report to police.

There are also criminal offences in some Australian states that cover deepfake intimate images, which are investigated by police.

Scraping ‘dark corners’ of the net

AI scrapes millions of websites across the internet to obtain information, directed by what technology companies want it to learn. As Walsh describes it, AI tools are merely a “mirror of what they are trained on”.

He says companies that offer AI – whether it’s social media like X’s Grok, Google’s Gemini or AI services such as OpenAI – can wire their technology not to accept certain requests or generate offensive material. However, it is much easier never to feed the AI violent content in the first place.

Technology companies are allowing their AI to scan the internet recklessly because they are motivated to have the most advanced product, Walsh says. Credit: Bloomberg

“If you’re just scraping The New York Times, there is much less you have to worry about than if you’re scraping the dark corners of 4chan,” Walsh says.

Technology companies can use safeguards, he says, to prevent inappropriate or criminal material from being produced with AI, such as instructions on how to make a Molotov cocktail or to self-harm.

“YouTube has been doing this for years because they don’t allow pornography … There are filters that can identify pornography by the amount of flesh that is just shown in the video,” Walsh says.

Technology companies are allowing their AI to scan the internet recklessly because they are motivated to have the most advanced product, Walsh says.

Microsoft’s spending on AI infrastructure soared to a record of about $US35 billion ($53 billion) in the September quarter after the company’s shares rose nearly 7 per cent in extended trading in July. At the time, Microsoft said sales at Azure, the cloud-computing business that has integrated AI, surpassed $US75 billion ($116 billion) on an annual basis.

‘Digital tools of terror against women’

A disturbing finding for Tankard Reist, after investigating rape deepfakes and being a victim of them herself, was that they almost always depicted women and girls.

“It goes beyond undressing and nudifying apps – as if that’s not bad enough – but you can turn any girl, any female school teacher, into specific genres of porn, including torture porn, sadism, forced impregnation, slavery, hentai porn, bondage. Any woman or girl,” Tankard Reist says.

The exponential growth of AI technology has powered a new proliferation of child pornography. Credit: Adobe Stock image/Artwork: Marija Ercegovac

“These are digital tools of terror against women.”

On Thursday, a British-based technology company banned Australians from accessing three nudify websites. It follows an enforcement effort by the eSafety regulator in September that found the company was responsible for the creation of sexual exploitation material of Australian school children on its platforms.

The eSafety regulator also said reports of image-based abuse of children have more than doubled in the past 18 months compared with the total number of reports in the seven years prior.

Four out of five of these reports involved girls.

“Shockingly, we found these services did little to deter the generation of synthetic child sexual abuse material by marketing alarming features such as undressing ‘any girl’, with options for ‘schoolgirl’ and ‘sex mode’ to name a few,” Inman Grant said.

While hardened by years of abuse, Alison said the issue of innocent women and girls having to reckon with deepfakes blazes at the front of her mind.

“I just think about all the schoolgirls around Australia that are going through this and might feel so isolated and alone and don’t have the power to push back and get the result they need,” Alison says.

“It actually gives me a renewed sense of enthusiasm to keep going, so these abusers that wanted to try and stop us … it’s backfired on them because I’m only more determined to see justice be done.”
