An Australian cancer surgeon is warning people against using AI tools like ChatGPT to diagnose health symptoms, after testing revealed the chatbot suggested delaying medical attention for a potential breast cancer sign.

Sanjay Warrier, an Associate Professor at the University of Sydney with the Royal Prince Alfred Academic Institute, told Yahoo Lifestyle the advice could be dangerous: some aggressive cancers progress within weeks, turning delayed treatment into a life-or-death risk.

“Some of my more savvy patients were using AI,” Warrier said.

“So, I put a question into ChatGPT about redness on the breast, and it suggested that I wait a few weeks to see if it improved.

“Some cancers are so aggressive that a few weeks can mean the difference between life and death.”

Warrier said Australians’ growing reliance on ChatGPT marks the start of a worrying trend, one that can make the artificial intelligence “dangerous”.

“ChatGPT is not a health service,” said Warrier, a council member for Breast Surgeons of Australia and New Zealand.

“It cannot examine you, it cannot feel a lump, and it cannot assess your individual risk.

“AI cannot replace a GP or specialist.”

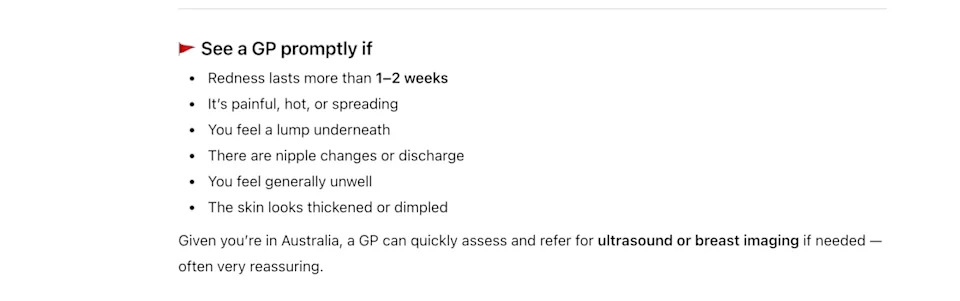

ChatGPT’s advice when asked about breast redness. Source: Yahoo Lifestyle Australia

Why are people turning to AI?

In June 2024, an Australian study conducted by the University of Sydney found 9.9 per cent of respondents had used ChatGPT to obtain health-related information during the preceding six months.

More concerningly, it also revealed 61 per cent had asked ChatGPT at least one higher-risk question, related to taking action that would typically require clinical advice.

“It’s convenient and cost-efficient, and it’s never nice to have an examination or talk about some symptoms, so I can understand why people are turning to AI,” Warrier said.

“But it comes down to the quality of the advice.”

False reassurance is the real risk

Warrier wants to spread the message that misplaced confidence is as big a risk as misinformation.

“If an online tool suggests something is likely benign, some people will delay seeking proper care,” he said.

And for those on the edge of denial, or who face other barriers to seeing a GP, a chatbot’s answer can provide false reassurance that justifies their delay.

“Our biggest driver for outcome is picking up symptoms early,” Warrier said, reiterating that a delay can be deadly.

“Time matters,” he said.

“You should not be typing [symptoms] into a chatbot hoping for reassurance.

“You should be booking an appointment.”

The breast cancer specialist said ‘technology can support healthcare, but it cannot substitute it’. Source: Sanjay Warrier

Symptoms aren’t simple — and AI can’t see the full picture

Every health symptom could point to a myriad of different conditions, as we all know from experience with “Dr” Google.

For example, typing “fatigued” into Google or an AI tool like ChatGPT throws up causes as disparate as lifestyle and diabetes.

“You lose perceptibility using AI,” Warrier said.

He said AI can also struggle to put symptoms into proper context.

While it provides general information, it can’t pick up subtle physical changes or factor in your personal medical history.

“We need to see that AI is not gospel,” he said.

“The perfect answer is not there yet, particularly in health.”

Warrier stressed the warning isn’t about rejecting technology altogether, but understanding its limits.

He said AI can be useful for improving health awareness and helping people learn about potential symptoms.

However, it cannot replace clinical judgement or a proper medical examination.

If something feels unusual, changes persist or concern lingers, he urged people not to rely on chatbot reassurance and instead seek professional advice.

“Technology can support healthcare, but it cannot substitute it,” he said.
