March 30, 2026

By Karan Singh

For owners of Tesla vehicles equipped with HW3, the wait for the latest FSD updates has become tense. FSD v12.6.4, the last update released for Tesla’s legacy hardware, arrived about 13 months ago, and it was only an incremental update within the same major build.

As Tesla’s end-to-end neural networks grow increasingly massive and complex, the AI team is struggling to fit its most capable versions of FSD, like v14, onto the older computers. Tesla has said it intends to prepare an FSD v14-lite build for HW3 vehicles in Summer 2026, but FSD development has slowed down drastically in recent months due to the focus on Robotaxi and Unsupervised FSD.

That leaves little time for the team to work on optimizing a modern build for legacy vehicles. However, a recent breakthrough from NVIDIA in the world of Large Language Models (LLMs) might just hold the conceptual key to how Tesla can keep HW3 highly capable without completely lobotomizing FSD.

The HW3 Bottleneck: It’s All About Memory

To understand the solution, we have to understand the bottleneck. While HW3 has less raw computational power than the newer AI4 hardware, its biggest limiting factor for modern AI is actually memory.

When you run a massive neural network, it requires a significant amount of working memory to function in real time. In LLMs like those behind ChatGPT, this working memory is called the KV (Key-Value) cache. It stores the attention keys and values already computed for the conversation so far, so the model doesn’t have to reprocess the entire chat history for every new token it generates.

Tesla’s FSD operates on a very similar principle. The car utilizes spatial-temporal memory to remember the driving context over time. If a pedestrian walks behind a parked delivery truck, the car’s temporal memory tracks that the pedestrian is still there, even if the cameras can no longer see them. As FSD gets smarter, this temporal memory cache grows larger, quickly exhausting the limited RAM available on the HW3 computer.
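
To put rough numbers on that memory pressure, here is a minimal sketch of how a transformer’s KV cache scales with context length. The layer count, head count, and head size are hypothetical values picked for illustration; they do not describe FSD or any particular LLM.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, tokens, bytes_per_value=2):
    """Approximate KV cache size.

    Each token stores one key vector and one value vector (hence the 2)
    per attention head, per layer. bytes_per_value=2 assumes 16-bit floats.
    """
    return 2 * layers * kv_heads * head_dim * tokens * bytes_per_value


# Hypothetical model dimensions, chosen only for illustration.
LAYERS, KV_HEADS, HEAD_DIM = 32, 8, 128

for tokens in (1_000, 10_000, 100_000):
    size_gib = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, tokens) / 2**30
    print(f"{tokens:>7} tokens -> {size_gib:.2f} GiB")
```

The cache grows linearly with the amount of context kept, which is why a longer memory quickly becomes a RAM problem on fixed hardware.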

NVIDIA’s 20x Compression Breakthrough

This is where NVIDIA’s latest innovation comes in. As reported by VentureBeat last week, NVIDIA’s researchers have introduced a new technique that shrinks the memory footprint of an LLM’s working cache by a staggering 20x.

The most important part is that they did it without changing the model’s actual weights.

The technique, called KV Cache Transform Coding (KVTC), borrows a concept from classical media compression formats like JPEG. Instead of permanently deleting information, the algorithm identifies the most critical components of the working memory and compresses the rest on the fly.
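
For a feel of what JPEG-style transform coding means, here is a minimal sketch in Python. It is not NVIDIA’s KVTC algorithm. It assumes a discrete cosine transform (the transform JPEG uses) and a single uniform quantization step, applied to a made-up signal standing in for cached data.

```python
import math


def dct(x):
    """Orthonormal DCT-II: re-express the signal as a sum of cosine waves."""
    n = len(x)
    out = []
    for k in range(n):
        s = sum(x[i] * math.cos(math.pi * (i + 0.5) * k / n) for i in range(n))
        scale = math.sqrt(1 / n) if k == 0 else math.sqrt(2 / n)
        out.append(scale * s)
    return out


def idct(c):
    """Inverse of dct(): rebuild the signal from its cosine coefficients."""
    n = len(c)
    out = []
    for i in range(n):
        s = c[0] * math.sqrt(1 / n)
        s += sum(
            c[k] * math.sqrt(2 / n) * math.cos(math.pi * (i + 0.5) * k / n)
            for k in range(1, n)
        )
        out.append(s)
    return out


def compress(x, step):
    """Transform, then round each coefficient to an integer multiple of step."""
    return [round(c / step) for c in dct(x)]


def decompress(codes, step):
    """Scale the integer codes back up and invert the transform."""
    return idct([v * step for v in codes])


# Hypothetical smooth signal standing in for one cached feature over time.
signal = [math.sin(i / 5) + 0.1 * i for i in range(32)]
codes = compress(signal, step=0.5)
restored = decompress(codes, step=0.5)

nonzero = sum(1 for v in codes if v != 0)
max_err = max(abs(a - b) for a, b in zip(signal, restored))
print(f"{nonzero} of {len(codes)} coefficients are nonzero; max error {max_err:.3f}")
```

A larger step zeroes out more coefficients and saves more space at the cost of a larger reconstruction error, which is the basic trade-off any transform codec tunes.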

Previously, to fit massive AI models onto constrained hardware, developers had to permanently alter the model through “quantization” or “pruning” (literally cutting out neural pathways). While this saves space, it often degrades the AI’s intelligence.

NVIDIA’s new approach avoids this entirely. By aggressively compressing the working memory during inference, the LLM maintains its original, uncompromised intelligence with less than a 1% accuracy penalty, all while using a fraction of the hardware memory.

Applying the JPEG Method to Neural Networks

While NVIDIA’s research is focused on text-based LLMs, the underlying math and architecture could potentially be adapted for the vision-based AI running in your Tesla.

If Tesla’s Autopilot engineering team applies a similar dynamic memory sparsification or transform coding to FSD’s spatial-temporal memory, the results for HW3 could be game-changing. By heavily compressing the “video memory” of the car’s recent surroundings in real time, Tesla could drastically reduce the total memory required to run the software.

Why does this matter? Because freeing up that memory cache means Tesla wouldn’t have to shrink the core intelligence of the neural network to make it fit.

Instead of delivering a heavily pruned v14-lite that removes millions of parameters and degrades the car’s driving capability, Tesla could ship a much more capable version of the v14 model to HW3. The car would still be running the highly advanced, end-to-end driving logic; it would just be utilizing a highly compressed, ultra-efficient JPEG-style temporal memory to stay within the hardware’s limits.

Squeezing the Silicon

There is no denying that HW3 is aging silicon. Eventually, the hardware will reach a hard ceiling where it simply cannot process the data fast enough to keep up with the demands of unsupervised autonomy.

However, NVIDIA’s KVTC breakthrough shows that the AI industry is finding radical new ways to optimize software inference without needing bigger, more expensive chips. As Tesla races to unify its fleet on the v14 architecture, advanced memory compression techniques like these are exactly the kind of tool the company could use to squeeze every last drop of capability out of its legacy hardware until the HW3 upgrade happens.

March 30, 2026

By Karan Singh

Tesla and SpaceX could soon become a single corporate behemoth, according to Wedbush Securities analyst Dan Ives. A recently issued research note predicted that Elon Musk’s two most valuable companies would merge into a single entity by 2027.

Ives argues that the groundwork is already being laid and points to the newly announced TERAFAB project as the ultimate catalyst for this historic combination.

The TERAFAB Connection

During the recent TERAFAB launch event in Austin, Elon Musk revealed a massive joint chip manufacturing facility intended to serve both Tesla and SpaceX simultaneously. According to Ives, this physical overlap of infrastructure is the vital first step toward merging their vast operations.

By building shared facilities to produce next-generation silicon like the AI5, AI6, and D3 space chips, the two companies are already operating on a unified hardware roadmap, which makes a formal corporate merger far more feasible in the near future.

Laying the Financial Groundwork

The financial ties between the two companies are also tightening at a rapid pace. Earlier this year, SpaceX officially acquired Musk’s artificial intelligence startup known as xAI, and as part of that transaction, Tesla’s previous $2 billion investment in xAI was converted directly into SpaceX shares. While this currently represents less than a 1% stake in SpaceX, it officially links the two companies financially for the first time.

The next major milestone on this timeline is a highly anticipated SpaceX initial public offering, which is reportedly targeting a $1.75 trillion valuation and aims to raise roughly $75 billion by mid-June 2026. Ives suggests that completing this public offering is a necessary step before any formal merger with Tesla can occur.

The Ultimate AI Ecosystem

Combining an automaker with a space exploration company might seem unorthodox, but Ives notes that this strategy is all about dominating the future of artificial intelligence. Musk has frequently stated his desire to build a vertically integrated innovation engine, and by merging Tesla and SpaceX, he would successfully consolidate his sprawling AI ecosystem under one unified roof.

This super-company would command the terrestrial robotics of Optimus alongside the autonomous driving data of Tesla and the orbital AI compute infrastructure of SpaceX. A merger would consequently give Tesla direct access to the massive space-based data centers necessary to train the next generation of neural networks without having to rely on an overloaded terrestrial power grid.

The Upside for Tesla

Despite the massive regulatory and antitrust hurdles a merger of this scale would undoubtedly face, Wedbush remains incredibly bullish on the prospect. Ives views this combination as the ultimate strategy for Musk to cement his control over a disruptive technology empire, and he has reiterated his Outperform rating on Tesla stock as a result.

He maintains a $600 price target for the automaker, which implies an upside of roughly 67 percent from current trading levels of $360. If this bold prediction holds true, 2027 could mark the dawn of the most powerful technology conglomerate in human history.
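
The implied upside is simple arithmetic on the two prices quoted above. A quick check in Python (the function name is just for illustration):

```python
def implied_upside(target_price, current_price):
    """Fractional gain needed to move from the current price to the target."""
    return (target_price - current_price) / current_price


# Figures from the article: $600 target, $360 current trading level.
print(f"{implied_upside(600, 360):.1%}")  # prints 66.7%
```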

March 30, 2026

By Karan Singh

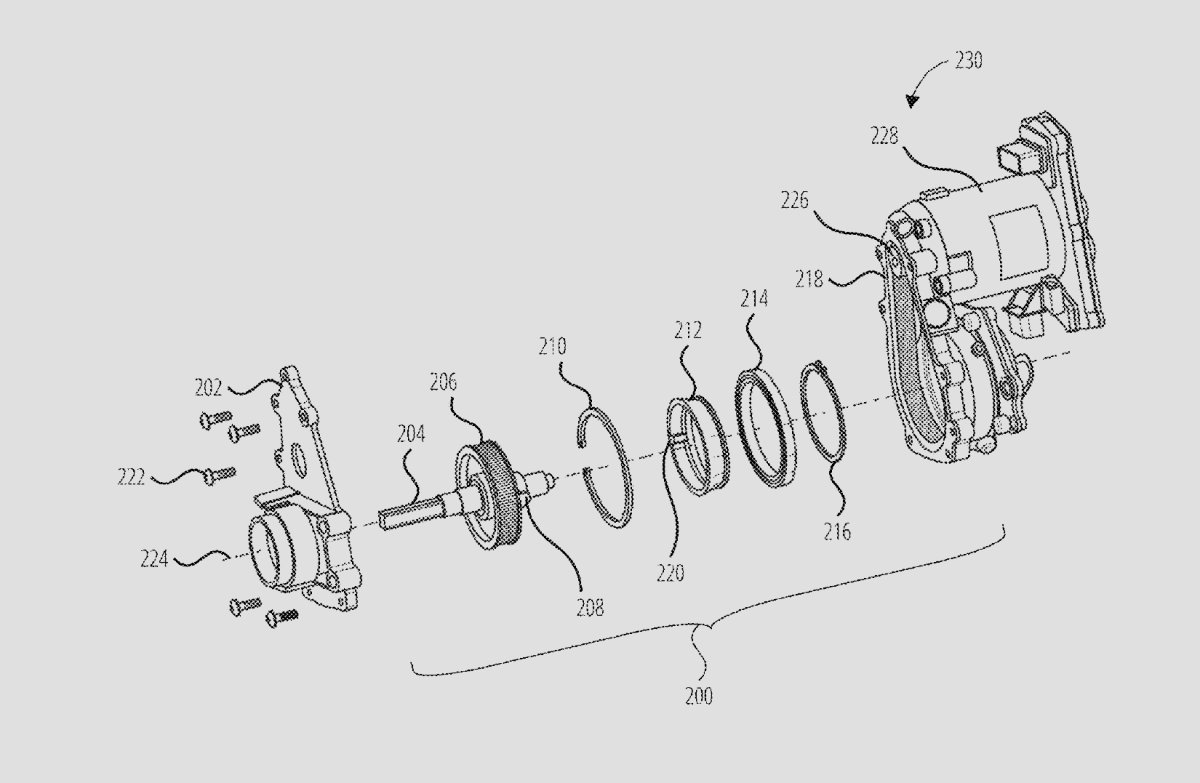

Tesla recently published a new patent that could change the way future steer-by-wire vehicles feel and operate. The patent is titled “MULTI-TURN STEERING FEEDBACK ACTUATOR” and was officially published on March 19, 2026.

Credited to inventors Stephen Alexander Harasym and Joel Timothy Van Rooyen, this document outlines a new steering column assembly designed specifically for steer-by-wire systems. It allows for an extended range of steering wheel rotation while maintaining a robust mechanical end stop.

The Problems with Current Steer-by-Wire

In modern vehicles equipped with steer-by-wire technology, steering feedback actuators provide resistive force to emulate the physical sensation of a traditional mechanical steering system. These systems require travel limiters to prevent the driver from rotating the wheel excessively and damaging other internal components.

Conventional steer-by-wire actuators use mechanical pins or stoppers inside the housing to limit the steering wheel’s range of motion. These setups provide a rigid hard stop at predetermined angles, commonly around plus or minus 170 degrees from the center position. In principle the limit would be 180 degrees in each direction, but the physical width of the internal components means they make contact slightly earlier.

Tesla’s Two-Stage Solution

To bypass this rotational limit, Tesla designed a clever but relatively simple mechanical structure. The new steering column assembly features an input shaft with a pin, a stationary housing, and a rotating member known as a stop ring. This stop ring features two distinct stops. The first stop is designed to engage the housing, and the second stop is designed to engage the pin on the input shaft.

Because the stop ring can rotate independently before engaging the final housing stop, the overall steering range is massively increased. This unique two-stage configuration enables steering ranges of approximately plus or minus 340 degrees.

This provides significantly more rotation than conventional systems while keeping the entire package compact and efficient. Additionally, the design is highly adjustable. Engineers can tune the configuration of the stop ring to limit the range of motion to any specific value between 170 degrees and 340 degrees, and the patent even notes the potential for an arc of at least 540 degrees.
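
One way to picture the two stages is as two travel limits stacked in series. The sketch below is a simplified model, not the mechanism as claimed in the patent: it assumes each stage allows 170 degrees of travel either side of its neutral position and that the wheel is turned away from center in a single direction. The function names and parameters are hypothetical.

```python
def clamp(value, limit):
    """Restrict value to the range [-limit, +limit]."""
    return max(-limit, min(limit, value))


def steer(requested_deg, stage_limit=170.0):
    """Return (shaft angle reached, stop-ring angle) for a requested wheel angle.

    Stage 1: the pin on the input shaft moves freely until it meets the
             stop ring, up to stage_limit degrees either way.
    Stage 2: past that point the pin drags the stop ring along until the
             ring meets the housing, adding up to stage_limit more degrees.
    """
    pin_travel = clamp(requested_deg, stage_limit)
    ring_angle = clamp(requested_deg - pin_travel, stage_limit)
    return pin_travel + ring_angle, ring_angle


for request in (100, 250, 400, -400):
    shaft, ring = steer(request)
    print(f"requested {request:>5} deg -> shaft {shaft:>6} deg, ring {ring:>6} deg")
```

In this model a request beyond 340 degrees is held at 340, matching the range quoted above, while shortening the travel of either stage narrows the total range accordingly.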

Premium Feel, Variable Feedback

Tesla is also focusing heavily on the haptic feel of the steering wheel. Hitting a hard mechanical stop can feel jarring to the driver, so the steering column assembly integrates damping elements to soften the blow. Specifically, the patent describes polymer O-rings positioned to protrude from the contact surfaces.

When the driver reaches the limit of the steering range, these O-rings absorb the impact and provide resistance, giving the end stops a softer, more premium feel.

Finally, the new assembly integrates seamlessly with existing feedback systems. It uses a gear or pulley on the input shaft to connect to a belt drive system and a motor. This setup allows the system to generate variable feedback torque, successfully simulating the physical resistance and road feel of a traditional steering rack.

Built for the Future

While the design described in the patent uses a minimal number of parts, it is still capable of withstanding high input torques. It could potentially lower manufacturing costs and improve reliability for future Tesla vehicles. Whether this technology debuts on the upcoming next-generation Roadster or a future iteration of the Cybertruck, Tesla is clearly preparing to take its steer-by-wire capabilities to the next level.