Hyperspectral satellites are rewriting what “seeing from space” means. Instead of the three color channels your eyes use, a new generation of satellites captures hundreds of color bands per pixel, and the implications stretch from farming to military surveillance.

Coming to you from Scott Manley, this technically rich video breaks down how hyperspectral imaging works, why it’s hard to build, and what organizations like Planet Labs are already doing with it in orbit. Manley opens with some important context: satellites have seen in color for decades, but hyperspectral imaging isn’t about seeing color the way humans do. It’s about capturing hundreds of distinct wavelength bands in a single image, enough to identify specific vegetation types, surface minerals, and military camouflage that looks identical to the human eye but has a completely different spectral signature than actual leaves. Spectral analysis has been used in astronomy for over 200 years, long enough that the element helium was first identified by its spectral signature in the sun’s atmosphere before it was ever found on Earth. The same physics now applies to every pixel in a satellite image.

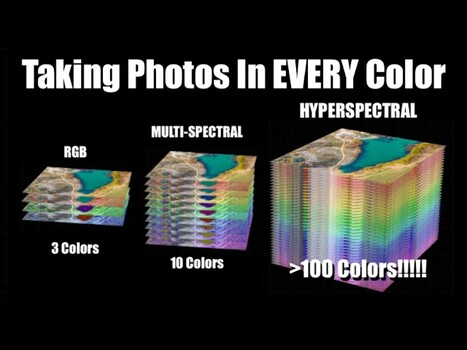

The core engineering problem is that image sensors are two-dimensional, but hyperspectral data is three-dimensional, essentially a cube with hundreds of color layers stacked on top of each other. Manley walks through several approaches to solving this, starting with the familiar Bayer mask found in consumer cameras, which uses a grid of red, green, and blue filters in front of the sensor. Scaling that approach to hundreds of colors creates serious tradeoffs in spatial resolution and manufacturing complexity, which is why most scientific imaging systems skip it entirely. The more practical approach for satellites is called push broom imaging: a thin strip of the scene is passed through a diffraction grating, spreading the light into its component wavelengths along one axis of the sensor while the satellite’s orbital motion scans the strip across the landscape below. Planet Labs’ Tanager satellite uses this method, capturing 424 spectral bands between 400 and 2,500 nanometers at roughly 30 to 35 meters of spatial resolution, with the sensor reading out at around 240 frames per second to keep pace with the satellite moving at about 7.8 km/s.
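The readout rate quoted above follows from simple arithmetic: for the strips to tile the ground without gaps, the sensor needs roughly one frame per ground pixel of forward motion. The sketch below is a rough illustration, not Planet Labs' actual processing. It treats the 7.8 km/s figure as the ground-track speed (a simplifying assumption; the speed of the footprint over the ground is somewhat lower than the orbital speed), and the 640-pixel swath width and both function names are hypothetical.

```python
import numpy as np

def line_rate_hz(ground_speed_m_s: float, gsd_m: float) -> float:
    """Frames per second needed so each frame advances one ground pixel."""
    return ground_speed_m_s / gsd_m

def assemble_cube(frames):
    """Stack push-broom frames, each shaped (cross_track, bands),
    along the flight direction into (along_track, cross_track, bands)."""
    return np.stack(frames, axis=0)

# Figures from the article: about 7.8 km/s and 30 to 35 m pixels.
speed_m_s = 7800.0
rate_30m = line_rate_hz(speed_m_s, 30.0)
rate_35m = line_rate_hz(speed_m_s, 35.0)
print(f"30 m pixels: {rate_30m:.0f} frames/s")
print(f"35 m pixels: {rate_35m:.0f} frames/s")

# Each frame is one strip of ground: one sensor axis is position across
# the track, the other is wavelength. 640 is a made-up swath width;
# 424 is the band count quoted in the article.
frames = [np.zeros((640, 424)) for _ in range(10)]
cube = assemble_cube(frames)
print(cube.shape)
```

Under these assumptions the two pixel sizes bracket the roughly 240 frames per second mentioned above.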

The tradeoff with push broom imaging, and with most of the techniques Manley covers, is that they discard a large fraction of the photons they collect, either by filtering them out or by only imaging one narrow strip at a time. This is a genuine efficiency problem, and it’s one reason snapshot hyperspectral designs are worth paying attention to. These approaches attempt to capture the full spectral cube in a single exposure rather than scanning across a scene. One method uses a matrix of fiber optics at the focal plane, routing light to a spectrometer and then reconstructing the two-dimensional image afterward. Another, called computed tomography imaging spectrometry, uses a specialized grating to project the spectral data at multiple angles simultaneously, then applies the same math used in CAT scans to reconstruct the full color cube. A third approach, coded aperture snapshot spectral imaging (CASSI), places a carefully coded mask in front of the sensor, then uses a prism or grating to spread the light, allowing software to reconstruct color and spatial information from the shadow patterns. Manley is candid that these snapshot methods involve enormous computational loads and come with their own resolution tradeoffs.
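To make the coded aperture idea more concrete, here is a minimal sketch of the measurement side only, assuming a simplified single-disperser layout in which each spectral band is multiplied by the same binary mask and then shifted sideways by one pixel per band before everything sums on the detector. The function name, the toy cube size, and the one-pixel-per-band shift are illustrative assumptions, not details from the video.

```python
import numpy as np

def cassi_measure(cube: np.ndarray, mask: np.ndarray) -> np.ndarray:
    """Simulate one coded-aperture snapshot.

    cube: (height, width, bands) spectral cube of the scene
    mask: (height, width) binary coded aperture
    Returns a 2D detector image of shape (height, width + bands - 1).
    """
    height, width, bands = cube.shape
    detector = np.zeros((height, width + bands - 1))
    for k in range(bands):
        # Band k passes through the mask, then the disperser shifts it
        # k pixels along the horizontal axis before it hits the sensor.
        detector[:, k:k + width] += cube[:, :, k] * mask
    return detector

rng = np.random.default_rng(0)
cube = rng.random((8, 8, 4))          # toy scene: 8x8 pixels, 4 bands
mask = rng.integers(0, 2, size=(8, 8)).astype(float)
snapshot = cassi_measure(cube, mask)
print(cube.size, "unknowns ->", snapshot.size, "measurements")
```

Even in this toy case the detector records fewer numbers than the cube contains (88 versus 256), which is why the reconstruction step, not shown here, has to lean on the known mask pattern and assumptions about the scene, and why the computational load is so high.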

What makes this video worth your time is that Manley explains the science in a way that actually sticks and connects it to things you already know: how a CD produces a rainbow, how slow-motion video on an iPhone relates to satellite sensor readout rates, and how the color fringing in NASA’s famous DSCOVR footage of the Moon crossing Earth is a direct result of filter-wheel timing. These aren’t decorative analogies. They’re the clearest path to understanding why building a hyperspectral satellite is genuinely difficult, and why the handful of companies doing it represent a real shift in what remote sensing can do. Check out the video above for the full breakdown from Manley.