Once the AI darling of programmers everywhere, Anthropic’s Claude has been stumbling mightily, both in terms of cost and perceived quality. The service was down briefly on Monday with “a major outage,” trouble that only adds to a growing customer discontent that even a bot can see.

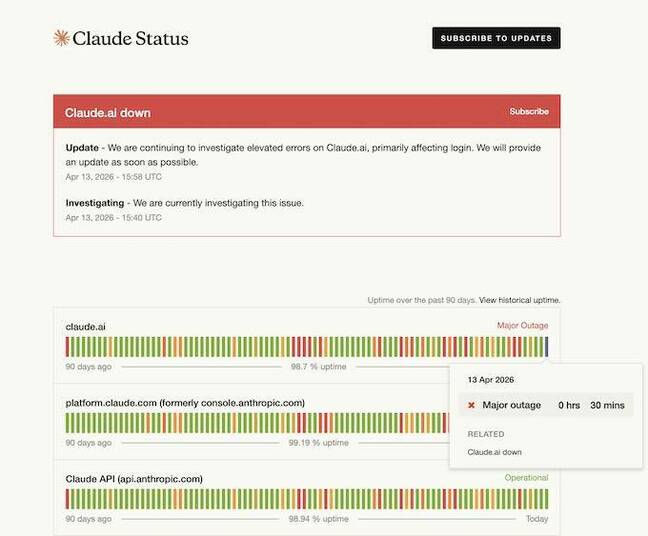

The outage, which involved elevated error rates, affected Claude.ai and Claude Code for 48 minutes, from 15:31 to 16:19 UTC.

Screenshot of 4-13-26 outage

That’s not all. In the past few months, Claude’s answers have been getting less satisfactory, according to social media posts as well as issues filed on GitHub. This has occurred as Anthropic has had to take steps to reduce usage during peak hours to balance capacity and demand.

To get a more objective measurement, we pointed Claude itself at the Claude Code GitHub repo, filtered for open issues that mention quality, and gave it the following prompt: “Analyze and graph complaints about Claude Code quality in this repo since January 2026. Use open issues that mention quality concerns. Have these concerns increased lately?”
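For readers who want to check that kind of tally without a chatbot, the counting step can be sketched in a few lines of Python. This is a minimal illustration, not the method Claude used. The function name `monthly_quality_counts`, the simple keyword match, and the sample records are all hypothetical, and the records assume only the `state`, `title`, `body`, and `created_at` fields that GitHub's REST API returns for issues.

```python
from collections import Counter
from datetime import datetime, timezone

# Assumed cutoff, matching the "since January 2026" wording in the prompt.
SINCE = datetime(2026, 1, 1, tzinfo=timezone.utc)


def monthly_quality_counts(issues, keyword="quality", since=SINCE):
    """Count open issues that mention `keyword`, grouped by creation month."""
    counts = Counter()
    for issue in issues:
        # Only open issues are considered, as in the prompt.
        if issue.get("state") != "open":
            continue
        # Search title and body; either may be missing or None.
        text = f"{issue.get('title') or ''} {issue.get('body') or ''}".lower()
        if keyword.lower() not in text:
            continue
        # GitHub-style timestamps look like "2026-03-02T10:00:00Z".
        created = datetime.strptime(
            issue["created_at"], "%Y-%m-%dT%H:%M:%SZ"
        ).replace(tzinfo=timezone.utc)
        if created >= since:
            counts[created.strftime("%Y-%m")] += 1
    return dict(sorted(counts.items()))


# Invented records for illustration only; not real issues from any repo.
sample_issues = [
    {"state": "open", "title": "Quality drop in long sessions", "body": "",
     "created_at": "2026-03-02T10:00:00Z"},
    {"state": "open", "title": "Crash on startup", "body": None,
     "created_at": "2026-03-05T10:00:00Z"},
    {"state": "closed", "title": "Output quality regressed", "body": "",
     "created_at": "2026-04-01T09:30:00Z"},
    {"state": "open", "title": "Slow responses", "body": "Answer quality is also worse",
     "created_at": "2026-04-03T08:15:00Z"},
    {"state": "open", "title": "Old quality report", "body": "",
     "created_at": "2025-12-30T23:59:59Z"},
]

print(monthly_quality_counts(sample_issues))  # {'2026-03': 1, '2026-04': 1}
```

A bare keyword match counts any issue that uses the word, whether or not the complaint is valid, so a tally like this measures report volume rather than actual quality.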

Anthropic’s AI model concluded, “Yes, quality complaints have escalated sharply — and the data tells a pretty clear story.”

Claude-generated assessment of Claude Code quality issues

We asked Claude to rerun its self-analysis on Monday and the results were similar, with the model emitting “The velocity is notable: April is already at 20+ quality issues in 13 days, putting it on pace to exceed March’s 18 — which was itself a 3.5× jump over the January–February baseline.”
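The arithmetic behind that statement can be checked with a rough sketch. It rests on assumptions the quote does not spell out: that "20+" is treated as exactly 20 (a lower bound), that the pace is extrapolated linearly across a 30-day April, and that the "3.5× jump" compares March's 18 issues with an average monthly figure for January and February. The function name is hypothetical.

```python
def projected_month_total(count_so_far, days_elapsed, days_in_month):
    """Linearly extrapolate a partial-month count to a full month."""
    return count_so_far / days_elapsed * days_in_month


# Figures quoted by Claude: at least 20 issues in the first 13 days of April.
april_projection = projected_month_total(20, 13, 30)

# If March's 18 issues were a 3.5x jump, the implied earlier monthly
# baseline is 18 / 3.5 (assumption: the multiple is relative to a monthly average).
implied_baseline = 18 / 3.5

print(round(april_projection, 1))  # 46.2
print(round(implied_baseline, 1))  # 5.1
```

On those assumptions, April would end with roughly 46 quality issues, more than double March's total, against an implied baseline of about five a month.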

Claude itself is not a reliable narrator, and just because someone (or some bot) has reported a concern to the Claude Code repo does not mean that the report is accurate or valid. It appears many issues are now AI-generated – a widely reported concern among open source developers – which may be contributing to report volume.

Anthropic’s GitHub Actions script also appears to automatically close issues after a period of inactivity, which may serve to mask unresolved problems.

The Register has covered some of the issues Claude flagged in its analysis, like the caching issue and claims by AMD’s AI director Stella Laurenzo that Claude’s responses have been getting worse. Others have yet to be substantiated, such as one claim that “Claude autonomously deleted 35,254 production customer message records and 35,874 billing transactions belonging to a real paying customer (JIXEN).”

The individual or bot account behind this post has made no other posts. The Register has attempted to contact Jixen Enterprises Private Limited, which appears to be a private company registered in India, to check on that claim but we’ve not heard back. Developers have reported data loss from using Claude Code and other models. But if this occurred, no one has ruled out user error.

In any event, Claude is capable of citing actual GitHub issue posts to justify its “reasoning,” so the general trend – of a growing number of reports about quality – is evident.

The model points to issues like “Claude Code’s prediction-first behavior is dangerous on capital-at-risk projects” #46212, “Claude Code is unusable for complex engineering tasks with the Feb updates” #42796 (addressed by Claude Code head Boris Cherny), “Artificial degradation, Acquisition Bias, and unacceptable compute throttling for paid users” #46949, and “Opus 4.6: Severe quality degradation on iterative coding tasks” #46099 to justify its conclusion.

Data from Margin Lab, however, suggests that Claude Opus 4.6 has at least maintained its score on the SWE-Bench-Pro test. Assessments conducted since February show some variation but no substantive change.

Anthropic did not immediately respond to a request to comment on Claude quality concerns.®