Avid has announced a multi-year strategic partnership with Google Cloud that embeds Gemini models and Vertex AI directly into Media Composer and the Avid Content Core platform, which is now commercially available. The integration promises natural-language media search, automated metadata logging, and agentic AI workflows capable of autonomously handling tasks like style matching and B-roll generation, with a first public demonstration set for NAB 2026.

The partnership targets two of Avid’s core products: Media Composer, still the dominant NLE in high-end film and television post-production (Avid claims 87% of this year’s Oscar-winning pictures were crafted on its platform), and Avid Content Core, a cloud-native SaaS platform that acts as a unified data layer for global media assets. Avid also announced a parallel collaboration with AWS at NAB, running Content Core and Media Composer on Amazon’s cloud infrastructure, so the company is clearly pursuing a multi-cloud strategy rather than locking into a single provider.

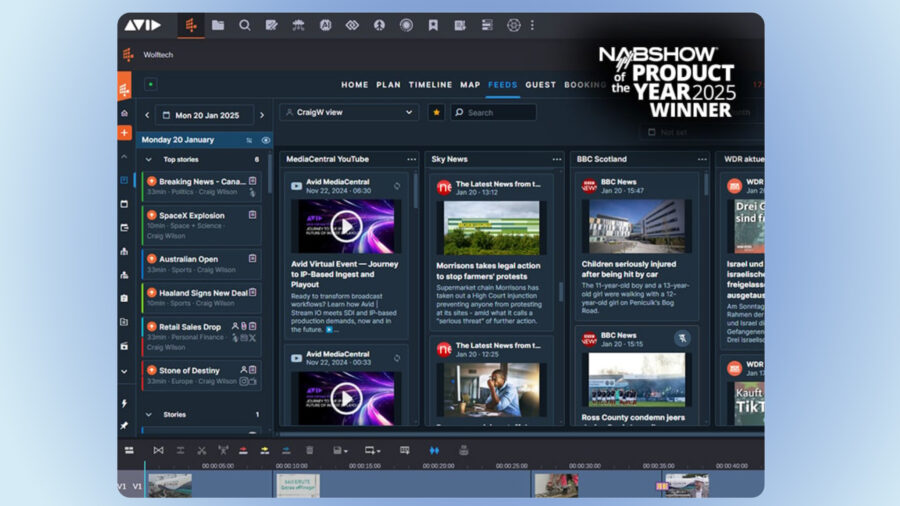

AI-driven post-production workflows. Image credit: Avid

What the Gemini integration actually does

Inside Media Composer, the new multimodal extension powered by Google’s Gemini models is meant to help editors clear several post-production bottlenecks. According to the companies, the integration covers intelligent metadata enhancement, automated logging, and B-roll generation, all designed to reduce the manual burden that consumes so much of an editor’s day. If you have ever spent hours logging dailies or manually tagging footage with descriptive metadata, this is exactly the kind of task Avid and Google Cloud are targeting.

The more ambitious piece is the agentic AI component. Rather than simple automation that follows a fixed script, agentic workflows are designed to act as digital assistants that can autonomously manage complex tasks. Avid and Google Cloud describe capabilities like matching visual styles across clips and identifying emotional cues in raw footage. How well these features actually perform in a professional editing environment remains to be seen, and we will be looking closely at the demos at NAB to evaluate whether the reality matches the promise.

Manage content with built-in AI. Image credit: Avid

From passive storage to active library

On the Avid Content Core side, the integration leverages Google Cloud’s BigQuery, Vision Warehouse, and Vertex AI Search to transform what has traditionally been passive storage into something far more interactive. The pitch is that searching your media library becomes a natural conversation: instead of hunting through bins and folders using filenames and timecodes, users can describe what they need based on visual actions, dialogue content, or emotional tone.

Avid’s CEO Wellford Dillard framed the partnership as a response to customer demand, noting that production teams are asking for intelligent tools that integrate into existing workflows rather than requiring entirely new pipelines. Google Cloud’s Anil Jain, global managing director for strategic industries, went further, suggesting that editors can now collaborate with an intelligent agent to handle tasks like filling timelines and matching styles.

For large-scale productions managing terabytes of footage across distributed teams, the potential time savings are significant. Avid claims the system can turn weeks of manual archive discovery into seconds of automated insight. That is an extraordinary claim, and one that will need real-world validation beyond a trade show floor demo.

Agentic AI in editing workflow. Image credit: Avid

Context matters: Avid’s evolving AI strategy

This Google Cloud partnership does not exist in a vacuum. At IBC 2025, Avid showed AI-driven updates across Media Composer, Pro Tools, and MediaCentral, including built-in speech-to-text, translation capabilities, and automation features through the SoundFlow integration and Pro Tools Scripting SDK. The company has been steadily layering AI functionality into its existing tools rather than building standalone AI products.

It is also worth remembering Avid’s broader corporate trajectory. The company was acquired by private equity firm STG for $1.4 billion in 2023, and since then, the push toward cloud-native infrastructure and AI integration has clearly accelerated. Whether that acceleration is driven by genuine product vision or the pressure to demonstrate growth under private equity ownership is a question worth keeping in mind, though the two are not mutually exclusive.

AI tools in video editing. Image credit: Avid

Where does this leave editors?

The language surrounding this announcement, particularly the agentic AI framing, will understandably raise questions among working editors about the long-term direction of their craft. AI that logs footage, tags metadata, and surfaces relevant clips is broadly welcomed by professionals, who would rather spend their time making creative decisions. AI that generates B-roll and autonomously fills timelines enters more contested territory, where the line between assistance and replacement becomes blurry.

To be fair, Avid and Google Cloud are positioning these capabilities as tools that free editors to focus on storytelling and creative intent. That framing tracks with what we have seen from other AI integrations in the NLE space, including Eddie AI’s approach to automated rough cuts and Adobe’s expanding Firefly integration in Premiere Pro. The question is not whether AI will become part of the professional editing toolkit; it already is. The question is how much creative agency it absorbs in the process.

Avid and Google Cloud will demonstrate these new workflows at the NAB Show in Las Vegas (April 18 to 22, 2026). Visitors can see the technology in action at the Google Cloud booth (West Hall, W2731) and the Avid booth (North Hall, N2226).

For more information, visit Avid’s website.

What do you think of agentic AI inside Media Composer: genuine time-saver or a step too far? Don’t hesitate to let us know in the comments below!