SINGAPORE: Proposed “nutrition labels” for artificial intelligence services could help users better understand how such systems work – but only if they are clearly designed, regularly updated and backed by the industry, experts told CNA.

The idea, which Digital Development and Information Minister Josephine Teo raised last month, is being “actively explored” by the government as part of broader efforts to strengthen trust and safety in the digital space.

While such labels have the potential to improve transparency, Professor Simon Chesterman, who leads AI governance and policy at the National University of Singapore’s AI Institute, said poorly designed ones risk becoming another “box-ticking exercise” that burdens responsible developers while being ignored by everyone else.

To be effective, Prof Chesterman said, the labels should explain what a system is designed to do, what data it relies on, what its main limitations are, how often it is updated and who is accountable when something goes wrong.

“For consumer-facing tools, the most important point is not to create the illusion of precision, but to give people a better sense of when they should trust the output and when they should be cautious,” he added.

Echoing this, Professor Bo An, who heads the AI division at Nanyang Technological University’s (NTU) College of Computing and Data Science, cautioned that labels must strike a balance between clarity and detail.

“If they are too vague, too long or treated as a box-ticking exercise, most users will ignore them,” said Prof An.

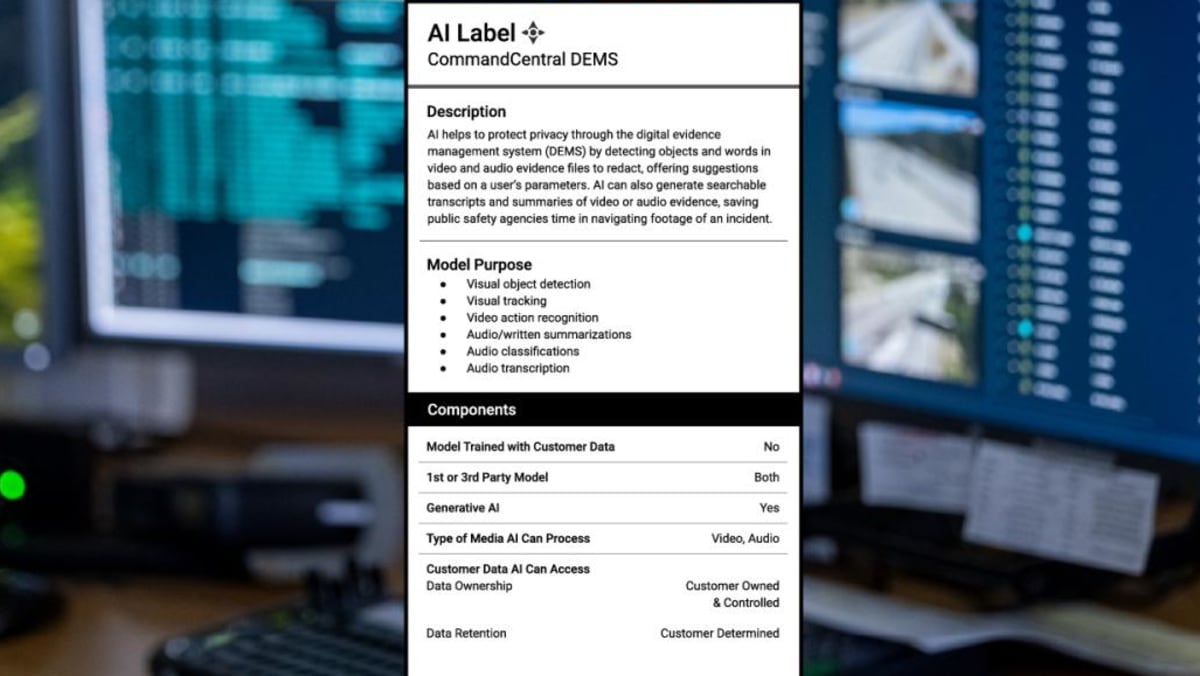

Motorola Solutions, which introduced “AI nutrition labels” across its safety and security technologies in July last year, said it addressed the potential issue of information overload by using a layered approach.

The labels offer a “glanceable” summary for general understanding and link to more detailed information, said the technology company’s senior vice-president Jehan Wickramasuriya, who leads Motorola Solutions’ AI research and development teams globally.