Introduction

Background

Cognitive decline and dementia represent a major public health concern, with significant global and regional prevalence. In 2021, approximately 57 million people were affected by dementia worldwide, with more than 60% residing in low- and middle-income countries []. As the global population ages, dementia is expected to impact 78 million individuals by 2030 and 139 million by 2050 []. This increasing prevalence is attributed to both the rising number of older adults and the fact that dementia disproportionately affects the older population. Furthermore, the severity of the problem is anticipated to rise more sharply in regions with significant demographic changes, particularly low- and middle-income countries, where health care resources are often limited [].

Dementia severely affects the quality of life, as it is a leading cause of disability and dependency in older individuals []. In addition to the physical and cognitive impairments experienced by individuals, dementia imposes a heavy emotional and economic burden on families and caregivers. Caregivers, often family members, are responsible for a substantial amount of care, with an average of 5 hours of daily care and supervision per individual affected. The global cost of dementia is estimated to exceed US $1.3 trillion, with informal caregiving accounting for approximately half of this expenditure. Health care and social systems face immense strain in responding to the needs of individuals with dementia, highlighting the urgent need for preventive measures and early intervention [].

There are several modifiable risk factors for cognitive decline and dementia, including physical inactivity, unhealthy diets, smoking, and cardiovascular conditions such as hypertension, diabetes, and obesity []. Early identification of cognitive decline is crucial for enabling timely interventions. Traditional screening methods, such as the Mini-Mental State Examination (MMSE) and Montreal Cognitive Assessment (MoCA) [], have limitations due to their episodic nature and reliance on periodic visits to health care providers. These methods are often influenced by situational factors [,] and may not detect early signs of decline or track changes in cognitive function over time [,]. In addition, performance on these instruments can be affected by educational level, language, and cultural background, and they provide only a cross-sectional snapshot of cognitive status []. Therefore, there is a growing need for continuous and more sensitive methods of monitoring cognitive health []. At the same time, the use of artificial intelligence (AI)–driven wearable technologies for continuous cognitive monitoring raises ethical and data privacy considerations, as such systems may involve the collection of sensitive behavioral and health-related data, underscoring the importance of appropriate data governance and responsible implementation [].

Wearable devices present significant potential for the continuous and passive monitoring of key physiological variables, including activity levels, sleep patterns, heart rate, and gait. These devices can detect subtle, longitudinal changes in behavior that may occur before clinical symptoms emerge [], allowing for the early identification of cognitive decline. AI enhances this process by analyzing data from wearables to identify patterns that indicate potential risks. AI algorithms can process large volumes of data, detecting even small shifts in physiological and behavioral patterns, which can inform personalized interventions. By leveraging AI for predictive analytics, it is possible to improve early detection capabilities, facilitating timely interventions and supporting more effective management of cognitive health []. This combination of wearables and AI offers a promising approach [] to enhancing dementia prevention and care.

Objective

Previous reviews have mainly examined associations between wearable-derived measures and dementia outcomes [], often focusing on physiological or behavioral differences in individuals already diagnosed with the disease. Other reviews have emphasized specific domains, such as gait and mobility assessment using wearables [] or the relationship between physical activity and cognitive decline []. In contrast, this review focuses on how wearable-derived data are analyzed, with particular attention to advanced analytic approaches, including machine learning and deep learning, used to support early detection and prevention across the cognitive aging spectrum, from subjective cognitive decline to clinical dementia. By synthesizing evidence within a digital phenotyping framework, this review highlights how longitudinal, multimodal wearable data combined with contemporary analytic methods can move beyond descriptive associations toward individualized risk characterization and prevention-oriented applications.

Thus, the main objective of this systematic review is to synthesize and critically evaluate the current evidence base and methodological maturity of wearable devices for the early detection and prevention of cognitive impairment and dementia. We structure our synthesis around 4 specific objectives:

1. To evaluate the usage trends and application contexts of wearable technologies, specifically comparing the deployment of research-grade actigraphy vs consumer-grade devices.
2. To synthesize the strength of statistical associations between wearable-derived digital biomarkers (eg, sleep and circadian rhythms) and standard clinical cognitive assessments.
3. To critically assess the performance and robustness of analytic approaches, comparing the outcomes of conventional statistical modeling, machine learning, and deep learning techniques.
4. To assess how early detection is addressed in wearable-based studies by differentiating between studies that directly implement early detection and those that frame their findings as potentially relevant to early detection.

By addressing these specific objectives, the review aims to clarify the current state of knowledge and identify priorities for advancing wearable-based approaches toward clinical and public health application.

Methods

Overview

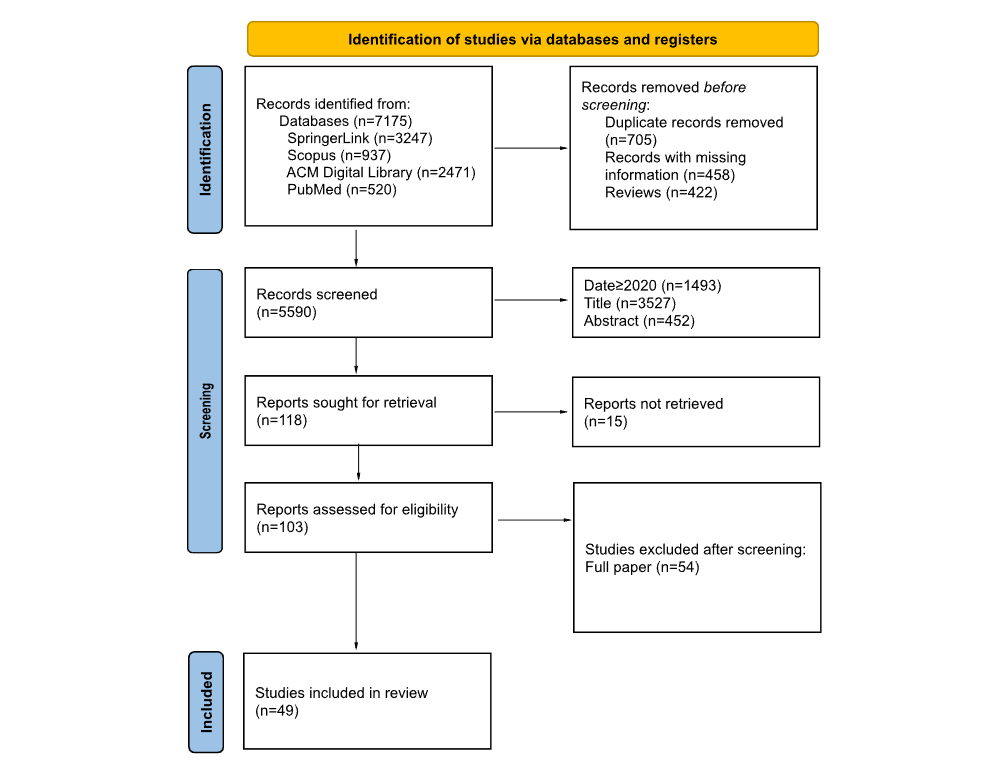

The reporting of this systematic review was guided by the standards of the PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) 2020 statement [] (). The literature search strategy was developed and reported in accordance with the PRISMA-S (Preferred Reporting Items for Systematic Reviews and Meta-Analyses literature search extension) [] to enhance transparency and reproducibility of the search process (). The aim was to evaluate the association between wearable device–based sleep and activity measures and the onset or progression of cognitive impairment, including mild cognitive impairment (MCI), Alzheimer disease, and cognitive decline. The review process was carried out in multiple stages: identification of relevant articles, screening for eligibility, data extraction, and assessment of risk of bias. Studies that met the predefined inclusion criteria were included, and their findings were synthesized to provide insights into the role of wearable devices in monitoring cognitive health. No review protocol was prepared or registered before conducting this study.

Information Sources and Search Strategy

A comprehensive search strategy was used across multiple electronic databases, including PubMed, Scopus, ACM Digital Library, and SpringerLink, to identify relevant studies published from 2020 onward. The search was conducted using a combination of MeSH (Medical Subject Headings) terms and keywords related to cognitive impairment, sleep and activity measures, wearable devices, and their associations with cognitive outcomes. Databases were searched individually using their native interfaces. Duplicate records were removed, and studies published before 2020 were discarded to align the scope of this systematic review with contemporary research questions, analytic frameworks, and the use of wearable devices representative of current practice in digital phenotyping and early detection and prevention of cognitive decline. The search strategy was designed to capture a broad range of studies, including randomized controlled trials, cohort studies, and observational studies, to provide a comprehensive overview of the existing evidence.

The search strategy integrated key concepts relevant to studies on the early detection of cognitive impairment and dementia using wearable devices that monitor activity and sleep. Table 1 lists the search terms, organized into categories capturing cognitive impairment, sleep and activity measurements, wearable devices, and their application in early detection. Within each group, similar terms were combined using the OR operator, and the 4 groups were combined using the AND operator. Conceptually, the search followed the structure: (HC1 OR HC2 OR …) AND (Data1 OR Data2 OR …) AND (Wearable1 OR Wearable2 OR …) AND (EarlyDetection1 OR EarlyDetection2 OR …). Full search queries are included in .
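The Boolean structure described above can be sketched in a few lines of Python. This is a minimal illustration only: the term lists are a small subset of Table 1, the function name `build_query` is hypothetical, and the output is a generic query string rather than the exact syntax submitted to any of the databases.

```python
# Illustrative subset of the Table 1 term groups (not the full lists).
TERM_GROUPS = {
    "health_condition": ["cognitive impairment", "mild cognitive impairment", "dementia"],
    "data": ["sleep", "physical activity", "heart rate"],
    "wearable": ["wearable", "smartwatch", "actigraphy"],
    "early_detection": ["early detection", "screening", "prevention"],
}


def build_query(groups):
    """OR the terms inside each group, then AND the groups together."""
    clauses = []
    for terms in groups.values():
        quoted = [f'"{term}"' for term in terms]
        clauses.append("(" + " OR ".join(quoted) + ")")
    return " AND ".join(clauses)


query = build_query(TERM_GROUPS)
print(query)
```

Each database has its own field tags and quoting rules, so a string built this way would still need to be adapted to the native interface of each source.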

Alzheimer disease is specifically included under dementia, as it accounts for 60%-70% of all dementia cases []. Data capture must include information on sleep, activity, or physiological signals. Wearable devices may be commercial (eg, smartwatches) or noncommercial (ad hoc or research-grade devices) and are typically worn on the body for extended periods. This review focuses on studies advancing early detection and prevention, excluding research that examines features of the condition without the potential to identify cognitive decline before clinical diagnosis or progression to a more advanced stage.

For an article to be considered in this study, at least 1 search term from each group had to be present in either the title or the abstract. This ensures that the manuscript has a clear focus on continuous data collection on sleep, activity, or physiological signals and links these measurements to cognitive impairment outcomes. The search was limited to studies published from 2020 onward and in English to ensure accessibility to full-text articles.
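The rule that every term group must be represented in the title or abstract can be expressed as a simple check. The sketch below is an assumption about how such a check might look, not the script used in this review: the function name `matches_all_groups` is hypothetical, the groups are abbreviated from Table 1, and plain substring matching is used, which is cruder than a word-boundary or stemmed match.

```python
# Abbreviated term groups taken from Table 1 (illustrative subset).
TERM_GROUPS = {
    "health_condition": ["cognitive impairment", "dementia", "cognitive decline"],
    "data": ["sleep", "physical activity", "heart rate"],
    "wearable": ["wearable", "smartwatch", "actigraph"],
    "early_detection": ["early detection", "screening", "prevention"],
}


def matches_all_groups(title, abstract, groups):
    """Return True when at least 1 term from every group appears in title or abstract."""
    text = f"{title} {abstract}".lower()
    return all(
        any(term.lower() in text for term in terms)
        for terms in groups.values()
    )


# Hypothetical records used only to exercise the check.
keep = matches_all_groups(
    "Smartwatch sleep measures for early detection of dementia",
    "We recorded sleep for 14 days.",
    TERM_GROUPS,
)
drop = matches_all_groups(
    "Sleep questionnaires and dementia screening",
    "Self-reported sleep only.",
    TERM_GROUPS,
)
print(keep, drop)
```

In the second record no wearable term is present, so the record fails the check even though the other 3 groups are covered.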

Apart from screening the reference lists of included studies to identify additional relevant records, no additional search methods were used, and no study registries were searched. No targeted website searching or manual browsing of conference proceedings was conducted. Study authors and experts were not contacted for additional data. No published search filters were used. Search strategies were developed specifically for this review and were not adapted from prior reviews. No search updates were performed after the initial search, and the search strategy was not peer reviewed.

Table 1. Groups of search terms and representative keywords used to identify studies in the systematic review, organized by health condition, data type, wearable technology, and early detection.

| Group | Search terms |
| --- | --- |
| Health condition | Cognitive impairment, mild cognitive impairment, dementia, cognitive decline, Alzheimer, neurocognitive disorder, and memory impairment |
| Data | Sleep, sleep duration, sleep quality, circadian rhythm, rest-activity rhythm, physical activity, actigraphy, accelerometry, heart rate, heart rate variability, HRV, respiratory rate, body temperature, respiration, skin temperature, step count, steps, and distance |
| Wearable | Wearable, wearable device, wearable devices, wearable sensor, wearable sensors, body-worn sensor, body-worn sensors, fitness tracker, smartwatch, smart band, wristband, wristbands, accelerometers, consumer-grade wearable, actigraph, actigraphy, ambulatory monitor, and digital biomarker |
| Early detection | Risk factor, onset, association, predictor, correlation, early detection, screening, and prevention |

Eligibility Criteria

The inclusion and exclusion criteria that guided the selection of studies for this systematic review are presented in Textbox 1. The criteria are designed to ensure that the review captures only the most relevant, recent, and methodologically sound research.

For inclusion, studies had to be published between 2020 and 2025 to reflect the most recent developments in the field. Only publications identified by the predefined search queries were considered. Eligible studies had to present either new statistical outcomes or AI-based results that provide original contributions. Because the review focuses on prevention and early detection, manuscripts had to directly address these topics. Furthermore, included studies were required to involve human participants with a mean age of 50 years or older, ensuring relevance to an aging population. Another important condition was the continuous collection of data from wearable devices for at least 24 hours, which allows for robust and objective measurements. Only peer-reviewed journal articles were considered, discarding gray literature and preprints.

Exclusion criteria further refine the selection. Publications not written in English or not available in full text, for example, when behind an inaccessible paywall, were excluded. Review articles, study protocols, and books were not considered to maintain a focus on original research. Retracted publications were excluded. In addition, studies were not eligible if they did not apply validated measures of cognitive impairment or dementia, focused on pharmacological interventions, relied exclusively on self-reported data or smartphone-based sensing, or included only healthy participants without relevant clinical characteristics. No minimum sample-size threshold was applied during study selection. As a result, included studies span a wide range of sample sizes, including small-sample investigations (eg, n<30).

Textbox 1. Inclusion and exclusion criteria applied in the systematic review.

Inclusion criteria:

- Published between 2020 and 2025
- Identified by the search queries
- Articles presenting new statistical outcomes or artificial intelligence results
- Manuscripts closely related to prevention and early detection
- Human participants must be involved in the study with average age of 50 years or older
- Continuous data captured from wearable devices for 24 hours or more

Exclusion criteria:

- Publication not in English
- Publication behind a paywall that cannot be retrieved
- Review papers, protocols, and books
- Retracted publications
- Validated measures of cognitive impairment and dementia not included
- Focus on drug or pharmacological interventions
- Only used self-reported data or smartphone-based sensing
- Only included healthy participants

Risk of Bias Assessment

The risk of bias in included studies was assessed using the Appraisal Tool for Cross-Sectional Studies, the Newcastle-Ottawa Scale for cohort studies, the Cochrane Risk of Bias tool for randomized controlled trials, and the Quality Assessment of Diagnostic Accuracy Studies-2 for diagnostic studies []. These instruments evaluate domains such as selection, performance, detection, attrition, and reporting bias, as well as other potential sources of bias. Each study was independently evaluated by 2 coauthors (AC and MA), with disagreements resolved through discussion or consultation with a third coauthor (CM). The overall risk of bias was classified as low, moderate, or high, and these assessments were considered when interpreting the results of the review.

Study Selection and Data Collection Process

Search results from each database were exported as BibTeX files and managed in Zotero (Corporation for Digital Scholarship), then converted to comma-separated values for automated preprocessing using a custom Python script. This process harmonized metadata across databases, removed duplicates, applied publication year filters, and verified the presence of predefined search terms. Automated preprocessing was conducted by the first author and independently verified by the second author. The following data items were extracted from each eligible study: bibliographic details (author and year), study design, participant characteristics (sample size, age, and diagnosis), wearable device specifications (type, brand, and sensor modality), monitoring duration, cognitive assessment tools, analytic methods (statistical vs AI), and primary outcomes (statistical associations or predictive performance metrics). Study selection followed a 3-stage screening process, with independent title, abstract, and full-text reviews performed by the first and second authors. Discrepancies were resolved through consensus or adjudication by a third author. Eligible studies were synthesized using a structured narrative approach aligned with predefined review objectives, grouping studies by device type, cognitive outcomes, analytic methods, and prevention-oriented contributions.
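The preprocessing steps described above (duplicate removal and publication year filtering) could be implemented along the following lines. This is a sketch under stated assumptions, not the custom script used in this review: the field names `title`, `year`, and `doi`, the function names, and the example records (including their DOIs) are all hypothetical, and duplicates are detected only by an exact DOI match or an identical normalized title.

```python
import re


def normalize_title(title):
    """Lowercase a title and collapse punctuation and whitespace into single spaces."""
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()


def preprocess(records, min_year=2020):
    """Drop records published before min_year, then drop duplicates by DOI or title."""
    seen = set()
    kept = []
    for rec in records:
        if int(rec["year"]) < min_year:
            continue
        keys = {"title:" + normalize_title(rec["title"])}
        doi = (rec.get("doi") or "").strip().lower()
        if doi:
            keys.add("doi:" + doi)
        if keys & seen:
            continue  # same DOI or same normalized title already kept
        seen |= keys
        kept.append(rec)
    return kept


# Hypothetical records standing in for rows of the exported CSV file.
records = [
    {"title": "Sleep and Dementia: A Cohort Study", "year": "2021", "doi": "10.1000/abc"},
    {"title": "Sleep and dementia - a cohort study", "year": "2021", "doi": ""},
    {"title": "Actigraphy in MCI", "year": "2018", "doi": "10.1000/old"},
    {"title": "Wearables for Screening", "year": "2023", "doi": "10.1000/xyz"},
]
kept = preprocess(records)
print([rec["title"] for rec in kept])
```

Matching on both keys means that a record exported without a DOI from one database can still be recognized as a duplicate of the same article exported with a DOI from another.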

Analytic approaches were classified according to the overall modeling paradigm rather than individual algorithms. Studies were categorized as AI-based when wearable-derived data were analyzed within predictive modeling frameworks emphasizing automated pattern learning, such as machine learning or deep learning methods, including regression-based models implemented within machine learning pipelines (eg, automated feature selection or cross-validation). In contrast, studies relying on predefined statistical models for inferential or association analyses were classified as statistical model–based approaches. In this review, “direct” evidence was defined for studies demonstrating individual-level early detection capability, including both cross-sectional screening or discriminative analyses and longitudinal or interventional designs enabling risk estimation or prevention. Cross-sectional studies were therefore considered direct when they demonstrated the ability to distinguish preclinical or prodromal disease states at the time of assessment.

Synthesis Methods

Quantitative synthesis was planned only for outcomes estimating a common construct using comparable analytical objectives and metrics. When outcomes could not be mapped to a shared estimand for early detection, quantitative pooling was not conducted. In such cases, results were synthesized using a structured narrative approach aligned with the predefined review objectives. This approach grouped studies according to analytic strategy, cognitive assessment, and wearable measurement characteristics, without applying formal qualitative synthesis methodologies.

Results

Study Selection

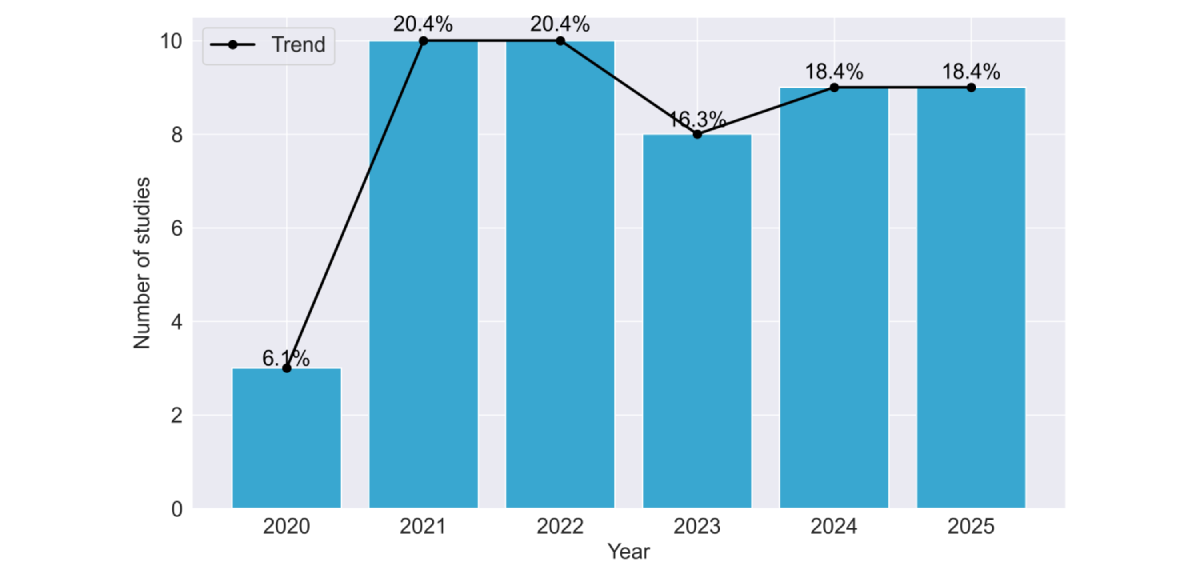

The database search identified 7175 records (SpringerLink, n=3247; Scopus, n=937; ACM Digital Library, n=2471; and PubMed, n=520). After removal of 705 duplicates, 458 records with missing information, and 422 reviews, a total of 5590 records remained for screening. Of these, 5472 were excluded based on publication year (<2020, n=1493), title (n=3527), or abstract (n=452). The remaining 118 reports were sought for full-text retrieval, but 15 could not be obtained. A total of 103 full-text articles were assessed for eligibility, and 54 were excluded for not meeting the inclusion criteria. Finally, 49 studies were included in this systematic review (Figure 1). Figure 2 shows the number of included studies by publication year. At the full-text screening stage, most studies were excluded because they did not include continuous wearable-based data collected over at least 24 hours, did not use wearable-derived data as part of the analysis, or did not report results relevant to the early detection of cognitive impairment or dementia. shows the relevant information extracted from the included studies, and additional details are provided in .
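The record counts reported above can be checked with simple arithmetic. The sketch below uses only the numbers stated in this section; the variable names are illustrative. Note that the 3 screening-stage exclusion counts (publication year, title, and abstract) sum to 5472, which is what takes the 5590 screened records down to the 118 reports sought for retrieval.

```python
# Counts as reported in the study selection paragraph.
identified = {"SpringerLink": 3247, "Scopus": 937, "ACM Digital Library": 2471, "PubMed": 520}
removed_before_screening = {"duplicates": 705, "missing information": 458, "reviews": 422}
excluded_at_screening = {"publication year": 1493, "title": 3527, "abstract": 452}
not_retrieved = 15
excluded_full_text = 54

total_identified = sum(identified.values())
screened = total_identified - sum(removed_before_screening.values())
screening_exclusions = sum(excluded_at_screening.values())
sought = screened - screening_exclusions
assessed = sought - not_retrieved
included = assessed - excluded_full_text

print(total_identified, screened, screening_exclusions, sought, assessed, included)
# prints: 7175 5590 5472 118 103 49
```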

Figure 1. PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) flow diagram of the study selection process.

Figure 2. Temporal distribution of included studies by publication year (2020-2025). The bar chart and overlaid trend line illustrate the progression of research interest in the field. Data show a substantial increase in publication volume beginning in 2021, with research activity remaining consistently elevated through the 2024-2025 period. Data for 2025 are incomplete by 1 month.

Table 2. Summary of included cross-sectional studies investigating wearable-derived measures in relation to dementia outcomes. The table reports study source, cross-sectional study design, participant groups and mean ages, and assessed risk of bias.

| Source | Objective | Groups, n | Age (years), mean (SD) | Risk of bias |
| --- | --- | --- | --- | --- |
| Lentzen et al [] | Early detection | HC<sup>a</sup>=69; DEM<sup>b</sup>=160 | 69.2 (7.7) | Low |
| Jones et al [] | Early detection | NCD<sup>c</sup>=21; MCI<sup>d</sup>=19 | 67.2 (8.4) | Low |
| Satomi et al [] | Early detection | HC=25; MCI=63; DEM=21 | 79.3 (6.3) | Low |
| Sauers et al [] | Risk assessment | HC=196; MCI=47 | 74 | Low |
| Baril et al [] | Risk assessment | HC=159; MCI=44 | 68.25 | Low |
| Seo et al [] | Early detection | HC=1519; MCI=353; DEM=87 | 70.1 (6.1) | Low |
| Huang et al [] | Preventive intervention | HC=225; MCI=133; DEM=36 | 71.3 (7.8); 72.9 (8.0) | Low |
| Palmer et al [] | Progression monitoring | SCI<sup>e</sup>=29; MCI=79 | 68.8 (8.9) | Low |
| Lim [] | Early detection | HC=14; DEM=4 | ≥65 | Low |
| Boa Sorte Silva et al [] | Early detection | MCI=113 | 73.1 (5.7) | Low |
| Kim et al [] | Progression monitoring | HC=41; NCD=54 | Median 60 | Low |
| Basta et al [] | General insight | MCI=40; DEM=49 | 78.3 (6.5) | Low |
| Roh et al [] | Progression monitoring | MCI=70; DEM=30 | Median 73 | Low |
| Liu et al [] | Early detection | HC=31; MCI=68 | 66.5 | Low |
| Espinosa et al [] | Early detection | SCI=44; MCI=99 | 67.4 (7.9) | Medium |
| Manning et al [] | Risk assessment | HC=7; MCI=2; DEM=5 | 65.8 | Medium |
| Ghosal et al [] | Early detection | HC=54; DEM=38 | 73.4 (7.1) | Medium |
| Park et al [] | Early detection | MCI/DEM=177; HC=14,482 | 74.1 (7); 39 (2.3) | Medium |
| Palmer et al [] | Early detection | SCI=23; MCI=44 | 65.8 (7.9) | Medium |
| Wei et al [] | Early detection | HC=20; DEM=8 | Mean 59.2 | Medium |
| Corbi and Burgos [] | Early detection | HC=37; DEM=74 | 80.5 | Medium |


aHC: healthy controls.

bDEM: dementia.

cNCD: neurocognitive disorder.

dMCI: mild cognitive impairment.

eSCI: subjective cognitive impairment.

Characteristics of Included Studies

A total of 49 studies were included in the review (summarized in -). Sample sizes ranged from 14 to 91,948 participants, with a median of 145. A total of 5 large cohorts (ranging between 47,371 and 91,948 participants) heavily influenced the mean values. When stratified by analytic approach, studies using traditional statistical methods (36/49, 73.5%) had a median of 100 participants (mean 2597, SD 13,574), while those applying machine learning (7/49, 14.3%) had a median of 99 participants (mean 114.9, SD 76.4). Studies using deep learning (6/49, 12.2%) had a median of 108 participants (mean 397.6, SD 465.4), with the mean largely driven by population-scale datasets. Most studies were rated as low risk of bias (34/49, 69.4%), about one-quarter (13/49, 26.5%) were classified as medium risk, and 2 (4.1%) as high risk (complete tables are provided in ). Higher risk ratings were associated with small sample sizes and lack of external validation. Studies most commonly included healthy controls (36/49, 73.5%), participants with dementia (35/49, 71.4%), and participants with MCI (29/49, 59.2%). A smaller subset included participants with subjective cognitive decline (4/49, 8.2%) or other at-risk groups (2/49, 4.1%). Regarding study design, longitudinal cohorts were the most common (24/49, 49%), followed by cross-sectional studies (21/49, 42.9%), with fewer randomized controlled trials or diagnostic studies. Most studies (28/49, 57.1%) focused on early detection, while others assessed risk factors (8/49, 16.3%), monitored disease progression (7/49, 14.3%), or evaluated preventive interventions (5/49, 10.2%).

Table 3. Summary of included cohort studies investigating wearable-derived measures in relation to dementia outcomes. The table reports study source, study objective, cohort study design, participant groups and mean ages, and assessed risk of bias.

| Source | Objective | Groups, n | Age (years), mean (SD) | Risk of bias |
| --- | --- | --- | --- | --- |
| Baril et al [] | Progression monitoring | HC<sup>a</sup>=159; MCI<sup>b</sup>=44 | 68.2 (5.4) | Low |
| Sun et al [] | Early detection | HC=807; DEM<sup>c</sup>=270 | 80.9 (7.3) | Low |
| Winer et al [] | Early detection | HC=82,377; DEM=452 | 62.0 (7.8) | Low |
| Haghayegh et al [] | Early detection | HC=90,962; MCI or DEM=555 | 62.4 (7.8) | Low |
| Basta et al [] | Progression monitoring | HC=29; MCI=49; DEM=32 | 80.3 (6.6) | Low |
| Milton et al [] | Risk assessment | HC=476; MCI=164; DEM=93 | 82.5 (2.9) | Low |
| Skourti et al [] | Progression monitoring | HC=146; MCI=231; DEM=128 | 72.8 (6.7) | Low |
| Gao et al [] | Early detection | HC=409; MCI=61; DEM=81 | 58 (8) | Low |
| Jeon et al [] | Early detection | HC=100; MCI=29 | 69.3 (7.7) | Low |
| Yi Lee et al [] | Preventive intervention | HC=123; MCI=51 | 75.6 (6.9) | Low |
| Lysen et al [] | General insight | HC=1262; DEM=60 | 66.1 (7.6) | Low |
| Agudelo et al [] | Early detection | DEM=1035 | 55.2 (2.5) | Low |
| Ning et al [] | Risk assessment | HC=91,212; DEM=736 | Median 63 | Low |
| Ning et al [] | Risk assessment | HC=89,619; DEM=710 | Median 64.9 | Low |
| Zhao et al [] | Risk assessment | HC=87,857; DEM=735 | 61.9 (7.9) | Low |
| Lu et al [] | Early detection | HC=303; MCI=130; DEM=277 | 81.1 (5.2) | Low |
| Chan et al [] | Early detection | HC=46,984; DEM=387 | 67.0 (4.0) | Low |
| Shi et al [] | Early detection | HC=346; DEM=344 | 70.1 (6.9) | Low |
| Xiao et al [] | Progression monitoring | HC=570; MCI=120; DEM=73 | 84.1 | Low |
| Hoepel et al [] | Preventive intervention | HC=1849; DEM=50 | 71.3 (9.26) | Low |
| Cho et al [] | Early detection | DEM=222 | 80.4 (7.4) | Medium |
| Plotogea et al [] | Early detection, preventive intervention | HC=25; SCI<sup>d</sup>=7; MCI=17; DEM=25 | 58.9 (9.8) | Medium |
| Targa et al [] | Early detection | DEM=100 | Median 76 | Medium |
| Cho et al [] | Risk assessment | DEM=145 | 81.2 (6) | Medium |

aHC: healthy controls.

bMCI: mild cognitive impairment.

cDEM: dementia.

dSCI: subjective cognitive impairment.

Table 4. Summary of included studies with other study designs investigating wearable-derived measures in relation to dementia outcomes. The table reports study source, study objective (early detection or progression monitoring), study design (diagnostic study, randomized controlled trial, or quasi-experimental study), participant groups and ages, and assessed risk of bias.

| Source | Objective | Study design | Groups, n | Age (years) | Risk of bias |
| --- | --- | --- | --- | --- | --- |
| Rykov et al [] | Early detection | Quasi-experimental study | MCI<sup>c</sup>=17 | 60.3 (SD 4.5) | Medium |
| David et al [] | Progression monitoring | Randomized controlled trial | DEM<sup>b</sup>=38 | 70 (SD 7) | Medium |
| Khosroazad et al [] | Early detection | Diagnostic study | HC<sup>a</sup>=45; MCI=50 | 73.6 | High |
| Hossain et al [] | Early detection | Diagnostic study | HC=22; MCI=6; DEM=5 | 57.5 | High |

aHC: healthy controls.

bDEM: dementia.

cMCI: mild cognitive impairment.

Table 5. Wearable devices, recording parameters, and analytic methods used across the included studies. The table lists device type, monitoring duration, product and model, analytic approach (statistical, machine learning, deep learning, or clustering), primary task, and cognitive assessments applied.

| Source | Wearable category | Wearable | Product (company name) | Duration (days), n | Model | Cognitive test |
| --- | --- | --- | --- | --- | --- | --- |
| Espinosa et al [] | Research | Actigraph | Actiwatch Spectrum (Philips) | 13.7 | Statistical | MMSE<sup>a</sup> |
| Lentzen et al [] | Commercial research | Smartwatch | Fitbit Charge 3 (Google); AX3 (Axivity); Physilog (Gait Up) | 56 | Machine learning | Amsterdam IADL<sup>b</sup> |
| Manning et al [] | Research | Actigraph | Actiwatch (Philips) | 14 | Statistical | MoCA<sup>c</sup> |
| Jones et al [] | Commercial | Smartwatch | Apple Watch 8 (Apple) | 6.9 | Statistical | HVLT<sup>d</sup> |
| Satomi et al [] | Research | Actigraph | ActTrust (Condor Instruments) | 7 | Statistical | CDR<sup>e</sup> |
| Baril et al [] | Research | Actigraph | Actiwatch (Philips) | 6.5 | Statistical | RBANS<sup>f</sup> |
| Sauers et al [] | Research | Actigraph | Sleep Profiler (Advanced Brain Monitoring); Actiwatch2 (Philips); Alice PDx (Philips) | 6 | Statistical | CDR |
| Sun et al [] | Research | Actigraph | Actical (Philips) | 14 | Deep learning | NINCDS-ADRDA<sup>g</sup> |
| Winer et al [] | Research | Accelerometer | AX3 (Axivity) | 7 | Statistical | TMT<sup>h</sup> |
| Rykov et al [] | Multimodal research | Wrist-worn device | E4 wristband (Empatica) | 10 | Machine learning | NTB<sup>i</sup> |
| Haghayegh et al [] | Research | Accelerometer | AX3 (Axivity) | 6 | Statistical | ICD-10<sup>j</sup> |
| Basta et al [] | Ad hoc | Actigraph | Not specified | 7 | Statistical | ICD-10 |
| Baril et al [] | Research | Actigraph | Actiwatch (Philips) | 6.5 | Statistical | MMSE |
| Milton et al [] | Multimodal research | Actigraph | SleepWatch-O (Ambulatory Monitoring) | 3 | Statistical | MMSE |
| Cho et al [] | Research | Actigraph | ActiGraph wGT3X-BT (ActiGraph) | 14 | Machine learning | MMSE |
| Seo et al [] | Research | Accelerometer | HW-100 (Kao Corporation) | 28 | Statistical | MMSE |
| Huang et al [] | Research | Actigraph | GENEActiv Original (Activinsights Company) | 6 | Statistical | MMSE |
| Khosroazad et al [] | Research | Actigraph | Actiwatch (Philips) | 7 | Deep learning | HVLT |
| Skourti et al [] | Research | Actigraph | Actigraph GT3XP (ActiGraph) | 3 | Statistical | RAVLT<sup>k</sup> |
| David et al [] | Commercial | Smartwatch | Fitbit Charge 2 (Google) | 13 | Statistical | MoCA |
| Gao et al [] | Research | Accelerometer | AX3 (Axivity) | 7 | Statistical | ICD-10 |
| Ghosal et al [] | Research | Accelerometer | Actigraph GT3x+ (ActiGraph) | 7 | Machine learning | CDR |
| Palmer et al [] | Research | Actigraph | Actiwatch Spectrum (Philips) | 10.5 | Deep learning | MMSE |
| Jeon et al [] | Research | Actigraph | Actiwatch 2 (Philips) | 5.5 | Statistical | MMSE |
| Lim et al [] | Ad hoc | Smartwatch | Not specified | 7 | Deep learning | MMSE |
| Plotogea et al [] | Research | Actigraph | Actiwatch Spectrum Pro (Philips) | 7 | Statistical | PHES<sup>l</sup> |
| Boa Sorte Silva et al [] | Research | Actigraph | MotionWatch8 (CamNtech) | 7 | Statistical | MoCA |
| Park et al [] | Commercial | Accelerometer | Fitmeter (FitNLife); ActiGraph AM-7164 (ActiGraph) | 24.6; 7 | Deep learning | MMSE; CDR; GDS<sup>m</sup> |
| Palmer et al [] | Research | Actigraph | Actiwatch Spectrum (Philips) | 7 | Statistical | MMSE |
| Kim et al [] | Research | Actigraph | Spectrum Plus (Philips); Actiwatch Spectrum (Philips) | 10.5 | Statistical | NIH<sup>n</sup> |
| Targa et al [] | Research | Actigraph | Actiwatch 2 (Philips) | 14 | Statistical | MMSE |
| Wei et al [] | Commercial | Smartwatch | Mi Band 2 (Xiaomi) | 14 | Statistical | MMSE |
| Hossain et al [] | Ad hoc | Smartwatch | Not specified | 182.5 | Machine learning | MMSE |
| Cho et al [] | Ad hoc | Actigraph | wGT3X-BT (ActiGraph) | 11.5 | Statistical | MMSE |
| Corbi and Burgos [] | Research | Accelerometer | AX3 (Axivity) | 4 | Machine learning | GDS |
| Basta et al [] | Research | Actigraph | Actigraph GT3XP (ActiGraph) | 3 | Statistical | MMSE |
| Yi Lee et al [] | Research | Actigraph | GENEActiv Original (ActivInsights) | 7 | Statistical | MoCA |
| Roh et al [] | Commercial | Accelerometer | Fitmeter (FitNLife) | 4 | Statistical | SNSB<sup>o</sup> |
| Lysen et al [] | Research | Actigraph | ActiWatch AW4 (CamNtech) | 6 | Statistical | MMSE |
| Agudelo et al [] | Research | Actigraph | Actiwatch Spectrum (Philips) | 7 | Statistical | B-SEVLT-Sum<sup>p</sup> |
| Ning et al [] | Research | Accelerometer | AX3 (Axivity) | 7 | Statistical | ICD-10 |
| Ning et al [] | Research | Accelerometer | AX3 (Axivity) | 7 | Statistical | ICD-10 |
| Zhao et al [] | Research | Accelerometer | AX3 (Axivity) | 7 | Statistical | ICD-10 |
| Lu et al [] | Research | Actigraph | wGT3X-BT (Ametris) | 7 | Statistical | MoCA |
| Chan et al [] | Research | Smartwatch | Not specified | 7 | Statistical | ICD-10 |
| Shi et al [] | Research | Actigraph | wGT3X-BT (Ametris); Actiwatch Spectrum (Philips) | 7; 3 | Deep learning | MoCA |
| Liu et al [] | Commercial | Accelerometer | W180 (Shenzhen Fitfaith) | 14 | Machine learning | MoCA |
| Xiao et al [] | Research | Actigraph | Actigraph GT3XP (Ametris) | 7 | Statistical | TMT |
| Hoepel et al [] | Research | Actigraph | GENEActiv Original (ActivInsights) | 4 | Statistical | MMSE |

<sup>a</sup>MMSE: Mini-Mental State Examination.

<sup>b</sup>Amsterdam IADL: Amsterdam Instrumental Activities of Daily Living.

<sup>c</sup>MoCA: Montreal Cognitive Assessment.

<sup>d</sup>HVLT: Hopkins Verbal Learning Test-Revised.

<sup>e</sup>CDR: Clinical Dementia Rating.

<sup>f</sup>RBANS: Repeatable Battery for the Assessment of Neuropsychological Status.

<sup>g</sup>NINCDS-ADRDA: National Institute of Neurologic, Communicative Disorders and Stroke and Alzheimer’s Disease and Related Disorders Association.

<sup>h</sup>TMT: Trail-Making Test.

<sup>i</sup>NTB: Neuropsychological Test Battery.

<sup>j</sup>ICD-10: International Statistical Classification of Diseases, Tenth Revision.

<sup>k</sup>RAVLT: Rey Auditory Verbal Learning Test.

<sup>l</sup>PHES: Psychometric Hepatic Encephalopathy Score.

<sup>m</sup>GDS: Global Deterioration Scale.

<sup>n</sup>NIH: National Institutes of Health.

<sup>o</sup>SNSB: Seoul Neuropsychological Screening Battery.

<sup>p</sup>B-SEVLT-Sum: Brief Spanish-English Verbal Learning Test.

Table 6. Summary of machine learning used across studies, including model type, main performance metrics, analytical task (classification, regression, or clustering), and the corresponding feature selection or explainable artificial intelligence (XAI) techniques applied. Only the best-performing metric for each model is reported.

| Source | Model | Metrics (best results) | Task | Feature selection/XAI |
| --- | --- | --- | --- | --- |
| Lentzen et al [] | LR<sup>a</sup>; DT<sup>b</sup>; RF<sup>c</sup>; XGBoost<sup>d</sup> | AUC<sup>e</sup>=0.73 | Binary classification | SHAP<sup>f</sup> |
| Rykov et al [] | LR; RF; XGBoost | R²=0.690; ρ=0.700; MAE<sup>g</sup>=0.460 | Regression | Correlation-based feature selection |
| Cho et al [] | LR; RF; GBM<sup>h</sup>; SVM<sup>i</sup> | AUC=0.929; accuracy=0.935; precision=0.800; sensitivity=0.956; F1-score=0.819 | Binary classification | Permutation feature importance |
| Ghosal et al [] | GLM<sup>j</sup>; SOFR<sup>k</sup>; SOTDR<sup>l</sup>; SOTDR-L<sup>m</sup> | AUC=0.811; R²=0.333 | Binary classification; regression | LASSO<sup>n</sup>/GEL<sup>o</sup> penalties + functional coefficients |
| Hossain et al [] | GBM; SVM; LR + LASSO; CTGAN<sup>p</sup> | Accuracy=0.948 | Multiclass classification; regression | Correlation-based feature selection; wrapper feature selection |
| Corbi and Burgos [] | Expectation-maximization clustering | Accuracy=0.910 | Unsupervised clustering | Feature relevance via variable testing |
| Liu et al [] | GBDT<sup>q</sup>; XGBoost<sup>r</sup> | Accuracy=0.757; recall=0.952; AUC=0.628 | Binary classification | Permutation importance |

<sup>a</sup>LR: logistic regression.

<sup>b</sup>DT: decision tree.

<sup>c</sup>RF: random forest.

<sup>d</sup>XGBoost: Extreme Gradient Boosting.

<sup>e</sup>AUC: area under the curve.

<sup>f</sup>SHAP: Shapley additive explanations.

<sup>g</sup>MAE: mean absolute error.

<sup>h</sup>GBM: gradient boosting machine.

<sup>i</sup>SVM: support vector machine.

<sup>j</sup>GLM: generalized linear model.

<sup>k</sup>SOFR: scalar-on-function regression.

<sup>l</sup>SOTDR: scalar on time-by-distribution regression.

<sup>m</sup>SOTDR-L: SOTDR via time varying L moments.

<sup>n</sup>LASSO: least absolute shrinkage and selection operator.

<sup>o</sup>GEL: group exponential LASSO.

<sup>p</sup>CTGAN: conditional tabular generative adversarial network.

<sup>q</sup>GBDT: gradient boosting decision tree.

<sup>r</sup>XGBoost: Extreme Gradient Boosting.

Table 7. Summary of deep learning used across studies, including model type, main performance metrics, analytical task (classification, regression, or survival analysis), and the corresponding feature selection or explainable artificial intelligence (XAI) techniques applied. Only the best-performing metric for each model is reported.

| Source | Model | Metrics (best results) | Task | Feature selection/XAI |
| --- | --- | --- | --- | --- |
| Sun et al [] | CNN<sup>a</sup>; ElasticNet; RSF<sup>b</sup> | C-index=0.840; AUC<sup>c</sup>=0.880 | Survival analysis (time to Alzheimer disease onset) | Gradient and Gini feature importance; hazard ratio interpretability |
| Khosroazad et al [] | Neural network | AUC=0.880; sensitivity=0.870; specificity=0.890 | Binary classification | Intrinsic via time-latency |
| Palmer et al [] | MS-GAN<sup>d</sup>; Bayesian LR<sup>e</sup> | Dice=0.730; OR<sup>f</sup>=1.830 | Segmentation; regression | Bayesian coefficients (odds ratio and CI) |
| Lim et al [] | Neural network + PCA<sup>g</sup> | AUC=0.990 | Binary classification | Correlation-based interpretability |
| Park et al [] | 1D convolutional autoencoder + LR | R²=0.979 | Regression | Backward feature elimination |
| Shi et al [] | CDPred<sup>h</sup>; XGBoost<sup>i</sup> | Hip: accuracy=0.84 and AUC=0.86; Wrist: accuracy=0.69 and AUC=0.73 | Binary classification | Nonzero predictor relative importance ranking |

<sup>a</sup>CNN: convolutional neural network.

<sup>b</sup>RSF: random survival forest.

<sup>c</sup>AUC: area under the curve.

<sup>d</sup>MS-GAN: multispecies generative adversarial network.

<sup>e</sup>LR: logistic regression.

<sup>f</sup>OR: odds ratio.

<sup>g</sup>PCA: principal component analysis.

<sup>h</sup>CDPred: cognitive decline predictor.

<sup>i</sup>XGBoost: Extreme Gradient Boosting.

Table 8. Summary of statistical approaches applied across studies, including model type, reporting metrics, covariates or adjustment factors, and main associations between wearable-derived variables and cognitive impairment or dementia indicators. Only the most relevant and statistically significant results for each study are presented. For wearable-derived metrics, arrows indicate the direction of association: an upward arrow (↑) denotes that higher values of the variable are associated with greater cognitive impairment, whereas a downward arrow (↓) indicates the opposite.

| Source | Model | Metrics | Score |
| --- | --- | --- | --- |
| Espinosa et al [] | General linear models | CWP<sup>a</sup> | IV<sup>b</sup> (CWP<.001, ↑); IS<sup>c</sup> (CWP<.001, ↓) |
| Manning et al [] | Pearson correlation analysis | Pearson CC<sup>d</sup>, P value | Activity counts (CC=–0.829; P=.041, ↓) |
| Jones et al [] | Linear regression models | β coefficient, 95% CI | IV (β≈.40, q=.022, ↑); sleep onset (β=–.28, 95% CI –0.55 to –0.02, ↑); IS (β=–.27, 95% CI –0.54 to 0.00, ↓) |
| Satomi et al [] | Multinomial logistic regression | RR<sup>e</sup>, 95% CI, P value | IV (RR 3.21, 95% CI 1.40-7.34, P=.006, ↑); IS (RR 0.44, 95% CI 0.21-0.93, P=.03, ↓); M10<sup>f</sup> (RR 0.40, 95% CI 0.18-0.89, P=.02, ↓) |
| Baril et al [] | Linear regression models | Standardized β coefficient, P value | Sleep duration (β=.384, P=.001, ↑) |
| Sauers et al [] | Linear regression models | Estimate (β), P value | Sleep efficiency (β=–6.026, P<.001, ↓); sleep latency (β=11.302, P<.001, ↑); number of awakenings (β=6.585, P=.001, ↑) |
| Winer et al [] | Cox proportional hazards models | HR<sup>g</sup>, 95% CI, P value | IS (HR 1.25, 95% CI 1.050-1.480; P=.007, ↑); amplitude (HR 0.79, 95% CI 0.650-0.960, P=.02, ↓); M10 (HR 0.75, 95% CI 0.610-0.940, P=.01, ↓); MESOR<sup>h</sup> (HR 0.78, 95% CI 0.590-0.998, P=.048, ↓) |
| Haghayegh et al [] | Cox proportional hazard models | HR, 95% CI | Amplitude (HR 1.32, 95% CI 1.17-1.49, ↑); M10 (HR 1.28, 95% CI 1.14-1.44, ↑); L5<sup>i</sup> (HR 1.15, 95% CI 1.10-1.21, ↑); IV (HR 1.14, 95% CI 1.05-1.24, ↑); rest-activity rhythm (HR 1.23, 95% CI 1.16-1.29, ↑) |
| Basta et al [] | ANCOVA | P value | Sleep duration (night TST<sup>j</sup>, P<.001, ↑); TiB<sup>k</sup> (night TiB, P<.001, ↑) |
| Baril et al [] | Linear regression models | P value | Sleep duration (P<.05, ↑); activity counts (P<.05, ↑); circadian rhythm (P<.05, ↑) |
| Milton et al [] | Multinomial logistic regression | OR<sup>l</sup>, 95% CI | Wake after sleep onset (OR 2.26, 95% CI 1.12-4.55, ↑); sleep efficiency (OR 2.15, 95% CI 1.03-4.47, ↓) |
| Seo et al [] | Two-way ANCOVA | P value | Movement/acceleration (P=.03, ↓) |
| Huang et al [] | Unconditional multivariable logistic regression | AOR<sup>m</sup>, 95% CI | MESOR (AOR 1.99, 95% CI 1.04-3.81, ↑) |
| Skourti et al [] | Path models (analysis of moment structures) | Standardized β coefficient, P value | Sleep efficiency (direct β=.266, P=.001, ↓); wake after sleep onset (direct β=–.211, P=.001, ↑); TiB (24-hour TiB, indirect β=–.079, P<.001, ↑) |
| David et al [] | Spearman rank correlation | Partial correlation coefficient (ρ), P value | Moderate-to-vigorous physical activity (ρ=0.558, P=.02, ↓) |
| Gao et al [] | Cox proportional hazards | HR, 95% CI | Amplitude (HR 1.94, 95% CI 1.53-2.46, P<.001, ↑); IV (HR 1.49, 95% CI 1.18-1.88, P<.001, ↑); sleep duration (HR 1.28, 95% CI 1.06-1.55, P=.01, ↑) |
| Jeon et al [] | Multivariate linear regressions | β coefficient, P value | Acrophase (β=–.256, P=.004, ↑) |
| Plotogea et al [] | Multivariate logistic regression | OR, 95% CI, P value | Sleep efficiency (OR 0.803, 95% CI 0.711-0.904, P=.001, ↓); sleep latency (OR 1.212, 95% CI 1.063-1.383, P=.004, ↑) |
| Boa Sorte Silva et al [] | Linear regression models | Unstandardized β (β), P value | Fragmentation index (β=.004, P=.046, ↑) |
| Palmer et al [] | Fixel-wise linear regression; Bayesian multiple linear regression | β coefficient, P value | L5 (β=.29, P<.050, ↑) |
| Kim et al [] | Multivariable linear models | Partial rank correlation (ρ), P value | IV (ρ=–0.44, P<.001, ↑); M10 (ρ=0.45, P<.001, ↓); IS (ρ=0.40, P=.009, ↓) |
| Targa et al [] | Linear regression models | Effect size, P value | IV (effect size=–0.715, P=.013, ↑) |
| Wei et al [] | Descriptive statistics; 1-tailed t test | Mean (SD), P value | Amplitude (0.93, SD 0.59, P=.030, ↓); IS (0.32, SD 0.19, P=.02, ↓); acrophase (44, SD 145, P<.001, ↓) |
| Cho et al [] | Generalized linear mixed model | OR, 95% CI, P value | Sleep duration (OR 0.9, 95% CI 0.8-1.0, P<.001, ↓); activity counts (OR 0.02, 95% CI 0.0-0.5, P=.02, ↓) |
| Basta et al [] | ANCOVA | Mean (SD), P value | Sleep duration (night TST=7.7-hour vs 7.2-hour, P=.011, ↑); sleep duration (24-hour TST=8.5-hour vs 7.8-hour, P=.012, ↑) |
| Yi Lee et al [] | Multivariate logistic regression; multinomial logistic regression | AOR, 95% CI | Percent rhythm (AOR 0.26, 95% CI 0.08-0.79, ↓) |
| Roh et al [] | Multiple linear regression | Estimate (β), SE, P value | MESOR (β=1.17, SE=0.37, P<.001, ↓); L5 (β=3.77, SE=1.22, P<.001, ↓) |
| Lysen et al [] | Cox proportional hazards | HR, 95% CI | Sleep latency (HR 1.44, 95% CI 1.13-1.83, ↑); TiB (HR 1.40, 95% CI 1.04-1.88, ↑); sleep efficiency (HR 0.72, 95% CI 0.55-0.93, ↓); wake after sleep onset (HR 1.38, 95% CI 1.10-1.74, ↑) |
| Agudelo et al [] | Survey linear regression models | β coefficient, P value | Sleep latency (β=–.003, P<.001, ↑); sleep duration (β=–.070, P<.05, ↑) |
| Ning et al [] | Cox proportional hazards | HR, 95% CI | Moderate-to-vigorous physical activity (HR 0.69, CI 0.54-0.87, P<.001, ↓) |
| Ning et al [] | Cox proportional hazards | HR, 95% CI | Moderate-to-vigorous physical activity (HR 0.60, 95% CI 0.40-0.90, ↓) |
| Zhao et al [] | Cox proportional hazards | HR, 95% CI | Sleep duration (HR 0.801, 95% CI 0.717-0.893, ↓) |
| Lu et al [] | Logistic regression models ANCOVA | OR, 95% CI | Relative amplitude (OR 1.68, 95% CI 1.12-2.50, ↑) |
| Chan et al [] | Cox proportional hazards | HR, 95% CI | Bedtime (HR 1.52, 95% CI 1.22-1.85, ↑) |
| Xiao et al [] | Cox proportional hazards | HR, 95% CI | MESOR (HR 2.45, 95% CI 1.52-3.94, ↓) |
| Hoepel et al [] | Cox proportional hazards | HR, 95% CI | Sedentary behavior (HR 0.36, 95% CI 0.24-0.55, ↓) |

<sup>a</sup>CWP: clusterwise P value.

<sup>b</sup>IV: intradaily variability.

<sup>c</sup>IS: interdaily stability.

<sup>d</sup>CC: correlation coefficient.

<sup>e</sup>RR: relative risk.

<sup>f</sup>M10: most active 10-hour period.

<sup>g</sup>HR: hazard ratio.

<sup>h</sup>MESOR: midline estimating statistic of rhythm.

<sup>i</sup>L5: least active 5-hour period.

<sup>j</sup>TST: total sleep time.

<sup>k</sup>TiB: time in bed.

<sup>l</sup>OR: odds ratio.

<sup>m</sup>AOR: adjusted odds ratio.

Feasibility of Quantitative Synthesis

The feasibility of quantitative meta-analysis for early detection depends on whether the included studies estimate a common outcome construct or estimand, because pooling requires studies to measure the same underlying construct. In the current literature, early detection is not operationalized as a single measurable outcome but is addressed through multiple, nonequivalent analytical objectives and metrics. The evidence base is characterized by the following dimensions:

Reported statistical metric: of the 49 included studies, 13 (26.5%) report performance metrics from AI-based approaches, such as accuracy or area under the curve. The remaining 36 studies report heterogeneous statistical effect measures, including hazard ratios for time-to-event analyses (n=16), odds ratios (ORs) for binary risk (n=8), and beta coefficients or correlation measures for continuous associations (n=12). These metrics reflect distinct inferential targets and are not interchangeable.

Device and sensor characteristics: most studies rely on research-grade actigraphy with access to raw accelerometry data (43/49, 87.8%), whereas a smaller subset uses consumer-grade wearables integrating additional sensors and proprietary processing (7/49, 14.3%). Differences in device type, sensor modality, and preprocessing lead to wearable-derived measures that do not map onto a common estimand.

Study design: study designs were divided between cross-sectional analyses assessing concurrent associations (21/49, 42.9%) and longitudinal cohort studies estimating prospective risk or disease onset (24/49, 49%). These designs address different research questions and operate over distinct temporal frameworks.

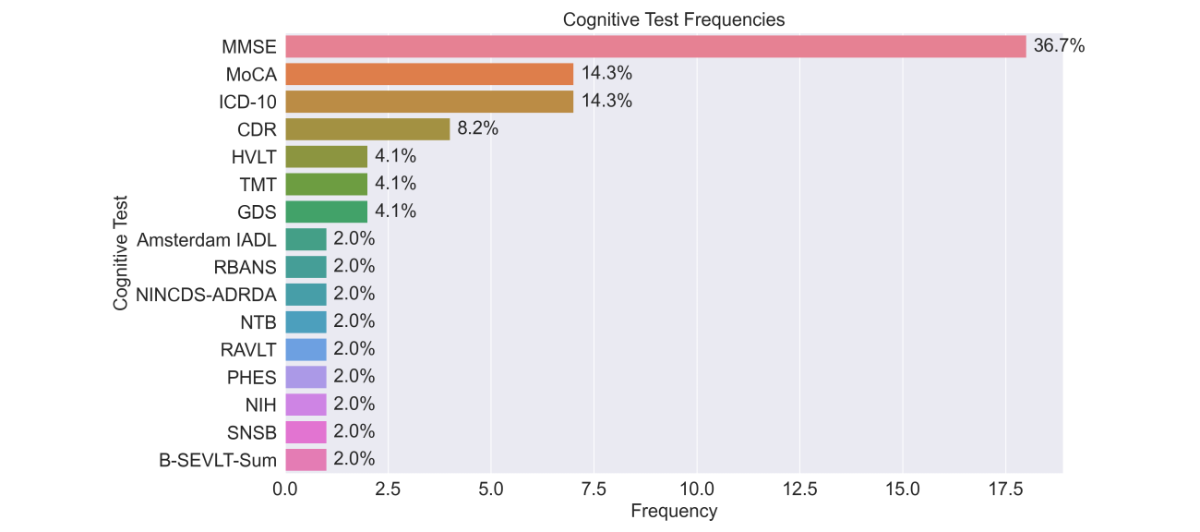

Clinical endpoint: cognitive outcomes included both categorical clinical diagnoses and continuous screening scores. The MMSE was the most frequently used outcome (18/49, 36.7%), followed by ICD-10 (International Statistical Classification of Diseases, Tenth Revision)–based diagnoses and the MoCA (each 7/49, 14.3%). These endpoints differ in scale, sensitivity, and clinical interpretation.

Because early detection is not defined as a single measurable construct across studies, no common estimand can be specified for quantitative pooling. A structured narrative synthesis is therefore adopted to examine how analytical approaches, cognitive assessments, and wearable measurement strategies are used to demonstrate early detection capability across the cognitive continuum.

Wearable Categories

Wearable devices were categorized into 4 groups according to their primary intended use. Research devices were by far the most common, reported in 87.8% (43/49) of studies, and included systems such as ActiGraph, Actiwatch, and accelerometer loggers (eg, Axivity AX3). These devices were purpose-built and validated for assessing activity, sleep, and circadian rhythms in research and clinical contexts. Commercial everyday wearables, such as Fitbit, Apple Watch, and Xiaomi Mi Band, were used in 14.3% (7/49) of studies; these multipurpose consumer devices are affordable and widely accessible but often provide limited access to raw data. Ad hoc prototypes, developed specifically for individual studies, accounted for 8.2% (4/49) of studies. Finally, multimodal research devices were used in 4.1% (2/49) of studies, typically integrating physiological sensing beyond accelerometry, such as heart rate, electrodermal activity, or peripheral arterial tonometry. Counts are nonexclusive because some studies used multiple device types in the same cohort [].

Across all categories, wear time was typically about 1 week (median, 7 days), with durations ranging from 3-4 days to several months (up to 182.5 days in 1 longitudinal cohort). Most of the studies (29/49, 59.2%) relied on actigraphy devices to capture rest–activity rhythms and sleep, followed by accelerometer devices (12/49, 24.5%) and smartwatches (7/49, 14.3%).

Cognitive Outcomes

The most frequently used cognitive measures were global screening tests (Figure 3). The MMSE was the most frequently used measure, applied in 36.7% (18/49) of studies. Clinical diagnoses based on ICD-10 dementia or MCI codes and the MoCA were each reported in 14.3% (7/49) of studies, and the Clinical Dementia Rating was used in 8.2% (4/49) of studies. The Hopkins Verbal Learning Test and the Global Deterioration Scale were each used in 4.1% (2/49) of studies. A wide range of other neuropsychological and functional instruments, including the Amsterdam Instrumental Activities of Daily Living scale, Repeatable Battery for the Assessment of Neuropsychological Status, National Institute of Neurologic, Communicative Disorders and Stroke and Alzheimer’s Disease and Related Disorders Association criteria, Trail Making Test, Neuropsychological Test Battery, Rey Auditory Verbal Learning Test, Psychometric Hepatic Encephalopathy Score, National Institutes of Health toolbox, Seoul Neuropsychological Screening Battery, and Brief Spanish-English Verbal Learning Test-Sum, were each applied in 2% (1/49) of studies.

One study applied AI-based approaches to directly predict cognitive test scores [], while others relied on predefined cut-off points for classification [,,,,,]. Some studies used both direct score estimation and classification approaches [,]. A separate line of work focused on predicting survival outcomes, which were not directly comparable to cognitive test scores []. Finally, 2 studies addressed related regression tasks by estimating white matter characteristics in the brain from wearable-derived data [,].

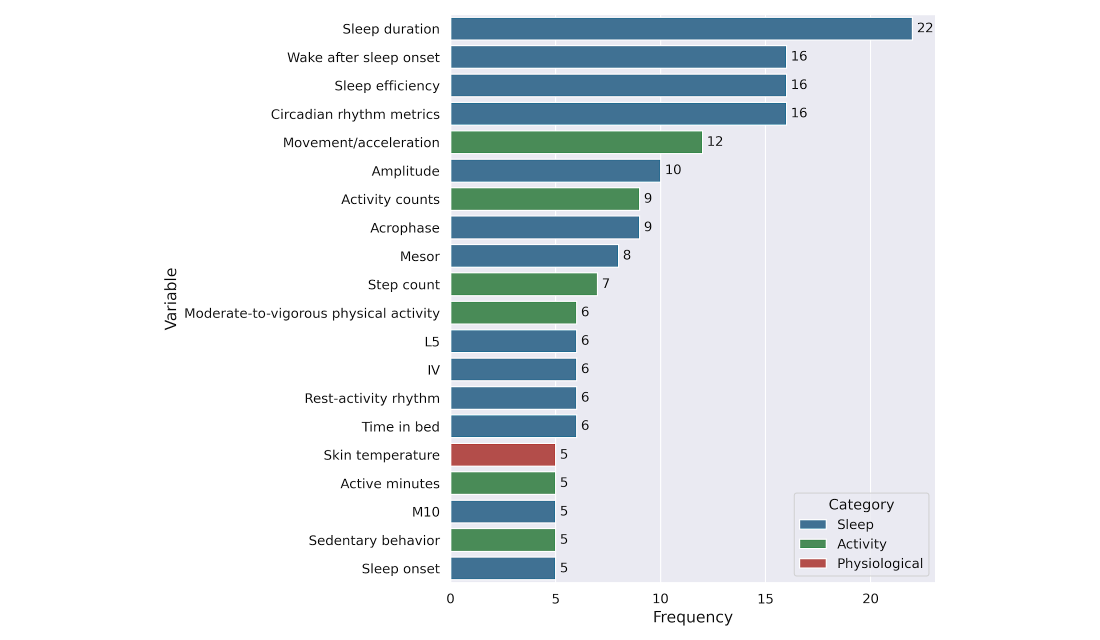

Across the 49 studies, the most frequently reported wearable-derived variables (complete list is provided in ) used to estimate cognitive assessments () were predominantly sleep-related. Sleep duration was the most frequently reported measure (22/49, 44.9%), followed by wake after sleep onset (16/49, 32.7%), sleep efficiency (16/49, 32.7%), and circadian rhythm metrics (16/49, 32.7%). Activity-related variables were reported less often overall, including movement/acceleration (12/49, 24.5%), activity counts (9/49, 18.4%), step count (7/49, 14.3%), moderate-to-vigorous physical activity (6/49, 12.2%), active minutes (5/49, 10.2%), and sedentary behavior (5/49, 10.2%). Physiological variables were least commonly reported, with skin temperature appearing in a small subset of studies (5/49, 10.2%).

Figure 3. Frequency of cognitive assessment instruments across included studies. The bar chart illustrates the distribution of specific cognitive tests and diagnostic criteria used. Frequencies and percentages represent the prevalence of each tool within the total sample of studies. Amsterdam IADL: Amsterdam Instrumental Activities of Daily Living; B-SEVLT-Sum: Brief Spanish-English Verbal Learning Test; CDR: Clinical Dementia Rating; GDS: Global Deterioration Scale; HVLT: Hopkins Verbal Learning Test-Revised; ICD-10: International Classification of Diseases, Tenth Revision; MMSE: Mini-Mental State Examination; MoCA: Montreal Cognitive Assessment; NIH: National Institutes of Health; NINCDS-ADRDA: National Institute of Neurologic, Communicative Disorders and Stroke and Alzheimer’s Disease and Related Disorders Association; NTB: Neuropsychological Test Battery; PHES: Psychometric Hepatic Encephalopathy Score; RAVLT: Rey Auditory Verbal Learning Test; RBANS: Repeatable Battery for the Assessment of Neuropsychological Status; SNSB: Seoul Neuropsychological Screening Battery; TMT: Trail-Making Test.


Figure 4. Top 20 most frequently used wearable-derived variables across studies. Variables are grouped by domain: sleep (blue), activity (green), and physiological (red). Frequencies represent the number of studies reporting each variable after standardization and filtering for wearable compatibility.

Wearable Data Analysis Approaches

Traditional statistical methods were the most frequently applied, reported in 73.5% (36/49) of studies (Table 8). These included group comparisons, correlation analyses, and regression models evaluating associations between wearable-derived activity or sleep metrics and cognitive outcomes. Commonly reported features were total daily activity counts, sleep duration and efficiency, circadian rhythm indices (intradaily variability, interdaily stability, and relative amplitude), and timing markers such as acrophase or sleep midpoint. The list of wearable-derived features used across these studies, with a description per feature, is included in .
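For readers less familiar with the circadian rhythm indices named above, the following is a minimal sketch of how interdaily stability (IS), intradaily variability (IV), and relative amplitude (RA) are commonly computed from activity data using the standard nonparametric definitions. It is illustrative only: the function name, the assumption of hourly bins covering whole days, and the wrap-around windows used for M10 and L5 are choices made here, and the included studies may have used different epochs, software, or preprocessing.

```python
import numpy as np

def rest_activity_indices(hourly, hours_per_day=24):
    """Nonparametric rest-activity indices from an hourly activity series.

    Assumes `hourly` covers whole days (length divisible by hours_per_day)
    and is not constant (total variance must be nonzero).
    """
    x = np.asarray(hourly, dtype=float)
    n = x.size
    if n % hours_per_day != 0:
        raise ValueError("series must cover whole days")
    days = x.reshape(-1, hours_per_day)
    grand_mean = x.mean()
    total_ss = np.sum((x - grand_mean) ** 2)

    # Interdaily stability: variance of the average 24-hour profile
    # relative to total variance (values near 1 mean very regular days).
    profile = days.mean(axis=0)
    interdaily = (n * np.sum((profile - grand_mean) ** 2)) / (hours_per_day * total_ss)

    # Intradaily variability: squared hour-to-hour changes relative to
    # total variance (higher values mean a more fragmented rhythm).
    intradaily = (n * np.sum(np.diff(x) ** 2)) / ((n - 1) * total_ss)

    # M10 and L5: mean activity of the most active 10 hours and least active
    # 5 hours of the average profile, with windows wrapping around midnight.
    def window_means(width):
        wrapped = np.concatenate([profile, profile[: width - 1]])
        return np.convolve(wrapped, np.ones(width) / width, mode="valid")

    m10 = window_means(10).max()
    l5 = window_means(5).min()
    relative_amplitude = (m10 - l5) / (m10 + l5)
    return {"IS": interdaily, "IV": intradaily, "RA": relative_amplitude,
            "M10": m10, "L5": l5}

# Hypothetical input: 7 identical days with 8 hours of rest and 16 hours of activity.
example = ([0.0] * 8 + [100.0] * 16) * 7
print({name: round(float(value), 3) for name, value in rest_activity_indices(example).items()})
```

For this idealized input, IS and RA both equal 1 (up to floating-point rounding) because every day is identical and the rest period contains no activity; real recordings yield lower IS and RA values and nonzero L5.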

Most studies relying on conventional statistical approaches reported significant associations between wearable-derived variables and cognitive outcomes, although the magnitude of reported effects varied with sample size. In smaller studies (n<100), standardized regression coefficients and odds ratios tended to be larger, typically ranging from β≈.35-.55 or OR 2.0-3.1 [,,]. In larger cohort studies (n≥100), effect estimates were generally lower, around β≈.10-.25 or OR 1.3-1.8 [,,,].

According to established benchmarks for social and behavioral sciences, standardized coefficients are typically categorized as small (β≈.20), medium (β≈.50), or large (β≈.80) []. While the estimates from larger cohorts in this review fall within the small-to-moderate range, they must be interpreted within the specific context of cognitive research. Given that cognitive performance is a multifactorial outcome influenced by a broad array of genetic, environmental, and demographic variables, the observation of stable effect sizes between .10 and .25 is highly meaningful. Such values represent robust contributions to the variance that, despite their conservative magnitude, suggest that wearable-derived metrics are reliable markers of cognitive health at a population level. While most associations reached statistical significance (P<.05), only a limited number of studies reported fully adjusted models or external validation [].
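Because the ranges quoted above mix standardized β coefficients and odds ratios, it can help to place the odds ratios on a standardized scale before comparing them. The sketch below uses the widely cited logistic approximation d = ln(OR) × √3/π. This conversion was not applied by the included studies or in this review, the function name is hypothetical, and the resulting standardized mean difference is only loosely comparable with a regression β.

```python
import math

def odds_ratio_to_d(odds_ratio):
    """Approximate standardized mean difference (d) from an odds ratio,
    using the logistic-distribution conversion d = ln(OR) * sqrt(3) / pi."""
    return math.log(odds_ratio) * math.sqrt(3) / math.pi

# Odds ratio ranges quoted in the text for smaller and larger studies.
reported_ranges = {"n<100": (2.0, 3.1), "n>=100": (1.3, 1.8)}

for label, (low, high) in reported_ranges.items():
    print(label, round(odds_ratio_to_d(low), 2), round(odds_ratio_to_d(high), 2))
# n<100 0.38 0.62
# n>=100 0.14 0.32
```

Under this approximation, the odds ratios from larger cohorts correspond to roughly d=0.14-0.32, which is broadly in line with the small-to-moderate range discussed above.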

Machine learning methods were applied in 14.3% (7/49) of studies, most often for classification tasks such as distinguishing MCI or dementia from healthy controls. Machine learning models included logistic regression, decision trees, support vector machines, random forests, and gradient-boosting approaches such as Extreme Gradient Boosting (XGBoost). Deep learning approaches were reported in 12.2% (6/49) of studies and were typically applied in larger datasets, where models captured temporal patterns of activity or sleep. Deep learning architectures included neural networks, convolutional neural networks, generative adversarial network–based approaches, and autoencoders. In addition, a small number of studies (2/49, 4.1%) applied clustering techniques to identify subgroups with distinct rest–activity profiles.

Across machine learning and deep learning studies, reported performance metrics varied widely depending on task, cohort size, and study design. Classification performance ranged from moderate discrimination in a pragmatic setting (eg, area under the receiver operating characteristic curve [AUROC]≈0.73; n=229; dementia=160 and healthy controls=69 []) to higher values in a more constrained cohort of similar size (eg, AUROC≈0.93; n=222; affective symptoms=126 and no affective symptoms=96 []). Deep learning models reported metrics such as a C-index of approximately 0.84 (n=1077; dementia=270 and healthy controls=807) for long-horizon prediction of Alzheimer disease onset [] and R² values approaching 0.98 (n=14,659; dementia=177 and healthy controls=14,482) for regression tasks estimating brain structural characteristics from wearable-derived data []. Several high-performing models were trained on small or imbalanced datasets, including studies with limited case numbers or highly unequal case–control ratios [,,,].

In addition, machine learning approaches applied to wearable-derived features achieved high discriminative performance across multiple tasks. Reported results included classification accuracies up to 93.5% (n=222; sleep and nighttime behaviors=81 and not sleep and nighttime behaviors=141) [] for identifying high-risk individuals; accuracies of approximately 94%-95% (n=33; dementia=5, MCI=6, and healthy controls=22) [] across multiple cognitive impairment levels; area-under-the-curve values ranging from approximately 0.73 (n=229; dementia=160 and healthy controls=69) [] to 0.99 (n=18; dementia=4 and healthy controls=14) [] for early or prodromal Alzheimer disease detection; sensitivities and specificities exceeding 85% (n=95; MCI=50 and healthy controls=45) [] for distinguishing MCI from normal cognition; and C-index values up to 0.84 (n=1077; dementia=270 and healthy controls=807) [] for long-horizon Alzheimer disease onset prediction.
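The class counts quoted above allow a simple check on how informative accuracy is in each cohort: the accuracy obtained by always predicting the largest class. The following sketch computes this majority-class baseline from the counts given in the text. The function name and cohort labels are hypothetical, and the comparison is offered only as context for reading the reported figures, not as a reanalysis of the cited studies.

```python
def majority_baseline_accuracy(class_counts):
    """Accuracy of a trivial classifier that always predicts the largest class."""
    total = sum(class_counts.values())
    return max(class_counts.values()) / total

# Class counts as quoted in the text for the classification cohorts.
cohorts = {
    "n=222": {"sleep and nighttime behaviors": 81, "none": 141},
    "n=33": {"dementia": 5, "MCI": 6, "healthy controls": 22},
    "n=229": {"dementia": 160, "healthy controls": 69},
    "n=18": {"dementia": 4, "healthy controls": 14},
    "n=95": {"MCI": 50, "healthy controls": 45},
}

for label, counts in cohorts.items():
    print(label, round(majority_baseline_accuracy(counts), 3))
# n=222 0.635
# n=33 0.667
# n=229 0.699
# n=18 0.778
# n=95 0.526
```

A reported accuracy is most informative when read against this baseline; for example, in the n=18 cohort a classifier that labels everyone as healthy would already be correct for 14 of 18 participants.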

Interpretability approaches were reported in a limited subset of machine learning and deep learning studies. Explicit explainability techniques were applied in 46.2% (6/13) of AI-based studies, including Shapley additive explanations (SHAP) values or permutation feature importance in 2 machine learning studies [,], functional coefficients in 1 statistical–machine learning hybrid approach [], and Bayesian coefficient estimation in 1 deep learning framework []. One additional study reported feature relevance through statistical testing in a clustering-based approach []. The remaining AI-based studies relied on feature-selection heuristics or dimensionality reduction methods, such as correlation-based selection, wrapper methods, or principal component analysis, without reporting interpretable model outputs [,,,].
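As an illustration of one of the explainability techniques mentioned above, the sketch below shows the general idea behind permutation feature importance: shuffle one feature at a time and record how much a performance metric drops. It is a generic, model-agnostic version written for this example; the function signature, the number of repeats, and the toy data are assumptions and do not reproduce the implementation of any included study.

```python
import numpy as np

def permutation_importance(predict, X, y, metric, n_repeats=20, seed=0):
    """Mean drop in `metric` when each feature column is shuffled.

    `predict` maps a feature matrix to predictions; `metric(y_true, y_pred)`
    returns a score where higher is better.
    """
    rng = np.random.default_rng(seed)
    X = np.asarray(X, dtype=float)
    baseline = metric(y, predict(X))
    importances = np.zeros(X.shape[1])
    for col in range(X.shape[1]):
        drops = []
        for _ in range(n_repeats):
            X_perm = X.copy()
            # Shuffling breaks the link between this feature and the outcome.
            X_perm[:, col] = rng.permutation(X_perm[:, col])
            drops.append(baseline - metric(y, predict(X_perm)))
        importances[col] = np.mean(drops)
    return importances

# Toy data (hypothetical): the outcome depends on feature 0 only.
rng = np.random.default_rng(1)
features = rng.normal(size=(200, 2))
labels = (features[:, 0] > 0).astype(int)

def toy_model(data):
    return (data[:, 0] > 0).astype(int)

def accuracy(truth, pred):
    return float(np.mean(truth == pred))

print(permutation_importance(toy_model, features, labels, accuracy))
```

In this toy case, shuffling the informative feature lowers accuracy substantially, whereas shuffling the unused feature leaves it unchanged, which is the pattern such importance scores are meant to reveal.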

Prevention-Oriented Findings

The included studies were divided into 2 categories according to the type of contribution reported. A total of 22.4% (11/49) of studies explicitly provided quantitative results relevant to the early detection or prevention of cognitive decline (direct evidence), defined as studies demonstrating or validating predictive or preventive applications. The remaining 38 (77.6%) studies contributed indirect evidence, identifying associations between wearable-derived features and cognitive outcomes that may inform, but do not yet constitute, preventive or predictive applications.

Among studies providing direct evidence (n=11), longitudinal or interventional designs were commonly used. Specifically, 45.5% (5/11) of studies used longitudinal follow-up or randomized designs, while 54.5% (6/11) of studies relied on cross-sectional analyses. Direct-evidence studies included randomized intervention trials reporting outcomes such as slower progression to dementia, improved gait speed, or increased adherence to preventive programs, as well as longitudinal cohort studies showing that disrupted rest–activity rhythms, sleep fragmentation, and physical activity patterns predicted incident dementia or MCI over follow-up periods extending up to 8-15 years. Several studies further demonstrated that wearable-derived motor activity features could forecast clinical Alzheimer disease onset, with reported C-index values ranging from approximately 0.80 to 0.84.
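To clarify what the C-index values cited above measure, the following is a minimal sketch of Harrell's concordance index for right-censored follow-up data: among pairs of participants whose ordering in time is known, it is the proportion in which the participant with the earlier event also had the higher predicted risk. The function name and the toy data are hypothetical, tied event times are simply skipped, and the included studies may have used other estimators.

```python
def concordance_index(times, events, risk_scores):
    """Harrell's C-index for right-censored data (simplified sketch).

    times: follow-up time per participant
    events: 1 if the event (eg, dementia onset) was observed, 0 if censored
    risk_scores: model output where higher means higher predicted risk
    """
    concordant = 0.0
    comparable = 0
    n = len(times)
    for i in range(n):
        if not events[i]:
            continue  # a censored participant cannot be the earlier event in a pair
        for j in range(n):
            if times[i] < times[j]:
                comparable += 1
                if risk_scores[i] > risk_scores[j]:
                    concordant += 1.0
                elif risk_scores[i] == risk_scores[j]:
                    concordant += 0.5  # tied predictions count as half
    if comparable == 0:
        raise ValueError("no comparable pairs")
    return concordant / comparable

# Toy follow-up data (hypothetical): years to event or censoring.
follow_up = [2, 4, 6, 8]
observed = [1, 1, 0, 1]
well_ordered_risk = [0.9, 0.7, 0.5, 0.1]
print(concordance_index(follow_up, observed, well_ordered_risk))  # 1.0
```

A value of 0.5 corresponds to chance-level ranking and 1.0 to perfect ranking, so the reported values of approximately 0.80 to 0.84 indicate that the models ordered most comparable pairs correctly.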

In contrast, studies contributing indirect evidence (n=38) more frequently adopted cross-sectional designs. Of these, 52.6% (20/38) of studies were cross-sectional, whereas 47.4% (18/38) of studies used longitudinal designs. These studies primarily focused on identifying associations between wearable-derived sleep, circadian rhythm, activity, or physiological measures and cognitive outcomes, including global cognitive scores, neuropsychological performance, biomarkers, or clinical diagnoses, rather than on prediction or intervention.

Across all included studies, longitudinal designs accounted for 44.9% (22/49) of studies, while 55.1% (27/49) of studies used cross-sectional designs. This distribution reflects differing study aims, with direct evidence studies more often incorporating longitudinal follow-up or intervention components, and indirect evidence studies emphasizing associative analyses.

Overall, wearable technologies contributed to prevention-oriented research both directly, by demonstrating predictive and early detection capabilities in longitudinal and interventional settings, and indirectly, by identifying behavioral and physiological markers associated with future cognitive decline. This dual contribution reflects the role of wearables as both measurement tools for preventive interventions and sources of early behavioral risk markers in dementia research.

Discussion

Principal Findings

This systematic review synthesized 49 studies published since 2020 on wearable devices for the early detection and prevention of cognitive impairment and dementia. The findings are presented in 4 dimensions corresponding to the subobjectives described earlier.

Wearable Devices and Measurement Contexts

Research-grade actigraphy and accelerometry devices were used in most of the included studies (43/49, 87.8%), reflecting both a strong methodological emphasis on validated access to raw accelerometry data and the relative maturity of actigraphy-based approaches in this field [,]. Evidence from these studies was derived predominantly from observational designs, including cross-sectional and longitudinal cohorts, and was frequently assessed as having low risk of bias, particularly among cohort studies with large samples [,,]. Across actigraphy-based studies, sample sizes were heterogeneous but predominantly large, with nearly four-fifths enrolling 100 participants or more, and large samples were strongly associated with low risk of bias. While research-grade devices enable robust investigation of behavioral markers associated with cognitive impairment, their limited scalability and accessibility may constrain broader clinical and public health deployment [].

In contrast, consumer-grade wearable devices were comparatively underrepresented despite their widespread adoption and potential for large-scale, longitudinal monitoring. Evidence from consumer-wearable studies was derived predominantly from cross-sectional designs and exhibited greater heterogeneity in sample size and methodological quality, with smaller studies more frequently assessed as having moderate risk of bias, reflecting restricted access to raw data and reliance on proprietary signal processing [,,]. However, consumer-wearable evidence also included a small number of large cross-sectional studies assessed as having low risk of bias, indicating that methodological rigor is driven more by study scale and design than by device class alone [,,]. Taken together, these findings suggest that different wearable device classes capture complementary aspects of behavior and physiology rather than interchangeable measurements, supporting the potential value of multimodal integration or purpose-driven device selection aligned with specific research or clinical objectives [].

Wearable-Derived Features and Cognitive Outcomes

Across included studies, cognition was frequently assessed using brief global screening instruments or standardized clinical diagnostic classifications, reflecting a pragmatic choice aligned with clinical practice and the feasibility requirements of observational research. Evidence derived from commonly used cognitive measures was drawn primarily from cross-sectional and cohort designs and was frequently assessed as having low risk of bias, particularly in larger cohort studies [,,,]. While global cognitive measures facilitate standardized comparisons across heterogeneous cohorts, their limited sensitivity to subtle or domain-specific changes constrains interpretation of associations with wearable-derived features, especially in preclinical populations [].

Wearable devices were not used to measure cognition directly but to capture continuous behavioral and physiological signals that were statistically associated with cognitive outcomes. Sleep-related and circadian rhythm measures were examined most frequently and were reported across both cross-sectional and longitudinal studies, predominantly in cohort designs with large samples, and were most often assessed as having low risk of bias when derived from validated actigraphy devices and predefined metrics [,,,]. In contrast, physiological signals beyond accelerometry were examined in only a small subset of studies, which used heterogeneous, primarily noncohort designs, often involved small to medium sample sizes, and showed higher proportions of moderate-to-high risk-of-bias assessments [,]. This limits the strength of inferences that can currently be drawn from these measures and highlights priorities for future methodological development.

Analytical Approaches and Methodological Considerations

Conventional statistical methods were the predominant analytical approach, used in 73.5% (36/49) of studies, reflecting their interpretability and familiarity in clinical and epidemiological research. These methods were applied mainly in cross-sectional and longitudinal observational designs and were frequently assessed as having low risk of bias, particularly in large cohort studies using multivariable or survival models [,,,-]. Across these studies, associations between wearable-derived features and cognitive outcomes were generally modest and sensitive to covariate adjustment, especially in large and heterogeneous samples, underscoring the importance of adequate sample size, rigorous model specification, and replication for reliable inference.

Machine learning and deep learning approaches were applied in a smaller subset of studies (13/49, 26.5%) and were used primarily for classification or prediction tasks. These studies were frequently exploratory, relied on small or imbalanced samples, and were more often assessed as having moderate to high risk of bias, largely due to limited external validation and optimistic performance estimates [,,]. Across AI-based studies, higher predictive performance was more commonly reported in cross-sectional or nonlongitudinal designs using constrained datasets, whereas applications in larger or longitudinal cohorts generally yielded more modest performance estimates but were more often assessed as having low or moderate risk of bias, reflecting improved robustness and generalizability [,]. Overall, this pattern suggests a trade-off between performance and methodological robustness, in which performance gains observed in small or exploratory samples may reflect overfitting or cohort-specific structure rather than generalizable predictive signal.
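The overfitting concern described above can be illustrated with a small simulation. The sketch below is not drawn from any included study. It assumes purely synthetic Gaussian noise features that carry no information about the labels, a hypothetical nearest-centroid classifier, and arbitrary sizes (20 training participants, 200 features, 1000 independent test participants). Under these assumptions, accuracy measured on the training sample is typically close to perfect, whereas accuracy on independent data stays near chance.

```python
import random


def centroid(rows):
    """Column-wise mean of a list of equal-length feature vectors."""
    n = len(rows)
    return [sum(col) / n for col in zip(*rows)]


def sq_dist(a, b):
    """Squared Euclidean distance between two feature vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def fit_nearest_centroid(X, y):
    """Return one centroid per class label."""
    return {
        label: centroid([row for row, lab in zip(X, y) if lab == label])
        for label in set(y)
    }


def predict(model, row):
    """Assign the label whose centroid is closest to the row."""
    return min(model, key=lambda label: sq_dist(row, model[label]))


def accuracy(model, X, y):
    return sum(predict(model, row) == lab for row, lab in zip(X, y)) / len(y)


def simulate(n_train=20, n_test=1000, n_features=200, seed=0):
    """Fit on a small noise-only sample, then score on independent noise."""
    rng = random.Random(seed)

    def noise(n):
        # Features are pure noise; labels alternate and are unrelated to them.
        X = [[rng.gauss(0, 1) for _ in range(n_features)] for _ in range(n)]
        y = [i % 2 for i in range(n)]
        return X, y

    X_train, y_train = noise(n_train)
    X_test, y_test = noise(n_test)
    model = fit_nearest_centroid(X_train, y_train)
    return accuracy(model, X_train, y_train), accuracy(model, X_test, y_test)


apparent, independent = simulate()
print(f"apparent accuracy: {apparent:.2f}, independent accuracy: {independent:.2f}")
```

The gap between the two numbers is the optimism that external validation is meant to expose; it arises here even though the features contain no signal at all.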

Implications for Early Detection and Prevention

Evidence supporting early detection or prevention was derived from a limited subset of studies providing direct prevention evidence, which more often used cohort-based or predictive modeling designs, enrolled larger samples, and were predominantly assessed as having low risk of bias. Representative examples include large longitudinal or predictive studies demonstrating that wearable-derived behavioral measures, particularly sleep–wake organization and circadian regularity, can precede clinical cognitive impairment by several years [,,]. These findings support the potential role of wearables in early risk stratification rather than post hoc characterization.

In contrast, most of the included studies contributed indirect prevention evidence and relied primarily on cross-sectional or association-focused designs. Although these studies were frequently assessed as having low to moderate risk of bias and consistently reported associations between wearable-derived markers and cognitive status, their typically limited temporal resolution and lack of predictive validation constrained their ability to establish preventive relevance [,,,]. As a result, their contribution to prevention remains inferential rather than actionable.

Overall, the current evidence supports wearable devices as tools for monitoring and early risk identification rather than as stand-alone preventive interventions []. Across both direct and indirect evidence, disrupted sleep–wake patterns and circadian irregularity consistently emerged as markers of elevated cognitive risk [,,]. Translating these associations into effective prevention strategies will require confirmation in larger, longitudinal, and externally validated studies [] that integrate wearable-based risk stratification with clinical decision-making and behavioral intervention frameworks.

Limitations

Several limitations should be considered when interpreting these findings. The included studies were heterogeneous in design, target populations, device types, and outcome definitions. Devices ranged from consumer-grade wearables to research actigraphy and multimodal physiological instruments, and the metrics derived from them were inconsistently defined. Cognitive outcomes also varied: global screening tools such as the MMSE and MoCA were most frequent, but other studies used different neuropsychological tests, functional measures, or clinical diagnoses. This heterogeneity precluded formal meta-analysis and necessitated a structured narrative synthesis. We did not formally assess the certainty of evidence (eg, using Grading of Recommendations Assessment, Development, and Evaluation), given the methodological heterogeneity of included studies and the predominance of observational designs.

The evidence base is also constrained by small sample sizes and short monitoring periods. In total, 8.2% (4/49) of studies enrolled 30 participants or fewer, while an additional 24.5% (12/49) included between 31 and 100 participants, indicating that nearly one-third of the evidence relies on small cohorts. Furthermore, 67.3% (33/49) of studies monitored participants for only 1 week or less, limiting the ability to capture long-term variability in activity, sleep, or circadian rhythms. Only 18.4% (9/49) of studies systematically assessed real-world feasibility, including adherence, comfort, or long-term usability. Moreover, external validation was performed in just 6.1% (3/49) of studies, restricting the generalizability of reported associations or predictive models beyond the original cohorts.

A key limitation of the current evidence is therefore the risk of overinterpretation. Most studies were cross-sectional or based on small cohorts, and only a few have undergone external validation [,,]. In several studies, high accuracy or AUROC values were reported in the context of small or imbalanced datasets, conditions that increase the risk of overfitting and optimism bias. As a result, these performance estimates may not generalize and should be interpreted cautiously in the absence of external validation or evaluation in independent cohorts. Preventive claims remain speculative until tested in large prospective cohorts or intervention trials. In addition, research-grade devices typically provide access to raw, high-resolution data and validated measurement pipelines, whereas consumer devices often rely on proprietary algorithms and offer limited transparency, which may affect comparability across studies.
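The proportions reported above can be recomputed directly from the stated counts. The following is a minimal sketch that uses only the counts given in the text and a denominator of 49 included studies; the dictionary keys and the `percent` helper are illustrative names, not part of the review's own code.

```python
TOTAL_STUDIES = 49

# Counts as reported in the text; key names are illustrative.
counts = {
    "enrolled_30_or_fewer": 4,
    "enrolled_up_to_100": 12,
    "monitored_1_week_or_less": 33,
    "assessed_feasibility": 9,
    "externally_validated": 3,
}


def percent(count, total=TOTAL_STUDIES):
    """Percentage of included studies, rounded to 1 decimal place."""
    return round(100 * count / total, 1)


for name, count in counts.items():
    print(f"{name}: {count}/{TOTAL_STUDIES} = {percent(count)}%")

# Combined share of studies with 100 participants or fewer.
small_cohorts = counts["enrolled_30_or_fewer"] + counts["enrolled_up_to_100"]
small_cohort_share = percent(small_cohorts)
print(f"small cohorts combined: {small_cohorts}/{TOTAL_STUDIES} = {small_cohort_share}%")
```

Working from counts rather than rounded percentages avoids compounding rounding error when categories are combined.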

Risk of bias was present to varying degrees. Although most studies were rated as low risk (34/49, 69.4%), approximately one-quarter (13/49, 26.5%) were classified as moderate risk, and 4.1% (2/49) as high risk. The high-risk studies exemplify common problems such as selective sampling, inadequate reference standards, and small or restricted populations, all of which likely led to overestimation of diagnostic performance and limited the generalizability of their findings. Publication bias is also possible, as studies reporting positive or novel results are more likely to be published. Together, these issues highlight that the field remains fragmented, with methodological and reporting inconsistencies that restrict the strength and reproducibility of current evidence.

Limitations of the review process itself must also be acknowledged. The restriction to English-language publications and peer-reviewed journals may have introduced language or publication bias, potentially excluding relevant gray literature or local studies. Additionally, while we searched 4 major interdisciplinary databases, the exclusion of specialized clinical indices (eg, PsycINFO or Embase) could have resulted in the omission of some relevant records.

Implications for Future Research and Practice

This review highlights several priorities for future research and practice. A key challenge is bridging the gap between research-grade actigraphy and consumer wearables. While actigraphy remains the most validated tool for assessing sleep, activity, and circadian rhythms, the limited use of consumer devices constrains scalability []. Evidence suggests that some multisensor consumer wearables can achieve accuracy comparable to research-grade actigraphs under controlled conditions [,], but direct comparisons across diverse populations and real-world settings are still needed. Where sufficient accuracy and reliability are demonstrated, consumer wearables could support large-scale, longitudinal monitoring for research and preventive care [].

Another priority is the expanded use of advanced analytics and digital phenotyping. Longitudinal wearable data enable modeling of dynamic behavioral and physiological changes, supporting early and personalized prevention. However, machine learning and deep learning methods remain underused, particularly sequence-based models such as recurrent neural networks, temporal convolutional models, and transformer-based approaches (eg, Informer and TimesFM) that are well suited for time-series health data []. In addition, large language model–based frameworks (eg, HealthLLM) offer opportunities to integrate wearable-derived time series with clinical and contextual information []. Progress in this area will depend on access to larger, well-annotated datasets and multicenter collaboration, as well as the incorporation of explainable AI methods to ensure interpretability and clinical trust [].

Finally, more prevention-oriented research is needed. Only 2 randomized controlled trials tested wearable-assisted interventions, and a small subset of observational studies examined prevention-relevant behaviors. Yet prevention is central to dementia care given the absence of disease-modifying treatments. Wearables could play a dual role, both by detecting early signs of decline and by monitoring modifiable lifestyle factors such as sleep, circadian rhythms, and physical activity [,]. Positioning wearables within preventive frameworks could therefore be among the most clinically meaningful directions for future research.

From a translational perspective, ethical and regulatory considerations will be critical for the clinical adoption of AI-based dementia risk prediction. Issues such as data privacy, transparency of algorithms, potential psychological impact of early risk labeling, and compliance with regulatory frameworks must be carefully addressed. At present, research-grade wearable devices and analytically transparent models appear more suitable for clinical research settings, whereas many consumer-grade devices and advanced AI approaches remain primarily experimental. Further validation, standardization, and regulatory oversight will be necessary before these methods can be routinely integrated into clinical practice.

Overall, this review advances the field by framing the transition from descriptive statistics to a predictive digital phenotyping framework. Distinct from prior reviews limited to established dementia, it synthesizes evidence specifically for the preclinical window, distinguishing findings with direct predictive utility from those offering only indirect correlational insights. It contributes a critical assessment of methodological maturity, identifying the heavy reliance on research-grade devices and the lack of external validation as primary barriers to implementation. Ultimately, these findings suggest that shifting to continuous, passive monitoring offers a scalable method to detect subtle behavioral deviations, creating opportunities for earlier, personalized risk reduction strategies [].

The authors thank the Vicomtech Foundation for supporting this research. The authors declare the use of generative artificial intelligence (GAI) in the research and writing process. According to the GAIDeT taxonomy (2025), the following tasks were delegated to GAI tools under full human supervision: proofreading and editing, reformatting, and quality assessment. The GAI tool used was: ChatGPT-5.1 (OpenAI). Responsibility for the final manuscript lies entirely with the authors. GAI tools are not listed as authors and do not bear responsibility for the final outcomes. Declaration submitted by: “collective responsibility.”

This research was funded by the Vicomtech Foundation. The funder had no role in the study design, data collection, analysis, interpretation, or writing of the manuscript.

No new primary data were generated in this systematic review. Data extracted from the included studies and the curated list of screened articles are available from the corresponding author upon reasonable request. The Python code used to support automated screening and study management is not publicly available, as it was developed for internal use; however, it can be shared upon reasonable request for purposes of methodological transparency.

Conceptualization was carried out by AC. Methodology was developed by AC and MA, and software was prepared by AC. Validation was conducted by CM and AA. Data curation was performed by AC and MA. The original draft of the manuscript was written by AC and MA, and review and editing were undertaken by AC, MA, CM, and AA. Supervision was provided by CM and AA, while project administration was managed by CM. Funding acquisition was secured by CM. All authors have read and agreed to the published version of the manuscript.

Conflicts of interest: none declared.

Edited by S Brini; submitted 22.Oct.2025; peer-reviewed by B Howell, S Ajayi, S Ye; comments to author 27.Nov.2025; revised version received 23.Jan.2026; accepted 26.Jan.2026; published 23.Feb.2026.

©Ander Cejudo, Markel Arrojo, Cristina Martín, Aitor Almeida. Originally published in the Journal of Medical Internet Research (https://www.jmir.org), 23.Feb.2026.

This is an open-access article distributed under the terms of the Creative Commons Attribution License (https://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work, first published in the Journal of Medical Internet Research (ISSN 1438-8871), is properly cited. The complete bibliographic information, a link to the original publication on https://www.jmir.org/, as well as this copyright and license information must be included.