The second is now used in countless scientific definitions, and once we started counting time units smaller than a second, scientists did move to a metric system, breaking it down into milliseconds and microseconds (a thousandth and a millionth of a second, respectively).

In the 20th Century, atomic clocks allowed scientists to redefine the second more precisely, moving from a definition based on the Earth's motion relative to the Sun to a precise value based on the absorption and emission of microwave radiation by caesium-133 atoms. Today, our global network of atomic clocks keeps the time of pretty much every modern clock, and is behind everything from the internet to GPS to super-accurate MRI scans.

Tracing the history of timekeeping, though, reveals that it is actually a human construct, determined by human decisions. Hours, minutes and seconds arrived with us through a series of choices, coincidences and happenstance. But they stayed with us as useful heritage through the centuries, a hangover from ancient times so deeply ingrained that changing the system now would probably just be too much to handle.

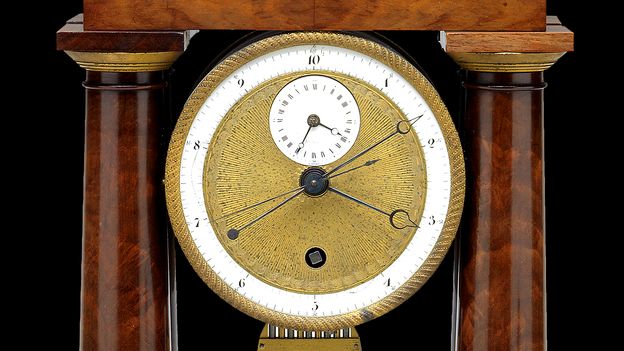

Even during France’s 18th Century attempt to decimalise time, the new system was barely used in practice, while the Republic’s similar efforts to decimalise distance measurements and currency were adopted and remain in use to this day. Decimal time lasted just 17 months, although the Republican calendar stayed in some use for about a decade. “It was tried, but it was unsuccessful, it didn’t take off,” says Burridge.

A 1795 speech by Claude-Antoine Prieur, a member of the French National Convention, may have been what put the final nail in the coffin of decimal time. He argued that, as well as offering hardly anyone a marked advantage, decimal time cast a bad light on the other new metric measurement systems, which, by contrast, he said were useful.