It is a hard thing for a journalist to accept that artificial intelligence (AI) can do a better job of persuading people to change their minds than any journalist can. Hubris is something of a core competency for anyone who thinks others should pay to read their thoughts on subjects about which they are rarely an expert. But some studies suggest that large language models (LLMs) such as Claude or ChatGPT are better than humans at winning arguments.

Humans get emotional. We don’t tailor our arguments to the person we are debating with, and eventually run out of steam. AI doesn’t have these flaws. It just keeps going.

AI models can fine-tune their arguments using information about the gender, age, education, job and politics of the person they are arguing with. One study found that ChatGPT was 64 per cent more persuasive than humans when armed with this sort of information about its interlocutor.

For the moment, commentators can hang on to the lifeline that humans are better and more compelling writers. Our arguments might not be as technically good as those marshalled by AI, but we can sell them better. As a result, we tend to be better at getting people to change their behaviours – if not at changing their minds. You might accept that AI is right, but you are less likely to act on it than you would be after losing an argument to a human.

AI writing remains relatively easy to spot if you know what you are looking for. It tends to be boring. LLMs have no sense of humour, don’t really understand irony and by definition have no personal stories or experiences to embellish their storytelling. Not yet anyway.

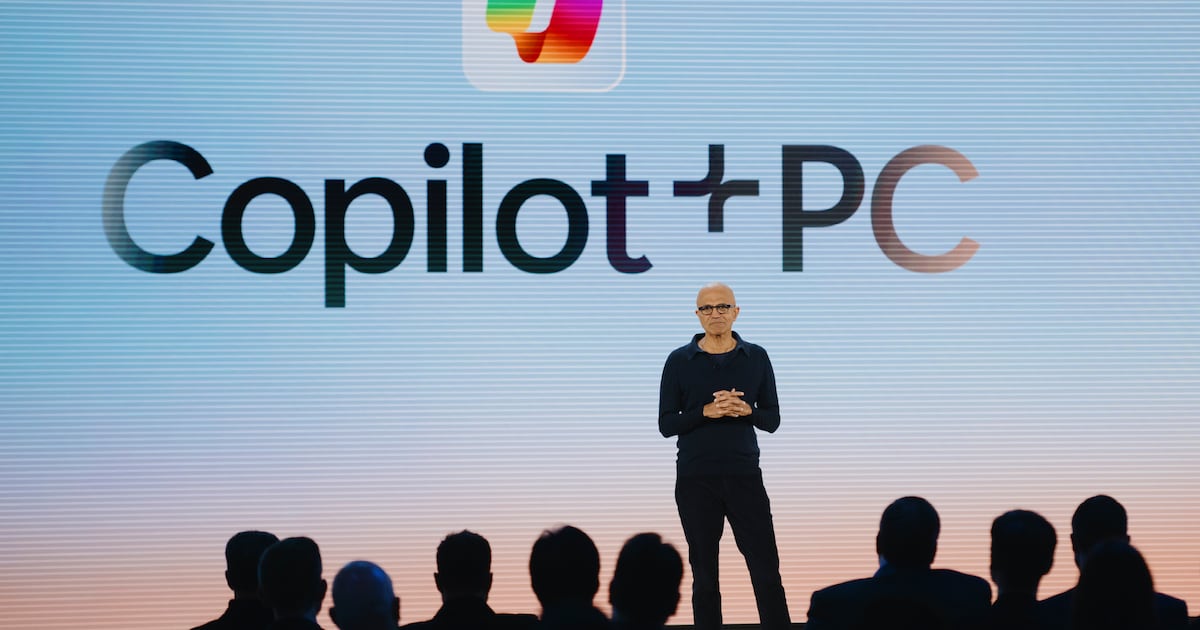

The relentless drive for self-improvement built into the models means, so it is said, that eventually they will write as well as humans. AI – or at least Microsoft Copilot, which uses GPT-5 – predicts that it will be as good as or better than humans when it comes to writing opinion columns in a couple of years. It is confident that it will match the top human writers within 10 years. Some things, like poetry, will always remain the domain of human writing, but opinion columns won’t, or so AI believes.

Some might say the edges are already starting to blur between AI and human-generated copy. Nine per cent of newly published newspaper articles in the US are either partially or fully AI-generated, according to a 2025 study led by the University of Maryland cited by the Wall Street Journal last week.

Part of the issue is that many publications allow – or even encourage – the use of AI for research and this inevitably bleeds into the copy. (The Irish Times, like many news publishers, does not allow journalists to write or edit copy using AI, or to rely on it for research.)

Some media organisations have chosen to throw in the towel and have adopted “hybrid journalism”, often out of economic necessity. The same Wall Street Journal article mentioned Fortune, the US business magazine where one journalist using AI tools wrote more stories in six months than any of his colleagues did in a year. AI-assisted stories account for 20 per cent of web traffic at the magazine.

The direction of travel may seem clear but, humans being human, we are unlikely to go down without a fight. This raises the intriguing, if somewhat dystopian, prospect of humans starting to copy the writing style of AI.

This is almost a given. Writing is derivative and every budding journalist starts out by copying the style of writers they admire. Pushing this argument to its limits, we can arrive at a convergence where good human opinion writing is indistinguishable from AI-generated opinion.

It does raise the intriguing prospect that in a world where AI can write as well as, or better than, many commentators, the true hallmark of “real journalism” may be bad writing. Hope springs eternal.

Until, of course, AI works out how to write badly to convince people it’s human. Time to go and lie down in a darkened room.