Academic writing is the backbone of research, but it’s also a heavy lift.

For many researchers, developing a scientific manuscript means working through dense information, complex ideas, and time-consuming analysis. This is where artificial intelligence (AI) can ease the burden, but as a new UNC study suggests, it’s best to proceed with caution.

Published in Clinical Gastroenterology and Hepatology, the study, titled “Artificial Intelligence Tools for GI Research: A Practical Guide,” describes how UNC School of Medicine Gastroenterology researchers created a practical, step-by-step framework for using AI tools, including ChatGPT, Claude, and Gemini, to help prepare scientific manuscripts. Their findings show that while AI can help streamline many labor-intensive tasks, sound clinical judgment remains firmly in human hands.

“AI is a powerful assistant, but it is not a replacement for the researcher,” said Christina Gainey, MD, an Advanced Endoscopy Fellow in the UNC Department of Gastroenterology and corresponding author of the study, which she co-authored with Hersh Shroff, MD, MPA, and Oren Fix, MD, MSc. “These tools can handle the mechanical work of manuscript preparation — organizing references, writing code, formatting tables — but clinical reasoning, methodological judgment, and scientific integrity remain entirely human responsibilities.”

The Pros and Cons

The study walked through every stage of writing a scientific paper: finding and organizing published research, analyzing data, creating charts and figures, editing drafts, and even building presentations to share the work. For each task, the researchers tested the tools with real GI examples, showing what the tools do well and flagging where they fall short.

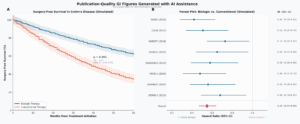

Shown here is an example of the kind of publication-quality data visualization that AI can generate from a single text prompt, with no coding required.

“AI dramatically accelerates tasks that used to take hours: generating statistical code for forest plots or Kaplan-Meier curves, drafting baseline characteristics tables, and organizing literature across dozens of papers,” said Gainey. “These tools lower the technical barrier so researchers can focus on the science rather than the formatting.”

The study also compared individual platforms based on the advantages and associated risks of each.

AI Platform Evaluations:

The study stressed that these tools are not meant to replace a researcher’s existing knowledge of the literature. Rather, they help investigators efficiently locate, organize, synthesize, and verify specific references during manuscript preparation.

“If you are writing a paper on biologics for refractory ulcerative colitis, for example, these tools can help you quickly confirm a citation, discover a study your keyword search missed, or check whether a finding has been replicated — tasks that complement rather than substitute for deep familiarity with the field,” said Gainey.

Key Risks to Watch

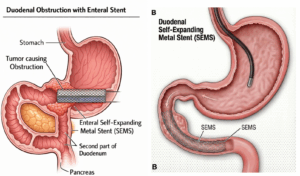

One notable concern is that while AI image tools like DALL‑E, Gemini, and Midjourney can make eye‑catching drawings, they can’t be trusted for medical images. Research across many medical fields shows that these tools often get important details wrong, making their medical illustrations inaccurate.

For example, below are two illustrations of a duodenal stent procedure, in which a metal stent is placed through a blockage in the small intestine caused by cancer to restore the flow of food. In the AI-generated version (left), the stent appears as a rigid, rectangular block that is incorrectly positioned relative to the surrounding anatomy. The physician-corrected version (right) shows the stent as it truly appears: a tapered, flexible wire mesh that conforms to the curved shape of the intestine, deployed through a scope guided into position.

“AI-generated medical illustrations remain anatomically unreliable — models produce ‘hallucinated’ anatomy like incorrectly positioned stents or impossible surgical configurations,” said Gainey. “There’s also an equity concern: many of the best tools require paid subscriptions, which creates a real divide for researchers at community hospitals or in lower-resource settings.”

Another significant risk is that general-purpose AI models can fabricate references. Gainey said an AI-produced reference can look completely legitimate, with a correct journal name, a plausible author list, and a realistic title, and still not exist. She cautioned that every reference must be independently verified.

“Beyond that, any patient data uploaded to these tools must be fully de-identified per HIPAA standards, and researchers should check with their IRB and compliance office before using cloud-based AI platforms with clinical data,” said Gainey. “Finally, most major journals now require disclosure of AI use — transparency isn’t optional.”

Use with Discretion

AI isn’t just changing how researchers write papers; it’s speeding up how quickly new medical insights reach the people who need them. By cutting down the time scientists spend sorting through data, formatting tables, or polishing language, AI helps move discoveries out of the research phase and into the hands of clinicians faster.

“When researchers can prepare and publish their findings more efficiently, the science reaches the medical community faster. If these tools help more clinicians share their work, the evidence base grows, and that ultimately benefits the patients we treat,” said Gainey.

Media Contact: Brittany Phillips, Communications Specialist, UNC Health | UNC School of Medicine