Researchers at Harvard, publishing in the journal Nature on Monday, have demonstrated a new quantum system that can detect and correct errors below a critical threshold.

The breakthrough offers a potential path to workable quantum error correction, the lack of which has long been considered the single greatest obstacle to building practical, large-scale quantum computers.

The central challenge has been that “qubits”—the basic units of quantum information—are inherently fragile.

Unlike the stable “bits” (ones and zeros) in conventional computers, qubits are encoded in delicate physical systems, such as individual atoms, that readily slip out of their quantum states and lose their encoded information because of environmental noise.

This fragility has been a formidable roadblock because the very properties that make quantum computers so powerful also make them prone to errors. These machines promise to leverage quantum phenomena, such as entanglement, to achieve processing power that grows exponentially with the number of qubits.

“In theory, a system of 300 quantum bits can store more information than the number of particles in the known universe,” said the researchers in a press release.
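The arithmetic behind that claim can be checked roughly. Describing a general state of n qubits takes 2^n complex amplitudes, so the sketch below compares 2^300 with 10^80, a commonly cited rough estimate of the number of particles in the observable universe. That estimate, and the names `amplitude_count` and `PARTICLES_IN_UNIVERSE`, are assumptions made for illustration and do not come from the paper or the press release.

```python
# Number of complex amplitudes needed to describe a general n-qubit state.
def amplitude_count(n_qubits: int) -> int:
    return 2 ** n_qubits

# Assumption: roughly 10^80 particles in the observable universe
# (a common order-of-magnitude estimate, not a figure from the paper).
PARTICLES_IN_UNIVERSE = 10 ** 80

amplitudes_300 = amplitude_count(300)
exceeds_universe = amplitudes_300 > PARTICLES_IN_UNIVERSE
digits_300 = len(str(amplitudes_300))

print(f"2^300 has {digits_300} decimal digits")
print(f"Exceeds 10^80: {exceeds_universe}")
```

Python integers have arbitrary precision, so the comparison is exact rather than a floating-point approximation.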

A fault-tolerant system

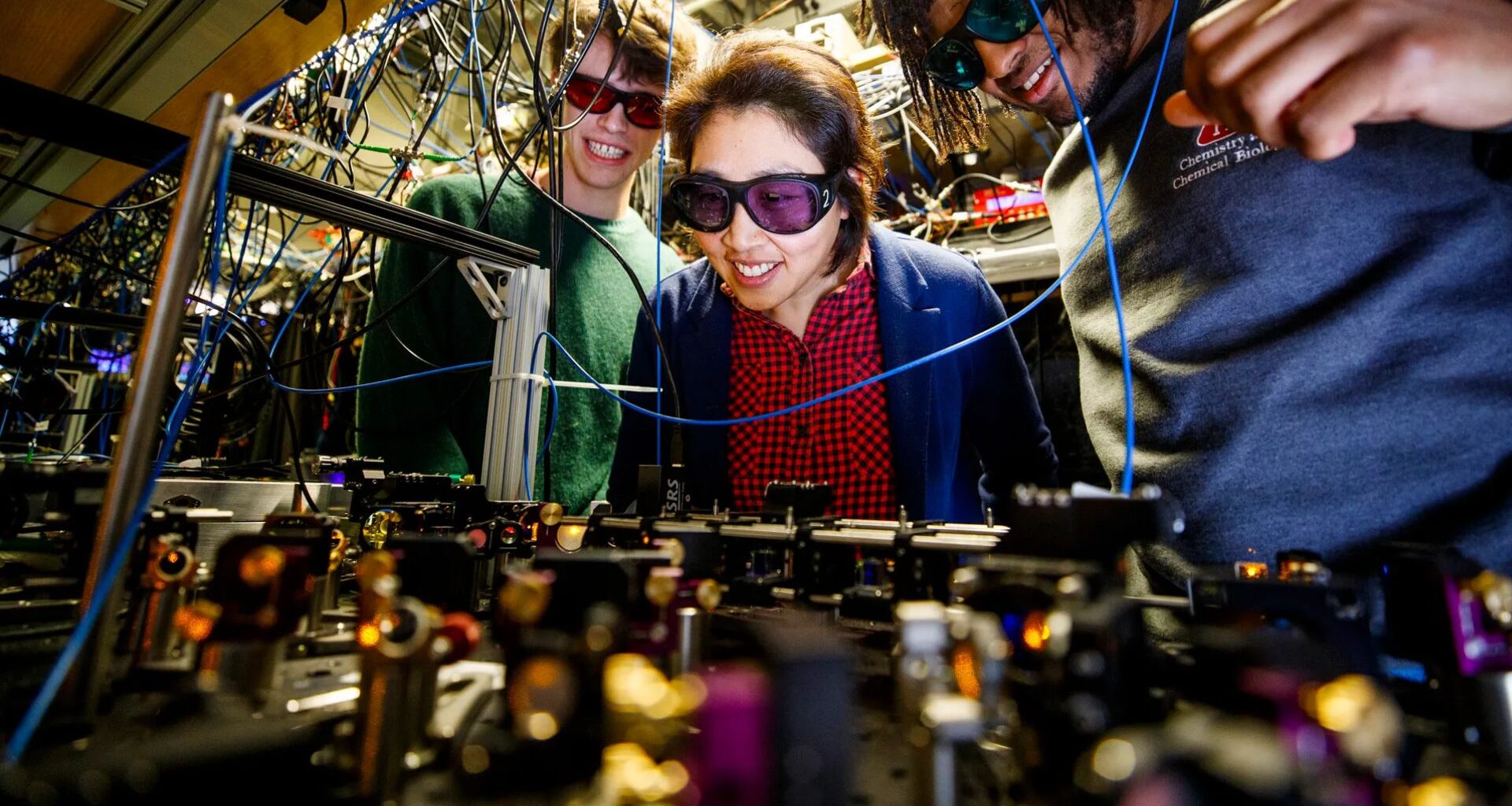

To overcome the error hurdle, the Harvard-led team built a “fault-tolerant” system using 448 atomic qubits. The system, built from neutral atoms of the element rubidium, employs an intricate sequence of techniques, including physical and logical entanglement, logical magic, and even “quantum teleportation,” the transfer of a quantum state from one particle to another without physical contact.

This new architecture is the first to suppress errors below the critical threshold, the point past which adding more qubits to the system further reduces errors rather than increasing them. Crossing that threshold is a requirement for scaling up the technology.
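The idea of a threshold can be illustrated with a deliberately simple classical toy model, which is not the scheme used in the paper. Suppose one bit is stored as n redundant copies, each copy flips independently with probability p, and the value is recovered by majority vote. For this toy, the threshold sits at p = 0.5: below it, adding copies drives the error rate down, and above it, adding copies makes things worse. The function name `logical_error_rate` and the sample values of p are assumptions chosen for illustration.

```python
from math import comb

def logical_error_rate(p: float, n: int) -> float:
    """Probability that a majority vote over n copies gives the wrong answer,
    when each copy flips independently with probability p (n must be odd)."""
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(n // 2 + 1, n + 1)
    )

sizes = [1, 3, 5, 7, 9]

# Below the toy threshold (p < 0.5): more copies means fewer errors.
below = [logical_error_rate(0.1, n) for n in sizes]

# Above the toy threshold (p > 0.5): more copies means more errors.
above = [logical_error_rate(0.6, n) for n in sizes]

for n, lo, hi in zip(sizes, below, above):
    print(f"n={n}: p=0.1 -> {lo:.6f}   p=0.6 -> {hi:.6f}")
```

Real quantum codes must also handle phase errors and faulty measurements, so their thresholds are far lower than this toy's 0.5, but the qualitative behavior on either side of the threshold is the same.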

“For the first time, we combined all essential elements for a scalable, error-corrected quantum computation in an integrated architecture,” said Mikhail Lukin, co-director of the Quantum Science and Engineering Initiative at Harvard and the senior author of the paper.

Dolev Bluvstein, the paper’s lead author and now an assistant professor at Caltech, emphasized the system’s scalability.

“There are still a lot of technical challenges remaining to get to a very large-scale computer with millions of qubits, but this is the first time we have an architecture that is conceptually scalable,” stated Bluvstein.

“It’s becoming clear that we can build fault-tolerant quantum computers.”

A series of advances

The research was a collaboration between Harvard and MIT, jointly headed by Lukin, Markus Greiner of Harvard, and Vladan Vuletić of MIT. The team also collaborates with researchers at QuEra Computing, the Joint Quantum Institute at the University of Maryland, and the National Institute of Standards and Technology.

This success is the latest in a series of advances from the group. In September, they published another Nature paper demonstrating a system with over 3,000 qubits that could operate for more than two hours, overcoming a different technical hurdle related to atom loss.

Lukin believes the long-held goal of functional quantum computing is finally becoming a reality.

“This big dream that many of us had for several decades, for the first time, is really in direct sight,” he said.