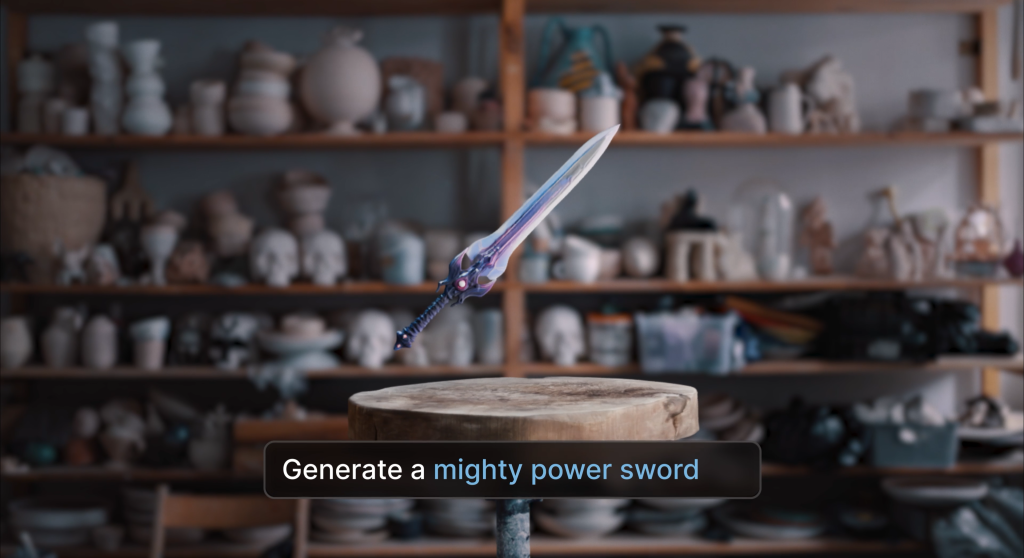

Autodesk has launched Wonder 3D, a generative AI model within its cloud-based Autodesk Flow Studio platform that allows creators to generate fully editable 3D assets from text prompts or images. Announced on March 4, 2026, the tool enables users to produce 3D characters, objects, and concept visuals using text-to-3D, image-to-3D, and text-to-image workflows. Wonder 3D is available under the Wonder Tools suite for all Autodesk Flow Studio subscription tiers.

Autodesk Flow Studio, formerly known as Wonder Studio, is an AI-powered platform designed to automate complex visual effects workflows such as motion capture, camera tracking, and character animation. The new Wonder 3D model extends these capabilities by allowing users to generate 3D geometry and textures directly from prompts or reference images, helping creators move from early concepts to editable assets more quickly.

According to Autodesk, the system simplifies traditional 3D content creation workflows, which often require extensive manual modeling and technical expertise. By automating parts of the asset creation process, it aims to reduce production bottlenecks and speed up design iteration during development.

“Creating 3D assets, whether characters or props, has traditionally required serious technical expertise and significant manual effort,” said Nikola Todorovic, co-founder of Wonder Dynamics, an Autodesk company. “We created Wonder 3D to help remove these pain points and help creators of all skill levels generate high-quality 3D assets quickly and iterate freely, without slowing down production.”

AI-assisted workflows for 3D asset generation

Wonder 3D supports several AI-assisted workflows intended to accelerate early-stage asset development. Users can generate characters, creatures, or props from written prompts. Generated models include geometry and textures that can be refined and reused in other projects. The image-to-3D capability can convert sketches, concept art, or reference images into textured 3D models, allowing creators to adjust form, materials, and structural details during the design process. A text-to-image feature is also included to generate concept visuals that can later be translated into 3D assets.

The tool also supports .OBJ exports, enabling generated models to be used outside Autodesk Flow Studio. This allows Wonder 3D models to be integrated into external pipelines for applications such as game development, virtual production, prototyping, and 3D printing.
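As an illustration of what using an exported file in an external pipeline can involve, the following is a minimal Python sketch that reads the vertex and face records of a Wavefront .OBJ file and reports its bounding box. It is not Autodesk code. The sample data, the `ObjMesh` class, and the `parse_obj` and `bounding_box` helpers are hypothetical, and the sketch assumes nothing about what a Wonder 3D export contains beyond standard `v` and `f` records.

```python
from dataclasses import dataclass, field


@dataclass
class ObjMesh:
    vertices: list = field(default_factory=list)  # (x, y, z) tuples
    faces: list = field(default_factory=list)     # tuples of 0-based vertex indices


def parse_obj(text: str) -> ObjMesh:
    """Parse only the `v` (vertex) and `f` (face) records of OBJ text."""
    mesh = ObjMesh()
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        tag, *values = line.split()
        if tag == "v":
            x, y, z = (float(c) for c in values[:3])
            mesh.vertices.append((x, y, z))
        elif tag == "f":
            face = []
            for token in values:
                # A face token looks like "v", "v/vt", "v//vn" or "v/vt/vn";
                # only the vertex index is used here.
                idx = int(token.split("/")[0])
                # OBJ indices are 1-based; negative values count back
                # from the most recently defined vertex.
                face.append(idx - 1 if idx > 0 else len(mesh.vertices) + idx)
            mesh.faces.append(tuple(face))
        # Other records (vt, vn, usemtl, ...) are ignored in this sketch.
    return mesh


def bounding_box(mesh: ObjMesh):
    """Return ((min_x, min_y, min_z), (max_x, max_y, max_z))."""
    xs, ys, zs = zip(*mesh.vertices)
    return (min(xs), min(ys), min(zs)), (max(xs), max(ys), max(zs))


# Hypothetical stand-in for an exported file, not real Wonder 3D output.
SAMPLE_OBJ = """
# two triangles
v 0.0 0.0 0.0
v 1.0 0.0 0.0
v 1.0 2.0 0.0
v 0.0 2.0 0.5
vt 0 0
f 1/1 2/1 3/1
f 1 3 -1
"""

mesh = parse_obj(SAMPLE_OBJ)
print(len(mesh.vertices), "vertices,", len(mesh.faces), "faces")
print("bounding box:", bounding_box(mesh))
```

A check like this can be useful before 3D printing or importing into a game engine, since the bounding box shows the model's extent in the file's units, which the OBJ format itself does not specify.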

The system is intended to support a broad range of creators and production environments. Gaming studios can use the tool to generate characters and props for prototyping, while virtual production and extended reality teams may use it to populate scenes with assets during early development. Independent developers and smaller studios may also benefit from generating game-ready assets without large art teams.

Beyond professional production environments, Wonder 3D is positioned as an entry point for creators working across digital and physical media. The company says content creators can incorporate 3D elements into video and advertising projects, while hobbyists and makers can generate models that can later be manufactured as physical objects through processes such as 3D printing.

By combining generative AI models with editable 3D workflows, Autodesk aims to reduce the time required to move from concept development to production-ready models while expanding access to 3D asset creation.

Introducing Wonder 3D | Text and Image to 3D in Autodesk Flow Studio. Video via Autodesk.

AI tools for generating 3D models from prompts and images continue to emerge

AI systems capable of generating 3D models from prompts or images have begun to emerge across design and fabrication workflows. In 2024, Bambu Lab introduced an AI model generator integrated with its MakerWorld platform, allowing users to create 3D printable character models from text prompts or reference images. Similarly, Womp launched an AI platform for accessible 3D model creation that enables users without traditional 3D modeling experience to generate printable objects through simplified text-based inputs, aiming to lower the barrier to entry for digital design.

Research organizations and technology companies are also exploring methods for converting visual inputs into editable 3D geometry. NVIDIA recently introduced an AI system that converts 2D images into editable 3D models, enabling new workflows for turning visual data into usable geometry. These developments reflect a broader push toward AI-assisted 3D creation tools that reduce the time and expertise traditionally required to produce digital models.


Feature image shows a sword model generated by Wonder 3D. Image via Autodesk.