Over the past year, I have had conversations with security leaders across a variety of disciplines, and the energy around AI is undeniable. Organizations are moving fast, and security teams are rising to meet the moment. Time and again, those conversations come back to the same question: “We’re adopting AI fast. How do we make sure our security keeps pace?”

It’s the right question, and it’s the one we’ve been working to answer by updating the tools and guidance you already rely on. We’re announcing Microsoft’s approach to Zero Trust for AI (ZT4AI). Zero Trust for AI extends proven Zero Trust principles to the full AI lifecycle—from data ingestion and model training to deployment and agent behavior. Today, we’re releasing a new set of tools and guidance to help you move forward with confidence:

A new AI pillar in the Zero Trust Workshop.

Updated Data and Networking pillars in the Zero Trust Assessment tool.

A new Zero Trust reference architecture for AI.

Practical patterns and practices for securing AI at scale.

Here’s what’s new and how to use it.

Why Zero Trust principles must extend to AI

AI systems don’t fit neatly into traditional security models. They introduce new trust boundaries—between users and agents, models and data, and humans and automated decision-making. As organizations adopt autonomous and semi-autonomous AI agents, a new class of risk emerges: agents that are overprivileged, manipulated, or misaligned can act like “double agents,” working against the very outcomes they were built to support.

Microsoft’s approach applies the three foundational principles of Zero Trust to AI:

Verify explicitly—Continuously evaluate the identity and behavior of AI agents, workloads, and users.

Apply least privilege—Restrict access to models, prompts, plugins, and data sources to only what’s needed.

Assume breach—Design AI systems to be resilient to prompt injection, data poisoning, and lateral movement.

These aren’t new principles. What’s new is how we apply them systematically to AI environments.
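The three principles above can be sketched as a deny-by-default check on an AI agent’s tool calls. This is a minimal illustration under stated assumptions, not Microsoft’s implementation or any Microsoft API: the `AgentRequest` type, the `ALLOWED_TOOLS` policy table, the agent, tool, and scope names, and the in-memory `AUDIT_LOG` are all hypothetical. Each call is re-verified (verify explicitly), must match an explicit tool-and-scope grant (least privilege), and is recorded whether allowed or denied (assume breach).

```python
from dataclasses import dataclass
from typing import Optional
import time

# Hypothetical least-privilege policy: agent identity -> tool -> data scopes it may touch.
# Anything not listed here is denied by default.
ALLOWED_TOOLS: dict[str, dict[str, set[str]]] = {
    "invoice-agent": {
        "read_invoice": {"finance/invoices"},
        "summarize": {"finance/invoices"},
    },
}

# Assume breach: keep a record of every decision for monitoring and incident response.
AUDIT_LOG: list[dict] = []


@dataclass(frozen=True)
class AgentRequest:
    agent_id: str        # identity of the calling agent (assumed authenticated upstream)
    tool: str            # tool or plugin the agent wants to invoke
    data_scope: str      # data source the call would touch
    token_expiry: float  # Unix time at which the agent's credential expires
    risk_flags: frozenset = frozenset()  # behavior signals, e.g. from runtime monitoring


def _decide(req: AgentRequest, now: float) -> tuple[bool, str]:
    # Verify explicitly: re-check credential freshness and behavior on every call.
    if req.token_expiry <= now:
        return False, "credential expired"
    if req.risk_flags:
        return False, "risk signals present: " + ", ".join(sorted(req.risk_flags))
    # Apply least privilege: both the tool and the data scope must be explicitly granted.
    scopes = ALLOWED_TOOLS.get(req.agent_id, {}).get(req.tool)
    if scopes is None:
        return False, f"tool '{req.tool}' not granted to '{req.agent_id}'"
    if req.data_scope not in scopes:
        return False, f"scope '{req.data_scope}' not granted for '{req.tool}'"
    return True, "allowed"


def authorize(req: AgentRequest, now: Optional[float] = None) -> tuple[bool, str]:
    """Evaluate one tool call; nothing is trusted by default."""
    now = time.time() if now is None else now
    allowed, reason = _decide(req, now)
    # Log allowed and denied calls alike, so a compromised agent leaves a trail.
    AUDIT_LOG.append({"agent": req.agent_id, "tool": req.tool,
                      "scope": req.data_scope, "allowed": allowed, "reason": reason})
    return allowed, reason
```

For example, `invoice-agent` calling `read_invoice` on `finance/invoices` with an unexpired credential is allowed, while the same agent reaching for `hr/salaries`, or calling an ungranted tool such as `send_email`, is denied and still logged. A real deployment would source identities and policy from an identity provider and a policy engine rather than an in-memory dictionary.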

A unified journey: Strategy → assessment → implementation

The most common challenge we hear from security leaders and practitioners is the lack of a clear, structured path from knowing what to do to actually doing it. That is the gap Microsoft’s approach to Zero Trust for AI is designed to close: helping you move quickly to concrete next steps and actions.

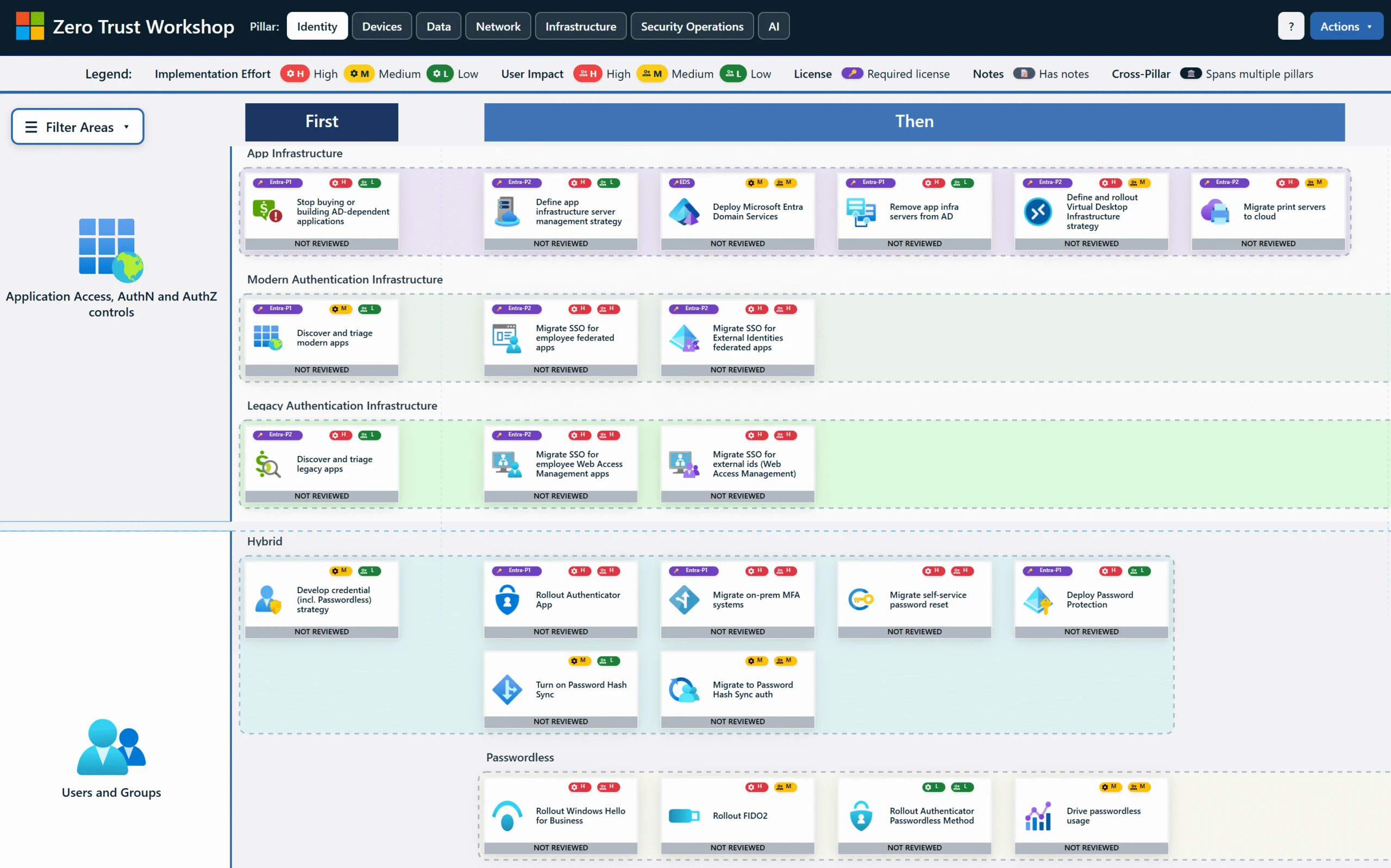

Zero Trust Workshop—now with an AI pillar

Building on last year’s announcement, the Zero Trust Workshop has been updated with a dedicated AI pillar, now covering 700 security controls across 116 logical groups and 33 functional swim lanes. It is scenario-based and prescriptive, designed to move teams from assessment to execution with clarity and speed.

The workshop helps organizations:

Align security, IT, and business stakeholders on shared outcomes.

Apply Zero Trust principles across all pillars, including AI.

Explore real-world AI scenarios and the specific risks they introduce.

Identify cross-product integrations that break down silos and drive measurable progress.

The new AI pillar specifically evaluates how organizations secure AI access and agent identities, protect sensitive data used by and generated through AI, monitor AI usage and behavior across the enterprise, and govern AI responsibly in alignment with risk and compliance objectives.

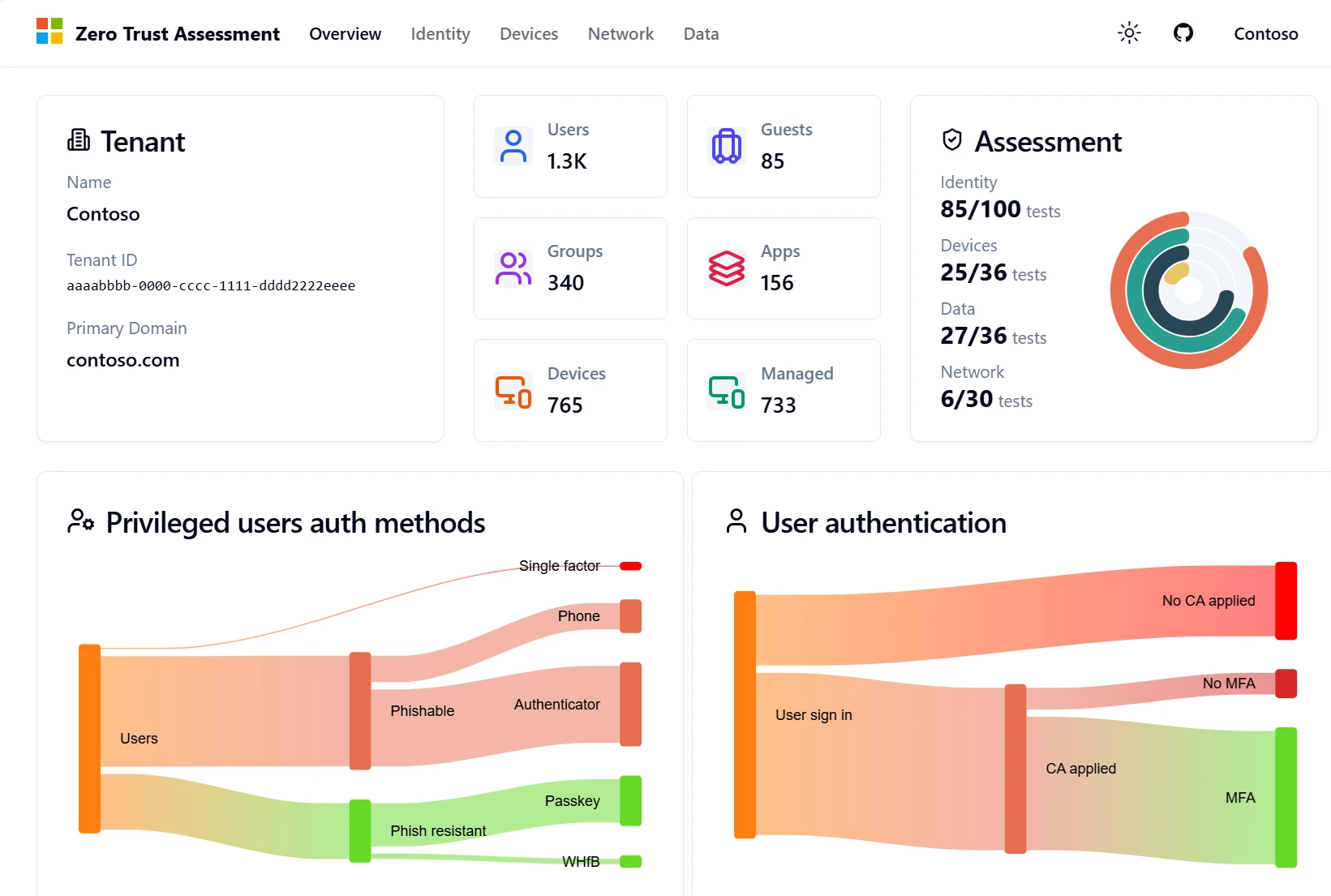

Zero Trust Assessment—expanded to Data and Networking

As AI agents become more capable, the stakes around data and network security have never been higher. Agents that are insufficiently governed can expose sensitive data, act on malicious prompts, or leak information in ways that are difficult to detect and costly to remediate. Data classification, labeling, governance, and loss prevention are essential controls. So are network-layer defenses that inspect agent behavior, block prompt injections, and prevent unauthorized data exposure.

Yet manually evaluating security configurations across identity, endpoints, data, and network controls is time-consuming and error-prone. That is why we built the Zero Trust Assessment to automate it. The Zero Trust Assessment evaluates hundreds of controls aligned to Zero Trust principles, informed by learnings from Microsoft’s Secure Future Initiative (SFI). Today, we are adding Data and Networking as new pillars alongside the existing Identity and Devices coverage.

Zero Trust Assessment tests are derived from trusted sources, including:

Industry standards and guidance from organizations such as the National Institute of Standards and Technology (NIST), the Cybersecurity and Infrastructure Security Agency (CISA), and the Center for Internet Security (CIS).

Microsoft’s own learnings from SFI.

Real-world customer insights from thousands of security implementations.

And we are not stopping here. An AI pillar for the Zero Trust Assessment is currently in development and will be available in summer 2026, extending automated evaluation to AI-specific scenarios and controls.

Overall, the redesigned experience delivers:

Clearer insights—Simplified views that help teams quickly identify strengths, gaps, and next steps.

Deep(er) alignment with the Workshop—Assessment insights directly inform workshop discussions, exercises, and deployment paths.

Actionable, prioritized recommendations—Concrete implementation steps mapped to maturity levels, so you can sequence improvements over time.

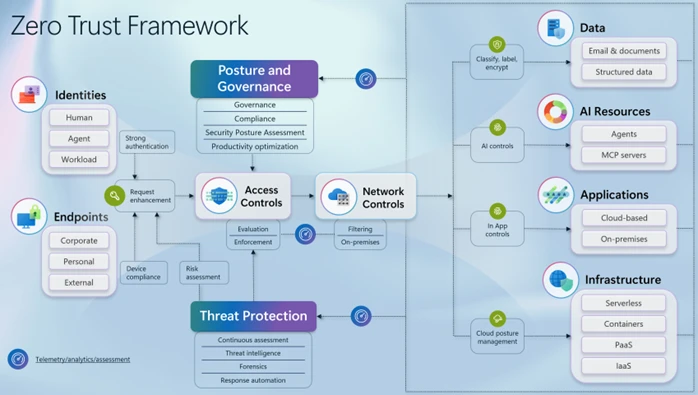

Zero Trust for AI reference architecture

Our new Zero Trust for AI reference architecture, which extends our existing Zero Trust reference architecture, shows how policy-driven access controls, continuous verification, monitoring, and governance work together to secure AI systems and to increase resilience when incidents occur.

The architecture gives security, IT, and engineering teams a shared mental model by clarifying where controls apply, how trust boundaries shift with AI, and why defense-in-depth remains essential for agentic workloads.

Practical patterns and practices for AI security

Knowing what to do is one thing. Knowing how to operationalize it at scale is another. Our patterns and practices provide repeatable, proven approaches to the most complex AI security challenges, much like software design patterns offer reusable solutions to common engineering problems.

| Pattern | What it helps you do |
|---|---|
| Threat modeling for AI | Why traditional threat modeling breaks down for AI, and how to redesign it for real-world risk at AI scale. |
| AI observability | End-to-end logging, traceability, and monitoring to enable oversight, incident response, and trust at scale. |
| Securing agentic systems | Actionable guidance on agent lifecycle management, identity and access controls, policy enforcement, and operational guardrails. |
| Principles of robust safety engineering | Core safety engineering principles and how to apply them when designing and operating real-world AI systems. |
| Defense-in-depth for indirect prompt injection (XPIA) | How indirect prompt injection works, why traditional mitigations fail, and how a defense-in-depth approach (spanning input handling, tool isolation, identity, memory controls, and runtime monitoring) can meaningfully reduce risk. |
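The defense-in-depth pattern for indirect prompt injection names tool isolation as one of its layers. As a hedged illustration of that single layer, the sketch below tracks whether untrusted content (for example, a fetched web page or an inbound email) has entered an agent’s context and, once it has, withholds high-impact tools unless a human approves. It is a simplified assumption, not Microsoft’s published pattern: the `AgentContext` type, the `HIGH_IMPACT_TOOLS` set, the tool names, and the `approve` callback are hypothetical, and it is meant to complement, not replace, input handling, identity, memory controls, and runtime monitoring.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical set of tools whose misuse would cause real-world side effects.
HIGH_IMPACT_TOOLS = frozenset({"send_email", "transfer_funds", "delete_file"})


@dataclass
class AgentContext:
    messages: list[tuple[str, str]] = field(default_factory=list)  # (source, text)
    tainted: bool = False  # becomes True once any untrusted content is added

    def add_trusted(self, text: str) -> None:
        """Instructions from the user or the system prompt."""
        self.messages.append(("trusted", text))

    def add_untrusted(self, text: str) -> None:
        """External data (web pages, emails, documents) that may carry injected instructions."""
        self.messages.append(("untrusted", text))
        self.tainted = True


def gate_tool_call(ctx: AgentContext, tool: str, approve: Callable[[str], bool]) -> bool:
    """Allow low-impact tools freely. Once the context is tainted, require human
    approval for high-impact tools, so injected text cannot trigger them on its own."""
    if tool not in HIGH_IMPACT_TOOLS or not ctx.tainted:
        return True
    return approve(tool)
```

The gate deliberately does not try to detect injected instructions, which is hard to do reliably; instead it limits what a context containing untrusted data is able to trigger, which is the "assume breach" stance applied at the tool boundary.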

See it live at RSAC 2026

If you’re attending the RSAC™ 2026 Conference, join us for the sessions below, three of which focus on Zero Trust for AI, covering everything from expanding attack surfaces to hands-on, actionable guidance.

| When | Session | Title |
|---|---|---|
| Monday, March 23, 2026, 1:00 PM to 2:00 PM PT | RSA Partner Roundtable, by Lorena Mora (Senior Product Manager, CxE), Charis Babokov (Senior Product Marketing Manager, Microsoft Intune), and Jodi Dyer (Senior Product Marketing Manager, Microsoft Intune) | Zero Trust Workshop: Devices Pillar |
| Wednesday, March 25, 2026, 11:00 AM to 11:20 AM PT | Zero Trust Theatre Session, by Tarek Dawoud (Principal Group Product Manager, Microsoft Security) and Hammad Rajjoub (Director, Microsoft Secure Future Initiative and Zero Trust) | Zero Trust for AI: Securing the Expanding Attack Surface |
| Wednesday, March 25, 2026, 12:00 PM to 1:00 PM PT | Ancillary Executive Session, by Travis Gross (Principal Group Product Manager, Microsoft Security), Eric Sachs (Corporate Vice President, Microsoft Security), and Marco Pietro (Executive Vice President, Global Head of Cybersecurity, Capgemini), moderated by Mia Reyes (Director of Security, Microsoft) | Building Trust for a Secure Future: From Zero Trust to AI Confidence |
| Thursday, March 26, 2026, 11:00 AM to 12:00 PM PT | RSAC Post-Day Workshop, by Travis Gross, Tarek Dawoud, and Hammad Rajjoub | Zero Trust, SFI, and ZT4AI: Practical, actionable guidance for CISOs |

Get started with Zero Trust for AI

Zero Trust for AI brings proven security principles to the realities of modern AI. Whether you’re governing agents, protecting models and data, or scaling AI without introducing new risk, the tools, architecture, and guidance are ready for you today.

Get started:

To continue the conversation, join the Microsoft Security Community, where security practitioners and Microsoft experts share insights, guidance, and real-world experiences across Zero Trust and AI security.

Learn more about Microsoft Security solutions on our website and bookmark the Microsoft Security blog for expert insights on security matters. Follow us on LinkedIn (Microsoft Security) and X (@MSFTSecurity) for the latest cybersecurity news and updates.