A Singapore-headquartered robotics firm claims a breakthrough is bringing machines closer to human-level dexterity.

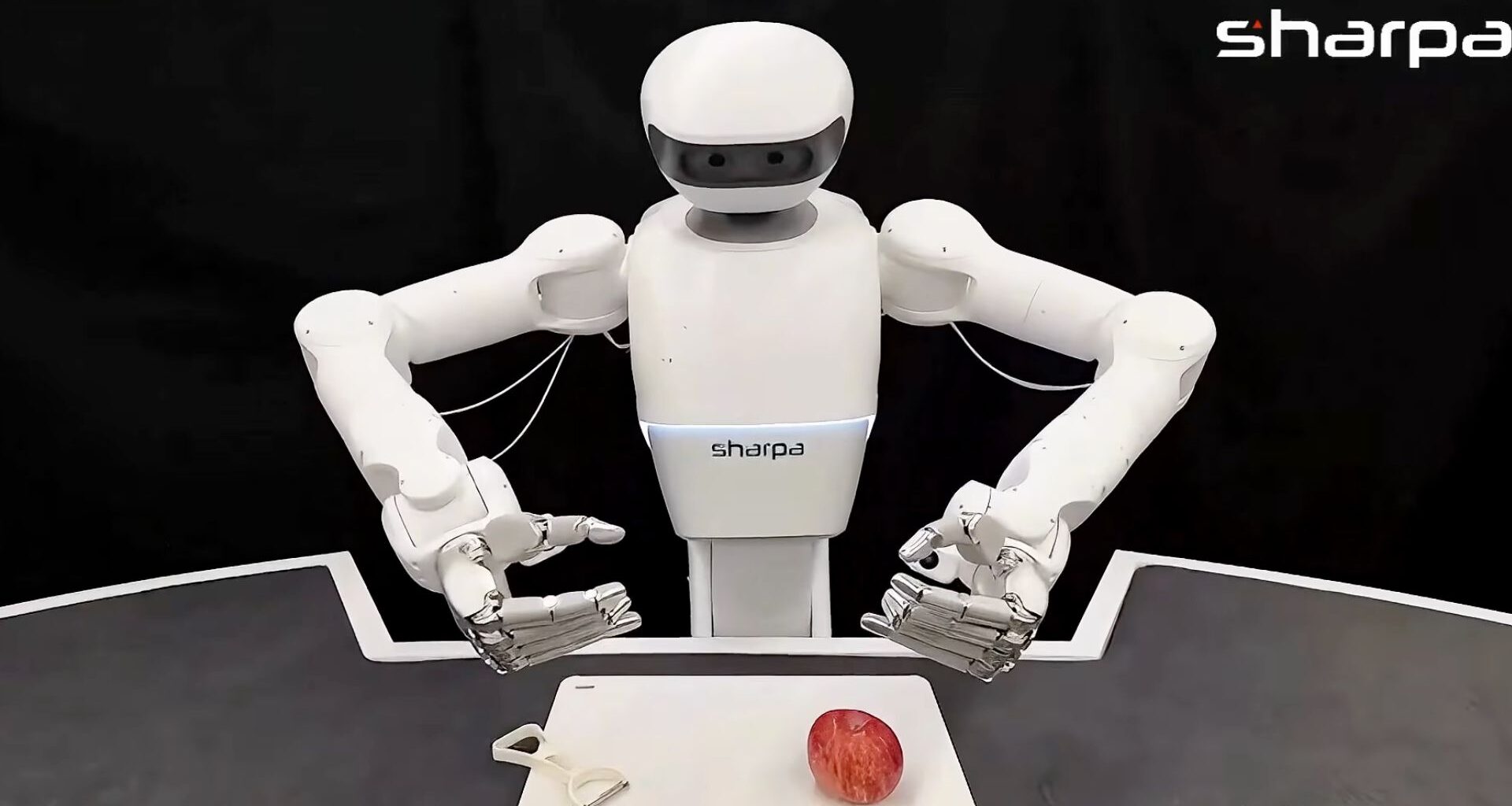

Sharpa has unveiled a robot capable of autonomously peeling an apple using dual, human-like hands—an achievement that tackles one of the field’s toughest challenges: precise, contact-rich manipulation.

Powered by a new system called MoDE-VLA (Mixture of Dexterous Experts), the robot combines vision, language, touch, and force sensing to execute complex movements.

Instead of micromanaging every finger, human operators provide high-level input, such as broad arm motions, while the AI handles fine finger coordination. According to the firm, the hybrid approach could mark a major step toward robots safely performing delicate, real-world tasks in homes and workplaces.

In December, Sharpa moved its flagship dexterous hand, SharpaWave, into mass production. The hand offers 22 active degrees of freedom, and the company claims it delivers near-human levels of manipulation and control.

Robots gain dexterity

Robotic manipulation has advanced rapidly with Vision-Language-Action (VLA) models, enabling machines to handle everyday tasks like sorting laundry or picking up objects. Yet these systems have largely been limited to simple pick-and-place actions using basic grippers.

Human-like tasks such as peeling an apple remain difficult for robots, requiring precise coordination, force control, and constant in-hand adjustments. The company notes that this task involves handling 63 degrees of freedom, switching between multiple skills, and integrating diverse sensory inputs—challenges that traditional models still struggle to manage reliably.

New research by Sharpa introduces a novel solution. The approach combines a shared-autonomy “Copilot” that assists with intricate finger movements and a Mixture-of-Experts architecture that fuses vision, touch, and force data effectively. This enables robots to perform complex, bimanual tasks with greater stability.

To overcome key limitations in dexterous robotics, researchers introduced a two-part framework: IMCopilot and MoDE-VLA. IMCopilot (In-hand Manipulation Copilot) is a set of reinforcement learning–trained micro-skills designed to simplify complex finger movements. During data collection, it enables shared autonomy—humans control broad arm motions while IMCopilot handles delicate in-hand tasks like rotating the apple. During execution, it acts as a callable low-level skill for the robot whenever fine manipulation is required.
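The division of labor described above can be illustrated with a short Python sketch. This is not Sharpa's implementation: the names `Command`, `rotate_skill`, and `shared_autonomy_step` are hypothetical, and the "micro-skill" is a fixed-step stand-in for what the article describes as a reinforcement learning-trained policy. It only shows the idea that the operator's arm command passes through unchanged while the copilot, when active, takes over the finger targets.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence


@dataclass
class Command:
    """One control step: arm targets plus finger targets (hypothetical layout)."""
    arm: List[float]
    fingers: List[float]


def rotate_skill(finger_state: Sequence[float]) -> List[float]:
    """Stand-in for a learned in-hand rotation micro-skill.

    Assumption: a real skill would be a trained policy; here each
    finger joint is simply nudged by a fixed step.
    """
    return [q + 0.05 for q in finger_state]


def shared_autonomy_step(
    operator_arm: Sequence[float],
    operator_fingers: Sequence[float],
    finger_state: Sequence[float],
    copilot_active: bool,
    skill: Callable[[Sequence[float]], List[float]],
) -> Command:
    """Merge the human's arm command with copilot or human finger targets."""
    if copilot_active:
        # The copilot owns the fingers; the operator's finger input is ignored.
        fingers = skill(finger_state)
    else:
        # Without the copilot, the operator drives the fingers directly.
        fingers = list(operator_fingers)
    # Arm motion always comes from the human operator.
    return Command(arm=list(operator_arm), fingers=fingers)
```

Calling `shared_autonomy_step(arm, fingers, state, True, rotate_skill)` returns a command whose arm targets are the operator's and whose finger targets come from the skill. The same function with a different `skill` argument would model the "callable low-level skill" role at execution time, with a policy rather than a human supplying the arm targets.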

MoDE-VLA addresses the challenge of combining diverse sensory inputs. Instead of treating all data uniformly, it processes vision, force, and tactile signals through specialized pathways. Using a mixture-of-experts approach, it dynamically activates relevant modules—such as detecting contact events—and refines actions in real time. This allows the robot to respond more precisely and reliably during complex manipulation tasks.
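For readers unfamiliar with the mixture-of-experts idea, the following Python sketch shows one common form of it: softmax gating over per-modality experts. The article does not describe MoDE-VLA's actual gating or routing, so everything here is an assumption for illustration, including the function names `softmax` and `mix_experts`, the three expert labels, and the use of a dense weighted sum rather than sparse top-k routing.

```python
import math
from typing import Dict, List, Sequence


def softmax(scores: Sequence[float]) -> List[float]:
    """Numerically stable softmax: turns gate scores into weights summing to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def mix_experts(
    gate_scores: Dict[str, float],
    expert_outputs: Dict[str, List[float]],
) -> List[float]:
    """Blend per-modality action proposals using softmax gate weights.

    gate_scores:    one relevance score per expert (hypothetical; in a real
                    model these would come from a learned gating network).
    expert_outputs: one action vector per expert, all of equal length.
    """
    names = sorted(expert_outputs)
    weights = softmax([gate_scores[name] for name in names])
    dim = len(expert_outputs[names[0]])
    return [
        sum(w * expert_outputs[name][i] for w, name in zip(weights, names))
        for i in range(dim)
    ]


if __name__ == "__main__":
    proposals = {
        "vision": [3.0, 0.0],
        "force": [0.0, 3.0],
        "tactile": [0.0, 0.0],
    }
    # A high force score (e.g. at a contact event) lets the force expert dominate.
    print(mix_experts({"vision": 0.0, "force": 5.0, "tactile": 0.0}, proposals))
```

With equal gate scores the result is the plain average of the experts' proposals. Raising one score, as a contact event might for a force-specialized expert, shifts the blended action toward that expert's output.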

Human-level manipulation

The researchers evaluated their framework across four increasingly complex tasks: gear assembling, charger plugging, tube rearranging, and apple peeling, demonstrating consistent performance gains.

MoDE-VLA significantly outperformed baseline models, doubling success rates in contact-rich manipulation scenarios. In the most demanding test—apple peeling—the system achieved a 73 percent peel completion ratio, successfully executing repeated peel-and-rotate cycles.

The framework also showed high precision in tasks like charger plugging, where millimeter-level accuracy is critical. Here, force-specialized experts enabled the compliance needed to succeed in situations where vision-only models typically fail.

Sharpa claims its SharpaWave 22-DoF dexterous hand further enhances these capabilities through its advanced sensory integration and control architecture. By combining 6-DoF force sensing with tactile feedback from all ten fingertips, it can detect subtle interaction cues such as slip or resistance. Its integration with a Vision-Language-Action backbone enables more responsive and adaptive manipulation.

Additionally, the IMCopilot system allows the robot to offload complex in-hand coordination to reinforcement learning–trained micro-skills, addressing traditional data collection challenges. Operating under MoDE-VLA, the system continuously refines its actions based on real-time physical feedback, resulting in substantial improvements in dexterous task performance.

“By delegating complex, high-frequency finger movements to specialized ‘copilots’ and using experts to interpret touch, we are paving the way for robots that can perform intricate household chores and industrial assembly with the same dexterity as a human,” said the team in a statement.

Jijo is an automotive and business journalist based in India. Armed with a BA in History (Honors) from St. Stephen’s College, Delhi University, and a PG diploma in Journalism from the Indian Institute of Mass Communication, Delhi, he has worked for news agencies, national newspapers, and automotive magazines. In his spare time, he likes to go off-roading, engage in political discourse, travel, and teach languages.