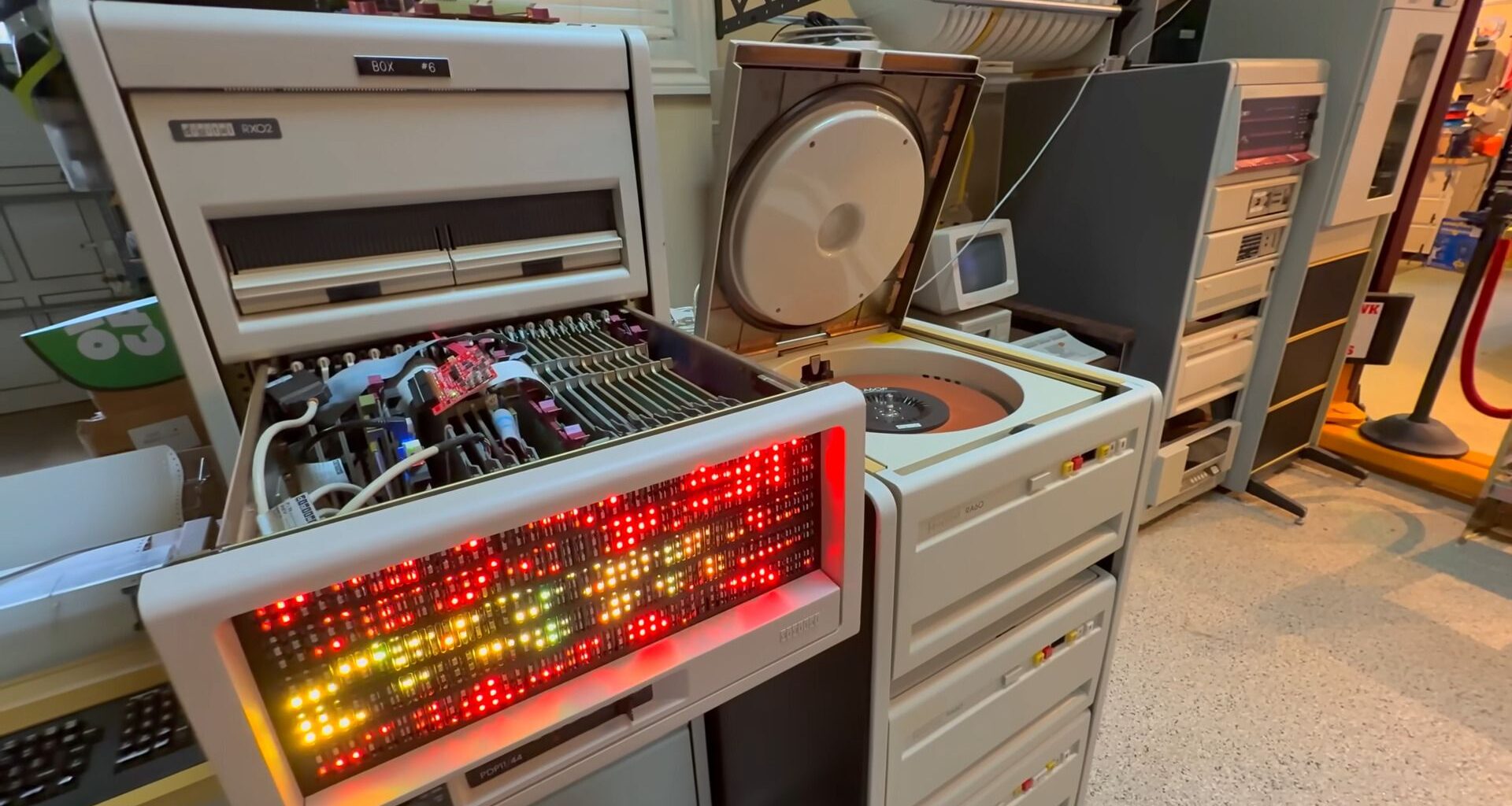

Veteran Windows developer Dave Plummer is back in his computer-stuffed garage, this time hoping to demystify AI by exposing its “dirty little secret.” The secret is largely revealed in the first line of the video description: “Dave uses a PDP-11 to train a real Neural Network complete with Transformers and Attention so you can see them at their most basic.” For context, the retired dev demos his 47-year-old PDP-11 system, which has a 6 MHz CPU and 64 KB of RAM. It runs a transformer model called ‘Attention 11’, written in PDP-11 assembly language by Damien Buret.

Video: “EXPOSED: The Dirty Little Secret of AI (On a 1979 PDP-11)” (YouTube)

On the surface, the task that the PDP-11 will ‘learn’ to do seems elementary – reverse a sequence of eight digits. However, the model must learn a structural rule to succeed for every given input, which Dave argues captures the soul of how modern LLMs like ChatGPT work.

“This is one person taking a class of algorithms that the world currently treats like sacred fire and proving that at least their essence can be reduced, understood, implemented, and trained on a machine old enough to remember when software came with toggle switches and three ring binders,” said Dave. “…now you know what that process actually is. It’s not AI magic. It is the machine repeatedly updating the strength of thousands of little weighted links so that the next answer will be slightly less wrong than the last one.”
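
To make that description concrete, here is a minimal Python sketch of the update loop Dave describes, shrunk to a single weight. It is not code from Attention 11; the training example, learning rate, and step count are hypothetical values chosen only for illustration.

```python
def train(steps=20, lr=0.1):
    """Fit y = w * x to one example by repeatedly nudging a single weight."""
    w = 0.0                 # the one "weighted link", starting out ignorant
    x, target = 2.0, 6.0    # hypothetical training example (ideal w is 3.0)
    losses = []
    for _ in range(steps):
        pred = w * x                  # forward pass: the current answer
        error = pred - target         # how wrong that answer is
        losses.append(error * error)  # squared-error loss for this step
        grad = 2.0 * error * x        # derivative of the loss with respect to w
        w -= lr * grad                # move the weight against the gradient
    return w, losses


w, losses = train()
print(f"final weight: {w:.6f}")
print(f"first loss: {losses[0]:.3f}, last loss: {losses[-1]:.3e}")
```

Each pass leaves the answer slightly less wrong than the one before. A transformer applies the same kind of update to every one of its parameters at once rather than to a single weight.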

Attention 11 is only a single-layer, single-head transformer, yet Dave still has to optimize for the system's constraints. “Constraints are not the enemy of engineering. Constraints are what force creative engineering to happen.” Even so, it may be surprising how little scaffolding is required for intelligence to emerge. The model has just 1,216 parameters, it uses fixed-point math, precision is reduced to 8 bits for the forward pass, and every cycle is optimized to ensure the machine can finish training before “the heat death of the universe.”
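
For readers unfamiliar with fixed-point math, the idea is to store fractional values as scaled integers so that plain integer instructions can do the work. The Python sketch below assumes a Q8.8 layout (8 integer bits, 8 fractional bits) purely for illustration; the article does not state which number format Attention 11 actually uses, and the helper names are hypothetical.

```python
FRAC_BITS = 8            # assumed Q8.8 layout, not confirmed for Attention 11
SCALE = 1 << FRAC_BITS   # 256 fixed-point units per 1.0


def to_fixed(value):
    """Convert a float to a scaled integer, rounding to the nearest unit."""
    return int(round(value * SCALE))


def to_float(fixed):
    """Convert a scaled integer back to a float for display."""
    return fixed / SCALE


def fixed_mul(a, b):
    """Multiply two fixed-point values using integer operations only.

    The raw product carries 16 fractional bits, so shift right by 8
    to return to the Q8.8 scale.
    """
    return (a * b) >> FRAC_BITS


a = to_fixed(1.5)     # stored as 384
b = to_fixed(-0.25)   # stored as -64
product = fixed_mul(a, b)
print(product, to_float(product))
```

The multiply needs only an integer product and a shift, which is the kind of arithmetic a machine without floating-point hardware can do cheaply.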

Dave comments that “We’re watching the stripped-down anatomy of learning itself. The model begins dumb. The loss begins high. Accuracy stumbles around like a man trying to assemble IKEA furniture in the back of a moving van. And then somewhere along the way, the weights settle into a pattern. And the attention discovers the reversal map. And the machine crosses that invisible line from guessing into knowing.”
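
The “reversal map” can be pictured as an attention pattern in which output position i attends almost entirely to input position n-1-i. The Python sketch below hard-codes that pattern rather than learning it, and it mixes raw digit values directly; a trained model would instead derive its scores from learned query and key weights and work on embeddings. The `sharpness` parameter and function names are hypothetical.

```python
import math


def softmax(scores):
    """Turn raw scores into attention weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def reversal_attention(tokens, sharpness=20.0):
    """Hand-built single-head attention: position i looks at position n-1-i."""
    n = len(tokens)
    output = []
    for i in range(n):
        # The score is high only where key position j mirrors query position i.
        scores = [sharpness if j == n - 1 - i else 0.0 for j in range(n)]
        weights = softmax(scores)
        # Each output is a weighted average of the input values.
        mixed = sum(w * t for w, t in zip(weights, tokens))
        output.append(round(mixed))
    return output


digits = [3, 1, 4, 1, 5, 9, 2, 6]
reversed_digits = reversal_attention(digits)
print(reversed_digits)  # [6, 2, 9, 5, 1, 4, 1, 3]
```

During training, nothing tells the model to build this mirrored pattern. It emerges because weights that produce it make the loss go down.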

The results of the AI training experiment on an ancient 6 MHz computer were pleasing. Dave got the model to 100% accuracy on the number-reversing task after about 350 training steps. Reaching that level took about 3.5 minutes on the PDP-11/44, aided by a cache board. Quite a success, and Dave insists that modern AI is just the same mechanical – not mystical – technique with massively scaled-up error correction and arithmetic. “This old machine is not thinking in some mystical sense. It’s just grinding through arithmetic to update a few thousand carefully stored numbers. And that’s the whole game. The glamour of modern AI mostly comes from doing that on a staggering scale. But the essential act of learning is already here fully in miniature,” explained the legendary Windows dev.

Lastly, Plummer concludes that, with the crunch in compute resources becoming a limiting factor, any company that embraces the old-school obsession with efficiency and optimization could gain a significant advantage.
