If you’re involved in developing embedded systems, YOU NEED TO READ THIS. I’m sure that, like me, you’ve been exposed to a lot of AI-powered design tools over the past couple of years. Suffice it to say that the one I’m poised to tell you about just blew my socks off (don’t worry, I’ll retrieve them later).

I’m so excited about all this that rather than wiffle and waffle as is my wont, I’m moved to dive headfirst into the fray. I was just chatting with Ethan Gibbs, who is the CEO and co-founder of Embedder. The folks at Embedder say their tool, also called Embedder, is the only AI coding agent that’s truly targeted at embedded software engineers. After seeing Embedder run, I’m inclined to agree.

If you’ve ever brought up a new embedded design—especially one involving unfamiliar sensors, communications chips, or mixed-signal devices—you’ll know that the process can be as much archaeology as engineering. By this I mean that before you can write a meaningful line of code, you must first excavate the relevant facts from a mountain of documentation: datasheets, reference manuals, register maps, timing diagrams, errata sheets, and application notes. Somewhere in that lot are the “magic numbers,” initialization sequences, timing constraints, and edge cases that will make all the difference between a working system and a board that sits there glaring at you in silent reproach.

The traditional workflow is well understood, if not universally loved. You read the documentation, write some code, build it, flash it onto the target hardware, and see what happens. Then begins the real fun: connecting debuggers, watching serial logs, probing signals, stepping through code, running static checks, desperately trying to discover why the thing that looked perfectly sensible on your screen has reduced your shiny new board to the electronic equivalent of a sulk. So you tweak the code, rebuild, reflash, retest, and repeat, over and over and over again, until everything eventually behaves as intended and you can finally wipe away your tears (speaking for a friend).

Against this backdrop, it’s easy to see why the recent flood of AI-powered coding assistants has attracted so much attention. In many domains, these tools can be genuinely impressive. They can spit out code that looks perfectly respectable, point you toward useful software interfaces, and take much of the drudgery out of writing the routine setup stuff. But embedded systems are not like web apps or desktop utilities. Here, “looks about right” is nowhere near good enough. A hallucinated register value, an incorrect peripheral initialization sequence, or a missed timing requirement can leave you staring at a dead board, wondering which one of a thousand assumptions just betrayed you. Worse still, if the AI wrote the code in the first place, debugging it can feel like trying to unravel someone else’s half-remembered dream.

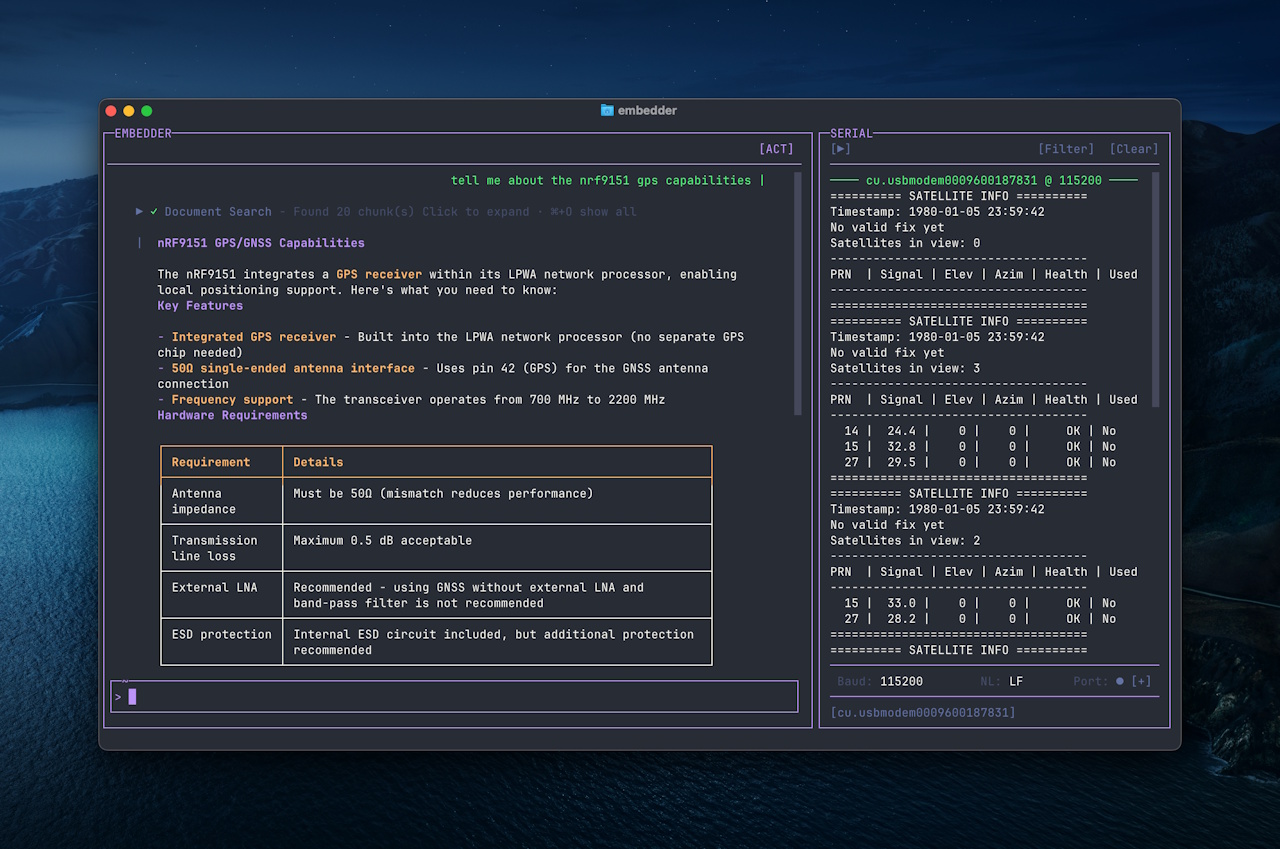

Yes, of course Embedder includes the ability to peruse and ponder data sheets and then respond to natural language queries like “Tell me about the nrf9151 gps capabilities,” as illustrated below, but this sort of thing is just the tiniest tip of the iceberg.

Embedder responding to a query about nrf9151 gps capabilities (Source: Embedder)

Hold onto your hats (and socks), because this is where Embedder turns the whole idea of AI-assisted embedded development on its head. Instead of treating firmware generation as a one-shot code-writing exercise, the company has built what is essentially an agentic AI firmware engineer with a built-in verification loop. The really clever thing here is that this isn’t just about generating code; it’s about proving that the generated code actually works.

To do this, Embedder grounds its AI agents in the actual technical documentation for the target hardware. Its proprietary hardware catalog—covering more than 300 platforms from vendors including NXP, Nordic, Infineon, and others—lets the AI query exact register definitions, timing values, and configuration details in real time, rather than guessing based on patterns it has seen elsewhere. In effect, the documentation becomes the AI’s working memory.
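To make the “look it up, don’t guess” idea concrete, here is a minimal sketch in Python. It is emphatically not Embedder’s implementation or API, which the article does not describe. The chip name `EXAMPLE_MCU`, the register entry, its address, and the manual section are all invented for illustration, as are the names `RegisterDef`, `CATALOG`, `NotDocumented`, and `lookup_register`. The point is only that a grounded lookup either returns a value with a citation or fails loudly.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RegisterDef:
    address: int       # absolute address of the register
    reset_value: int   # value after reset, according to the documentation
    source: str        # where in the documentation this entry came from


class NotDocumented(LookupError):
    """Raised when the catalog has no entry, so the caller cannot silently guess."""


# Hypothetical catalog: the chip, address, and section number are invented.
CATALOG = {
    ("EXAMPLE_MCU", "GPIOA", "MODE"): RegisterDef(
        address=0x4000_0000,
        reset_value=0x0000_0000,
        source="reference manual, section 8.4.1 (hypothetical)",
    ),
}


def lookup_register(chip: str, peripheral: str, register: str) -> RegisterDef:
    """Return the documented definition, or raise instead of inventing one."""
    key = (chip, peripheral, register)
    try:
        return CATALOG[key]
    except KeyError:
        raise NotDocumented(f"no documented entry for {key}; refusing to guess") from None


if __name__ == "__main__":
    mode = lookup_register("EXAMPLE_MCU", "GPIOA", "MODE")
    print(hex(mode.address), "from", mode.source)
```

Asking for a register that is not in the catalog raises `NotDocumented`, which is the behavior you want from a tool that is supposed to avoid hallucinated register values.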

Once the code is generated, the system can build it, flash it to the physical target, monitor serial ports, perform static analysis, observe what actually happens on the hardware, and then use that feedback to refine the code. In short, it automates the same painstaking loop that embedded engineers have always followed—only much faster, and with a lot less repetitive grind. You need to see this to believe it, so watch this video.
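For readers who like to see a loop written down, the generate, build, flash, observe, refine cycle can be sketched as follows. This is a toy simulation under stated assumptions, not Embedder’s code: `run_verification_loop`, `StepResult`, and the stand-in functions `fake_generate`, `build`, `flash`, and `serial_monitor` are all hypothetical, and no real toolchain or hardware is touched. In a real setup, each step would call out to a compiler, a flashing tool, and a serial port.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class StepResult:
    name: str   # which stage produced this result
    ok: bool    # did the stage pass?
    log: str    # what the stage observed


def run_verification_loop(
    generate: Callable[[str], str],
    steps: List[Callable[[str], StepResult]],
    max_iterations: int = 5,
) -> Tuple[Optional[str], int, List[Tuple[int, StepResult]]]:
    """Regenerate code until every step passes or the attempts run out."""
    feedback = ""
    history: List[Tuple[int, StepResult]] = []
    for attempt in range(1, max_iterations + 1):
        source = generate(feedback)
        failure = None
        for step in steps:
            result = step(source)
            history.append((attempt, result))
            if not result.ok:
                failure = result
                break  # no point flashing code that did not build
        if failure is None:
            return source, attempt, history
        # Feed the observed failure back into the next generation attempt.
        feedback = f"{failure.name} failed: {failure.log}"
    return None, max_iterations, history


# --- Simulated stand-ins (all hypothetical) ---
def fake_generate(feedback: str) -> str:
    # Pretend agent: gets the pin wrong until the serial log complains.
    return "blink(pin=13)" if "serial_monitor" in feedback else "blink(pin=31)"


def build(source: str) -> StepResult:
    return StepResult("build", source.startswith("blink("), "syntax check")


def flash(source: str) -> StepResult:
    return StepResult("flash", True, "flashed (simulated)")


def serial_monitor(source: str) -> StepResult:
    ok = "pin=13" in source
    return StepResult("serial_monitor", ok, "LED toggled" if ok else "no LED activity on pin 13")


final_source, attempts, history = run_verification_loop(
    fake_generate, [build, flash, serial_monitor]
)

if __name__ == "__main__":
    print(final_source, "after", attempts, "attempt(s)")
```

The design choice worth noting is that the loop stops at the first failing stage and passes that stage’s log back to the generator, which mirrors how a human engineer reads the error before touching the code again.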

The verification loop may be the headline act, but it’s far from the only thing that makes Embedder stand out. Just as important are the company’s focus on IP protection, deployment flexibility, and platform agnosticism—three areas that are absolutely critical in the embedded world.

One of the biggest concerns companies have about AI is its impact on their intellectual property. For many embedded firms, their codebase, board support packages, and internal documentation are core competitive assets. They may be perfectly happy to use AI to accelerate development, but not if it means shipping sensitive information to some black-box cloud service, with “who-knows-what” happening next.

The guys and gals at Embedder have clearly thought long and hard about this. For customers who are comfortable with cloud-based workflows, the platform can be hosted in Embedder’s own AWS or Google Cloud environments. For organizations with stricter security boundaries, it can also be deployed on a customer’s own private cloud infrastructure. And for the really sensitive stuff—defense, aerospace, industrial control, or anything subject to export restrictions such as International Traffic in Arms Regulations (ITAR)—the whole framework is containerized, meaning it can run fully air-gapped behind the customer’s firewall, using whichever AI models and on-prem document sources they trust. In short, customers get the benefits of AI without losing sleep over their IP.

Then there’s platform agnosticism. Unlike some tools that lock you into a single-vendor ecosystem, Embedder is designed to work the way embedded engineers actually work: across multiple chips, toolchains, languages, and operating environments. It supports popular embedded ecosystems and RTOS environments, including Zephyr and FreeRTOS, as well as multiple programming languages, including C, C++, Rust, and MicroPython. Better still, it can ingest an existing codebase and learn a company’s preferred coding style, abstractions, and directory structures, allowing it to slot into existing workflows rather than forcing teams to change the way they work.

Embedder’s long-term vision is to abstract away as much low-level tedium as possible, so engineers can focus more on what they want the hardware to do and less on wrestling with the minutiae of how to make it behave.

And here’s the thing: The chaps and chapesses at Embedder have achieved all this less than a year after officially incorporating in April 2025. Which naturally raises the question: what on earth do they do for an encore? I’m so glad you asked; otherwise, I would have been forced to come up with a clever way to introduce this next part into the conversation.

During our chat, Ethan’s go-to example was the classic “make the LED blink” test. That resonated with me more than he perhaps realized, because blinking LEDs have been a recurring motif throughout my engineering life. More than once, I’ve found myself staring at a stubbornly unblinking LED, willing it to burst into life while wondering which obscure line of code had betrayed me.

Of course, as every embedded engineer knows, the awkward truth is that sometimes the code is innocent. The LED may not be flashing because it’s plugged in backward, or because you’ve connected it to the wrong pin, or because your “temporary” jumper lead has become more temporary than you’d expected, quietly working its way loose while you were looking the other way.

I put this point to Ethan. It’s all very well for an AI system to compile code, flash the target, read back registers, monitor logs, and tell you that, according to the software, everything ought to be working and the LED should be flashing. But what if the hardware is lying and the LED flasheth not, as it were?

It turns out that the boffins and bods at Embedder are already thinking several steps ahead. Ethan told me the company is actively working to integrate vision models into the platform, thereby allowing the system to visually verify the state of the physical hardware. In the short term, that could mean confirming that an LED is actually blinking when it’s supposed to. In the longer term, it opens the door to something far more powerful: checking that components are present, cables are connected correctly, jumpers are set properly, and boards are physically configured as the software expects before the test even begins.
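As a back-of-the-envelope illustration of what “visually verifying a blink” might involve, here is a small Python sketch. It assumes that some upstream camera step (not shown, and not described in the article) has already reduced each video frame to a single brightness value between 0 and 1 for the region around the LED. The function names, the threshold, and the tolerance are all assumptions made for this example, and it says nothing about how Embedder’s vision models will actually work.

```python
from typing import Sequence


def count_blinks(brightness: Sequence[float], threshold: float = 0.5) -> int:
    """Count off-to-on transitions in a series of per-frame brightness values."""
    states = [value > threshold for value in brightness]
    return sum(1 for prev, cur in zip(states, states[1:]) if not prev and cur)


def led_is_blinking(
    brightness: Sequence[float],
    fps: float,
    expected_hz: float,
    tolerance: float = 0.25,
    threshold: float = 0.5,
) -> bool:
    """Return True if the measured blink rate is within tolerance of the expected rate."""
    if fps <= 0 or not brightness:
        return False
    duration_s = len(brightness) / fps
    measured_hz = count_blinks(brightness, threshold) / duration_s
    return abs(measured_hz - expected_hz) <= tolerance * expected_hz


if __name__ == "__main__":
    # Simulated 4 seconds at 10 frames per second: half a second off, half a second on.
    healthy = ([0.1] * 5 + [0.9] * 5) * 4
    dead = [0.1] * 40
    print(led_is_blinking(healthy, fps=10, expected_hz=1.0))
    print(led_is_blinking(dead, fps=10, expected_hz=1.0))
```

A check like this catches both the LED that never lights and the LED that is stuck on, since neither produces any off-to-on transitions.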

At this point, I may have let out an involuntary squeak of excitement. I immediately had flashbacks to the countless hours I’ve wasted over the years chasing what I was convinced was a subtle software bug, only to discover—usually after much gnashing of teeth and rending of garb—that the real culprit was some laughable “gotcha” on the hardware side. A misplaced wire. A reversed connector. A board plugged in one row off. You know the sort of thing.

If Embedder can bring that kind of physical awareness into the loop as well, then what they’re building starts to look less like a clever coding tool and more like the beginnings of a genuinely intelligent hardware development partner.

All I can say is that I have a sneaking suspicion we’re going to be hearing a lot more from Embedder, and that the really interesting stuff is only just getting started.
