While the AI race chases $40,000 GPUs, a 27-year-old processor with just 128 MB of RAM is elbowing its way onto the track. What that experiment revealed could upend assumptions about who can run AI and at what cost.

A 350 MHz Pentium II from 1998, paired with 128 MB of RAM, has been coaxed by EXO Labs, founded by Oxford University researchers, into running a llama2.c-based language model at 39.31 tokens per second. The demo even produced a simple, coherent tale starring “Sleepy Joe” and “Spot.” Set against data center silicon like Nvidia’s Blackwell B200, a $30,000 to $40,000 part, the result challenges the belief that only cutting-edge hardware can handle AI. If this approach scales, it hints at affordable, energy-efficient intelligence for aging PCs and everyday electronics.

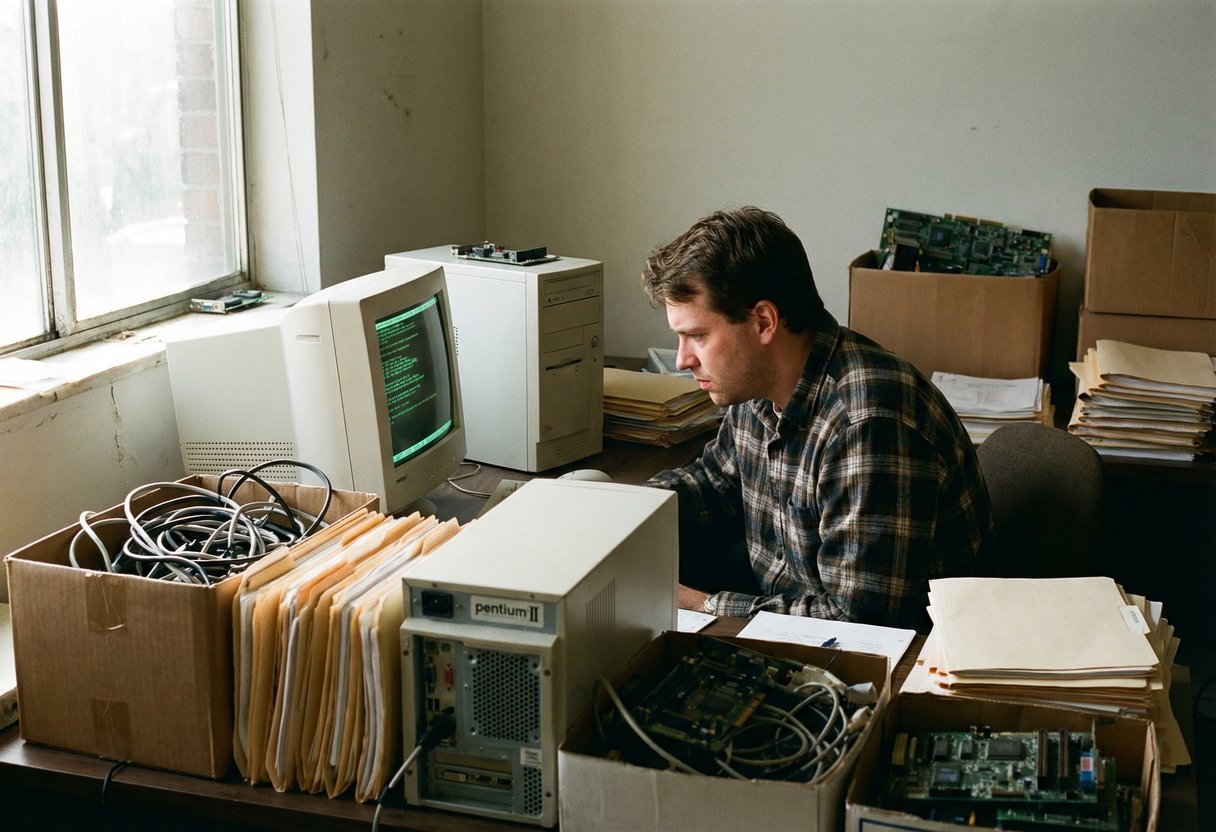

An experiment that breaks expectations

Could a 1998 processor with 128 MB really run AI? Yes, according to EXO Labs, whose Oxford-founded team dusted off a Pentium II and made it talk. Their goal wasn’t nostalgia. It was to prove that careful software design can squeeze surprising capability from modest hardware, and to show that raw performance isn’t the only path to progress. The result challenges the reflex to equate AI with sprawling server farms.

A stark contrast with modern AI hardware

Today’s AI boom runs on chips like Nvidia’s Blackwell B200, often priced between $30,000 and $40,000 per unit. Those processors excel at training and serving large models across vast datasets. Set against that, a 350 MHz Pentium II feels like a relic. Yet this contrast is the point: efficiency gains can unlock utility without the massive costs, energy budgets, and supply-chain friction of premium GPUs.

The unconventional setup explained

The team at EXO Labs, a collective founded by Oxford researchers, used llama2.c, a lean, efficiency-first codebase, to run a compact language model on the Pentium II at 350 MHz with 128 MB of RAM. The setup reached 39.31 tokens/s on a model with 260,000 parameters. Tokens are the small chunks of text an AI processes (letters, syllables, or short word pieces). Scale the model up to 1,000,000,000 parameters and throughput collapses to 0.0093 tokens/s, proof that optimization beats brute force only within sensible limits.
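To put those two throughput figures in everyday terms, here is a minimal sketch that converts them into waiting time. The two rates come from the article; the 100-token story length and the helper name `seconds_for` are assumptions chosen purely for illustration.

```python
# Throughput figures quoted in the article (tokens per second).
RATE_260K = 39.31   # 260,000-parameter model
RATE_1B = 0.0093    # 1,000,000,000-parameter model


def seconds_for(tokens: int, tokens_per_second: float) -> float:
    """Time needed to generate `tokens` at a steady rate."""
    return tokens / tokens_per_second


if __name__ == "__main__":
    story_length = 100  # hypothetical story length in tokens (assumption)
    small = seconds_for(story_length, RATE_260K)
    large = seconds_for(story_length, RATE_1B)
    print(f"260K model: {small:.1f} s")
    print(f"1B model:   {large / 3600:.1f} h")
    print(f"slowdown:   {RATE_260K / RATE_1B:.0f}x")
```

At these rates, a 100-token story takes roughly 2.5 seconds on the small model and about 3 hours on the billion-parameter one, a slowdown of more than 4,000 times.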

What low-tech AI can produce

Despite tight constraints, the model produced understandable language. It spun a quirky tale about “Sleepy Joe” and a dog named Spot on a Windows 98 machine. The story was hardly prizewinning, but coherent enough to follow. This is a case where utility emerges from simplicity: small models can summarize logs, auto-complete forms, or guide interfaces (not state-of-the-art, but fit for purpose) without constant cloud access.

The bigger picture: democratizing AI

Lowering the hardware bar hints at broader access and lower emissions. Older PCs could gain fresh roles, and regions with scarce infrastructure might adopt local AI for everyday tasks. Beyond cost, local inference can mean resilience and privacy (think schools, libraries, clinics). Consider the potential upsides:

Cheaper deployment on legacy or low-power devices

Reduced energy use and longer device lifecycles

Offline functionality for privacy and reliability

EXO Labs’ exploration doesn’t replace cutting-edge models. It reframes the canvas. By proving AI can run on yesterday’s silicon, it invites a future where useful intelligence is widely distributed, and where progress is measured not only in scale, but in access and restraint.