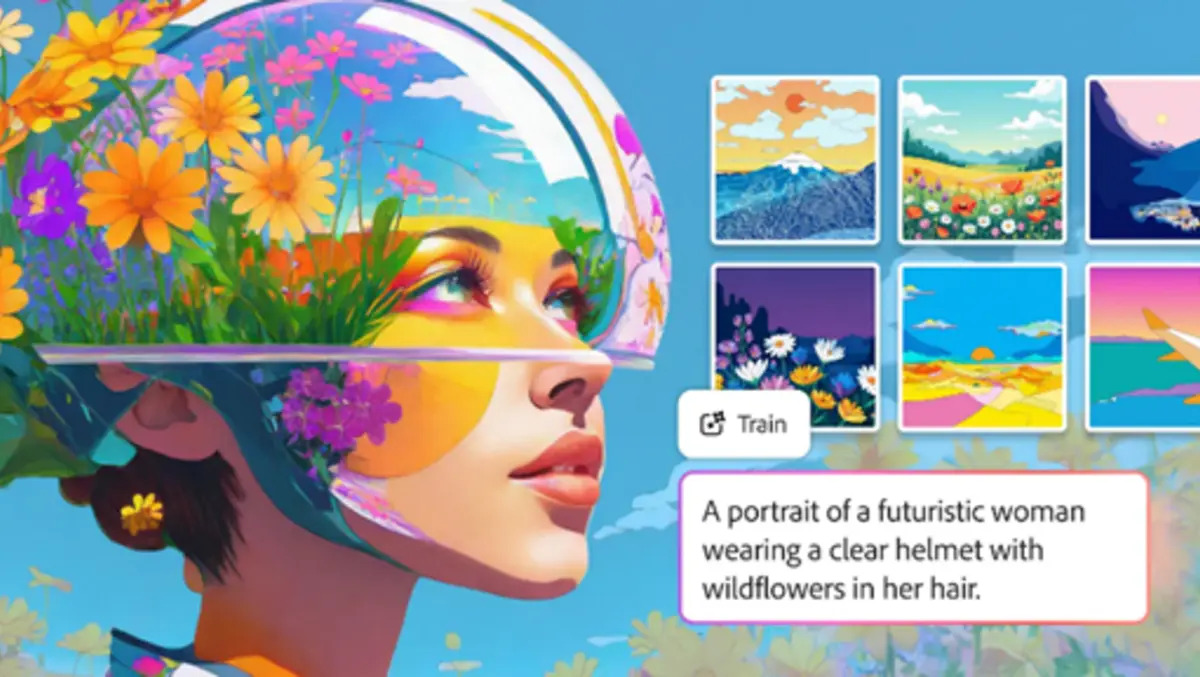

Adobe has launched Custom Models in public beta within its Firefly creative AI platform, giving users a way to train image generation on their own visual style.

The feature lets creators upload their own images so Firefly can build a reusable model based on a chosen aesthetic, character design or photographic look. The beta is aimed at ideation in illustration, character work and photography, helping users maintain a consistent visual identity across multiple outputs.

The move expands Adobe’s push to make Firefly a central workspace for AI-assisted image and video production. Firefly now also offers access to more than 30 AI models from Adobe and other suppliers, including Google, OpenAI, Runway and Kling.

Newly available models include Google’s Nano Banana 2 and Veo 3.1, Runway’s Gen-4.5, Adobe’s Firefly Image Model 5 and Kling 2.5 Turbo. Adobe says Firefly customers can use unlimited image and video generations across a wide range of those models.

Style Control

Adobe is positioning Custom Models as a way for creative professionals and brands to maintain stylistic consistency when producing large volumes of content. The system is designed to preserve elements such as colour palette, lighting, stroke weight and recurring character features, allowing teams to generate new material without resetting the visual baseline each time.

Trained models are private by default, a point likely to matter to brands and agencies concerned about control over proprietary assets and internal campaign material.

Rather than asking users to switch between separate services, Adobe is trying to combine model choice, generation and editing in one environment. In practice, users can create an image or video with one model, compare the results with another, and continue editing within Adobe’s own tools.

Editing Tools

Adobe also introduced additional image and video tools in Firefly. One, called Quick Cut, is designed to turn raw footage into an initial edited sequence within minutes.

Expanded image editing options will let users add or remove objects, extend scenes and make more detailed adjustments to generated visuals. The changes show Adobe’s attempt to position Firefly not only as a prompt-based generator, but as a broader production workflow for draft creation and refinement.

That reflects a wider shift in the generative AI market, where software groups are moving beyond stand-alone text prompts and single-image outputs. Providers are increasingly trying to keep users inside one system from concept development through editing, revision and delivery.

Conversational Push

Adobe is also widening access to Project Moonlight, a private beta product that uses a conversational interface across Adobe applications. The system is designed to let users describe what they want in chat and then work through tasks across apps including Photoshop, Express and Acrobat.

Project Moonlight has been previewed before and remains in private beta, but the wider access underlines Adobe’s view that conversational interfaces will become a more common way to manage creative software. Instead of entering one prompt at a time, users would direct software through an ongoing exchange while still being able to adjust and refine the work.

Adobe described Firefly as an “all-in-one creative AI studio” that brings together outside models and Adobe’s own editing tools. The company is betting that customers will prefer a single workflow where generation and post-production sit side by side.

Competition in the segment has intensified as AI image and video companies seek stronger positions in professional creative work. Adobe’s response has been to build Firefly not just around its own models, but also around access to third-party systems, while using its established editing software as a point of differentiation.

The latest release also suggests Adobe wants to address one of the more practical concerns for professional users of generative AI: how to make outputs repeatable. For many design teams, consistency across campaigns, formats and channels can matter as much as novelty. A model trained on internal assets offers a route to that, particularly for character-led work, branded illustration and recurring photographic styles.

Adobe says the custom training approach lets users turn their own image libraries into a reusable foundation for future briefs and projects, making the resulting model part of their regular workflow.