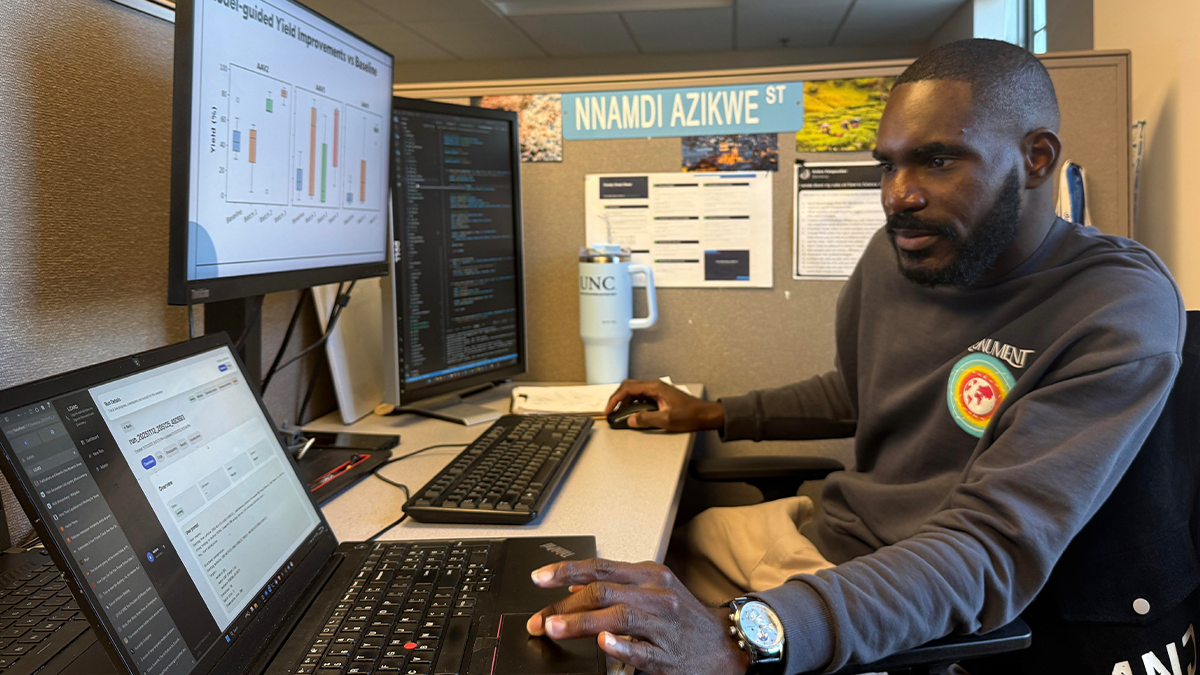

The future of gene therapy may lie not at the lab bench but in the algorithm. At UNC-Chapel Hill, chemistry doctoral student Kelvin Idanwekhai is using machine learning to transform how viruses, the microscopic couriers of genetic medicine, are purified, making the process faster, cheaper and more precise.

He works with tools designed to help scientists explore complicated problems efficiently, especially problems with too many variables to test one by one.

“In chemistry and bioprocessing, people often rely on design-of-experiment methods,” said Idanwekhai. “Those work fine when you’re dealing with a small number of parameters. But when you’re trying to adjust 10 or more things at once, like pH, salt concentration, temperature and flow rate, it becomes impossible to test everything manually.”

That is where machine learning comes in. Instead of trying every combination, Idanwekhai’s algorithm “learns” which experiments are most likely to yield satisfactory results and recommends only those for testing. As results come in, it uses what it has learned to predict even better conditions.

To test this approach, Idanwekhai applied his system to one of the most promising areas of medicine: gene therapy. Gene therapy uses harmless viruses to deliver healthy genes into a patient’s cells. The virus acts like a delivery vehicle, carrying the genetic material where it is needed. Producing these delivery vehicles, however, is expensive and complex. Each step requires precise control of multiple factors, traditionally optimized through trial and error.

Idanwekhai wanted to replace that slow, empirical process with something smarter. With new tools, “we can explore a search space that might include 900,000 possible experiments, and we can find an optimal set of parameters we need in just 30,” he said.
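For readers curious how a model-guided search can cover a large space with few runs, here is a minimal sketch in Python. It is not the team's method: the parameter levels, the `simulated_yield` function and the simple distance-weighted surrogate are all assumptions made for illustration, and the toy grid holds 840 conditions rather than 900,000. Only the budget of 30 runs mirrors the figure Idanwekhai cites.

```python
import itertools
import math
import random
from functools import lru_cache

# Hypothetical parameter levels, for illustration only (not from the study).
GRID = {
    "pH": [5.0, 5.5, 6.0, 6.5, 7.0, 7.5, 8.0],
    "salt_mM": [50, 100, 150, 200, 250, 300],
    "temp_C": [4, 10, 15, 20, 25],
    "flow_mL_min": [0.5, 1.0, 1.5, 2.0],
}
NAMES = list(GRID)


@lru_cache(maxsize=None)
def normalize(point):
    """Scale each setting to [0, 1] so no parameter dominates the distance."""
    return tuple(
        (v - GRID[n][0]) / (GRID[n][-1] - GRID[n][0]) for n, v in zip(NAMES, point)
    )


def simulated_yield(point):
    """Made-up response surface standing in for a real purification run."""
    ph, salt, temp, flow = normalize(point)
    return math.exp(
        -8 * (ph - 0.6) ** 2 - 3 * (salt - 0.4) ** 2
        - (temp - 0.5) ** 2 - 0.5 * (flow - 0.3) ** 2
    )


def score(candidate, observed, explore=0.3):
    """Predicted yield (inverse-distance-weighted mean) plus an exploration bonus."""
    c = normalize(candidate)
    # Candidates are always untested, so every distance here is greater than zero.
    dists = [math.dist(c, normalize(p)) for p, _ in observed]
    weights = [1.0 / d ** 2 for d in dists]
    predicted = sum(w * y for w, (_, y) in zip(weights, observed)) / sum(weights)
    return predicted + explore * min(dists)


def optimize(budget=30, n_initial=6, seed=0):
    """Run a few random experiments, then let the surrogate choose each next one."""
    rng = random.Random(seed)
    candidates = list(itertools.product(*GRID.values()))
    observed = []
    for point in rng.sample(candidates, n_initial):
        observed.append((point, simulated_yield(point)))
    while len(observed) < budget:
        tested = {p for p, _ in observed}
        untested = [c for c in candidates if c not in tested]
        best = max(untested, key=lambda c: score(c, observed))
        observed.append((best, simulated_yield(best)))
    return candidates, observed


candidates, observed = optimize()
best_point, best_yield = max(observed, key=lambda pair: pair[1])
print(f"Tested {len(observed)} of {len(candidates)} candidate conditions")
print(dict(zip(NAMES, best_point)), round(best_yield, 3))
```

The key design choice is the `explore` term: without it, the search would keep sampling near the best result so far and could miss a better region it has never visited.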

In his study, Idanwekhai and his collaborators in Stefano Menegatti’s lab at NC State University applied this method to optimize purification for three different viruses. Each behaves differently at the molecular level. Still, Idanwekhai’s model handled them all. After just three rounds of optimization, the team increased viral yields from 70% to 99% while cutting impurities and preserving the viruses’ biological activity.

Even better, the process he used can show which variables matter most. “You can look inside the model and see, for example, that pH has the biggest effect on yield,” said Idanwekhai. “That gives you both speed and insight.”
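One common way to "look inside" a model is permutation importance: shuffle one variable's values and measure how much the model's predictions degrade. The article does not say which technique the team used, so the sketch below is only an assumed illustration. The `toy_model` function and its coefficients are invented so that pH dominates, echoing the example in the quote; they are not measured data.

```python
import random


def permutation_importance(predict, rows, targets, names, n_repeats=20, seed=0):
    """Mean increase in squared error when one column's values are shuffled."""
    rng = random.Random(seed)

    def mse(data):
        return sum((predict(r) - t) ** 2 for r, t in zip(data, targets)) / len(targets)

    baseline = mse(rows)
    importance = {}
    for j, name in enumerate(names):
        increases = []
        for _ in range(n_repeats):
            column = [r[j] for r in rows]
            rng.shuffle(column)
            # Rebuild each row with only column j replaced by its shuffled value.
            shuffled = [r[:j] + (v,) + r[j + 1:] for r, v in zip(rows, column)]
            increases.append(mse(shuffled) - baseline)
        importance[name] = sum(increases) / n_repeats
    return importance


def toy_model(row):
    """Hypothetical fitted yield model; coefficients are invented for illustration."""
    ph, salt, temp = row
    return 3.0 * ph + 1.0 * salt + 0.0 * temp


rng = random.Random(1)
rows = [tuple(rng.random() for _ in range(3)) for _ in range(50)]
targets = [toy_model(r) for r in rows]
importance = permutation_importance(toy_model, rows, targets, ["pH", "salt", "temp"])
for name, value in sorted(importance.items(), key=lambda kv: -kv[1]):
    print(f"{name}: {value:.3f}")
```

A variable whose shuffling barely changes the error, like `temp` in this toy case, has little influence on the model's predictions and may be a candidate to hold fixed in later rounds.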

Behind the scenes, however, the project faced practical challenges. Most laboratory machines do not easily share data. Idanwekhai hopes future lab equipment will include simple ways to connect with computer systems so that experiments can truly run in a closed loop, where data flow automatically from the instruments to the AI and back again.

“A lot of experimental data are stuck in the instruments themselves,” said Idanwekhai. “I had to go to the machines and extract the data manually. It took months.”

Looking ahead, Idanwekhai envisions integrating reinforcement learning and large language models, like those behind chatbots, into future systems. These tools could read scientific papers, understand previous results and suggest smarter experiments. His lab has already started developing an AI platform that is designed to autonomously search for and optimize potential drug molecules.

The lab has also developed a software tool that lets scientists use the same approach without writing code or knowing how to build computer models, said Idanwekhai. “Our other collaborations are doing something similar. We’re using AI and machine learning to help guide experiments in real time and make the research process faster and more efficient.”

Under the guidance of professor Alexander Tropsha, Idanwekhai said he has found the perfect environment to innovate. “Dr. Tropsha really values independence,” he said. “He tells us, ‘Your Ph.D. is not my Ph.D.’ That freedom lets me chase ideas I believe in.”