“It’s definitely an arms race,” he said.

“The bad guys have a lot less restrictions in place for advancing quickly. They don’t have the policies that maybe a business or the government has in place in order to put protections in.”

Joshua Alcock leads California-based Fortinet’s operational technology and critical infrastructure cybersecurity division across New Zealand and Australia.

“They’re using stolen resources and underground tools that have [already] been built … They don’t necessarily have the financial limitations that a small business has. It’s a challenge for those sorts of people as targets.”

On text-based mediums, things like incorrect grammar, suspicious email signatures, foreign country codes, distorted logos and unsolicited links to unfamiliar domains are among the most common red flags.

In turn, AI has enabled scammers to operate faster, at scale and with more convincing outputs, with voice-cloning technology, deepfake videos and deceptive advertising slowly coming to the fore.

As messages can only mimic legitimate organisations to a point, AI-generated deepfakes and voices are becoming more prevalent in phishing scams impersonating banks, government agencies and authoritative figures, Alcock said.

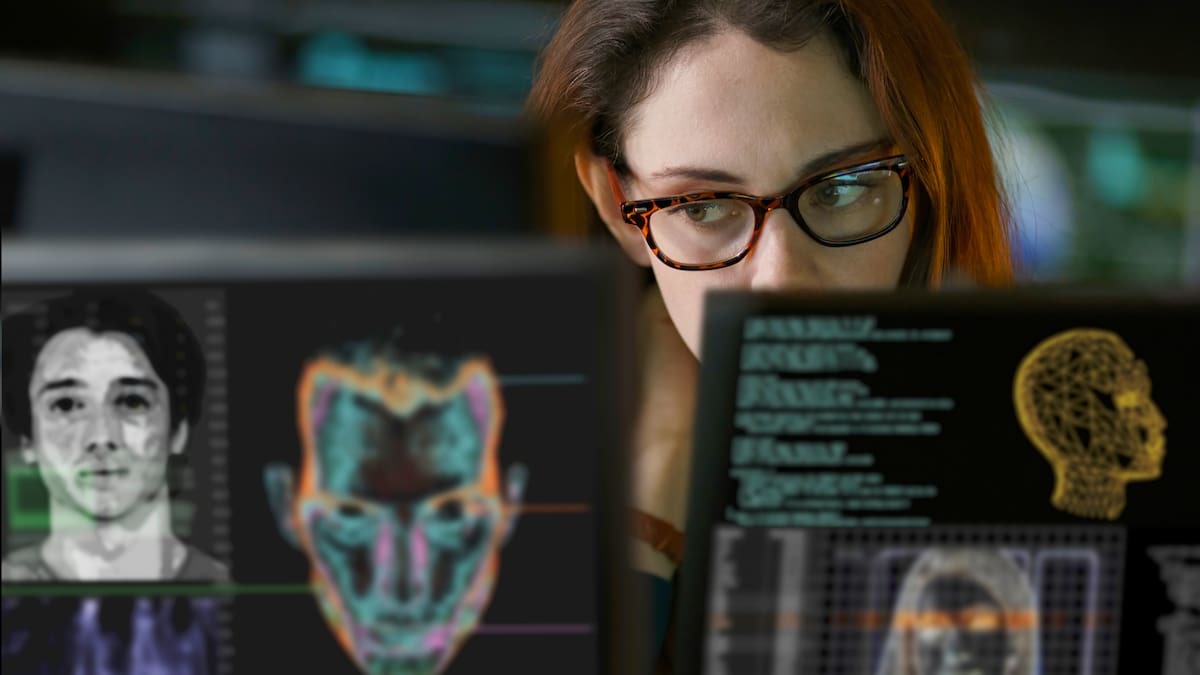

Cybersecurity experts are responding in kind by employing similar tools and strengthening verification methods to flag threats before they reach inboxes or infiltrate accounts.

“At the end of the day, this stuff always comes down to people, right? And it’s the basics. It’s about having that security awareness,” Alcock said.

“They’re still just targeting the lowest common denominator … send it out to a thousand people and hope that one person bites.”

Building on what works, modern scams are designed to provoke fast, emotional reactions that bypass rational, measured decision-making.

Experts warn AI-powered deepfakes and voice-cloning technology are now being used to mimic businesses, government agencies and prominent figures. Photo / 123rf

The techniques used elicit fear and urgency, exploiting human behaviour by tricking victims into thinking they’re responding to time-sensitive requests from an authoritative source.

Alcock noted the latest AI tools draw on the vast amounts of training data and information already publicly available, and can build a synthetic person whose voice or features are co-opted to target the intended recipient.

“People trip up by just not looking close enough at what it is,” he said.

“As they get better and better, it becomes harder and harder to tell.”

Organisations like the National Cyber Security Centre (NCSC) have stepped up public awareness campaigns, but Alcock said individuals still need to slow down and critically assess what’s in front of them before taking action.

“If it’s something that invokes an emotional response, you’re probably less likely to stop and think,” he said.

“They’ll create a sense of urgency … it needs to be done, it’s expiring soon or it needs to be done by this date.

“People can go into a little bit of a state of shock when they see that, and then don’t think the rest of the things through.”

Netsafe says nearly $65,000 has already been lost to increasingly sophisticated, hard-to-detect scams this year. Photo / Getty Images

The psychological gameplay is a core part of the digital “scamscape”, with distracted, complacent or time-poor people most likely to fall victim.

Alcock said New Zealanders are mostly targeted by offshore scammers who prove difficult to trace, and with few enforcement measures available, preventing a successful swindle from happening in the first place remains the only real protective measure.

He recommended adding extra protections to accounts and devices – enabling multi-factor authentication, using trusted password managers, verifying requests through official channels and staying on top of hardware and software updates – to maximise security.

Simple reporting measures, such as flagging phishing texts or emails, also help improve detection systems that telco providers and businesses use.

Stigma often prevents people from speaking up after being caught up in a scam, but Alcock believes normalising discussions around cybersecurity and online safety remains key to keeping an edge over the bad actors.

“It’s not anything to be ashamed of, but it’s something that people need to learn from,” he said.

“Scams evolve in terms of how they might do something, and then we evolve in terms of how we detect and protect against those.

“But at the end of the day, if you override that, it’s the awareness that’s key.”
