In the redemption story that became his calling card, a convicted drug dealer named James Keene agreed to work for the FBI as an undercover informant in a psychiatric jail, tasked with befriending a suspected serial killer named Larry Hall.

Keene was credited with gathering crucial evidence that ensured Hall was denied parole. In return Keene was released early from prison and his story was eventually told in several books and the Apple TV miniseries Black Bird.

But over the summer, Keene learned that another version of events was apparently being generated by an AI bot that posts summaries of search results on Google.

The bot declared that Keene was “serving a life sentence without parole for multiple murders”, Keene complains in a lawsuit. It suggested he was responsible for killing three women, his suit alleges.

“Mr Keene has a past history but his history is what makes him such a remarkable character,” said his lawyer, Paul Chawla. “What’s happening with AI is devastating.” An artificial intelligence bot had called him “everything from a murderer to a rapist to a terrorist”, Chawla said.

In Keene’s first complaint to the company earlier this year, he said: “This is all evil twisted lies Google and it is coming from you in one sense or another.”

Taron Egerton starred as Keene in Black Bird, released in 2022

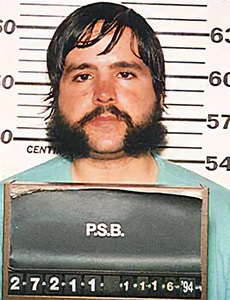

Larry Hall, the serial killer whom Keene was instructed to collect information on

Keene’s lawsuit is one of at least six complaints that have been filed so far in the US by Americans who say they have been defamed by AI, according to the Stanford law professor Eugene Volokh. The alleged libels have been attributed to an occasional tendency by AI large language models to suffer “hallucination”: veering into fiction and sometimes generating false sources to back up what they are saying.

This tendency, recalling the title of the Philip K Dick novel Do Androids Dream of Electric Sheep?, is not quite a bug in the systems, said Volokh, who also trained as a computer programmer. “A typical bug is, you know, I made a mistake in this line, and I fix it and then everything works,” he said. “But the nature of this software is not … that it knows things in any normal sense of ‘know’, it’s not that it reasons … these large language models take advantage of information about the frequency of words and how often they [occur] and how one follows another in certain contexts.”

The astonishing thing, he said, is that most of the time it is “pretty accurate” — but “there’s no particular reason to think that the results are going to be accurate all the time”.

One of the first libel suits was filed by a radio DJ named Mark Walters, who complained that the OpenAI bot ChatGPT was accusing him of embezzlement. A judge dismissed the complaint, concluding that the false statement had been made by the bot to a journalist who did not believe it.

In Maryland, a plaintiff named Jeremy Battle claims Microsoft’s AI bot labelled him a terrorist who had been “levying war against the United States”. A judge has paused the case, ordering the parties to attempt to resolve it through Microsoft’s own arbitration mechanism.

Another suit was filed by a conservative activist named Robby Starbuck, who alleges a Meta chatbot had falsely claimed he was involved in the riot at the Capitol on January 6, 2021. Meta settled the suit. Starbuck made similar claims against Google in a state court in Delaware. In a filing on Monday, Google suggested that Starbuck had purposefully sought to cause its AI tools to “hallucinate”, generating false statements about him. Starbuck’s lawyer called the response “victim blaming”.

Robby Starbuck made claims against Meta and Google

Perhaps the strongest of all the AI libel lawsuits has been filed by a renewable energy company in Minnesota called Wolf River Electric, which installs solar panels on homes and businesses.

“We noticed a sudden rocketing in cancellation rates towards the last quarter of 2024,” said Nick Kasprowicz, the company’s general counsel. Typically about 3 per cent of their customers had cancelled contracts, he said. This had now risen to 47 per cent. When they attempted to ask people why they were cancelling “we started getting screenshots of these AI overviews”, he alleged. These claimed that the company faced a lawsuit from the Minnesota attorney-general and allegations of “deceptive sales practices”.

The AI summary appeared on the top of Google’s search page and provided several links to websites that did not back up the assertions, Kasprowicz said. It also generated false claims about the leaders of the company, he added.

One AI summary said Jonathan Latcham, Wolf River Electric’s chief financial officer (CFO), was accused of fraud, of engaging in “high-pressure sales tactics” and of failing “to disclose crucial information to customers”.

Latcham felt it was a particularly damaging allegation to make against a certified public accountant. “That’s my career and reputation that I’ve spent 13 years building,” he said. “So many people read that first and they take it for granted that that’s true information.”

He added: “If a customer sees that, they’re like: ‘Oh, the CFO is promoting these things? These guys are a bunch of dirtbags.’”

Justin Nielsen, one of the founders of the company, which now employs more than 200 people, said he was one of “three guys that started this from literally a basement”. They relied on word of mouth, he said. “We put our entire lives into Wolf River, into developing a trustworthy, reputable household name.”

There are about a million Google searches for the company each year, Kasprowicz said. He added that it appeared that many people typing the name Wolf River Electric into a Google search box received a prompt that suggested they search for a lawsuit, or legal action.

“We believe we’ve had approximately $100 million in cancelled contracts,” he said. If Google were simply linking to newspaper articles that made the claims, it would be protected from libel suits under Section 230 of the Communications Decency Act. “But in these instances Google is truly the author of these defamatory statements,” he said. “We’re hoping for some kind of accountability.”

Justin Nielsen, right, with other executives of Wolf River Electric

Google is contesting the suit. A spokesman for the company said that “the vast majority of our AI Overviews are accurate and helpful but like with any new technology, mistakes can happen. As soon as we found out about the problem, we acted quickly to fix it”.

In the case of Keene, the one-time prisoner who became an undercover FBI informant, the company argues that a “reasonable user” of its search tools would note “Google’s own warnings about AI-generated responses” and suspect that he was not actually serving a life sentence without parole.

He is a public figure, as a “self-described celebrity, American author, television executive producer and former FBI operative” who should therefore expect to be a subject of debate and commentary, Google says. He must show that Google acted with malice, it says, arguing that his suit fails to do so.

In Keene’s initial complaint to Google, via emails he sent in May, he appears to grapple with the challenge of who precisely is making the false statements against him. “Your Google AI just makes things up completely that are not true!” he wrote. “I am not serving life in prison without parole! I have never had any of my assets confiscated!”

Volokh, the law professor who tracks AI-related suits, said companies generally take steps to halt “hallucinations” once they are identified. “Now, sometimes the steps are very broad,” he said. In some cases, the response appeared to be: “Let’s just say nothing at all.”

Jonathan Turley, a professor at George Washington University Law School, said he suffered this fate after ChatGPT falsely accused him of making inappropriate comments to a student during a field trip to Alaska. Apart from anything else, “I have never taken students on any field trips,” he said.

“What was also chilling was the response,” he said. “I had people who reported to me that ChatGPT says I don’t exist. In some ways this is more chilling than the original mistake.”

The message from AI companies, he believes, is “you allow us to defame you or we will ghost you”.