Alberts, B., Hanson, B. & Kelner, K. L. Editorial: reviewing peer review. Science 321, 15 (2008).

Kelly, J., Sadeghieh, T. & Adeli, K. Peer review in scientific publications: benefits, critiques, & a survival guide. EJIFCC 25, 227 (2014).

Publons Global State of Peer Review 2018 (Clarivate Analytics, 2018).

Azad, A. & Banu, A. Publication trends in artificial intelligence conferences: the rise of super prolific authors. Preprint at https://doi.org/10.48550/arXiv.2412.07793 (2024).

McCook, A. Is peer review broken? Submissions are up, reviewers are overtaxed, and authors are lodging complaint after complaint about the process at top-tier journals. What’s wrong with peer review? The Scientist (1 February 2006).

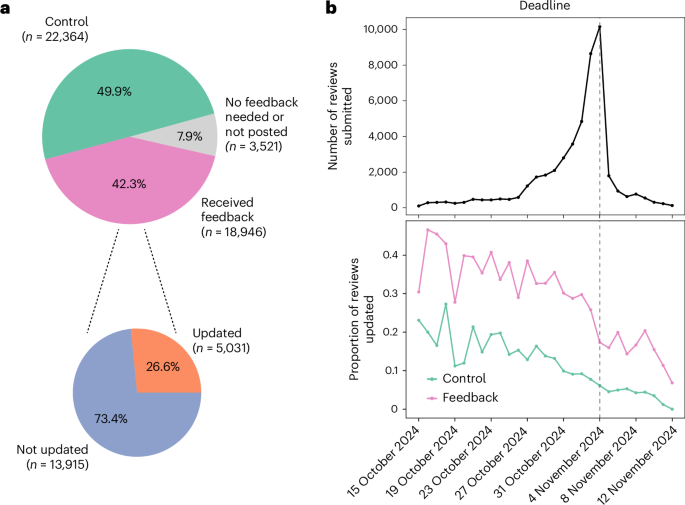

Thakkar, N. et al. Assisting ICLR 2025 reviewers with feedback. Blog post by the ICLR 2025 Review Feedback Agent Team and Program Chairs. ICLR Blog https://blog.iclr.cc/2024/10/09/iclr2025-assisting-reviewers/ (2024).

Rogers, A. & Augenstein, I. What can we do to improve peer review in NLP? In Findings of the Association for Computational Linguistics: EMNLP 2020 (eds Cohn, T., He, Y. & Liu, Y.) 1256–1262 (ACL, 2020).

Rogers, A., Karpinska, M., Boyd-Graber, J. & Okazaki, N. Program chairs’ report on peer review at ACL 2023. In Proc. 61st Annual Meeting of the Association for Computational Linguistics Vol. 1, xl–lxxv (ACL, 2023).

Arns, M. Open access is tiring out peer reviewers. Nature 515, 467 (2014).

Cortes, C. & Lawrence, N. D. Inconsistency in conference peer review: revisiting the 2014 NeurIPS experiment. Preprint at https://doi.org/10.48550/arXiv.2109.09774 (2021).

Claude 3.5 Sonnet (Anthropic, 2024).

Liang, W. et al. Can large language models provide useful feedback on research papers? A large-scale empirical analysis. NEJM AI 1, AIoa2400196 (2024).

Yuksekgonul, M. et al. Optimizing generative AI by backpropagating language model feedback. Nature 639, 609–616 (2025).

Madaan, A. et al. Self-refine: iterative refinement with self-feedback. Adv. Neural Inf. Process. Syst. 36, 46534–46594 (2023).

Hosseini, M. & Horbach, S. P. J. M. Fighting reviewer fatigue or amplifying bias? Considerations and recommendations for use of ChatGPT and other large language models in scholarly peer review. Res. Integr. Peer Rev. 8, 4 (2023).

Liang, W. et al. Monitoring AI-modified content at scale: a case study on the impact of ChatGPT on AI conference peer reviews. In Proc. 41st International Conference on Machine Learning 29575–29620 (ICML, 2024).

Zhang, Y. et al. Siren’s song in the AI ocean: a survey on hallucination in large language models. Computational Linguistics 51, 1373–1418 (2025).

Zhou, J. et al. Instruction-following evaluation for large language models. Preprint at https://doi.org/10.48550/arXiv.2311.07911 (2023).

Liu, R. & Shah, N. B. ReviewerGPT? An exploratory study on using large language models for paper reviewing. Preprint at https://doi.org/10.48550/arXiv.2306.00622 (2023).

Biswas, S., Dobaria, D. & Cohen, H. L. ChatGPT and the future of journal reviews: a feasibility study. Yale J. Biol. Med. 96, 415–420 (2023).

Liang, W. et al. Mapping the increasing use of LLMs in scientific papers. In Proc. 1st Conference on Language Modeling (COLM) (2024).

Shah, N. B. Challenges, experiments, and computational solutions in peer review. Commun. ACM 65, 76–87 (2022).

Price, S. & Flach, P. A. Computational support for academic peer review: a perspective from artificial intelligence. Commun. ACM 60, 70–79 (2017).

Kankanhalli, A. Peer review in the age of generative AI. J. Assoc. Inf. Syst. 25, 76–84 (2024).

Kuznetsov, I. et al. What can natural language processing do for peer review? Preprint at https://doi.org/10.48550/arXiv.2405.06563 (2024).

Leung, T. I., de Azevedo Cardoso, T., Mavragani, A. & Eysenbach, G. Best practices for using AI tools as an author, peer reviewer, or editor. J. Med. Internet Res. 25, e51584 (2023).

Checco, A., Bracciale, L., Loreti, P., Pinfield, S. & Bianchi, G. AI-assisted peer review. Humanit. Soc. Sci. Commun. 8, 25 (2021).

Kousha, K. & Thelwall, M. Artificial intelligence to support publishing and peer review: a summary and review. Learn. Publ. 37, 4–12 (2024).

Goldberg, A. et al. Usefulness of LLMs as an author checklist assistant for scientific papers: NeurIPS’24 experiment. Preprint at https://doi.org/10.48550/arXiv.2411.03417 (2024).

Su, X., Wambsganss, T., Rietsche, R., Neshaei, S. P. & Käser, T. Reviewriter: AI-generated instructions for peer review writing. In Proc. 18th Workshop on Innovative Use of NLP for Building Educational Applications (eds Kochmar, E. et al.) 57–71 (ACL, 2023).

D’Arcy, M., Hope, T., Birnbaum, L. & Downey, D. MARG: multi-agent review generation for scientific papers. Preprint at https://doi.org/10.48550/arXiv.2401.04259 (2024).

GPT-4 Technical Report (OpenAI, 2024).

Goldberg, A. et al. Peer reviews of peer reviews: a randomized controlled trial and other experiments. PLoS ONE 20, e0320444 (2025).

Kocak, B., Onur, M. R., Park, S. H., Baltzer, P. & Dietzel, M. Ensuring peer review integrity in the era of large language models: a critical stocktaking of challenges, red flags, and recommendations. Eur. J. Radiol. Artif. Intell. 2, 100018 (2025).

Ye, R. et al. Are we there yet? Revealing the risks of utilizing large language models in scholarly peer review. Preprint at https://doi.org/10.48550/arXiv.2412.01708 (2024).

Shin, H. et al. Mind the blind spots: a focus-level evaluation framework for LLM reviews. In Proc. Conference on Empirical Methods in Natural Language Processing 35630–35656 (EMNLP, 2025).

Luo, M. et al. Benchmark on peer review toxic detection: a challenging task with a new dataset. Preprint at https://doi.org/10.48550/arXiv.2502.01676 (2025).

Tamkin, A. et al. Clio: privacy-preserving insights into real-world AI use. Preprint at https://doi.org/10.48550/arXiv.2412.13678 (2024).

Saad-Falcon, J. et al. LMUnit: fine-grained evaluation with natural language unit tests. In Findings of the Association for Computational Linguistics 3303–3324 (ACL, 2025).

Prasad, A., Stengel-Eskin, E., Chen, J. C.-Y., Khan, Z. & Bansal, M. Learning to generate unit tests for automated debugging. Preprint at https://doi.org/10.48550/arXiv.2502.01619 (2025).

Charlin, L., Zemel, R. S. & Boutilier, C. A framework for optimizing paper matching. In Proc. 27th Conference on Uncertainty in Artificial Intelligence 11, 86–95 (AUAI Press, 2011).

ICML 2023 Reviewer Tutorial (ICML 2023 Program Committee, 2023).

How to Be a Good Reviewer? Reviewer Tutorial for ICML 2022 (ICML 2022 Program Chairs, 2022).

Last Minute Reviewing Advice (ACL PC Chairs, 2017).

Valdenegro, M. LXCV @ CVPR 2021 Reviewer Mentoring Program: and How to Write Good Reviews. Presentation at LatinX in Computer Vision (LXCV) Workshop, CVPR 2021 (2021).

Rogers, A. ARR Reviewer Guidelines (Association for Computational Linguistics, 2021).

Silbiger, N. J. & Stubler, A. D. Unprofessional peer reviews disproportionately harm underrepresented groups in STEM. PeerJ 7, e8247 (2019).

Fenniak, M. et al. The PyPDF library. https://pypi.org/project/pypdf/ (2024).

Ribeiro, M. T. & Lundberg, S. Testing Language Models (and Prompts) Like We Test Software (Medium, 2023).

Thakkar, N. zou-group/review_feedback_agent: first release. Zenodo https://doi.org/10.5281/zenodo.17903957 (2025).