It makes me glad to say that I’ve grown up during a time when generational updates between graphics cards meant something. Each new generation, we saw double the power, double the cores, and so many more features that we couldn’t believe just how fast things were progressing. This was a time when horsepower was… enough. Buying a faster GPU meant getting more frames, and that was that. Sadly, that relationship feels almost quaint now.

We’ve hit a point where throwing more silicon at the problem doesn’t solve it. It barely contains it. And in that gap between what games demand and what hardware can realistically deliver, AI, which was meant to help, has now quietly taken over.

The “demand” has spiraled out of control

Native graphics have stopped being a realistic target now

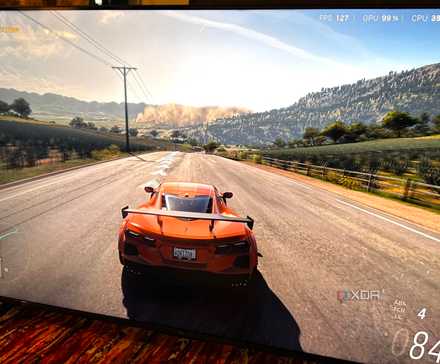

Today, PC gaming’s demands keep stacking up. For starters, 1080p is no longer the gold standard; 1440p and 4K are. 4K alone is four times the pixels of 1080p, and all the while, gamers expect ray tracing, dense foliage, massive open worlds, and triple-digit frame rates on their 144Hz or higher panels. At some point, you have to step back and realize that any reasonably sized chip would simply buckle under the weight of computing all of that in real time, natively.
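To put rough numbers on that pixel jump, here is a minimal Python sketch. It assumes the standard 16:9 dimensions for each resolution name; the function names are purely illustrative.

```python
# Pixel counts for common 16:9 resolutions, compared against 1080p.
# The dimensions are the standard ones usually meant by each label.
RESOLUTIONS = {
    "1080p": (1920, 1080),
    "1440p": (2560, 1440),
    "4K": (3840, 2160),
}


def pixel_count(name):
    """Total pixels in one frame at the named resolution."""
    width, height = RESOLUTIONS[name]
    return width * height


def relative_to_1080p(name):
    """How many times more pixels than a 1080p frame."""
    return pixel_count(name) / pixel_count("1080p")


for name in RESOLUTIONS:
    print(f"{name}: {pixel_count(name):,} pixels, "
          f"{relative_to_1080p(name):.2f}x 1080p")
```

By this count, 1440p works out to roughly 1.78 times the pixels of 1080p, while 4K is exactly four times, and that is before ray tracing or higher frame rates enter the picture.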

And yet, that’s exactly what modern games are built around. The expectation is no longer just visual fidelity, either. Now, we need scale, stability, and spectacle, all at once. Games now have to justify their $70 price tags with sprawling environments and impeccable visuals, while still trying to make sure that they run stably across a wide range of hardware configurations.

That’s exactly where things begin to shift. When it first arrived, upscaling was meant to democratize high-end visuals, helping lower-end cards punch above their weight by rendering internally at a fraction of the output resolution. Half a decade later, it has stopped being a support system: developers are now actively making games with DLSS and similar technologies in mind, effectively treating upscaling as the new native baseline.

As a result, improvements in upscalers’ temporal stability and image quality are now the technological milestones we look forward to, rather than GPUs that boast newer process nodes and more raw horsepower. Native 4K gaming, the promise that Nvidia made at the start of this decade with the RTX 30-series, is in many cases just no longer the goal. Instead, it seems like an illusion we’ve all already moved past.

Brute forcing silicon is no longer the answer

Raw horsepower gains have stopped scaling

The truth is something we’ve known for a while now: silicon alone can only do so much. Moore’s Law is pretty much dead, and we’re seeing that reality play out in real time. GPUs simply aren’t scaling the way they used to with each generation, and that’s not because companies don’t want them to, but because physics (and reality) is starting to push back. We’re hitting core limits, clock limits, thermal ceilings, and increasingly uncomfortable power requirements. Nobody wants a 600W GPU, a power connector that fails under the load, or a graphics card that practically needs a PSU of its own just to function. The idea of brute forcing performance gains generation after generation is running into diminishing returns.

This is not a problem unique to one company, by the way. AMD, Nvidia, and Intel are all converging on the same realization because they all depend on the same kind of leading-edge silicon processes. There’s only so much you can extract from them before efficiency collapses. For years, the answer had been simple enough: shrink the node, add more cores, push higher clocks, and increase memory bandwidth. Now, however, every incremental gain comes at a disproportionately higher cost. The industry is running out of room to do things the old way.

There’s a limit to scaling memory and economics

The point of diminishing returns is now in the rearview mirror

Another constraint that’s quietly tightening in the background is memory. VRAM is no longer a scalable solution, and throwing more of it at the problem was never going to fix things in the first place. Nvidia has already slashed almost all of its GPUs down to a single option per VRAM category in the 50-series, and even those cards haven’t really brought any meaningful increases over the previous generation. The expectation that more VRAM would future-proof performance is, quite frankly, starting to fall apart.

At the same time, VRAM, DRAM, and memory in general are reaching sky-high prices because a significant portion of the global supply is being diverted toward powering AI data centers the size of football stadiums. Now, gaming hardware is straight up competing with an entirely different industry, and looking at the availability and prices of PC parts today, it’s losing.

This creates a strange feedback loop. The same AI models that are helping games run better are also driving up the cost and scarcity of the very resources that GPUs rely on. Scaling memory isn’t the answer. It’s just another ceiling we’re beginning to hit. That’s why it’s simply not surprising that software is now doing the heavy lifting, with upscalers, frame generation, and dynamic resolution scaling being the major talking points at every new GPU launch.

Upscaling and frame generation are no longer optional

DLSS and FSR have forever changed rendering itself

When it first came out, upscaling technology like DLSS was meant to be nothing more than assistance. Two years later, it had become genuinely impressive, and soon enough, ray tracing and DLSS began going hand in hand. Then came frame generation, inserting generated frames between the ones the GPU actually rendered. And now, with technologies pushing toward multi-frame generation at extreme levels like 6x, we’re looking at a pipeline where most of what you see isn’t “traditionally” rendered at all.
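A back-of-the-envelope sketch shows how small the traditionally rendered share can get once upscaling and frame generation stack. This is a deliberately simplified model, not how any vendor accounts for it: it assumes a hypothetical per-axis render scale (0.5 here, chosen for illustration) and treats a 6x multiplier as one rendered frame out of every six displayed. The function name and parameters are made up for this example.

```python
def rendered_pixel_share(axis_scale, frame_multiplier):
    """Fraction of displayed pixels that were rendered conventionally.

    axis_scale: internal render scale per axis (assumed value, 0 < s <= 1).
    frame_multiplier: displayed frames per rendered frame (assumed, >= 1).
    """
    if not 0 < axis_scale <= 1:
        raise ValueError("axis_scale must be in (0, 1]")
    if frame_multiplier < 1:
        raise ValueError("frame_multiplier must be at least 1")
    # Scaling both axes shrinks the pixel count by the square of the scale,
    # and only one in every `frame_multiplier` frames is rendered at all.
    return axis_scale ** 2 / frame_multiplier


share = rendered_pixel_share(axis_scale=0.5, frame_multiplier=6)
print(f"Conventionally rendered share: {share:.1%}")  # 4.2%
```

Under those assumptions, only about one in every 24 displayed pixels comes straight out of the traditional pipeline. The rest is reconstructed or generated from that rendered data, which is why the rendered base still matters.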

At the same time, the industry has been quietly pushing another frontier: refresh rates. Ever-higher refresh rates are becoming part of the conversation, and feeding those displays with native rendering alone at modern visual standards would be absurdly expensive, if not outright impossible. It seems that the industry has collectively realized that rendering every frame, pixel, and lighting calculation natively is simply no longer scalable. This is a decisive shift, because if you look at recent leaps in upscaling tech and how new GPU generations are presented with AI features leading the brochures, you’ll realize that the fundamental question has changed. We’re no longer asking, “How do we render this faster?” but rather, “How little do we actually need to render?”
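The refresh-rate squeeze is easy to see as a per-frame time budget. The sketch below just divides one second by the refresh rate; the list of rates is an illustrative assumption, not taken from any specific display lineup.

```python
def frame_budget_ms(refresh_hz):
    """Milliseconds available to produce each frame at a given refresh rate."""
    if refresh_hz <= 0:
        raise ValueError("refresh_hz must be positive")
    return 1000.0 / refresh_hz


# Illustrative refresh rates; real panels vary.
for hz in (60, 144, 240, 360):
    print(f"{hz}Hz: {frame_budget_ms(hz):.2f} ms per frame")
```

Going from 60Hz to 240Hz cuts the budget from about 16.67 ms to about 4.17 ms per frame, so everything that was natively rendered would have to finish in a quarter of the time.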

AI has become the performance benchmark now

Fake frames will be the only frames in the near future

At this point, we have to come to terms with the fact that AI has become performance itself instead of simply existing to enhance performance. Every time a new GPU drops, benchmarks that don’t include DLSS or FSR feel like nothing more than theory, because nobody actually plays that way. Nvidia itself has reported that over 80% of GeForce RTX players are actively using DLSS. Even GPU marketing has changed, with AI and tensor cores leading the conversation, promising more AI-generated and AI-upscaled visuals instead of purely raster gains.

With FSR 4.1 and DLSS 4.5, especially, we’re now seeing rather aggressive presets with internal resolutions as low as 50% still managing to deliver impressive and stable visuals. Of course, there’s no denying that temporal reconstruction and machine learning models have advanced remarkably, but they are also redefining what actually counts as acceptable rendering for the player base.

AI may be replacing rendering, sure, but it still needs rendering to stand on. Frame generation is about perceived smoothness, so it works best when there’s already a solid base to build on, and it often needs tools like NVIDIA Reflex to manage latency. So, we can talk about “fake frames” all the livelong day, but the truth is that what we’re optimizing isn’t raw output anymore, but rather what the player feels.

So, where does performance go from here?

Once the line between rendered and generated graphics disappears, we’ll have a new normal.

The path forward doesn’t look like the past. It’s not unreasonable to believe that progress will no longer come from doubling cores or chasing higher clocks. Now, it’ll come from redefining what we consider real in the first place.

The line between rendered and generated, between computed and reconstructed, will only continue to blur. And once it disappears completely, we’ll have the new normal, and only memories of what pure raster power, native resolution graphics, and rendered frames used to be.