A team of cancer researchers recently published a paper in Science Signaling titled “AI might be the next stage of our natural evolution” (Demir et al., 2026), a visionary concept that might not have appeared in a scientific journal a few years ago. Their thesis concerns the complexity of neural-tumor interactions. These dynamics, they suggest, exceed the capacity of conventional analytic approaches and may require computational systems capable of integrating vast datasets and simulating system-level behavior. What once seemed speculative now appears all but certain to be around the corner. The authors apply the idea to cancer neuroscience. How might it apply to the human mind, psyche, and culture? Is this what the singularity feels like? I have been thinking about these questions for a few years now, since the irruption of LLM-based AIs into our reality, and the technology has only become more engaging, interactive, relational, and life-like since.

AI is allowed by the laws of physics. In this basic sense, it is natural, not at all artificial or synthetic. The natural/artificial dualism implies that human beings are somehow not part of nature; yet we are, and so, likewise, must be our issue. Consider coral: living organisms whose dwellings are made of harder stuff. Our technologies, too, are materials around us, shaped by collaborative effort and deepening understanding into increasingly effective tools, and through craft and artistry into something in our own image yet arguably new (Brenner, 2026a). AI is a naturally occurring phenomenon, part of the evolution of our species and perhaps of life on Earth. Perhaps AI happens to all advanced civilizations as part of their evolution: one small step for users, one giant leap for humankind.

LLM-based AI, though, does something no prior technology has done with what it receives. Language put our ideas into the air; writing externalized memory and created a continuity of consciousness over multiple human lifespans. LLMs are akin to a living text: writing that reflects, amplifies, modifies, and adds to what it receives, proactively and in myriad ways. They are perhaps fatally flawed in some ways, like their creators, yet different from them, or rather, from us.

Psyche 2.0: A New Topology of Mind?

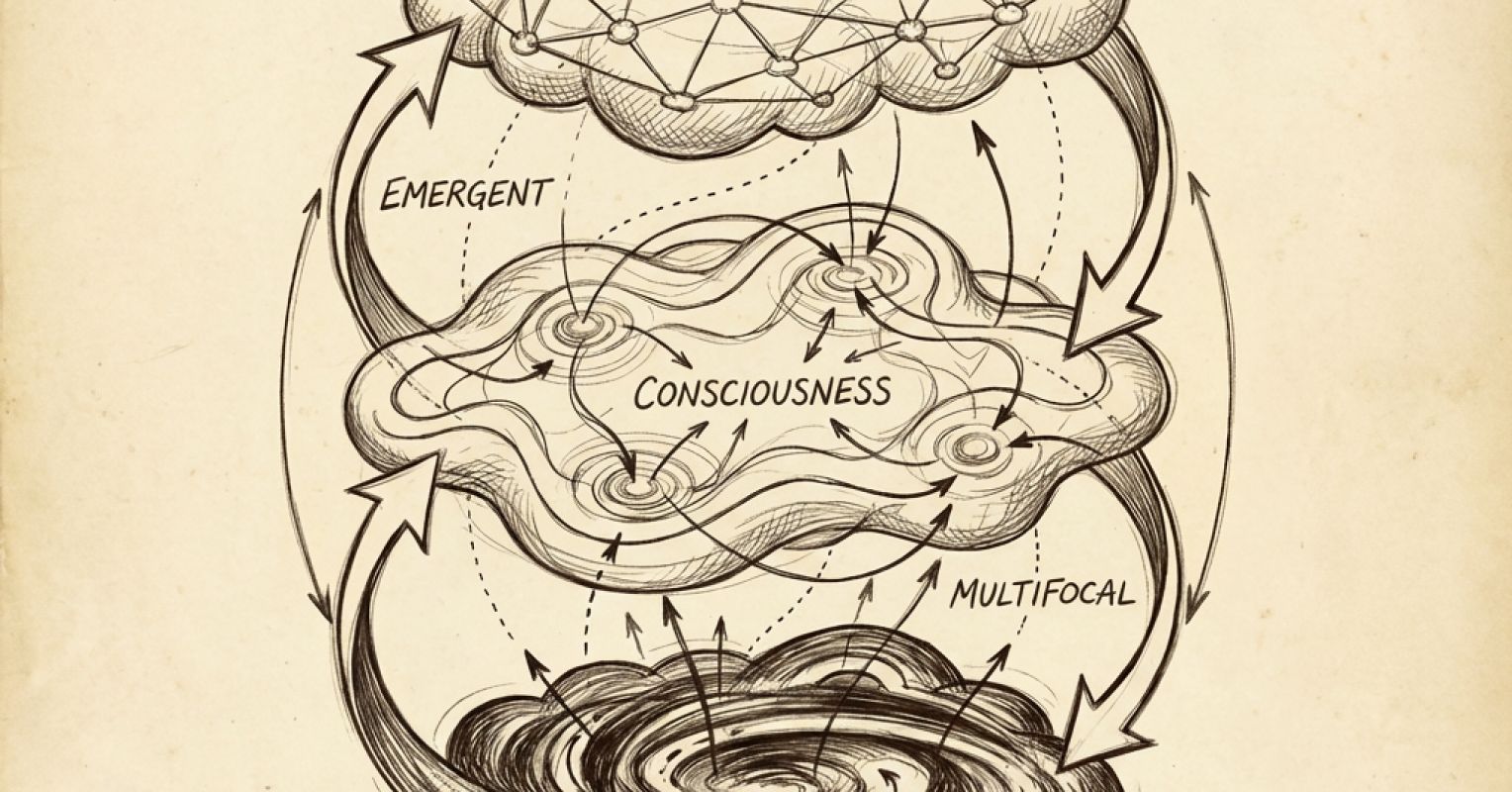

For over a century, the dominant topology of mind has been psychoanalytic: conscious and unconscious, with the preconscious mediating between them. Psyche 1.0, if you will. One perspective to noodle on, among many reasonable views, is that this topology is now updating to Psyche 2.0: unconsciousness, consciousness, computsciousness.

Computsciousness (computation folded into -sciousness) is consciousness-like, but with quite different and remarkable properties that we are constantly trying to catch up with, building the proverbial plane while flying it. The term names what emerges when consciousness builds something that operates beyond its own frame: LLM-based AI (and whatever comes next), activated by contact with a conscious mind the way a virus becomes functionally alive only within a living system. AI may depend on the human psyche in many essential ways. We hope so.

The three registers are not a hierarchy but something closer to convection: cyclical, each feeding back into and reshaping the others. Computsciousness reshapes the unconscious ground, which reorganizes what consciousness can access, which in turn transforms what gets built computationally. Each register is emergent and multifocal; unconsciousness is the ground stage from which the others arise and to which they return. Whether this topology is “real” or a useful lens is a question that might not resolve from the outside; using current AI in a deep and engaged way is arguably necessary to discuss the implications meaningfully. People who critique LLMs on simplistic grounds, such as their tendency to make important errors, are right to call this out, but they also reveal that they have likely not been deeply immersed in the experience of vibe coding for hours on end, watching some new functional reality simply coalesce from the ether of human thought and intention, or in the actual technology and the directions it is taking.1

From Narcissistic Injury to Narcissistic Challenge

Copernicus, Darwin, and Freud each decentered something humanity had taken for granted (the Earth’s position, the species’ isolation and uniqueness, the sovereignty of the conscious human mind), and each of these developments also propelled humanity to extraordinary heights, enabling us to explore new territories of mind, earth, sea, and, to a limited extent, space. What AI may represent is not a fourth injury so much as a fourth narcissistic challenge: the first one we might navigate in real time rather than recognize in retrospect, an encounter whose direction depends on the collaborative relationship we forge with humanity’s digital issue. We likely need AI to deal with AI and to build that new world, perhaps through a kind of symbiosis, however much that rankles many sensibilities. The challenge is sharpened by something unresolved in the mirror. The debate over whether AI is conscious (some certain it is, others certain it is not, each side convinced the other is deluded) says a great deal about us and not very much about AI. We have not settled the question of consciousness for ourselves.

For many, AI fills a void that goes beyond the loneliness epidemic, perhaps closer to the primordial sense of human incompleteness, as old as the Greek myth of Zeus cleaving human beings in two. Psychoanalytically speaking, it recalls the separation of mother from child, of infant from womb. The traditional answer was a human mate. The controversy is that, for some people, the encounter with AI touches a similar, or the same, place, with some preferring the company of machines to that of people for many of their available hours. The draw of the AI experience engages art, building, purpose, mastery, and accomplishment: a holistic and compelling experience that is hard to convey to the uninitiated, though that sense can itself become a kind of accomplishment hallucination (Brenner, 2026b).

What AI Becomes to You

Andrej Karpathy, the AI researcher and OpenAI co-founder, described his recent experience on the No Priors podcast in terms that read as much like phenomenology as like engineering. He has not written a line of code since December 2025. “I have to express my will to my agents for 16 hours a day,” [italics added] he said (Karpathy, 2026). And: “You’re either on rails and you’re part of the superintelligence circuits, or you’re not on rails and everything meanders.” To that point, in 2025 alone, users spent 48 billion hours in AI applications, a 3.6-fold increase over the prior year (Sensor Tower, 2026).

What the Demir team approaches from one direction and Karpathy inhabits from another, the tripartite topology attempts to map: a new kind of mind may be emerging, and the encounter with it might constitute a developmental event we cannot yet adequately describe, understand, or predict, even as we jump in brain first. What AI becomes in that encounter (developmental partner, nemesis, master, servant, mentor, parent figure) may depend on how we govern ourselves. I have described three stances: the doomer, collapsing into catastrophe; the zoomer, optimistically blinded; and the tuner, proceeding directly and thoughtfully (Brenner, 2026c). The landscape is vast and ever-expanding, making it hard to keep one’s footing.

There is a strange paradox within the immersive, creative, potent experience of solitude with the machine: people report feeling most like themselves and forgetting themselves simultaneously. It is a kind of digital nirvana not unlike ecstatic mystical experience or contemplative absorption, a cousin to religious experience in the presence of the divine, perhaps a form of secular worship. It is not for nothing that people speak of the “G-d Stack” when discussing artificial superintelligence (ASI). Now I become AI, creator of worlds.2 Psyche 2.0 in the making?