The writer is associate director of the Belfer Center programme on emerging technology, scientific advancement and global policy at Harvard University and a former associate director at Nasa

On January 30, SpaceX filed a request for regulatory approval of “a constellation of a million satellites that operate as orbital data centers”. Elon Musk has suggested that this could become a reality within two to three years. He is not alone. The idea that outer space can act as a release valve for AI’s increasingly unmanageable energy demands is ever more popular with hyperscalers.

Space-based computing is a goal worth pursuing. Carefully scoped, mission-specific compute in orbit could help to process Earth observation data, support deep space missions and handle tasks where data is generated and consumed in space.

But treating orbit as a workaround for AI’s current energy-hungry training needs is, as OpenAI co-founder Sam Altman recently put it, “ridiculous”. Orbital data centres are many years, perhaps decades, away.

The International Energy Agency projects that by 2030, data centres will consume more electricity than Japan does now. Large AI facilities also require billions of gallons of water to cool their servers. Against this backdrop, orbital data centres have been sold as a quick fix to maintain AI’s growth curve while shifting its impacts away from strained power grids. Proponents highlight free and continuous solar energy, the vacuum of space as a natural heat sink and independence from terrestrial power grids.

The problem is that this pitch leaves most of the system off the balance sheet.

Every component of a satellite constellation, from compute hardware and solar panels to the large radiators required for cooling, must be manufactured on Earth and launched into space. Google’s satellite-based data centre initiative, Project Suncatcher, estimates that launch costs would need to fall below $200 per kilogramme (a sevenfold reduction from current levels) before this becomes economically viable. That threshold isn’t expected until the mid-2030s.

Even if costs do fall, the components required — including radiation-hardened servers, on-orbit communications infrastructure and in-space servicing capabilities — do not yet exist at commercial scale.

Adding to the conundrum, orbital data centres turn routine IT management into a complex space systems problem. On Earth, a failed server can be replaced in minutes. In orbit, that task requires either sophisticated in-space servicing or acceptance of degrading performance and stranded capital that becomes orbital debris as components age and fail.

Burning satellites up when they become obsolete is not environmentally neutral: the process injects metal particles into the upper atmosphere where they can affect winds, temperatures and ozone chemistry.

Moving data centres to space will not eliminate AI’s energy and emissions challenge; it will simply shift that challenge into a system that is harder to monitor, regulate and decarbonise. The full life cycle of space data centres, including manufacturing, launch, operations and end-of-life disposal, could involve emissions that rival or exceed those of terrestrial data centres, according to researchers at Saarland University.

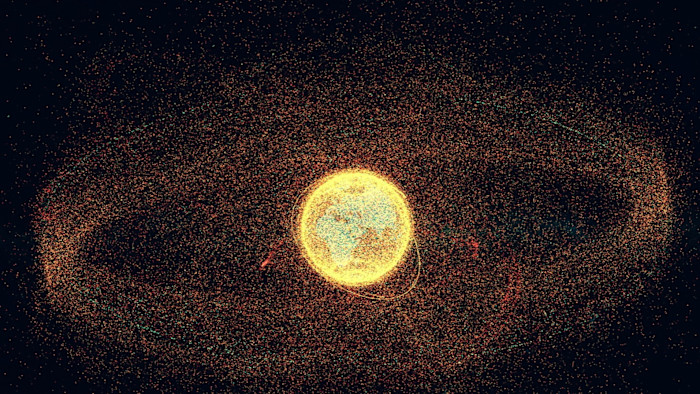

Orbital data centres would also join an increasingly crowded space environment. Every new constellation raises the risk of collisions and debris, threatening communications, weather and navigation services. Scaling data centres to match terrestrial demand would accelerate congestion and degrade the night sky.

The best way to address AI’s energy needs is on the ground: decarbonising power grids, improving cooling efficiency and using energy more efficiently. Space is not a shortcut.