March 31, 2026

By Karan Singh

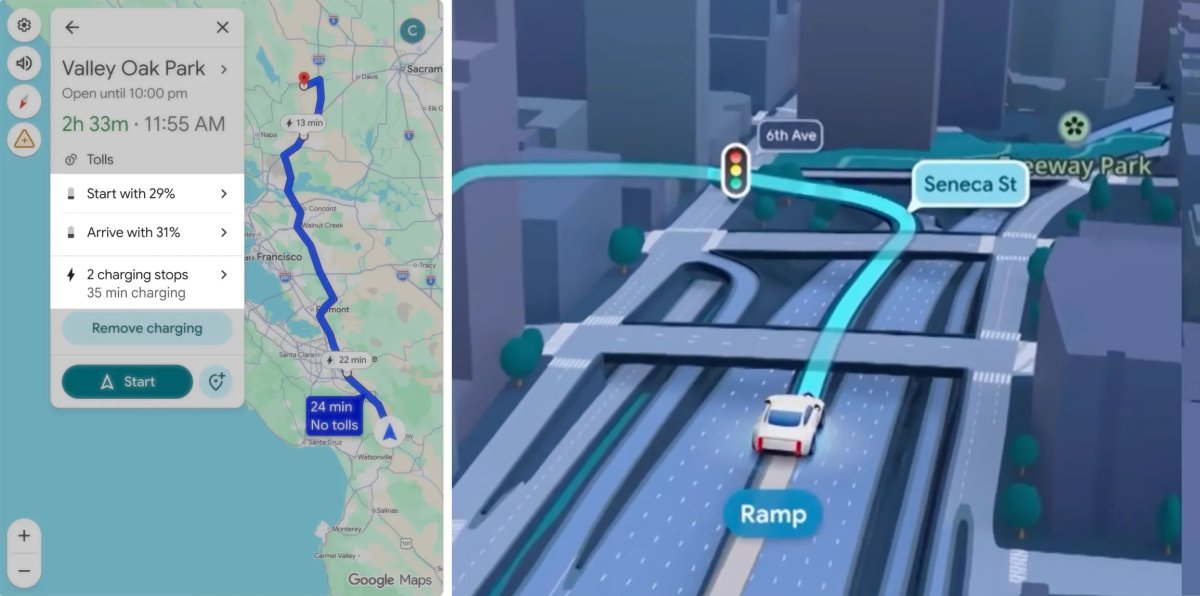

Google recently announced a massive navigation update for electric vehicles, bringing AI-powered trip planning and battery predictions to over 350 car models via Android Auto. This update recommends charging stops and adjusts arrival times based on battery levels, weather, and road elevation. It even lets you set your preferred state of charge on arrival, a feature Tesla recently added in the vehicle but that is still missing from its app.

While Tesla has offered similar functionality natively for years, Google’s massive AI push has many owners wondering if Tesla could leverage this technology to finally fix its own navigation quirks.

Three Lefts Make a Right

If you have driven a Tesla for long enough, you have likely experienced some bizarre routing choices. The native trip planner will occasionally bypass an obvious route, instead leading drivers to take three left turns just to make a right, routing them down strange residential side streets, or even adding an unnecessary loop.

Getting so sick of these FSD navigation problems. I could have swerved back but it wouldn’t be safe to do.

Not even 500 miles and I’ve fixed so many nav mistakes.

FSD is SO good at driving but makes so many routing mistakes it ruins the magic. pic.twitter.com/iG1qbGBx8Q

— Dirty Tesla (@DirtyTesLa) January 14, 2026

When we see Google rolling out advanced AI routing algorithms for Google Maps, it naturally raises the question of whether Tesla can tap into this to improve its own turn-by-turn directions. The short answer is no, and the reason has to do with how Tesla’s software is built.

Why Google’s Update Doesn’t Help

Google’s new update is an incredibly powerful tool for the electric vehicle market. The tech giant is leveraging artificial intelligence and advanced energy models to analyze specific vehicle characteristics like weight and battery capacity. The system then combines that data with real-time mapping information regarding traffic, road elevation, and weather conditions.

This feature provides accurate battery predictions upon arrival, automatically recommends exactly when and where a driver needs to charge, and adjusts estimated arrival times based on those necessary charging stops. For drivers of one of the 350 supported car models, this update democratizes electric-vehicle road trips. It eliminates the need to juggle multiple third-party apps just to find a charger, bringing seamless trip planning to legacy automakers that have historically struggled with native software.
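As a rough illustration of the kind of calculation described above, here is a minimal sketch that estimates arrival state of charge from vehicle weight, battery capacity, road elevation, and weather. Google has not published its model, so every name and coefficient below (`Vehicle`, `Segment`, `weather_factor`, the 60% regeneration figure) is a hypothetical placeholder chosen for illustration only.

```python
from dataclasses import dataclass

G = 9.81  # gravitational acceleration in m/s^2


@dataclass
class Vehicle:
    mass_kg: float         # assumed weight including occupants
    battery_kwh: float     # usable battery capacity
    base_wh_per_km: float  # assumed flat-road consumption in mild weather


@dataclass
class Segment:
    distance_km: float
    elevation_gain_m: float  # positive uphill, negative downhill
    weather_factor: float    # 1.0 = mild; above 1.0 = cold, rain, or headwind


def segment_energy_kwh(v: Vehicle, s: Segment, regen_efficiency: float = 0.6) -> float:
    """Energy used on one segment: flat-road consumption plus climbing energy."""
    rolling = v.base_wh_per_km * s.distance_km * s.weather_factor / 1000.0
    # Potential energy m * g * h in joules, converted to kWh (1 kWh = 3.6e6 J).
    climb = v.mass_kg * G * s.elevation_gain_m / 3.6e6
    if climb < 0:
        # Only part of the downhill energy is recovered (assumed fraction).
        climb *= regen_efficiency
    return rolling + climb


def arrival_soc(v: Vehicle, start_soc: float, route: list[Segment]) -> float:
    """Predicted state of charge (0.0 to 1.0) at the end of the route."""
    used_kwh = sum(segment_energy_kwh(v, s) for s in route)
    return start_soc - used_kwh / v.battery_kwh


car = Vehicle(mass_kg=2000, battery_kwh=75, base_wh_per_km=160)
route = [
    Segment(distance_km=100, elevation_gain_m=500, weather_factor=1.0),
    Segment(distance_km=50, elevation_gain_m=-500, weather_factor=1.2),
]
print(f"Arrival charge: {arrival_soc(car, 0.9, route):.1%}")
```

With these made-up numbers, the car leaves at 90% and arrives at roughly 54%. A real planner would compare that prediction against the driver's preferred arrival charge and insert a charging stop whenever the estimate falls short.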

However, as impressive as this update is for the rest of the automotive industry, it is completely unrelated to the turn-by-turn routing anomalies experienced by Tesla owners. Google is fundamentally solving an energy calculation problem rather than a pathfinding problem. Since Tesla already offers industry-leading native energy predictions and doesn’t offer Android Auto in its vehicles, this new mapping update simply doesn’t affect how your car chooses its turns.

Tesla’s Split Brain

To understand why Tesla cannot simply adopt this new Google update, we have to look at the underlying architecture of the car’s navigation. Many owners assume Tesla uses Google Maps for everything because the visual map displayed on the touchscreen is indeed provided by Google. However, the actual routing engine running behind the scenes is completely separate.

For calculating routes and giving turn-by-turn directions, Tesla uses a highly customized version of an open-source routing engine called Valhalla. This system relies heavily on OpenStreetMap data rather than Google’s proprietary routing algorithms. When your car makes a strange routing decision, it is usually because of a quirk in the OpenStreetMap data or an error in the specific mathematical weights Tesla uses to calculate the fastest path.
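To see how a single bad weight can produce the "three lefts make a right" behavior, consider the toy router below. It is not Valhalla's code or API, just a small Dijkstra search over a hypothetical 3x3 street grid in which each turn type carries a time penalty. The grid, the 10-second edge cost, and the penalty values are all assumptions made for illustration.

```python
import heapq

DIRECTIONS = [(0, 1), (1, 0), (0, -1), (-1, 0)]  # north, east, south, west


def turn_kind(h_in, h_out):
    """Classify the maneuver between two headings on a grid (y axis points north)."""
    if h_in == h_out:
        return "straight"
    if h_in == (-h_out[0], -h_out[1]):
        return "u_turn"
    cross = h_in[0] * h_out[1] - h_in[1] * h_out[0]
    return "left" if cross > 0 else "right"


def find_route(start, heading, goal, size, edge_cost, penalties):
    """Dijkstra over (intersection, heading) states so turns can be priced."""
    queue = [(0.0, start, heading, [start])]
    best = {}
    while queue:
        cost, node, h, path = heapq.heappop(queue)
        if node == goal:
            return cost, path
        if best.get((node, h), float("inf")) <= cost:
            continue
        best[(node, h)] = cost
        for d in DIRECTIONS:
            kind = turn_kind(h, d)
            if kind == "u_turn":
                continue  # U-turns are not allowed in this toy model
            nxt = (node[0] + d[0], node[1] + d[1])
            if not (0 <= nxt[0] < size and 0 <= nxt[1] < size):
                continue
            step = edge_cost + penalties[kind]
            heapq.heappush(queue, (cost + step, nxt, d, path + [nxt]))
    return None


# The car is at (1, 0) heading north; the destination is one right turn away.
tuned = find_route((1, 0), (0, 1), (2, 1), 3, 10,
                   {"straight": 0, "left": 5, "right": 2})
mistuned = find_route((1, 0), (0, 1), (2, 1), 3, 10,
                      {"straight": 0, "left": 5, "right": 100})
print("sensible weights:", tuned)
print("bad right-turn weight:", mistuned)
```

With sensible weights the router simply turns right at the intersection. Once the right-turn penalty is inflated, the cheapest path drives straight through the intersection, circles the block with three left turns, and crosses the same intersection again. The search is working exactly as designed; the weights (or the underlying map data) are what make the result look absurd.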

Google Maps New Visuals

Google has done more than just improve its routing and add better support for EVs. It recently revamped its Maps app to introduce an AI-powered voice assistant, and it is overhauling the app’s visuals with AI as well. However, the latter is still being rolled out slowly.

Steps to Improve Routing

If Tesla wants to fix the weird routing anomalies, it needs to focus on refining its Valhalla routing engine and improving how the system interprets OpenStreetMap data. Tesla could theoretically pay to license Google’s actual turn-by-turn routing API to replace Valhalla, but it deliberately moved away from that approach years ago to maintain total control over its ecosystem and seamlessly integrate the Supercharger network. However, now that Google is calculating and recommending charging stops, Tesla could take a closer look at the system to see whether it meets its needs, instead of continuing to develop its own.

For this to happen, Google’s routing API would need to be highly flexible and allow limiting charging stations to Tesla’s Superchargers, among other features.

Ultimately, Google’s latest AI update is a massive win for drivers of other electric vehicles. For now, its main effect is to bring crucial battery forecasting to legacy automakers.

Tesla Maps and Google Partnership

Tesla’s maps, however, are already highly dependent on Google. Tesla leverages Google APIs for points of interest, reviews, hours of operation, map tiles, satellite imagery, 3D buildings, and even traffic information. While Tesla’s navigation system is created in-house, it relies mostly on Google data. Routing is one of the few items Tesla is creating itself, at least for now.

While Tesla incorporates many Google Maps features, the new visual updates will likely not be among them.


March 31, 2026

By Karan Singh

Tesla recently saw the departure of two significant figures from its product and retail teams. Jose del Corral, who served as Tesla’s Head of Product, and Ryan Torres, the Staff Program Manager for Retail Programs, both left Tesla last week.

This news comes shortly after the departure of Thomas Dmytryk, who played a crucial role in building out Tesla’s OTA update infrastructure, and more recently, worked on the Robotaxi.

Jose del Corral Moves to Crypto

After an impressive nearly eight-year run at Tesla, del Corral announced his next career move on social media. He is officially joining the cryptocurrency exchange platform Coinbase to lead its Customer Experience division.

In his public announcement, del Corral expressed his excitement about the massive industry transition. He noted that very few companies get a chance to help rebuild the financial system from the ground up, adding that it is hard to think of a more important place to be right now. He confirmed he is ready to start building and will be working closely with Coinbase CEO Brian Armstrong and President Emilie Choi.

Losing a Head of Product with nearly eight years of institutional knowledge could leave a temporary gap in Tesla’s product organization. During his long tenure, del Corral helped oversee massive transitions in Tesla’s vehicle lineup and software ecosystems, helping shape the user experience that millions of drivers interact with every day.

Ryan Torres Steps Down

While del Corral was vocal about his next adventure, the departure of Ryan Torres has been much quieter. Torres served as Tesla’s Staff Program Manager for Retail Programs and played a key role in shaping how consumers physically interact with the brand and its showrooms.

Torres was also one of the key customer experience managers and was consistently willing to help resolve customer issues spotted on X. Losing his personal touch regarding service and outreach will undoubtedly be a significant loss for Tesla.

Torres has not yet made a public announcement regarding where he is heading next or what projects he will be taking on. However, his professional LinkedIn profile was recently updated to reflect March 2026 as the official end date for his current position at Tesla rather than marking his role as ongoing.

Executive turnover is a natural part of the fast-paced technology and automotive industries, but losing veteran leaders is always notable. As Tesla pushes forward with its highly ambitious 2026 product roadmap, the company will likely be looking to fill these critical leadership and retail roles quickly to maintain its momentum.

March 30, 2026

By Karan Singh

For owners of Tesla vehicles equipped with HW3, the wait for the latest FSD updates has become tense. FSD v12.6.4, released about 13 months ago, was the last update for Tesla’s legacy hardware, and it was an incremental update to previous versions within the same major build.

As Tesla’s end-to-end neural networks grow increasingly massive and complex, the AI team is struggling to fit its most capable versions of FSD, like v14, onto the older computers. Tesla has said it intends to prepare an FSD v14-lite build for HW3 vehicles in Summer 2026, but FSD development has slowed down drastically in recent months due to the focus on Robotaxi and Unsupervised FSD.

That leaves little time for the team to work on optimizing a modern build for legacy vehicles. However, a recent breakthrough from NVIDIA in the world of Large Language Models (LLMs) might just hold the conceptual key to how Tesla can keep HW3 highly capable without completely lobotomizing FSD.

The HW3 Bottleneck: It’s All About Memory

To understand the solution, we have to understand the bottleneck. While HW3 has less raw computational power than the newer AI4 hardware, its biggest limiting factor for modern AI is actually memory.

When you run a massive neural network, it requires a significant amount of working memory to function in real time. In LLMs like ChatGPT, this working memory is called the KV (Key-Value) cache, which stores the context of your conversation so the AI doesn’t have to re-read the entire chat history for each new prompt.
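To get a feel for how quickly this working memory grows, the sketch below applies the standard sizing rule for a transformer KV cache: one key vector and one value vector per attention head, per layer, per token. The model dimensions used here (32 layers, 32 heads, 128 values per head, 16-bit storage) are illustrative assumptions and do not describe any specific ChatGPT or Tesla model.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, tokens, bytes_per_value=2):
    """Size of a KV cache for one sequence, in bytes."""
    # Factor of 2: every layer stores both a key tensor and a value tensor.
    return 2 * layers * kv_heads * head_dim * tokens * bytes_per_value


for tokens in (1024, 4096, 16384):
    gib = kv_cache_bytes(layers=32, kv_heads=32, head_dim=128, tokens=tokens) / 2**30
    print(f"{tokens:>6} tokens of context -> {gib:.2f} GiB of cache")
```

The cache scales linearly with the amount of context retained, so quadrupling the history quadruples the memory bill. In this hypothetical configuration, 4,096 tokens already occupy 2 GiB before counting the model's weights.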

Tesla’s FSD operates on a very similar principle. The car utilizes spatial-temporal memory to remember the driving context over time. If a pedestrian walks behind a parked delivery truck, the car’s temporal memory tracks that the pedestrian is still there, even if the cameras can no longer see them. As FSD gets smarter, this temporal memory cache grows larger, quickly exhausting the limited RAM available on the HW3 computer.

NVIDIA’s 20x Compression Breakthrough

This is where NVIDIA’s latest innovation comes in. As reported by VentureBeat last week, NVIDIA’s researchers have introduced a new technique that shrinks the memory footprint of an LLM’s working cache by a staggering 20x.

The most important part is that they did it without changing the model’s actual weights.

The technique, called KV Cache Transform Coding (KVTC), borrows a concept from classical media compression formats like JPEG. Instead of permanently deleting information, the algorithm identifies the most critical components of the working memory and compresses the rest on the fly.

Previously, to fit massive AI models onto constrained hardware, developers had to permanently alter the model through “quantization” (storing its weights at lower precision) or “pruning” (literally cutting out neural pathways). While this saves space, it often degrades the AI’s intelligence.

NVIDIA’s new approach avoids this entirely. Because it aggressively compresses only the working memory during inference, the LLM keeps its original weights and loses less than 1% in accuracy, all while using a fraction of the hardware memory.
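The JPEG analogy can be made concrete with a tiny transform-coding example. The sketch below is not NVIDIA's KVTC algorithm; it swaps in a plain discrete cosine transform (the transform JPEG itself uses) on a made-up row of numbers standing in for cached values. The function names, the quantization step, and the number of coefficients kept are all assumptions for illustration.

```python
import math


def dct(x):
    """Orthonormal DCT-II: re-express the signal as cosine coefficients."""
    n = len(x)
    return [
        math.sqrt((1 if k == 0 else 2) / n)
        * sum(v * math.cos(math.pi / n * (i + 0.5) * k) for i, v in enumerate(x))
        for k in range(n)
    ]


def idct(c):
    """Inverse of dct() (orthonormal DCT-III)."""
    n = len(c)
    return [
        sum(
            math.sqrt((1 if k == 0 else 2) / n) * ck
            * math.cos(math.pi / n * (i + 0.5) * k)
            for k, ck in enumerate(c)
        )
        for i in range(n)
    ]


def compress(x, step, keep):
    """Keep the `keep` largest coefficients and round them to multiples of `step`."""
    coeffs = dct(x)
    ranked = sorted(range(len(coeffs)), key=lambda k: abs(coeffs[k]), reverse=True)
    return len(x), {k: round(coeffs[k] / step) for k in ranked[:keep]}


def decompress(n, quantized, step):
    """Rebuild an approximation of the original signal."""
    coeffs = [0.0] * n
    for k, q in quantized.items():
        coeffs[k] = q * step
    return idct(coeffs)


# A smooth, made-up signal standing in for one row of cached activations.
signal = [math.sin(i / 5) + 0.5 * math.cos(i / 3) for i in range(32)]
n, packed = compress(signal, step=0.05, keep=8)
restored = decompress(n, packed, step=0.05)
worst = max(abs(a - b) for a, b in zip(signal, restored))
print(f"stored {len(packed)} small integers instead of {n} floats")
print(f"largest reconstruction error: {worst:.4f}")
```

The point of the exercise is that nothing about the model changes: the stored data is re-expressed in a form where most of the information sits in a few coefficients, and only those are kept at reduced precision. A production system would add an entropy-coding stage and tune the transform to the data, which is where much larger savings come from.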

Applying the JPEG Method to Neural Networks

While NVIDIA’s research is focused on text-based LLMs, the underlying math and architecture could potentially be adapted for the vision-based AI running in your Tesla.

If Tesla’s Autopilot engineering team applies a similar dynamic memory sparsification or transform coding to FSD’s spatial-temporal memory, the results for HW3 could be game-changing. By highly compressing the “video memory” of the car’s recent surroundings in real time, Tesla could drastically reduce the total RAM required to run the software.

Why does this matter? Because freeing up that memory cache means Tesla wouldn’t have to shrink the core intelligence of the neural network to make it fit.

Instead of delivering a heavily pruned v14-lite that removes millions of parameters and degrades the car’s driving capability, Tesla could ship a much more capable version of the v14 model to HW3. The car would still be running the highly advanced, end-to-end driving logic; it would just be utilizing a highly compressed, ultra-efficient JPEG-style temporal memory to stay within the hardware’s limits.

Squeezing the Silicon

There is no denying that HW3 is aging silicon. Eventually, the hardware will reach a hard ceiling where it simply cannot process the data fast enough to keep up with the demands of unsupervised autonomy.

However, NVIDIA’s KVTC breakthrough shows that the AI industry is finding radical new ways to optimize software inference without needing bigger, more expensive chips. As Tesla races to unify its fleet on the v14 architecture, advanced memory compression techniques like these could be how the company squeezes every last drop of capability out of its legacy hardware until the HW3 upgrade happens.