April 7, 2026

By Nehal Malik

Tesla officially started releasing Full Self-Driving (Supervised) v14.3 on Tuesday. This major update, which CEO Elon Musk has previously called “the last big piece” of the self-driving puzzle, is now rolling out to early public testers. It follows a brief internal testing period where employees spent the last week validating the build.

According to the official release notes, v14.3 introduces a “completely rewritten AI compiler and runtime from the ground up with MLIR.” This technical overhaul is a big deal for the average driver because it results in a 20% faster reaction time for the vehicle. In the world of AI, speed is safety, and this faster processing allows the car to make split-second decisions with much more confidence.
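To make the “speed is safety” point concrete, the sketch below computes how far a car travels before it begins to respond. Tesla has not published absolute latency figures. The 100 ms baseline and the 30 m/s (about 67 mph) speed are illustrative assumptions, and “20% faster” is read here as a 20% cut in reaction latency.

```python
def distance_during_latency(speed_mps: float, latency_s: float) -> float:
    """Distance in meters covered before the car starts to react."""
    return speed_mps * latency_s


# Illustrative assumptions only; these are not figures from Tesla's release notes.
SPEED_MPS = 30.0                                        # roughly 67 mph
BASELINE_LATENCY_S = 0.100                              # hypothetical pre-v14.3 latency
IMPROVED_LATENCY_S = BASELINE_LATENCY_S * (1 - 0.20)    # 20% lower latency

before = distance_during_latency(SPEED_MPS, BASELINE_LATENCY_S)
after = distance_during_latency(SPEED_MPS, IMPROVED_LATENCY_S)
print(f"before: {before:.2f} m, after: {after:.2f} m, saved: {before - after:.2f} m")
```

Under these assumptions, the car starts reacting about 0.6 m sooner at highway speed. The saving scales linearly with both speed and the baseline latency.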

Fleet Learning and 3D Geometry

The most significant shift in v14.3 is the introduction of vehicle-to-fleet communication and reasoning. Tesla is now using its global fleet to source “infrequent events” and “hard RL examples” to train the neural network. This means your car is effectively learning from the most difficult scenarios encountered by millions of other Teslas, such as complex intersections with compound lights, curved roads, and even the behavior of small animals.
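Tesla has not described how its fleet decides which events to send back. The general idea of mining rare and difficult examples can still be sketched as a filter: keep a clip if its scenario type is uncommon in the existing training set, or if the current model handled it poorly. The `Clip` fields, the rarity cutoff, and the loss threshold below are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Clip:
    scenario: str       # hypothetical tag, e.g. "compound_light", "curved_road", "small_animal"
    model_loss: float   # how badly the current model did on this clip (higher = harder)


def select_for_training(candidates: list, training_counts: Counter,
                        max_count: int = 100, loss_threshold: float = 2.0) -> list:
    """Keep clips that are rare in the training set or hard for the current model."""
    selected = []
    for clip in candidates:
        rare = training_counts[clip.scenario] < max_count   # Counter returns 0 for unseen tags
        hard = clip.model_loss >= loss_threshold
        if rare or hard:
            selected.append(clip)
    return selected


counts = Counter({"highway_cruise": 50_000, "small_animal": 12})
clips = [Clip("highway_cruise", 0.3), Clip("small_animal", 0.4), Clip("highway_cruise", 3.1)]
print([c.scenario for c in select_for_training(clips, counts)])
```

A routine highway clip that the model already handles well is dropped. The rare animal encounter and the unusually hard highway clip are kept.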

The vision encoder has also been upgraded, which we correctly predicted would strengthen 3D geometry and traffic sign understanding. This allows the car to better “see” objects that are hanging or leaning into the road, like low tree branches or construction equipment. The release notes also mention better performance in low-visibility scenarios, potentially improving how the system handles bad weather.

For Cybertruck owners, there is extra good news: the release notes are identical to those for the Model Y, meaning the Cybertruck is finally receiving FSD parity with Tesla’s mainstream models. FSD v14.3 also brings the “Parked Blind Spot Warning” feature to the Cybertruck, which alerts passengers to approaching traffic, cyclists, or pedestrians so they don’t open a door into their path.

Smarter Parking, But No Banish

While the update is packed with driving improvements, some fans might be disappointed to see that Actually Smart Summon (ASS) didn’t get any specific love. There is also still no sign of “Banish,” the fabled feature that would allow your Tesla to drop you off and then autonomously find its own parking spot.

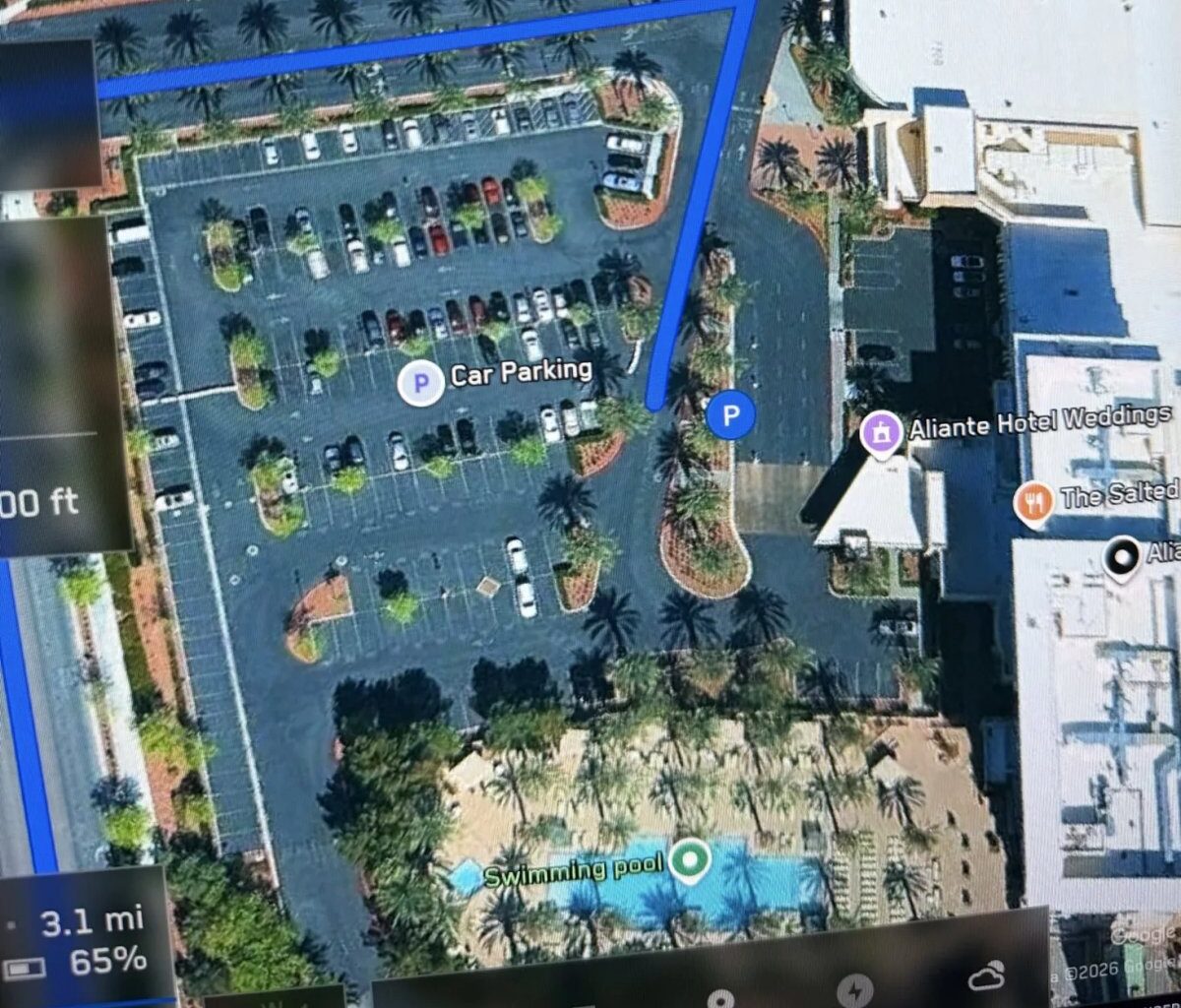

However, v14.3 does lay the groundwork for Banish with “increased decisiveness of parking spot selection and maneuvering.” The car is now much better at predicting parking spots, which will appear on the map with a dedicated “P” icon. It has also improved its ability to recover from “temporary system degradations” without needing the driver to take over, a key requirement for a truly driverless parking experience.

Potholes and Better Monitoring on the Horizon

The release notes also give us a rare “Upcoming Improvements” section, which will likely roll out in point releases over the coming weeks. One of the most requested features, pothole avoidance, is officially on the list. Tesla also plans to expand AI reasoning to all driving behaviors, moving beyond just destination handling, and significantly improve the driver monitoring system.

The new monitoring tech will feature higher accuracy in variable lighting and better eye gaze tracking, even for drivers wearing sunglasses. This suggests Tesla is getting very close to allowing longer periods of “hands-off” driving while ensuring the driver is still paying attention.

With v14.3, the dream of a truly autonomous Robotaxi network feels closer than ever. Tesla is no longer just writing code; it is teaching a global brain to drive.

April 7, 2026

By Nehal Malik

Tesla’s ambitious quest to control its own silicon destiny just gained a massive ally. Intel has officially joined the “Terafab” project, a joint venture between Tesla, SpaceX, and xAI aimed at building the most advanced semiconductor manufacturing complex in human history. The partnership was confirmed following a weekend meeting between Elon Musk and Intel CEO Lip-Bu Tan at Intel’s campus.

In an announcement on X, Intel expressed pride in joining the effort to “refactor silicon fab technology.” The company noted that its ability to fabricate and package ultra-high-performance chips at scale will be key to reaching Terafab’s goal of producing 1 terawatt (TW) of compute per year.

Completing the Chipmaking Trifecta

For years, Tesla has relied on outside foundries to produce the brains for its vehicles. Currently, the company works with TSMC and Samsung, even recently striking a massive $16 billion deal with the latter for AI6 chip production. By bringing Intel into the fold, Musk has effectively teamed up with the three biggest names in the industry to ensure his companies never face a supply bottleneck again.

The Terafab, located just outside Giga Texas in Austin, is designed to be an “Advanced Technology Fab.” Unlike traditional factories that might just make one part of a chip, this facility aims to handle logic, memory, and advanced packaging all under one roof. This setup allows for a cycle of “recursive improvement,” where engineers can make a mask, print a chip, and test it in a matter of days rather than months.

Powering Robots and Space Data Centers

The scale of the Terafab is hard to wrap your head around. During the official launch event last month, Musk explained that the combined output of all existing fabs worldwide amounts to only about 2% of his ultimate compute goal. To support 1 billion Optimus robots and a multi-planet civilization, he argued, humanity needs a massive leap in hardware production.

The project is split into two primary facilities. One will focus on terrestrial AI, producing the AI5 and AI6 chips that power Full Self-Driving and the Optimus humanoid robot. The second facility is even more futuristic: it will produce D3 chips designed specifically for orbital data centers. These space-based computers will be launched by SpaceX’s Starship and powered by 24/7 solar energy, bypassing the power constraints of the local electrical grids on Earth.

From Concept to Construction

Tesla has already started the hiring process for specialized talent in Austin to oversee the factory design and construction. For Intel, this partnership is a major win for its “Intel Foundry” business, which has been working to prove it can support the world’s most demanding customers after falling behind in the AI race.

“Elon has a proven track record of re-imagining entire industries. This is exactly what is needed in semiconductor manufacturing today,” said Intel CEO Lip-Bu Tan. As Tesla pivots from being just a car company to an AI and robotics powerhouse, controlling the silicon stack is no longer optional — it’s the only way to reach the era of “Sustainable Abundance” that Musk envisions.

April 7, 2026

By Karan Singh

Tesla recently published a highly detailed patent that explains the inner workings of its vision-based occupancy network. The patent is titled Artificial Intelligence Modeling Techniques For Vision-based Occupancy Determination and was officially published on March 12, 2026.

Credited to a team of engineers that includes Ashok Elluswamy, the document provides a deep dive into how Tesla uses artificial intelligence to understand the physical world without relying on radar or LiDAR.

Understanding the Voxel Grid

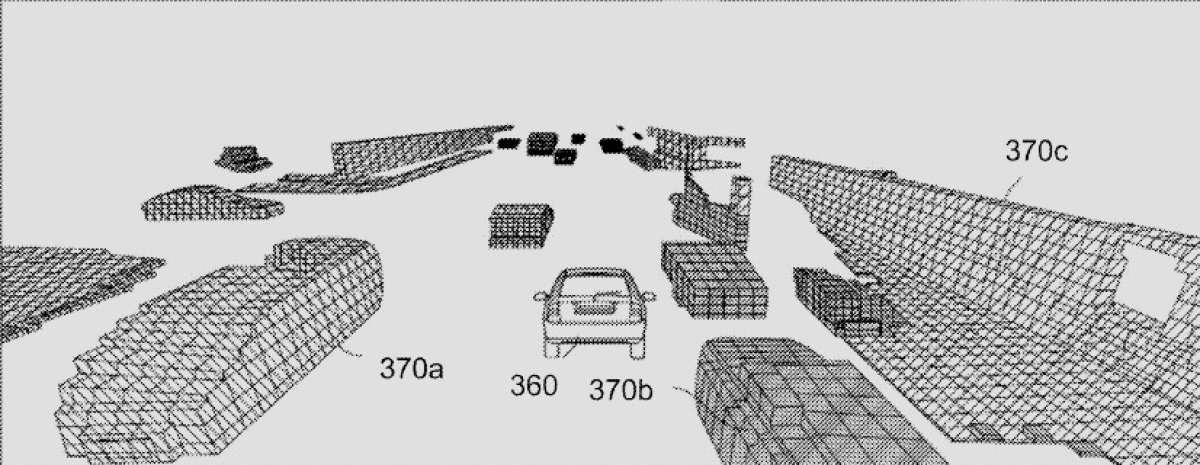

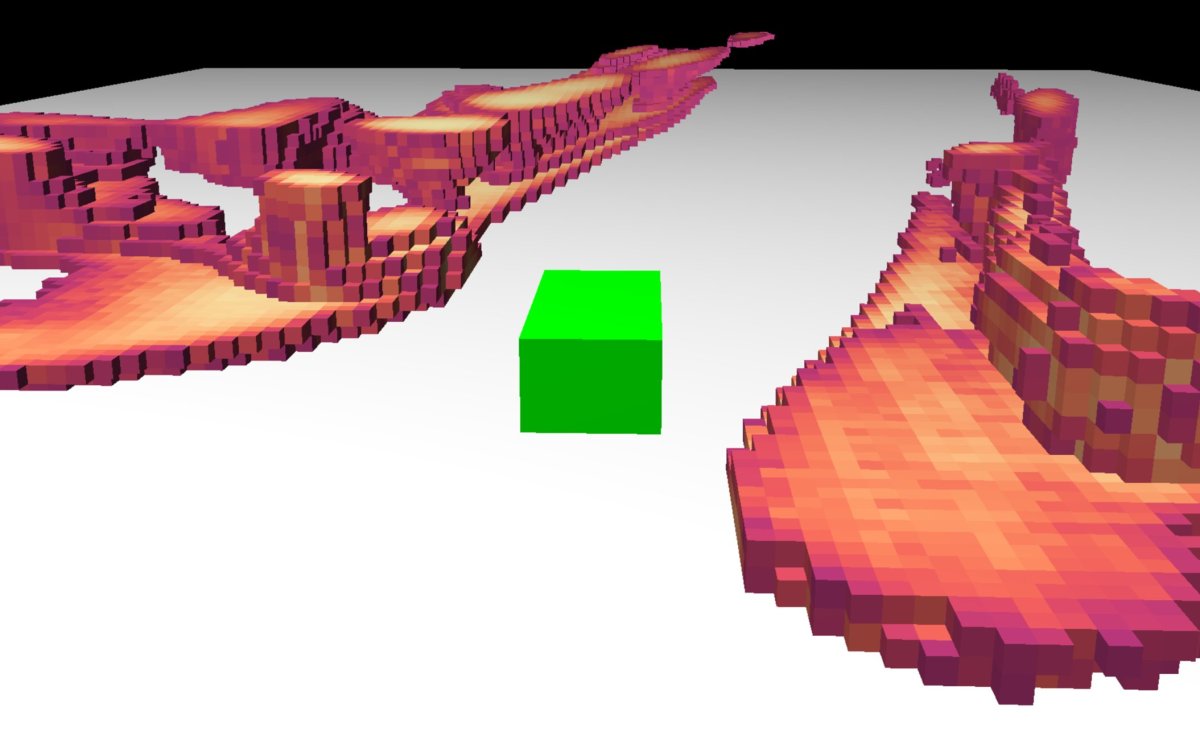

The core of Tesla’s occupancy network revolves around voxels. A voxel is essentially a three-dimensional pixel: a small cube of space within a volumetric grid surrounding the vehicle. To build this grid, the artificial intelligence model ingests image data from the vehicle’s eight exterior cameras. The system then executes the model to predict whether each voxel is occupied by an object having mass.
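As a rough illustration of the voxel idea, the sketch below divides the space around the car into a regular grid of 33 cm cubes, the default size the patent describes. It then maps a 3D point, given in meters relative to the vehicle, to the voxel that contains it. The grid extent, the `VoxelGrid` class, and its methods are hypothetical. In FSD, occupancy comes from a neural network fed by the cameras, not from hand-marked points.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class VoxelGrid:
    """Hypothetical occupancy grid with the ego vehicle at the origin (meters)."""
    voxel_size: float = 0.33   # default edge length described in the patent
    half_extent: float = 20.0  # grid covers -20 m..+20 m in x and y (assumption)
    height: float = 4.0        # grid covers 0 m..4 m in z (assumption)
    occupied: set = field(default_factory=set)  # indices of occupied voxels

    def index_of(self, x: float, y: float, z: float) -> tuple[int, int, int] | None:
        """Return the (i, j, k) index of the voxel containing a point, or None if outside."""
        if not (-self.half_extent <= x < self.half_extent
                and -self.half_extent <= y < self.half_extent
                and 0.0 <= z < self.height):
            return None
        # Shift so the grid's minimum corner sits at index 0, then bucket by voxel size.
        return (int((x + self.half_extent) // self.voxel_size),
                int((y + self.half_extent) // self.voxel_size),
                int(z // self.voxel_size))

    def mark_occupied(self, x: float, y: float, z: float) -> None:
        """Record a point as occupied (in FSD this would be the network's prediction)."""
        idx = self.index_of(x, y, z)
        if idx is not None:
            self.occupied.add(idx)

    def is_occupied(self, x: float, y: float, z: float) -> bool:
        return self.index_of(x, y, z) in self.occupied


grid = VoxelGrid()
grid.mark_occupied(5.0, 1.2, 0.5)          # e.g. a point on a parked car's bumper
print(grid.is_occupied(5.05, 1.25, 0.55))  # a nearby point in the same 33 cm voxel
```

Storing only occupied indices in a set keeps memory proportional to what is actually around the car rather than to the full grid volume.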

Because labeling millions of 3D data points manually would be impossibly time-consuming, the patent notes that Tesla relies heavily on unsupervised training methods to train these models at scale.

Variable Resolution and Sub-Voxels

One of the most interesting details revealed in the patent is how Tesla manages computing power by dynamically adjusting the size of these voxels. The default voxel measures 33 centimeters along each edge. This size is generally acceptable for objects located far away or outside the immediate driving surface.

However, FSD can reduce the voxel size to 10 centimeters for areas that are occupied and within a threshold distance from the vehicle. This allows for much higher granularity where it matters most. The neural networks can even predict partial occupancy by dividing occupied spaces into smaller sub-voxels.
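The resolution rule above can be sketched as a small function. It uses the 33 cm default, but drops to 10 cm for voxels that are both occupied and within a threshold distance of the car. The article does not give the patent’s threshold, so the 10 m value is a placeholder, and the function names are hypothetical. A second function shows one simple way to express partial occupancy: the fraction of a voxel’s sub-voxels that are occupied.

```python
from __future__ import annotations

import math

DEFAULT_VOXEL_M = 0.33   # default edge length from the patent
FINE_VOXEL_M = 0.10      # finer edge length for nearby occupied space
NEAR_THRESHOLD_M = 10.0  # hypothetical; the threshold distance is not stated


def voxel_size_for(center: tuple[float, float, float], occupied: bool) -> float:
    """Pick a voxel edge length from occupancy and distance to the ego vehicle at the origin."""
    distance = math.dist(center, (0.0, 0.0, 0.0))
    if occupied and distance <= NEAR_THRESHOLD_M:
        return FINE_VOXEL_M
    return DEFAULT_VOXEL_M


def partial_occupancy(sub_voxels: list[bool]) -> float:
    """Fraction (0.0 to 1.0) of a voxel's sub-voxels that are occupied."""
    if not sub_voxels:
        raise ValueError("need at least one sub-voxel")
    return sum(sub_voxels) / len(sub_voxels)


# A curved fender edge might fill 3 of the 8 sub-voxels in a 2x2x2 split.
print(voxel_size_for((3.0, 1.0, 0.5), occupied=True), partial_occupancy([True] * 3 + [False] * 5))
```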

Sub-voxels let FSD capture the shape of curved objects more accurately. The analytics server can also use trilinear interpolation to estimate the occupancy status of any specific point within a voxel.
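Trilinear interpolation is a standard technique. Given values at the eight corners of a cell, it blends them according to the query point’s fractional position along x, y, and z. The minimal sketch below assumes occupancy values are stored at voxel corners, which is an assumption for illustration; the patent’s exact formulation may differ.

```python
from __future__ import annotations


def lerp(a: float, b: float, t: float) -> float:
    """Linear interpolation between a and b for t in [0, 1]."""
    return a + (b - a) * t


def trilinear(c: dict[tuple[int, int, int], float], tx: float, ty: float, tz: float) -> float:
    """Interpolate occupancy inside one cell.

    c maps each corner (i, j, k), with i, j, k in {0, 1}, to its occupancy value.
    tx, ty, tz are the query point's fractional offsets within the cell, each in [0, 1].
    """
    # Interpolate along x on the four cell edges parallel to the x axis.
    c00 = lerp(c[(0, 0, 0)], c[(1, 0, 0)], tx)   # y=0, z=0
    c10 = lerp(c[(0, 1, 0)], c[(1, 1, 0)], tx)   # y=1, z=0
    c01 = lerp(c[(0, 0, 1)], c[(1, 0, 1)], tx)   # y=0, z=1
    c11 = lerp(c[(0, 1, 1)], c[(1, 1, 1)], tx)   # y=1, z=1
    # Then along y on each z face, and finally along z.
    c0 = lerp(c00, c10, ty)
    c1 = lerp(c01, c11, ty)
    return lerp(c0, c1, tz)


# Corners on the x=1 face are occupied (1.0); corners on the x=0 face are free (0.0).
corners = {(i, j, k): float(i) for i in (0, 1) for j in (0, 1) for k in (0, 1)}
print(trilinear(corners, 0.25, 0.5, 0.9))
```

Because the example cell changes only along x, the result depends only on `tx`. A point a quarter of the way across the cell gets an occupancy estimate of 0.25.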

Temporal Fusion and 3D Semantics

Tesla’s AI does not just look at static frames in isolation. The artificial intelligence model uses a transformer to aggregate the 2D image data into a unified 3D representation. It then fuses this current 3D space with representations from previous timestamps. Combining spatial and temporal data in this way allows the network to calculate occupancy flow, which indicates the velocity of moving voxels.
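Occupancy flow is essentially a per-voxel velocity. The sketch below shows the simplest version of that idea: a finite difference of a voxel cluster’s centroid between two timestamps. According to the patent, the network derives flow from fused spatial and temporal features, so this explicit differencing is only a simplified stand-in that illustrates what the output means. The function names are hypothetical.

```python
Point = tuple[float, float, float]


def centroid(points: list[Point]) -> Point:
    """Mean position (meters) of a cluster of occupied voxel centers."""
    n = len(points)
    return (sum(p[0] for p in points) / n,
            sum(p[1] for p in points) / n,
            sum(p[2] for p in points) / n)


def occupancy_flow(prev: list[Point], curr: list[Point], dt: float) -> Point:
    """Approximate velocity (m/s) of a voxel cluster observed dt seconds apart."""
    if dt <= 0:
        raise ValueError("dt must be positive")
    (x0, y0, z0), (x1, y1, z1) = centroid(prev), centroid(curr)
    return ((x1 - x0) / dt, (y1 - y0) / dt, (z1 - z0) / dt)


# A car's voxels shift 1.5 m forward between two frames 0.1 s apart.
prev = [(10.0, 0.0, 0.5), (11.0, 0.0, 0.5)]
curr = [(11.5, 0.0, 0.5), (12.5, 0.0, 0.5)]
print(occupancy_flow(prev, curr, 0.1))
```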

Finally, FSD applies 3D semantic data to identify what the object actually is. It can distinguish whether a group of occupied voxels represents a moving car, a static building, or a street curb. The system is designed to prioritize certain semantic shapes. For instance, a moving vehicle near the ego vehicle (the Tesla itself) will be analyzed much more thoroughly than a static building located far off the roadway.
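The prioritization described above can be illustrated with a toy scoring function that ranks voxel clusters by semantic class, motion, and distance. The class weights, the distance falloff, and the `priority` function are invented for illustration. The article only says that nearby moving vehicles receive more scrutiny than distant static buildings.

```python
from dataclasses import dataclass

# Hypothetical weights; not taken from the patent.
CLASS_WEIGHT = {"vehicle": 1.0, "pedestrian": 1.0, "curb": 0.5, "building": 0.2}


@dataclass
class Cluster:
    label: str          # semantic class assigned to a group of occupied voxels
    distance_m: float   # distance from the ego vehicle
    speed_mps: float    # magnitude of the cluster's occupancy flow


def priority(c: Cluster) -> float:
    """Higher score means the cluster deserves more detailed analysis."""
    motion_bonus = 2.0 if c.speed_mps > 0.5 else 1.0   # moving objects count double
    proximity = 1.0 / (1.0 + c.distance_m / 10.0)       # decays with distance
    return CLASS_WEIGHT.get(c.label, 0.5) * motion_bonus * proximity


clusters = [
    Cluster("building", distance_m=80.0, speed_mps=0.0),
    Cluster("vehicle", distance_m=6.0, speed_mps=12.0),
    Cluster("curb", distance_m=2.0, speed_mps=0.0),
]
ranked = sorted(clusters, key=priority, reverse=True)
print([c.label for c in ranked])
```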

Powering Vehicles and Optimus

All of this data is continuously aggregated into a queryable dataset. FSD can constantly query this dataset to receive occupancy statuses and make real-time navigational decisions. Additionally, this same dataset is used to generate the 3D environmental map displayed on the user interface inside the vehicle.
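A queryable occupancy dataset can be sketched as a sparse map from voxel index to a record holding an occupancy probability and a semantic label. Both a planner and the on-screen 3D view can read from such a map. The `OccupancyStore` class, its fields, and the 0.5 occupancy cutoff below are hypothetical.

```python
from __future__ import annotations

import math
from dataclasses import dataclass


@dataclass
class VoxelRecord:
    probability: float   # predicted probability that the voxel is occupied
    label: str           # semantic class, e.g. "vehicle" or "curb"


class OccupancyStore:
    """Hypothetical sparse store keyed by voxel index; unknown voxels count as free."""

    def __init__(self, voxel_size: float = 0.33):
        self.voxel_size = voxel_size
        self._voxels: dict[tuple[int, int, int], VoxelRecord] = {}

    def _key(self, x: float, y: float, z: float) -> tuple[int, int, int]:
        s = self.voxel_size
        # math.floor keeps negative coordinates in the correct voxel.
        return (math.floor(x / s), math.floor(y / s), math.floor(z / s))

    def update(self, x: float, y: float, z: float, probability: float, label: str) -> None:
        self._voxels[self._key(x, y, z)] = VoxelRecord(probability, label)

    def query(self, x: float, y: float, z: float, threshold: float = 0.5) -> VoxelRecord | None:
        """Return the record if the voxel containing (x, y, z) is occupied, else None."""
        rec = self._voxels.get(self._key(x, y, z))
        if rec is not None and rec.probability >= threshold:
            return rec
        return None

    def occupied_cells(self, threshold: float = 0.5):
        """Yield (index, record) pairs, e.g. for rendering the in-car visualization."""
        for key, rec in self._voxels.items():
            if rec.probability >= threshold:
                yield key, rec


store = OccupancyStore()
store.update(4.0, -1.0, 0.5, probability=0.9, label="vehicle")
store.update(30.0, 5.0, 0.1, probability=0.2, label="curb")
print(store.query(4.05, -1.05, 0.55))
```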

While the patent is heavily focused on autonomous vehicles, it confirms that the underlying technology is highly adaptable. The document specifically notes that this exact same vision-based occupancy network can be utilized by a general-purpose, bipedal humanoid robot to navigate various terrains.
