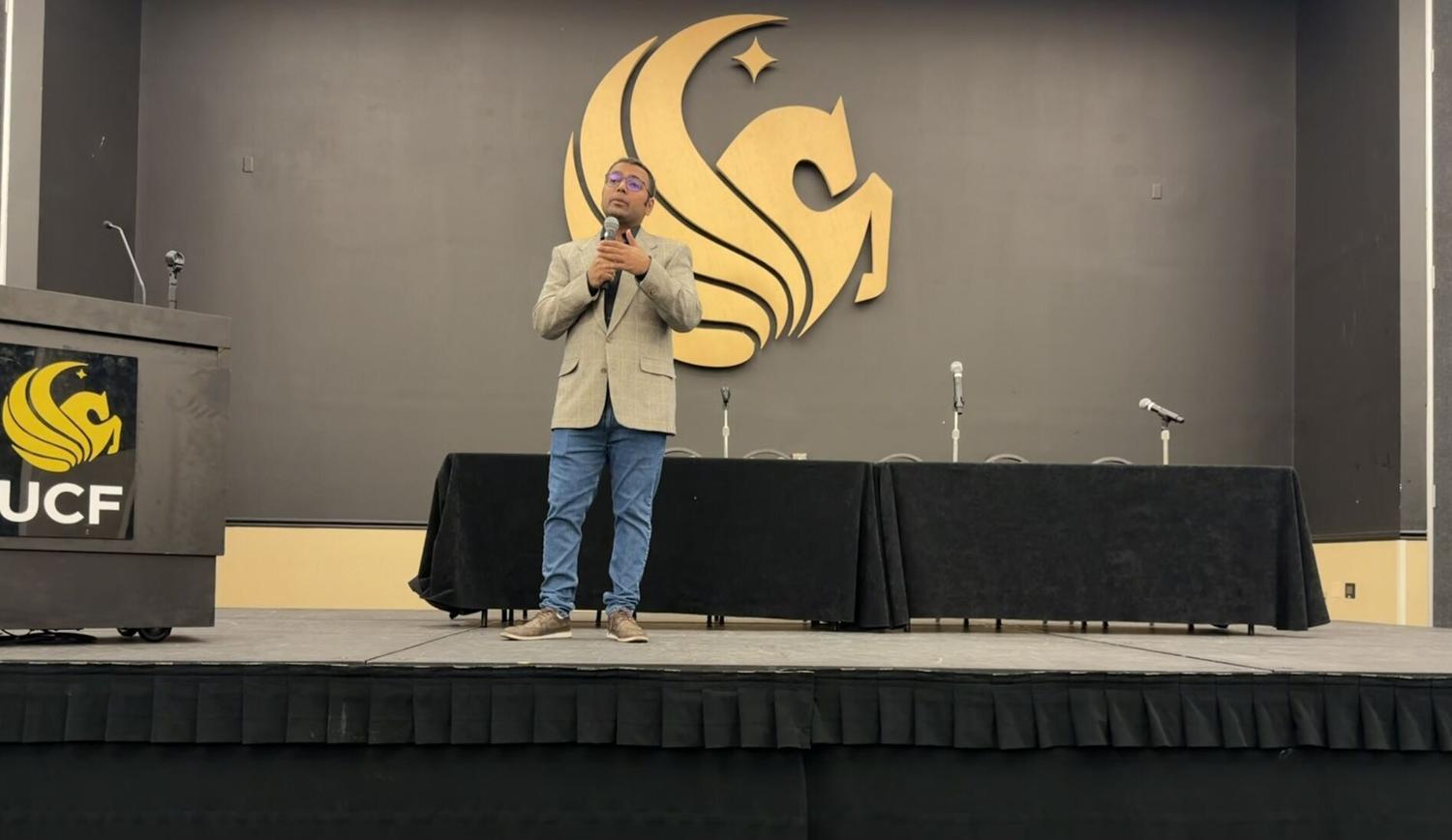

Dr. Anuj Gupta gives a keynote presentation on ‘AI slop’ at the Pegasus Grand Ballroom on Wednesday, opening students up to a deeper conversation about the ethical use of AI.

Allison Smith

Dr. Anuj Gupta, professor of rhetoric and composition at the University of South Florida, presented a lecture on “AI slop” during the Knights Write Showcase at the Pegasus Grand Ballroom on Wednesday.

Gupta earned his Ph.D. in rhetoric and composition from the University of Arizona, his work focusing on generative artificial intelligence. Gupta said “AI slop,” or low-quality AI-generated content, has become common on social media, ranging from harmless entertainment posts to harmful content such as “deepfakes,” which are designed to spread misinformation.

“Both in quantity and quality, AI slop is AI content that is misaligned with author and audience expectations,” Gupta said.

Gupta said in his presentation that there are seven levels to AI misalignment: training data, algorithms, infrastructure, apps, writing workflows and AI prompts, pedagogy and policies. Preventing AI slop, Gupta said, starts with how the AI is trained, but low-quality output can be prevented at any of these stages.

“A lot of these AI models have been trained on content from the internet,” Gupta said. “Those of us who have been on the internet know the internet is not always high-quality content. So, it’s basically trained on a lot of garbage.”

Large language models seek averages in human culture to produce the most typical responses, which creates problems in the content they generate, Gupta said.

“That’s why all the content sounds sloppy or sounds mediocre,” Gupta said. “It’s because they’re really going for whatever is the average of human culture. What we can do to combat that is to include AI only in limited aspects of our writing workflows.”

Apps centered on AI are seemingly launching every day, Gupta said, and keeping up with each new one can become exhausting and overwhelming.

“I give it at least six months to see whether it’s sticking or whether it’s fizzling out,” Gupta said.

Gupta said AI is most useful when it supports work the user already knows how to do rather than doing that work automatically. After generating a response, he said, a person should review it and repeat the process if need be.

Students who use AI should revise the results before deciding whether to include any of the materials, Gupta said.

According to a study at the National Library of Medicine, AI systems regularly produced incorrect citations and fabricated sources, a problem commonly referred to as “hallucination.”

Pedagogy and policies made for AI help keep AI slop out of professional work, and creating clear guidelines can help prevent its spread, Gupta said.

Adriana Carpenter, freshman pre-management major, attended the presentation with the goal of learning more about how AI is currently being used.

“I feel like AI is very relative to the society we live in,” Carpenter said.

When it comes to her personal use of AI, Carpenter said she normally uses it to brainstorm.

“I bounce my ideas off people, and if people aren’t available for me to bounce my ideas off of, I bounce it off of certain AI,” Carpenter said.

As someone who just left high school, Carpenter said she saw a lot of students copy and paste AI-generated answers into their assignments. She said that when other students used it to think for them, it became a crutch and they couldn’t think without it.

UCF Faculty Center Director Dr. Kevin Yee is an expert in educational development and AI technologies. He said that students who pass the burden of their schoolwork entirely to AI are participating in something called “cognitive offloading.”

He said cognitive offloading in an educational setting causes students to miss the point of being at a university. When a student allows the AI to do the thinking for them, Yee said, they don’t give themselves the opportunity to learn.

According to a study done at the Massachusetts Institute of Technology, people who used AI to write essays for them consistently underperformed at the neural, linguistic and behavioral levels.

“When it comes to education, the work is actually the point,” Yee said. “The process is the point. And so, what we tell students is that using ChatGPT to write your essay from start to finish is like lifting weights in the gym with a forklift.”

Yee said he advises students to treat AI as more of a “thought partner” than something that thinks for them. When it comes to AI and student writing, he said, it should not generate the content for them, but it can come into play in the earlier “brainstorming” stages of the work.

“The struggle is the point,” Yee said. “It’s supposed to be hard. That’s how you build muscles.”