Late last month, the city released its initial guidance on the use of artificial intelligence (AI) in schools and asked for public feedback by May 8.

The response from students, parents and educators interviewed by Epicenter NYC was primarily frustration that the guidelines focus almost entirely on how staff and teachers can and cannot use AI, rather than addressing what’s most on people’s minds — what student use is permissible and what’s not.

The document states officials are developing “research-informed guidance on instructional design that ensures AI supports — rather than substitutes for — student thinking.”

Parents we spoke with said the guidelines were too ambiguous, which makes it difficult to submit input to school officials. For parents of students with disabilities, the guidelines also leave unanswered questions about the extent to which AI can be used as assistive technology to meet their children’s needs.

What the city does say about student use of AI

The preliminary guidelines state that “students may use AI for research, exploration and creative projects. Educator guidance, critical evaluation of outputs and age-appropriate context are required.”

While there’s little else that’s official, the department has given a sense of where it’s headed. At a media roundtable on April 2, city officials gave a few examples where AI can or can’t be used.

“AI should not replace students’ ability to think. It shouldn’t create for them without their critical thinking,” said Miatheresa Pate, the school system’s chief academic officer. “Think of AI in the sense of augmenting, like a thinking learning partner where students can brainstorm topics, they can get feedback on drafts, they can ask clarifying questions on complex concepts.”

Students can also use AI for writing support, like developing structure when writing, Pate said. She added that AI could be used in the classroom to remove barriers for multilingual learners or students with disabilities.

But in all these cases the guiding principle is “human judgment, human use, human support,” she said. “We are relying on the giftedness of our educators to be front and center around the use of AI within the classroom and ensuring that there is in fact an educational justification that a student would utilize AI.”

Teachers’ tool

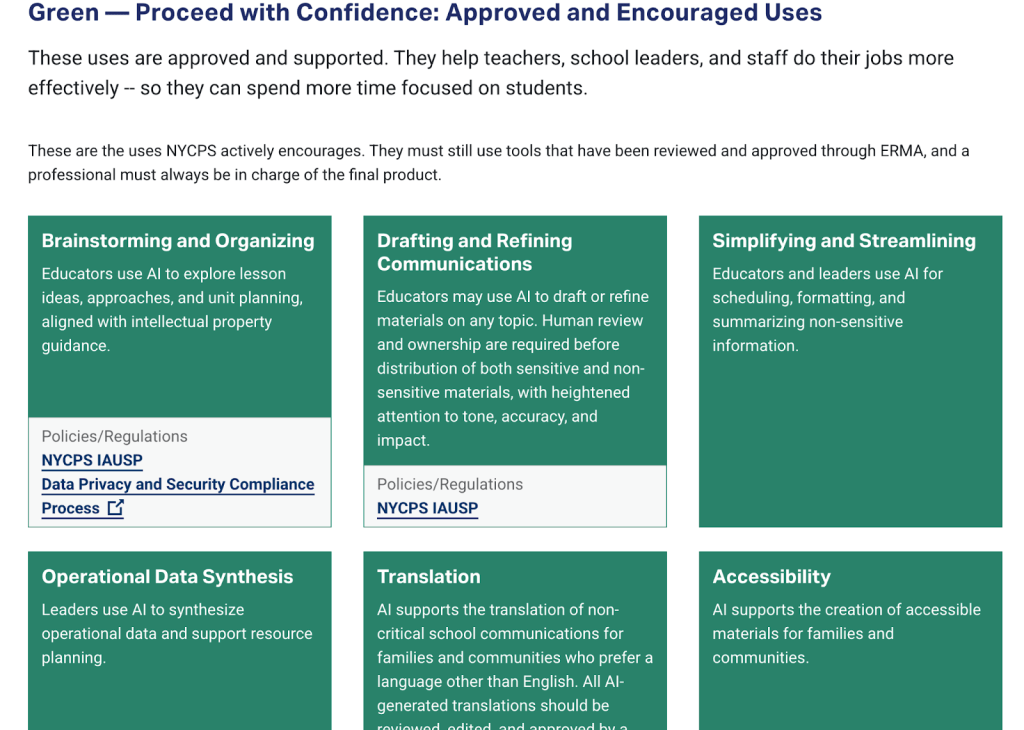

By contrast, the officially released preliminary guidelines focus on AI and its use by teachers. Several parents we spoke with took no issue with what the guidelines have literally greenlit so far. The guide uses a traffic light system in which red means prohibited, yellow means proceed with caution and green means go ahead.

New York City public school teachers can use AI to brainstorm ideas for lesson and unit plans, plan resources, conduct research, manage scheduling and format and summarize non-sensitive information. They can also deploy it to translate and create accessible materials for families and communities and to help draft some materials, as long as they’re reviewed by a human.

Credit: New York City Public Schools

“Teachers have a lot on their plates,” said Nicole Perrino, founder of a family resource guide, Bronx Mama, who noted that AI can help lighten the load. “They still need to use their own discretion to tailor it to their specific students, but if it helps them create lesson plans and customize them for their students, I think it’s just an extra tool.”

AI can help reduce logistical tasks such as creating test layouts, allowing teachers to focus on other priorities, said Perrino, an occasional Epicenter NYC contributor.

“Everything should still be coming from the teacher’s mind in terms of their knowledge of the students and what they want the lesson plan to entail — the big meat,” Perrino said.

Outsourcing students’ cognitive skills

But when it comes to students, the questions are trickier. Many parents worry that tools that reduce “cognitive load,” the amount of mental work required by a task, can also reduce the amount students learn and can even slow cognitive development.

“It’s taking away from the foundation they’re supposed to be building,” Perrino said.

Such worries were backed up by a 2024 study that compared one group of German college students using ChatGPT and another using Google search to gather research recommendations and to justify their conclusions. The students with a lower cognitive load — those who used ChatGPT — offered weaker reasoning and justifications and showed they were less likely to have processed or critically analyzed the information.

Kelly Clancy, who holds a doctorate in political science, founded Parents for AI Caution over concerns about her children, three New York City public school students. “The guidance document leaves NYC students uniquely vulnerable to AI being unleashed in the classrooms,” she said in an email. “It lays a floor for protecting privacy without attempting in any way to consider the pernicious impact access to AI has on student cognitive development, learning [and] mental health.”

Clancy, who also sits on the community education council of District 20 in Brooklyn, added that the city guidance “gives false nods to research saying this helps vulnerable learners when the opposite is true.”

Such concerns are echoed by students: At a listening tour session held by new Schools Chancellor Kamar Samuels at John Dewey High School in Brooklyn on the evening the guidance was published, students worried about the toll AI could take on their own critical-thinking skills and those of their peers.

“As we move into the future, it’s more than just taking a test and doing well and so on,” said Nekena Randrianrison, a senior at Dewey who warned against overreliance on AI.

One parent who belongs to the AI Moratorium Coalition, which Parents for AI Caution is also part of, called out the city’s “faulty narrative on the so-called benefits of AI” in a press release in response to the city’s guidance.

“While we should be providing opportunities for our students to learn and understand engineering, technology and science, we should not be doing it at the expense of their cognitive development and their ability to be problem-solvers, critical thinkers and innovators,” said Kaliris Salas-Ramirez, a neuroscientist and parent leader in East Harlem.

AI as assistive technology

The questions around AI are different for parents of students with disabilities. Jenn Choi, a special education consultant and advocate, has seen the power of AI-mediated assistive technology for some of the school system’s learners with the greatest needs.

Choi, an occasional contributor to Epicenter NYC’s coverage of special education needs, was surprised that the guidelines included no explicit mention of the role AI tools are already playing for students with disabilities, including reducing barriers to starting tasks — such as the anxiety her son has experienced that kept him from beginning an email.

When Epicenter NYC asked about the role of AI-powered assistive technology at the media roundtable, school officials said they differentiate between AI and assistive technology such as speech-to-text software.

“For us, along the traffic light, those are very low-tech and they would be permitted to support students with disabilities,” Pate responded. “As opposed to artificial technology, which in contrary, is not very inherently assistive when it comes to students with disabilities who require assistive technology.”

But Choi doesn’t see how AI-powered assistive technology fits into the preliminary rules on what’s permissible. One of her clients, a first grader with illegible handwriting, has been using a digital keyboard with AI-assisted word prediction. While trying to spell the word “snake,” he sounds out the first letter and types “s,” continuing the process until he completes the word.

The use of AI allowed educators to see his writing ability in ways they couldn’t before, both because the writing was illegible and because he wrote less due to pain.

The goal is for AI assistive technology, like any scaffold, to eventually be phased out. But unlike this first grader, whose instructors direct him to limit use of autocomplete and speech-to-text until he has first put in the effort, many students with similar challenges may be using AI in harmful or inappropriate ways without guidance. That gap underscores the need for educators to teach students how to balance their needs and navigate limits, Choi says.

“They need to be taught, if it’s out there like candy on a plate, to only use it once an hour,” she said.

Regulating this — and ensuring students aren’t penalized for using AI appropriately — starts with city officials recognizing common uses of the tools, Choi said.

Their attention, she said, should be on transparency in appropriate use, preventing misuse, and understanding how and why AI is used, rather than on discipline. Once students’ reasons — which may range from skill limitations to time-management issues — are identified, those problems can be addressed while students are still developing.

“It’s not going anywhere — their parents are using it,” she said. “Maybe if they were taught how to use it appropriately, then maybe they can do it on the up and up, without being secretive about it.”

Officials are asking for feedback through May 8, via a survey and public events, before publishing a final playbook in June.