Instagram will start notifying parents when their teenage children repeatedly search for terms associated with suicide or self-harm in a short period.

The social media giant said the alerts are designed to give parents the information they need to support their teen, as well as resources to approach the sensitive conversations that follow.

“We understand how sensitive these issues are, and how distressing it could be for a parent to receive an alert like this,” Meta, which owns Instagram, said in its announcement on Thursday. “The vast majority of teens do not try to search for suicide and self-harm content on Instagram, and when they do, our policy is to block these searches, instead directing them to resources and helplines that can offer support. These alerts are designed to make sure parents are aware if their teen is repeatedly trying to search for this content, and to give them the resources they need to support their teen.”

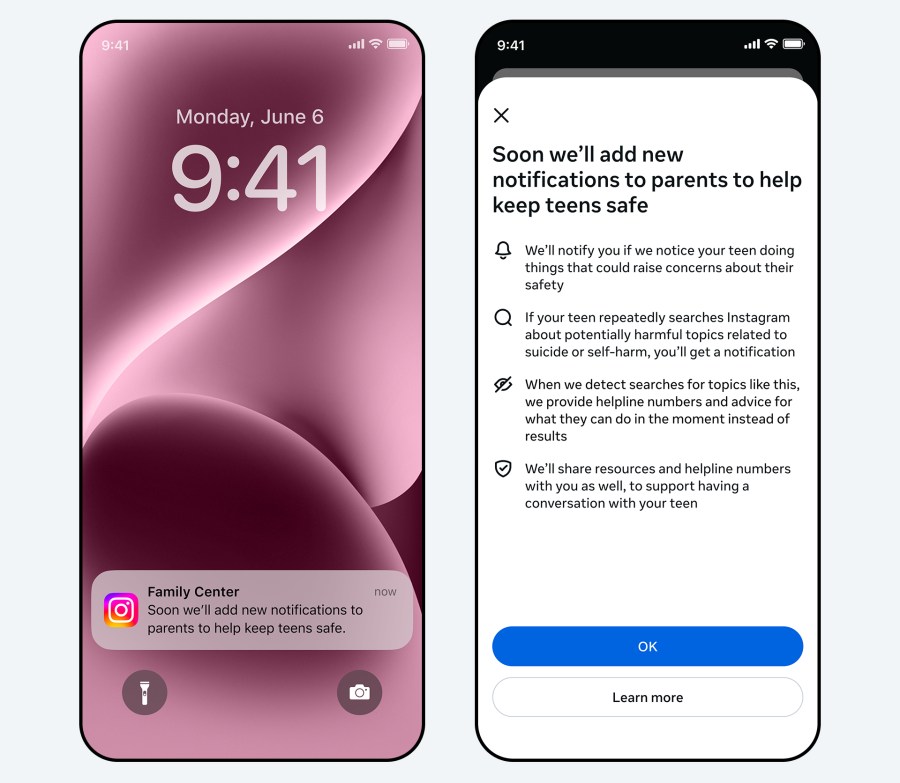

A look at Instagram’s new notifications for parents when their teen repeatedly searches for terms related to suicide or self-harm in a short period. (Instagram)

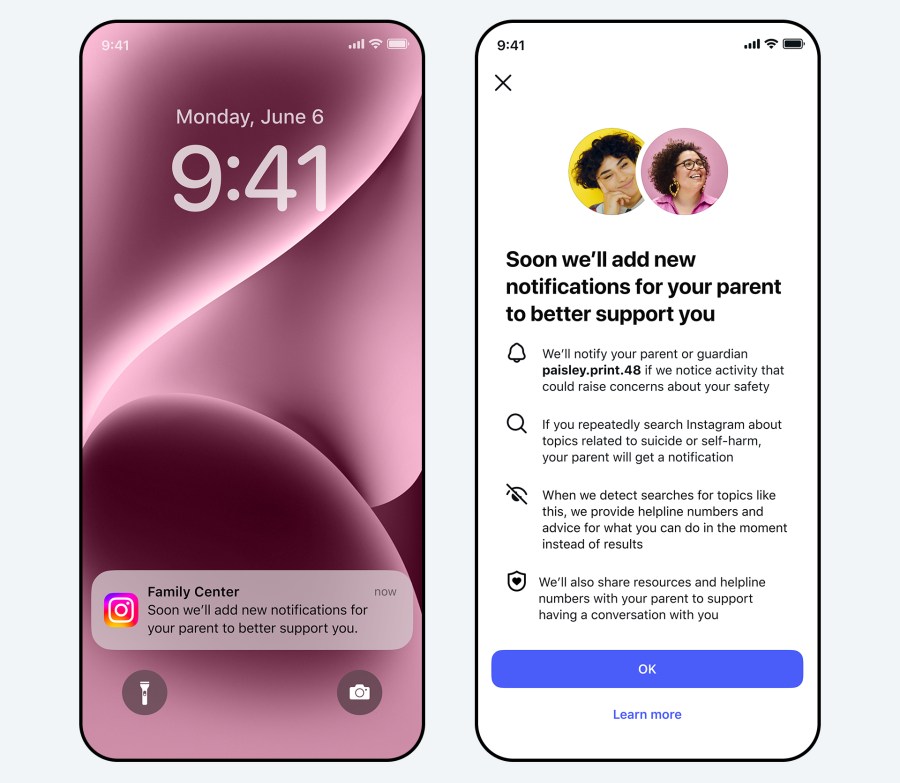

Another look at Instagram’s new notifications for parents. (Instagram)

If you or someone you know is in crisis, call or text 988 to reach the Suicide and Crisis Lifeline or chat live at 988lifeline.org. You can also visit SpeakingOfSuicide.com/resources for additional support.

Attempted searches that would prompt an alert to parents include phrases promoting suicide or self-harm, phrases that suggest a teen wants to harm themselves, and terms like “suicide” or “self-harm,” the company said.

Parents will receive the alert via email, text, WhatsApp or an in-app notification on Instagram. Tapping on the notification will open a full-screen message explaining that their child has repeatedly searched for terms related to suicide and self-harm in a short period. Parents will also have the option to view expert resources about how to approach potentially sensitive conversations with their teen.

“Our goal is to empower parents to step in if their teen’s searches suggest they may need support,” Meta said. “We also want to avoid sending these notifications unnecessarily, which, if done too much, could make the notifications less useful overall.”

A look at Instagram, text and email notifications for parents. (Instagram)

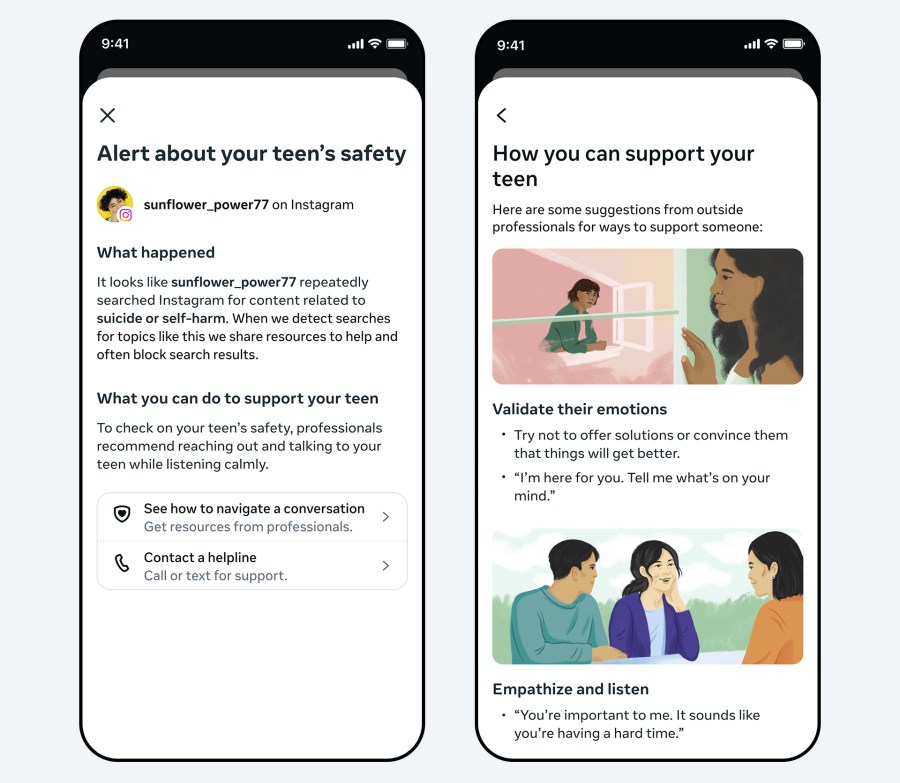

A look at expert resources from Instagram. (Instagram)

The alerts will start rolling out in the U.S., U.K., Canada and Australia next week before becoming available in other regions later this year.

Meta is also creating similar parental notifications for teens’ conversations with AI, which will roll out later this year, the company said.

Instagram has existing policies against content that promotes suicide and self-harm. It allows people to share content about their own struggles, but it hides the content from teens, even if it is from someone they follow.

Meta is in the midst of two trials dealing with child safety on its social media platforms. CEO Mark Zuckerberg testified last week in a trial in Los Angeles over whether online platforms are designed to be addictive to children and young adults. A newly unsealed legal filing from another Meta case in New Mexico showed employees discussing 7.5 million annual reports of child sexual abuse material that would no longer be made after Zuckerberg’s 2019 decision to make Facebook Messenger end-to-end encrypted by default.