Joseph S. Friedman, who leads the NeuroSpinCompute Lab at UT Dallas, with the prototype neuromorphic computer designed to learn patterns using far fewer training computations than today’s AI systems. [Photo: UTD]

A UT Dallas research team has built a small neuromorphic computer that learns patterns using far fewer training computations than today’s AI systems. The early prototype points to a future where advanced models could run on smart devices without relying on energy-hungry data centers.

The work comes from Joseph S. Friedman, an associate professor of electrical and computer engineering, and his NeuroSpinCompute Laboratory at UT Dallas. His group aims to develop hardware that learns more like the human brain, cutting down the massive training requirements that make modern AI costly to build and operate.

Friedman said the prototype offers an early demonstration of how neuromorphic hardware could avoid the long, expensive training cycles that define today’s AI. “Our work shows a potential new path for building brain-inspired computers that can learn on their own,” Friedman said. He added that because these systems “do not need massive amounts of training computations,” they could eventually support advanced AI on low-power devices.

The research, published in Communications Engineering, a Nature journal, was developed with collaborators from Everspin Technologies and Texas Instruments. Friedman is co-corresponding author of the study with Dr. Sanjeev Aggarwal, president and CEO of Everspin.

Neuromorphic computers work differently from the systems used to train AI today. Instead of separating memory and processing, they combine both functions in one place, similar to how the brain stores and uses information at the same time. That design helps the computer learn more efficiently.

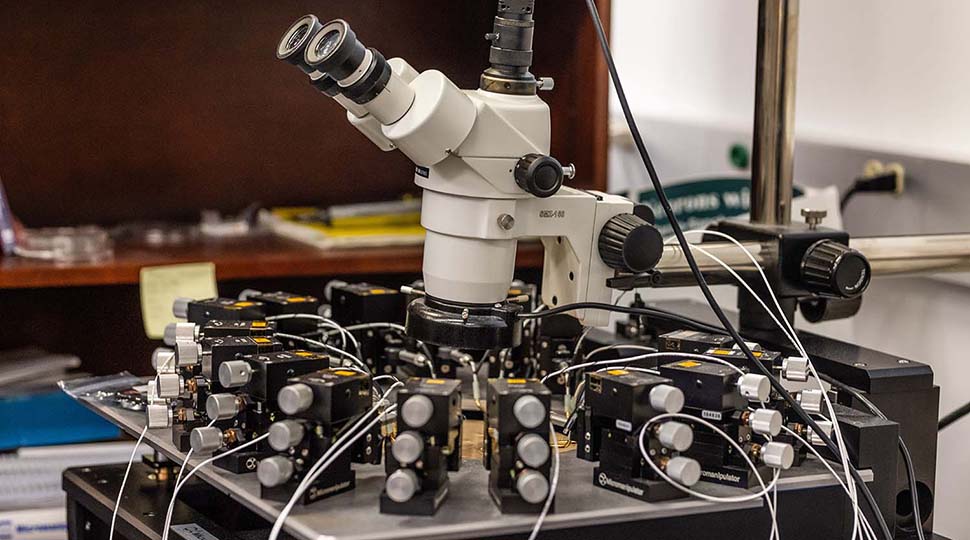

A probe station is used to test small neuromorphic devices in Dr. Joseph Friedman’s lab. [Photo: UTD]

To build their prototype, Friedman’s team used tiny devices called magnetic tunnel junctions, or MTJs. These devices change how easily electricity flows through them, which lets the system strengthen or weaken connections as it recognizes patterns.

The computer learns using a neuroscience principle known as Hebb’s law, often summarized as “neurons that fire together, wire together.” The concept describes how the brain reinforces the pathways it uses most.

Friedman said the team applied the same idea to their hardware. “The principle that we use for a computer to learn on its own is that if one artificial neuron causes another artificial neuron to fire, the synapse connecting them becomes more conductive,” he said. In the prototype, that process helps the system strengthen the pathways it relies on to identify patterns.
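The rule Friedman describes can be illustrated in software with a minimal sketch. The code below is not the team's method and does not model magnetic tunnel junctions. It assumes continuous-valued synaptic weights standing in for conductance, and it treats two neurons being active in the same step as a simplification of one neuron causing another to fire. The function name `hebbian_update` and the parameters `rate` and `w_max` are hypothetical, chosen only for illustration.

```python
def hebbian_update(weights, pre, post, rate=0.1, w_max=1.0):
    """Return a new weight matrix after one Hebbian step.

    weights[i][j] is the (hypothetical) conductance of the synapse from
    input neuron i to output neuron j. pre and post are lists of 0/1
    firing indicators. A synapse is strengthened only when both of its
    neurons fire, and it is capped at w_max.
    """
    return [
        [min(w_max, w + rate * pre_i * post_j) for w, post_j in zip(row, post)]
        for row, pre_i in zip(weights, pre)
    ]


if __name__ == "__main__":
    # Two input neurons, two output neurons, all synapses start equal.
    weights = [[0.2, 0.2], [0.2, 0.2]]
    # Input neuron 0 and output neuron 1 fire together.
    weights = hebbian_update(weights, pre=[1, 0], post=[0, 1])
    print(weights)
```

In this sketch only the synapse between the two neurons that fired together grows, while the rest are unchanged. Repeating the step on the same pattern keeps strengthening that one pathway until it reaches the cap.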

The study also highlights the range of collaborators involved in the work. Several UT Dallas alumni contributed to the research, including first author Peng Zhou, Alexander J. Edwards, and Stephen K. Henrich-Barna, who was also affiliated with Texas Instruments. Everspin Technologies contributed through co-corresponding author Sanjeev Aggarwal and researcher Frederick B. Mancoff.

The project is supported by several major research grants, including a National Science Foundation CAREER award and funding from the Semiconductor Research Corp. through UT Dallas’ Texas Analog Center of Excellence. In September, the U.S. Department of Energy awarded Friedman a two-year, $498,730 grant to continue advancing neuromorphic computing research.

The team’s next step is to scale the prototype to larger sizes. If successful, the approach could make future AI systems more efficient by reducing the need for energy-intensive training and allowing more learning to happen directly on devices.
