Quantum repeaters are a crucial technology for extending secure communication over long distances, yet realising practical devices remains a significant challenge. Javier Rey-Domínguez and Mohsen Razavi, from the University of Leeds, and their colleagues present a new approach that balances the need for scalability against the practical limitations of current infrastructure. Their work addresses a key problem in the field: early repeater designs do not scale well, while more sophisticated solutions demand hardware capabilities that are not yet realistic. The researchers propose a system that employs a connectionless swapping method, similar to packet switching on the Internet, and prioritises simple error detection over complex error correction, resulting in a design that is easier to implement and to scale. The approach shows the potential for achieving secure, continental-scale quantum key distribution through a phased development strategy, paving the way for a future quantum internet.

Meeting the desired design criteria is challenging for quantum repeaters: preliminary solutions frequently lack scalability, while the most advanced options demand significant changes to existing telecom infrastructure. This research proposes a compromise solution intended to be scalable in the mid to long term and adaptable to current Internet backbone networks. The approach rests on two elements: a connectionless method for entanglement swapping, which lets the system exploit the advantages of packet-switched networks, and simple error detection, which offers a practical pathway towards robust quantum communication.

Long-Distance Quantum Communication with Repeaters

Establishing long-distance quantum communication is a significant challenge, because quantum signals are attenuated and degraded as they travel. Quantum repeaters are devices designed to overcome these limitations and extend the range of secure quantum communication. Central to these systems is the creation of entangled pairs of qubits, the fundamental building blocks of quantum information. Entanglement swapping extends entanglement over longer distances: a Bell state measurement on one qubit from each of two adjacent entangled pairs leaves the two outer qubits entangled, even though they never interacted directly. However, entanglement is hard to preserve because of memory decoherence, the loss of quantum information to the environment while qubits are stored, which makes error detection and correction techniques crucial for reliable quantum communication.
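To make swapping and decoherence concrete, here is a minimal sketch in the common Werner-state model. It assumes every elementary link produces a Werner state with parameter w (the Bell state |Φ+⟩ with weight w mixed with white noise with weight 1 - w). Under an ideal Bell state measurement, swapping two Werner pairs multiplies their parameters, and the fidelity to |Φ+⟩ is (3w + 1)/4. The exponential memory decay in `decohere`, the function names, and the link values used below are illustrative assumptions, not the authors' model.

```python
import math


def fidelity(w: float) -> float:
    """Fidelity of a Werner state with parameter w to the Bell state |Phi+>."""
    return (3.0 * w + 1.0) / 4.0


def swap(w1: float, w2: float) -> float:
    """Werner parameter after an ideal Bell state measurement joins two Werner pairs."""
    return w1 * w2


def decohere(w: float, wait_time: float, coherence_time: float) -> float:
    """Assumed depolarizing memory: w decays exponentially while the pair is stored."""
    return w * math.exp(-wait_time / coherence_time)


def chain_werner(link_w: float, n_links: int) -> float:
    """Werner parameter after swapping n_links identical elementary links end to end."""
    if n_links < 1:
        raise ValueError("need at least one elementary link")
    w = link_w
    for _ in range(n_links - 1):
        w = swap(w, link_w)  # each swap multiplies in one more link's noise
    return w
```

For example, chaining four links with w = 0.98 gives w ≈ 0.922 and a fidelity of about 0.942, so noise compounds with every swap even before `decohere` accounts for any storage time.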

Researchers have investigated several quantum repeater protocols, each employing different strategies for entanglement generation and swapping. One approach, Sequential Entanglement Generation with Error Detection (SEG-ED), generates entanglement step by step along a communication path, in contrast to parallel generation, which attempts to create entanglement on all elementary links of the path simultaneously. SEG-ED prioritizes simplicity by focusing on error detection rather than complex error correction, reducing the demands on hardware. Other protocols rely on probabilistic swapping with linear optics, where each Bell state measurement succeeds only with a certain probability; the quality of the resulting entangled states is often characterized by the Werner parameter.
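The contrast between sequential and parallel generation can be illustrated with a toy waiting-time model. The sketch assumes each elementary link succeeds independently with probability p per time slot, memories are perfect, and swaps are instantaneous and deterministic; it ignores classical signalling delays, decoherence, and the resource-release benefits that motivate SEG-ED, so it is not the authors' model. The function names are hypothetical.

```python
def expected_slots_sequential(p: float, n_links: int) -> float:
    """Mean time slots when links are generated one hop at a time.

    Each hop is attempted only after the previous hop has succeeded, so the
    total is a sum of n_links geometric waiting times with mean 1/p each.
    """
    if not 0.0 < p <= 1.0:
        raise ValueError("p must lie in (0, 1]")
    return n_links / p


def expected_slots_parallel(p: float, n_links: int, tol: float = 1e-12) -> float:
    """Mean time slots when every link is attempted in every slot.

    Successful links are assumed to wait in perfect memories until the slowest
    link succeeds, so the waiting time T is the maximum of n_links geometric
    variables. Uses E[T] = sum_{k>=0} P(T > k) with P(T > k) = 1 - (1 - q**k)**n.
    """
    if not 0.0 < p <= 1.0:
        raise ValueError("p must lie in (0, 1]")
    q = 1.0 - p
    total, k = 0.0, 0
    while True:
        tail = 1.0 - (1.0 - q ** k) ** n_links  # P(T > k)
        total += tail
        if tail < tol:
            return total
        k += 1
```

Under these assumptions parallel generation never waits longer than sequential generation (for p = 0.5 and two links, 8/3 versus 4 slots), which reflects why parallel schemes can be faster; the price is that every memory on the path stays occupied until the slowest link succeeds.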

Ultimately, the choice of protocol depends on balancing scalability, fidelity, and resource requirements. The protocols differ mainly in how they generate entanglement, how they handle errors, and how complex they are to implement. Sequential protocols are more manageable, while parallel protocols can potentially deliver entanglement faster. Error detection simplifies hardware requirements, but because a detected error triggers an abort rather than a correction, it can lower the achievable rate and may reduce overall fidelity. The goal is to identify the most promising approaches for building a practical long-distance quantum network, weighing performance against complexity.

Sequential Entanglement Generation Enables Scalable Repeaters

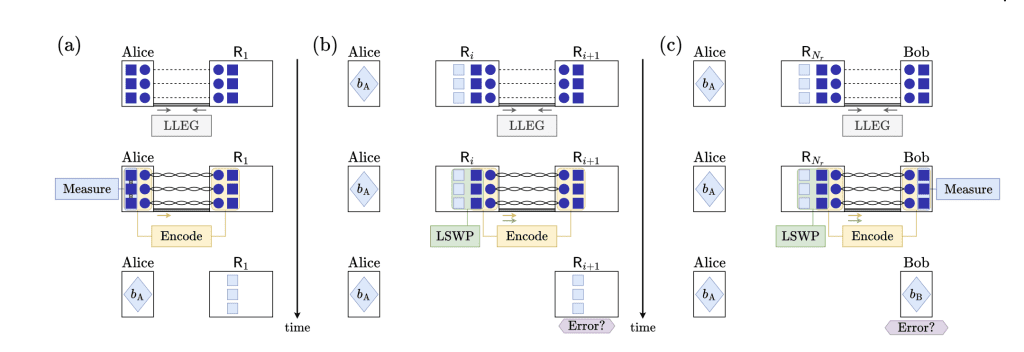

The researchers have developed a quantum repeater protocol, Sequential Entanglement Generation with Error Detection (SEG-ED), that addresses key challenges in long-distance quantum communication. It offers a compromise between scalability and practical implementation, adapting well to existing network infrastructure while laying the groundwork for future quantum networks. The work focuses on efficiently distributing entangled states over long distances, a crucial step for secure quantum key distribution and distributed quantum computing. Conventional quantum repeater protocols often employ parallel entanglement generation, which reduces waiting times but can be inefficient in networks whose demand fluctuates.

SEG-ED, in contrast, uses a sequential approach: it generates entanglement hop by hop along a designated path and releases resources after each step, which allows fairer resource allocation and better adaptation to network traffic. A significant innovation lies in how errors are handled; rather than attempting complex error correction, the protocol relies on simple error detection. This reduces the demands on quantum repeater hardware and allows less complex codes to be used. The researchers show that by detecting errors and immediately aborting the distribution attempt, resources can be released for other users, improving network efficiency.
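The hop-by-hop procedure with detect-and-abort can be sketched as a small Monte Carlo model. Everything here is an illustrative assumption: the names `seg_ed_attempt` and `delivery_rate`, the per-slot heralding probability `p_link`, and the per-hop flag probability `p_flag` are hypothetical, and a single flag probability stands in for whatever error-detecting code and noise model the authors actually analyse.

```python
import random
from dataclasses import dataclass


@dataclass
class Attempt:
    delivered: bool      # True if end-to-end entanglement reached the last node
    hops_completed: int  # hops extended (and checked) before delivery or abort
    slots_used: int      # time slots spent generating elementary links


def seg_ed_attempt(n_hops: int, p_link: float, p_flag: float,
                   rng: random.Random) -> Attempt:
    """Toy model of one sequential, hop-by-hop distribution with error detection.

    Hypothetical assumptions: each hop's elementary link is heralded with
    probability p_link per slot, and after each extension an error-detecting
    check raises a flag with probability p_flag. A flag aborts the attempt.
    """
    slots = 0
    for hop in range(n_hops):
        # Retry the next elementary link until it is heralded.
        slots += 1
        while rng.random() >= p_link:
            slots += 1
        # Extend the entanglement by one hop (a swap at the current end node,
        # except on the first hop); that node's memories are then freed,
        # which is not modelled explicitly here.
        if rng.random() < p_flag:
            # Error detected: abort at once so the path's resources return
            # to the pool for other users instead of being spent on correction.
            return Attempt(delivered=False, hops_completed=hop, slots_used=slots)
    return Attempt(delivered=True, hops_completed=n_hops, slots_used=slots)


def delivery_rate(n_hops: int, p_link: float, p_flag: float,
                  trials: int = 10_000, seed: int = 1) -> float:
    """Estimate delivered end-to-end states per time slot over repeated attempts."""
    rng = random.Random(seed)
    delivered = slots = 0
    for _ in range(trials):
        result = seg_ed_attempt(n_hops, p_link, p_flag, rng)
        delivered += result.delivered
        slots += result.slots_used
    return delivered / slots
```

Calling `delivery_rate(n_hops=10, p_link=0.2, p_flag=0.02)` estimates delivered states per slot; raising `p_flag` shows how aborted attempts eat into the rate, while the early return mirrors the design choice of freeing resources rather than spending them on correction.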

This strategy retains the benefits of encoded repeaters without the computational burden of full error correction. The analysis reveals that the feasibility of SEG-ED hinges on balancing error resilience against achievable rates and distances: if the equipment is too noisy, frequent aborts could render the protocol unusable. Nevertheless, the findings suggest that SEG-ED offers a promising pathway towards practical, scalable quantum communication networks, one that aligns with the architecture of the modern Internet and eases integration with existing infrastructure.
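The trade-off between error resilience and reach can be made concrete with simple arithmetic. Assuming, hypothetically, that every hop's error-detecting check flags independently with the same probability p, an attempt over n hops survives with probability (1 - p)^n, so noisier equipment shrinks the usable path length quickly. The function names below are illustrative.

```python
def delivery_probability(p_flag: float, n_hops: int) -> float:
    """Chance that no per-hop check flags an error along the whole path.

    Assumes independent checks with the same flag probability at every hop.
    """
    return (1.0 - p_flag) ** n_hops


def max_hops(p_flag: float, target: float) -> int:
    """Largest hop count whose delivery probability is still >= target."""
    if not (0.0 < p_flag < 1.0 and 0.0 < target <= 1.0):
        raise ValueError("need 0 < p_flag < 1 and 0 < target <= 1")
    n = 0
    while delivery_probability(p_flag, n + 1) >= target:
        n += 1
    return n
```

With p = 0.01, up to 68 hops keep the survival probability at or above one half; with p = 0.1, only 6 do. These numbers are illustrative and are not results from the paper.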

Scalable Quantum Repeaters with Error Detection

This research presents a practical quantum repeater scheme, SEG-ED, designed to improve the feasibility of long-distance quantum communication. The approach combines sequential entanglement generation with simple error detection, offering a compromise between complex, resource-intensive solutions and those that lack scalability. The method is compatible with existing classical networks through statistical multiplexing and shows potential for efficient resource use in certain scenarios. The findings suggest that the scheme offers a pathway to scalability in the near to mid term, even with relatively simple codes, and can achieve acceptable performance within realistic network topologies. While the current study focuses on a single communication path, the researchers highlight the protocol's potential benefits for multi-user networks, including fairness and cost efficiency. Further investigation is needed to assess performance under high-traffic conditions and to explore integrating the protocol into hybrid quantum networks that combine terrestrial and satellite links.