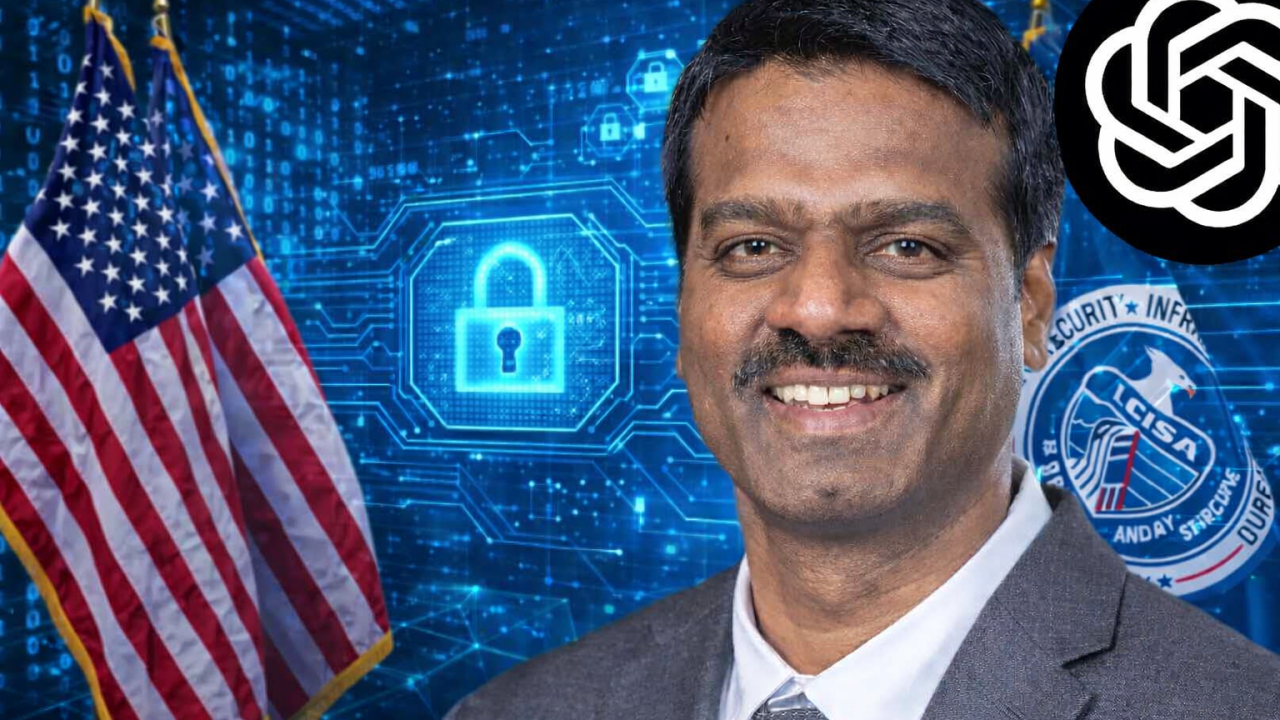

The acting director of the U.S. Cybersecurity and Infrastructure Security Agency (CISA), Madhu Gottumukkala, triggered an internal security review after uploading sensitive government contracting documents into the public version of ChatGPT, potentially exposing material not meant for public release, according to multiple news outlets and government sources.

While the documents were not classified, they were marked “for official use only” (FOUO) — a designation used across federal agencies to denote sensitive information not intended for public release. Their transmission into an external, widely accessible AI platform triggered automated cybersecurity alerts and raised immediate concerns among senior officials at the Department of Homeland Security (DHS), which oversees CISA.

Incident Overview

According to insiders cited by Politico and subsequent reports, the uploads occurred from mid-July through early August 2025, shortly after Gottumukkala assumed the role of acting director in May 2025. At that time, the broader DHS workforce was explicitly blocked from accessing ChatGPT on government systems because of concerns about data exfiltration and retention outside federal networks.

Gottumukkala had obtained a temporary exemption from CISA’s Office of the Chief Information Officer to use the public AI tool, purportedly as part of early efforts to explore artificial intelligence’s utility in federal cybersecurity operations.

However, the episode soon generated multiple internal warnings: CISA’s security sensors repeatedly flagged the uploads as potential exfiltration events and alerted DHS cybersecurity teams within days of the first file transfer.

In response, senior DHS officials ordered an internal damage assessment and review — a standard procedure under departmental policy when sensitive government material is inadvertently exposed outside secure government networks. These reviews typically examine root causes, data exposure risk, and potential personnel or policy actions, ranging from retraining to security clearance revocation.

Officials directly involved in the review spoke on condition of anonymity, acknowledging that the findings have not been publicly released and that the full extent of any compromise remains unclear. One source told The Independent that mid-summer meetings were held between Gottumukkala and top agency officials, including CISA’s chief information officer and chief counsel, to discuss proper handling of FOUO materials.

In an official statement, CISA spokesperson Marci McCarthy defended Gottumukkala’s use of ChatGPT, saying it was conducted “with DHS controls in place” under a “short-term and limited” exception, and stressed the agency’s ongoing commitment to leveraging AI responsibly in line with federal directives. McCarthy also indicated that Gottumukkala’s last recorded access to the public platform was in mid-July 2025.

But internal critics paint a far different picture. One current agency official described Gottumukkala’s actions as a misuse of privileged access, telling reporters he “forced CISA’s hand” in granting the exemption and then “abused it.”

A Closer Look at Madhu Gottumukkala

Madhu Gottumukkala (born October 29, 1976) is an American engineering executive who has served as acting director and deputy director of the Cybersecurity and Infrastructure Security Agency (CISA) since May 2025.

Prior to joining CISA, Gottumukkala held senior technology leadership roles in South Dakota state government. He served as chief information officer and commissioner of the South Dakota Bureau of Information and Telecommunications from September 2024 to May 2025, and briefly as the state’s chief technology officer from August to September 2024.

Gottumukkala was born in Andhra Pradesh, India. He earned a bachelor’s degree in electronics and communication engineering from Andhra University. He later completed a master’s degree in computer science engineering at the University of Texas at Arlington, a Master of Business Administration in engineering and technology management from the University of Dallas, and a doctorate in information systems from Dakota State University.

Private sector (2000–2024)

Gottumukkala began his career in the private information technology sector in 2000. He later joined Sanford Health, where he served as senior director of business solutions beginning in December 2019. In April 2024, he was appointed to the advisory committee of Dakota State University. He is married to Vasantha, and they have two children.

Government of South Dakota (2024–2025)

In August 2024, South Dakota governor Kristi Noem appointed Gottumukkala as the state’s chief technology officer, and he was sworn in on August 5. The following month, he was named chief information officer and commissioner of the South Dakota Bureau of Information and Telecommunications, assuming both roles on September 9, 2024.

Cybersecurity and Infrastructure Security Agency

In April 2025, U.S. secretary of homeland security Kristi Noem appointed Gottumukkala as deputy director of the Cybersecurity and Infrastructure Security Agency. He assumed the role on May 16, 2025. Later that month, he informed agency personnel that much of CISA’s leadership was resigning and that he would serve as acting director beginning May 30, 2025. In August 2025, he spoke at the Black Hat Briefings conference on critical infrastructure.

In December 2025, Politico reported that Gottumukkala had requested access to a controlled access program in June, which required a polygraph examination. He failed the polygraph in late July. The Department of Homeland Security subsequently opened an investigation into the circumstances of the test and suspended six career staff members, stating that the polygraph should not have been administered.

Broader Context

The incident spotlights the growing challenges that government agencies face in integrating generative AI tools like ChatGPT into sensitive work environments. Public AI platforms — unlike internally controlled versions — may retain user inputs and, in some cases, use them to improve model performance, potentially making information accessible to others or even, in worst-case scenarios, adversarial actors. This risk is especially acute for agencies charged with defending against state-sponsored cyber threats.

Even unclassified but sensitive data can pose significant operational risk if adversaries glean insights into contracting activity, network architecture, or procurement strategies. Academic research on large language models underscores similar concerns: outside of secure government or enterprise AI systems, there are documented limitations in how effectively these tools can safeguard sensitive inputs without robust encryption, isolation, and data governance controls.

Gottumukkala’s tenure at CISA has already been marked by internal tension. In late 2025, multiple career staffers were placed on leave during a separate controversy involving a counterintelligence polygraph exam the acting director took — and reportedly failed — prompting additional internal investigations.

More recently, Gottumukkala attempted to remove CISA’s chief information officer from his post, a move that was blocked by peers, according to multiple reports. These episodes have fueled questions about internal leadership dynamics at the agency at a moment when its workforce is also contracting due to budget pressures.

Conclusion

The revelations are likely to draw further scrutiny from congressional committees overseeing homeland security and federal cybersecurity policy. Lawmakers have already expressed bipartisan concern over the government’s use of AI and its data security practices, raising the possibility of new legislation to tighten controls around sensitive information and artificial intelligence adoption in government. This case may serve as a pivotal example of why rigorous protocols, real-time monitoring, and clear accountability structures are essential when public AI tools intersect with classified or sensitive operations.