Today, Wang Yunhe, the director of Huawei’s Noah’s Ark Laboratory, officially announced his departure on his WeChat Moments.

Since the start of 2026, a series of high-level personnel changes in China’s AI community has signaled that the entire industry is undergoing a profound structural transformation.

Wang Yunhe: A Veteran at Huawei

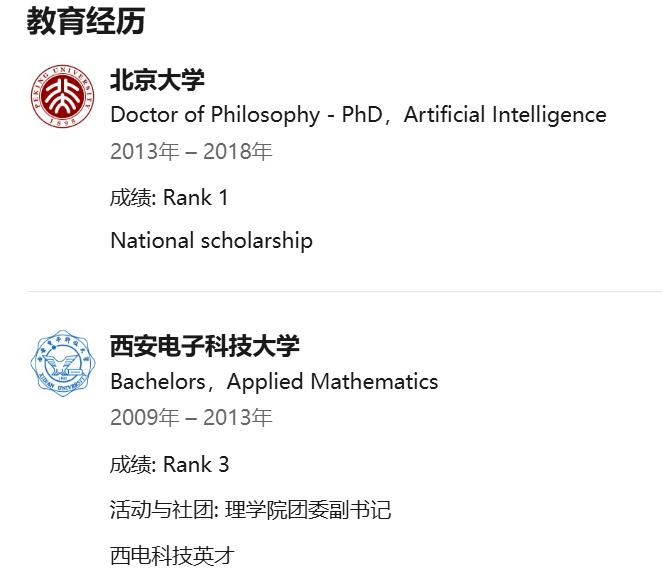

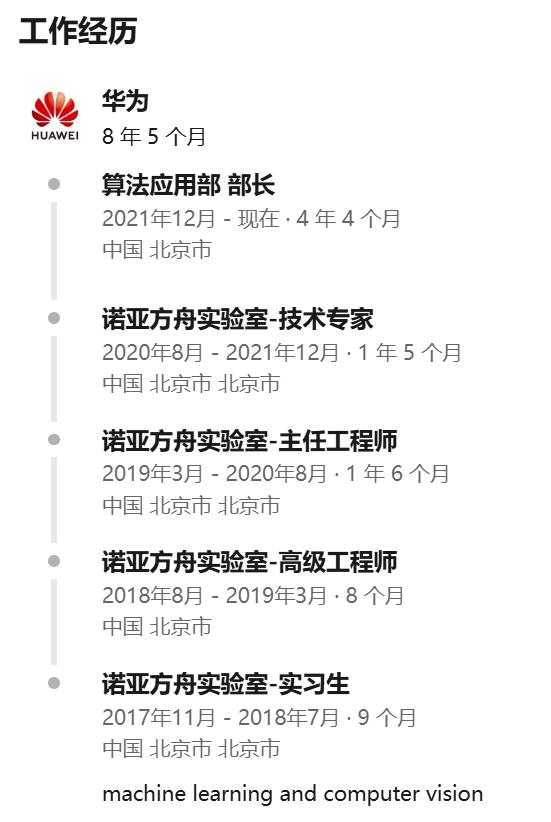

Wang Yunhe was born in 1991. He earned his undergraduate degree in Mathematics and Applied Mathematics at Xidian University and received his doctorate from the Department of Intelligent Science at Peking University in 2018. His main research areas include deep learning, model compression, machine learning, and computer vision.

He interned at Huawei’s Noah’s Ark Laboratory before graduating from Peking University and joined it as a senior engineer after graduation. He was later promoted to chief engineer and then to technical expert.

In 2021, he became head of Huawei’s Algorithm Application Department, responsible for the research and development of efficient AI algorithms and their application in Huawei’s business. His “High-performance multiplier and addition neural network that significantly improves computing power” was selected for Huawei’s fourth “Top Ten Inventions”. In March last year, Wang Yunhe succeeded Yao Jun as the director of Huawei’s Noah’s Ark Laboratory. He now has more than 8 years of work experience at Huawei.

In addition, Wang Yunhe is an active contributor on Zhihu, where he is recognized as an excellent answerer in the Deep Learning topic.

Wang Yunhe’s Research and Exploration

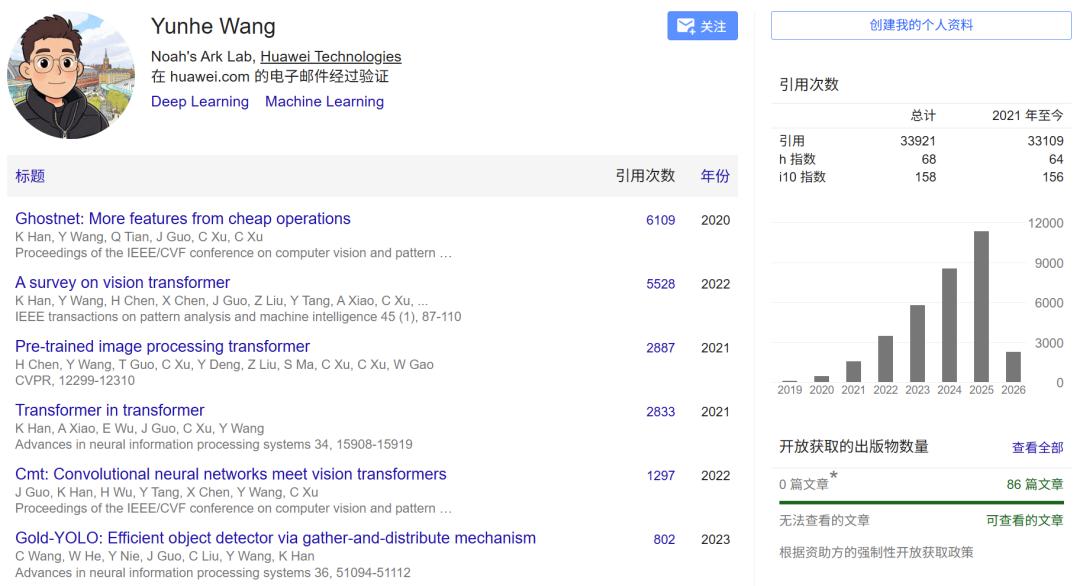

As a senior researcher and engineer, Wang Yunhe has an impressive academic record: his Google Scholar citation count has exceeded 33,000.

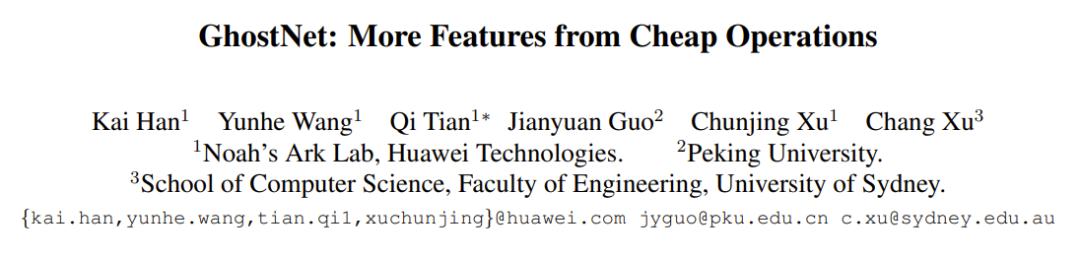

His most-cited paper is GhostNet, a neural network architecture for edge devices developed in collaboration with Han Kai and others.

In this CVPR 2020 paper, Han Kai, Wang Yunhe, and their co-authors proposed the Ghost module, which aims to generate more feature maps through inexpensive operations. Starting from a set of original feature maps, the authors apply a series of linear transformations to produce many “ghost” feature maps that extract the required information from the original features at very low cost. The Ghost module is plug-and-play: stacking Ghost modules yields a Ghost bottleneck, and Ghost bottlenecks are then used to build the lightweight neural network GhostNet.
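To make the cost argument concrete, the sketch below counts multiply-adds for an ordinary convolution and for a Ghost-style layer that produces the same number of output maps. It is a minimal illustration under stated assumptions, not the authors’ implementation: the function names, the ratio `s`, the cheap-operation kernel size `d`, and the example layer sizes are all hypothetical choices made here, and the cheap operation is assumed to be one small per-channel filter for each ghost map.

```python
def conv_flops(c_in, n_out, k, h_out, w_out):
    """Multiply-adds for an ordinary k x k convolution producing n_out maps."""
    return n_out * h_out * w_out * c_in * k * k


def ghost_flops(c_in, n_out, k, h_out, w_out, s=2, d=3):
    """Multiply-adds for a Ghost-style layer producing the same n_out maps.

    s: each intrinsic map accounts for s output maps (itself plus s - 1 ghosts).
    d: kernel size of the cheap per-channel linear operation (assumed here).
    Assumes n_out is divisible by s.
    """
    m = n_out // s                               # intrinsic feature maps
    primary = m * h_out * w_out * c_in * k * k   # ordinary convolution for m maps
    cheap = (s - 1) * m * h_out * w_out * d * d  # one d x d filter per ghost map
    return primary + cheap


# Illustrative layer: 64 input channels, 128 output maps, 3x3 kernel, 56x56 output.
standard = conv_flops(64, 128, 3, 56, 56)
ghost = ghost_flops(64, 128, 3, 56, 56, s=2, d=3)
print(f"standard: {standard:,} multiply-adds")
print(f"ghost:    {ghost:,} multiply-adds")
print(f"ratio:    {standard / ghost:.2f}")  # prints 1.97 for these sizes
```

With these assumed sizes the cheap operations add only a small fraction to the primary convolution, so the overall saving is close to the factor `s`.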

On the ImageNet classification task, GhostNet achieved a Top-1 accuracy of 75.7% at a similar computational cost, higher than the 75.2% of MobileNetV3.

Paper address: https://arxiv.org/abs/1911.11907

His Google Scholar paper list also shows that Wang Yunhe has produced notable results in cutting-edge computer vision directions such as Vision Transformer. The review article “A survey on vision transformer”, which he co-authored, has been cited 5,528 times and is an important reference in the field.

Two other important studies from him and his team, “Pre-trained image processing transformer” and “Transformer in transformer”, each have citation counts approaching 3,000. This line of work systematically optimized the computational efficiency of the self-attention mechanism in visual feature extraction and helped promote the adoption of the Transformer architecture in visual tasks.

As an excellent answerer in the deep learning topic on Zhihu, Wang Yunhe also often shares his views on topics such as the core architecture of AI. For example, on January 24 this year, he published an article titled “A Deep Thinking on Diffusion Language Models” on his personal Zhihu account, in which he explored in depth the potential and technical bottlenecks of diffusion language models in text generation.

On the mainstream technical routes of the large-model era, Wang Yunhe offered his own views. He recalled discussing “What is the next step for Transformer” many years ago and pointed out that “Transformer is a paradigm obtained through long-term accumulation from quantitative to qualitative changes”. Regarding the much-discussed diffusion models, he believes that “diffusion itself is not the next step for Transformer, but in terms of the modeling method, it may have the potential to have a great impact on autoregression”.

In this article, he laid out 10 core challenges and optimization directions that diffusion language models currently face, including inference-efficient architecture design, the search for more suitable vocabularies, and better optimization paradigms. On model design in particular, Wang Yunhe emphasized that “the most ideal diffusion model should not follow the existing paradigm of AR and should be structured like human thinking”.

He proposed that future AI model design can learn from the multi-scale nature of human thinking and explore a vocabulary structure with hierarchical connections. In addition, integrating discrete diffusion models with vision, language, and action modules in scenarios such as embodied intelligence may lead to a more unified model structure and training paradigm.

In the recently published paper “DLLM Agent: See Farther, Run Faster”, led by Wang Yunhe, his team examined a basic but often overlooked question: when the agent framework, supervised data, and interaction budget are exactly the same, how does the generation paradigm of the underlying language model (diffusion-based DLLM versus autoregressive AR) affect an agent’s planning, tool-use behavior, and overall decision-making trajectory?

Paper address: https://arxiv.org/abs/2602.07451

The DLLM agent proposed in the paper achieves more efficient global planning. At comparable final accuracy, it runs faster end to end, uses fewer interactions and tool calls, and shows less redundancy and backtracking.

Conclusion

Wang Yunhe served at Huawei for more than 8 years, and his departure has drawn considerable attention across the industry. He rose from intern to director of the Noah’s Ark Laboratory and led a number of foundational algorithm innovations with international influence.

Given his thinking on diffusion language models and on unified architectures for general artificial intelligence, his next career move will be worth watching for the entire industry.

This article is from the WeChat official account “Machine Intelligence” (ID: almosthuman2014). Author: Someone Concerned about AI. Republished by 36Kr with authorization.