The uptake of AI use cases in the financial services sector has

been faster than regulators had initially envisaged. In financial

services, AI is being used to: optimise internal processes;

transform customer relationships; target marketing and offer

services; assist in credit assessments; automate trading systems;

detect patterns and make future value predictions; support

know-your-customer (KYC) and due diligence processes; monitor

risks, regulatory compliance and employees; shed light on dark data

for underwriting and collection purposes; report data to

regulators; and more. Some firms are also already investing in

quantum computing projects, in anticipation of quantum

viability.

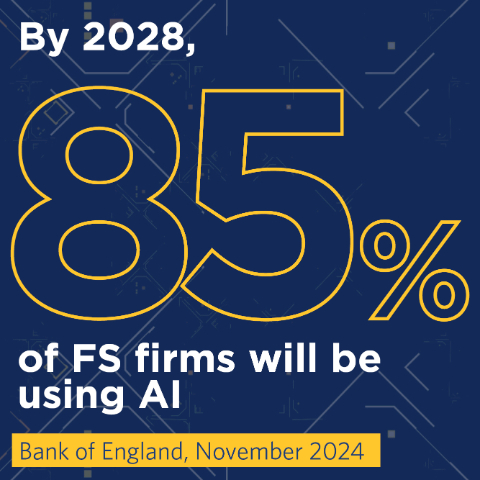

In November 2024, the Bank of England reported that more than 75% of

financial services firms were using AI systems. Over 50% of all

financial services AI use cases have some degree of automated

decision-making: around 24% are semi-autonomous (designed to

involve human oversight for critical or ambiguous decisions) and, as

of November 2024, only 2% were fully autonomous.

Research from Russell Reynolds suggested that

in the first half of 2025 around 91% had taken steps towards

implementing generative AI in their workflow.

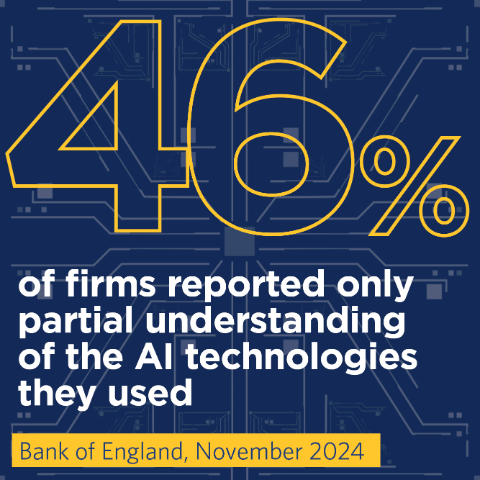

Against this background of increasing take-up, a third of

financial services AI use cases are third-party implementations

– this poses challenges in terms of transparency, governance

and control, in addition to a degree of concentration risk at the

level of cloud, model and data providers. More concerningly, only

34% of firms using AI felt they had a complete understanding of the

technologies they used; 46% reported only partial understanding.

Nonetheless, 40% of firms are using AI to optimise their own

internal processes.

Regulatory approaches

The regulators, often with strong encouragement from

governments, are taking steps to ensure firms are empowered to

embrace automation and capitalise on the many opportunities it

creates. The vision is that AI will help to enhance growth, market

integrity and consumer outcomes.¹ Most regulators seek

to foster the development of these systems through the provision of

testing environments for AI that simulate conditions close to the

real world, as well as other forms of private/public collaboration.

Unsurprisingly, however, the regulators also expect firms to ensure

appropriate management of the risks that the use of this technology

may introduce or amplify.

“Markets remain complex, nuanced and deeply human. The value of

skilled, well-trained analysts – those who understand market

behaviour, context and intent – is not diminished by

technology. If anything, it’s amplified.”

Dominic Holland, FCA Director of Market Oversight, November 2025

While a few jurisdictions have sought to adopt

technology-specific legislation and rules, many jurisdictions and

regulators (for example, the UK and Hong Kong) aim to take a

technology-neutral or technology-agnostic stance, welcoming the use of

‘good technology’ to support and enhance decision-making

but only as long as firms maintain robust governance, oversight and

testing and comply with existing regulatory requirements. The

result is that existing regulatory requirements – many of

which were originally developed in a more analogue context –

now apply in an increasingly digitalised operating environment.

That said, regulators are also beginning to develop guidance and

best practices on the basis of their growing experience of the

range of AI use cases being deployed by the firms they

regulate.

Nonetheless, as Dominic Holland, Director of Market Oversight

at the UK Financial Conduct Authority (FCA), recently said, AI

cannot replace human judgement. Interestingly, he noted that

regulations are written with individuals in mind and stressed that

the regulators’ experience is in interrogating, issuing

directions to, and taking action against individuals, including

individuals within a body corporate.

Some regulators, like the Hong Kong Monetary Authority (HKMA),

require firms to allow customers to opt out or request human

intervention in customer-facing applications using GenAI, and the

Hong Kong Securities and Futures Commission (SFC) imposes stricter

requirements on high-risk activities like the provision of

investment recommendations, investment advice and investment

research using GenAI models.

The Republic of Korea and Vietnam are progressing detailed and

comprehensive requirements governing the use of AI generally,

rather than focusing on financial services specifically; Indonesia,

Thailand and the Philippines are also considering a comprehensive

legislative approach, and the UK too is contemplating possible

cross-sector legislation. The Australian government has, for now at

least, rejected a proposal for stand-alone legislation.

On the other hand, having finalised AI-specific legislation, the

EU is now delaying implementation of requirements for ‘high-risk’

applications until harmonised standards and support tools

are in place and is considering targeted measures to simplify the

EU AI Act to help foster growth and innovation. In common with

approaches elsewhere, EU AI regulation stresses the need for

appropriate human oversight measures in addition to adequate risk

assessment and mitigation systems, quality of datasets,

transparency, monitorability, security and robustness.

Fundamentally, AI will continue to be a focus for regulatory,

supervisory and use case development through 2026 and beyond.

Footnote

¹ The HKMA, for example, notes potential uses such as the

identification of vulnerable customers, and those who may need more

information or clarifications to better understand product

features, risks, and terms and conditions, and the issuance of

fraud alerts to customers engaging in transactions with potentially

higher risks: see HKMA Circular on customer protection in

respect of the use of Generative Artificial Intelligence, 19 August

2024 (HKMA GenAI Circular).
