April 7, 2026

By Karan Singh

Tesla recently published a highly detailed patent that explains the inner workings of its vision-based occupancy network. The patent is titled Artificial Intelligence Modeling Techniques For Vision-based Occupancy Determination and was officially published on March 12, 2026.

Credited to a team of engineers that includes Ashok Elluswamy, the document provides a deep dive into how Tesla uses artificial intelligence to understand the physical world without relying on radar or LiDAR.

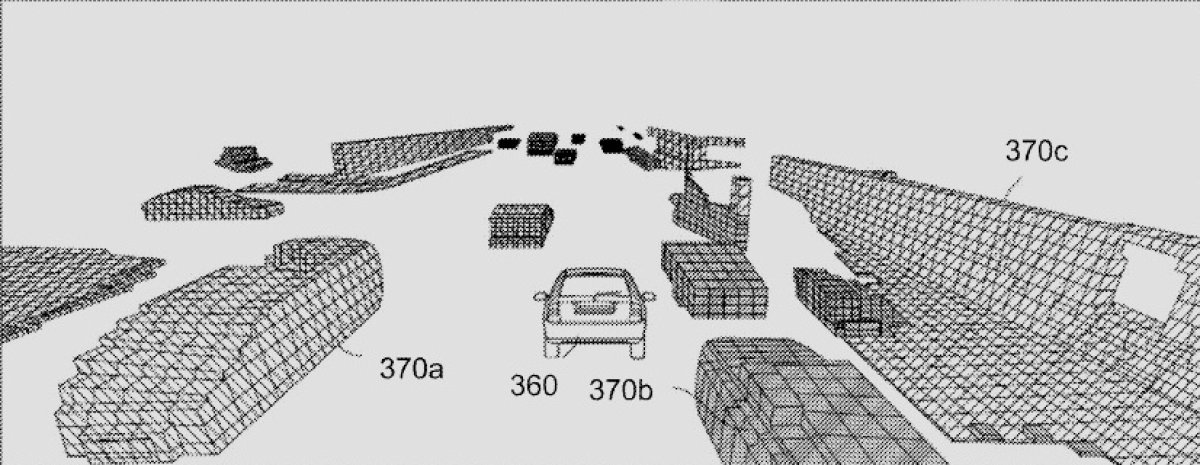

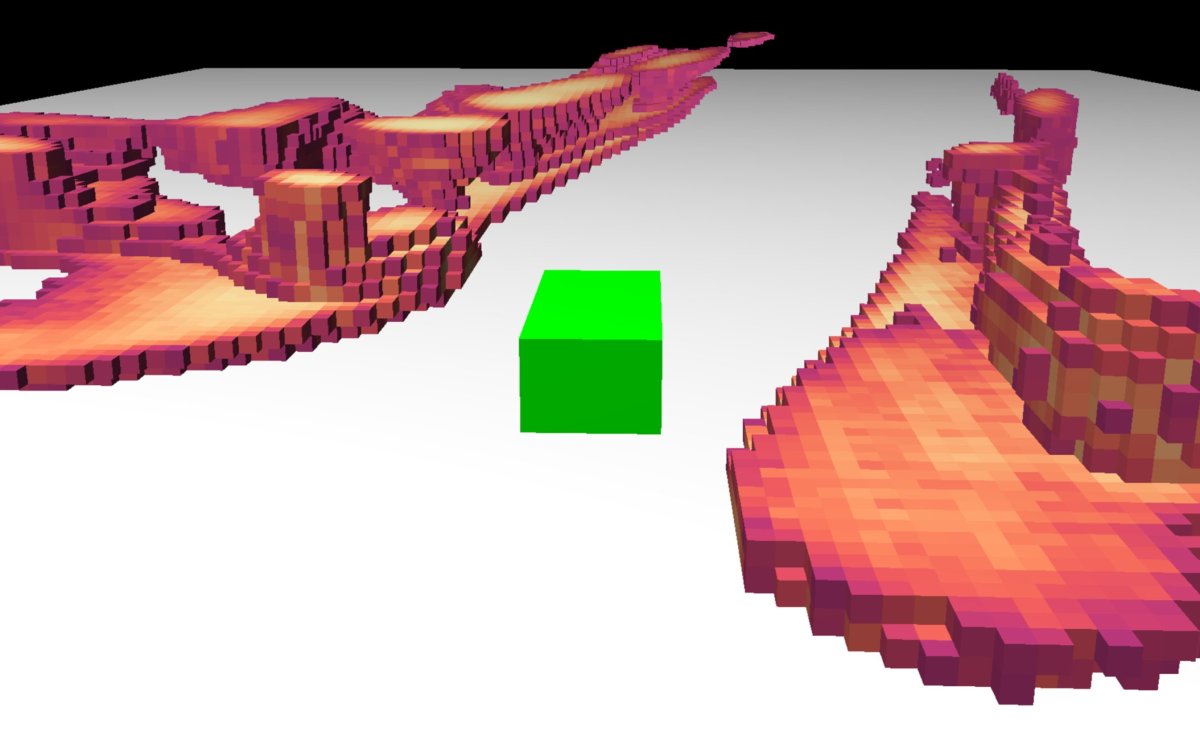

Understanding the Voxel Grid

The core of Tesla’s occupancy network revolves around voxels. A voxel is essentially a three-dimensional pixel that represents a specific point within a volumetric grid surrounding the vehicle. To build this grid, the artificial intelligence model ingests image data from the eight exterior cameras of the vehicle. The system then executes the model to predict whether each voxel is occupied by an object having mass.
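To make the idea of a voxel grid concrete, here is a minimal sketch of how a point in space could be mapped to a voxel and checked for occupancy. It is our own illustration, not code from the patent: the function names, the sparse `set` storage, and the vehicle-centered coordinate frame are all assumptions, and the occupancy values here are entered by hand rather than predicted by a neural network.

```python
import math

# Edge length in metres. 0.33 m is the default size reported in the patent;
# everything else in this sketch is a hypothetical illustration.
VOXEL_SIZE_M = 0.33

def point_to_voxel(x, y, z, voxel_size=VOXEL_SIZE_M):
    """Map a point (metres, vehicle-centred frame) to integer voxel indices."""
    return (math.floor(x / voxel_size),
            math.floor(y / voxel_size),
            math.floor(z / voxel_size))

# Sparse grid: only voxels marked as occupied are stored.
occupied = set()

def mark_occupied(x, y, z):
    """Record that the voxel containing this point is occupied."""
    occupied.add(point_to_voxel(x, y, z))

def is_occupied(x, y, z):
    """Return True if the voxel containing this point is marked occupied."""
    return point_to_voxel(x, y, z) in occupied

mark_occupied(5.0, 0.2, 0.5)
same_voxel = is_occupied(5.1, 0.1, 0.4)   # falls in the same 33 cm cell
other_voxel = is_occupied(8.0, 0.0, 0.5)  # a different, unmarked cell
```

Using `math.floor` rather than integer truncation keeps the mapping consistent for negative coordinates, such as points behind or to one side of the vehicle.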

Because manually labeling millions of 3D data points would be impossibly time-consuming, the patent notes that Tesla relies heavily on unsupervised methods to train these models at scale.

Variable Resolution and Sub-Voxels

One of the most interesting details revealed in the patent is how Tesla manages computing power by dynamically adjusting the size of these voxels. The default voxel measures 33 centimeters on each side. This size is generally acceptable for objects located far away or outside of the immediate driving surface.

However, FSD can reduce the voxel size to 10 centimeters for areas that are occupied and within a threshold distance from the vehicle. This allows for much higher granularity where it matters most. The neural networks can even predict partial occupancy by dividing occupied spaces into smaller sub-voxels.
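The sizing rule described above can be sketched in a few lines. The 33 cm and 10 cm figures come from the patent as reported here; the 20-meter threshold is purely an assumption on our part, and the function names are hypothetical. The second function shows one simple way to think about partial occupancy: the fraction of sub-voxels that are filled.

```python
DEFAULT_VOXEL_M = 0.33   # default voxel size reported in the patent
FINE_VOXEL_M = 0.10      # refined voxel size reported in the patent
NEAR_THRESHOLD_M = 20.0  # ASSUMPTION: the actual threshold distance is not stated

def voxel_size_for(distance_m, occupied):
    """Use fine voxels only for occupied space close to the vehicle."""
    if occupied and distance_m <= NEAR_THRESHOLD_M:
        return FINE_VOXEL_M
    return DEFAULT_VOXEL_M

def partial_occupancy(sub_voxel_flags):
    """Fraction of a voxel's sub-voxels that are occupied (0.0 to 1.0)."""
    flags = list(sub_voxel_flags)
    return sum(flags) / len(flags)

near_car = voxel_size_for(5.0, occupied=True)       # close and occupied
far_building = voxel_size_for(50.0, occupied=True)  # occupied but far away
# Two of eight sub-voxels filled, e.g. the edge of a curved bumper.
quarter_full = partial_occupancy([True, True, False, False,
                                  False, False, False, False])
```

The point of the rule is that compute is spent where it matters: empty or distant space stays coarse, while nearby obstacles get finer cells.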

This lets FSD capture the shape of a curved object more accurately. The analytics server can also use trilinear interpolation to estimate the occupancy status of any specific point within a voxel.
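Trilinear interpolation is a standard technique, so it can be shown directly. The sketch below blends eight corner values of a voxel into a single estimate at an interior point. Treating the corner values as occupancy probabilities, and the `corners[i][j][k]` layout, are our assumptions; the patent as described here does not specify how the values are stored.

```python
def lerp(a, b, t):
    """Linear interpolation between a and b for t in [0, 1]."""
    return a + (b - a) * t

def trilinear(corners, fx, fy, fz):
    """Interpolate inside a voxel.

    corners[i][j][k] is the value at corner (i, j, k), with i, j, k in {0, 1}
    along x, y, z. fx, fy, fz are fractional positions in [0, 1].
    """
    # Collapse the x axis: four edges become four values.
    x00 = lerp(corners[0][0][0], corners[1][0][0], fx)
    x10 = lerp(corners[0][1][0], corners[1][1][0], fx)
    x01 = lerp(corners[0][0][1], corners[1][0][1], fx)
    x11 = lerp(corners[0][1][1], corners[1][1][1], fx)
    # Collapse the y axis, then the z axis.
    y0 = lerp(x00, x10, fy)
    y1 = lerp(x01, x11, fy)
    return lerp(y0, y1, fz)

# Only the far corner (1, 1, 1) is occupied.
one_corner = [[[0.0, 0.0], [0.0, 0.0]],
              [[0.0, 0.0], [0.0, 1.0]]]
centre_value = trilinear(one_corner, 0.5, 0.5, 0.5)
```

At the exact center of the voxel, each corner contributes one eighth of the result, so a single occupied corner yields 0.125.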

Temporal Fusion and 3D Semantics

Tesla’s AI does not just look at static frames in isolation. The artificial intelligence model uses a transformer to aggregate the 2D image data into a unified 3D representation. It then fuses this current 3D space with representations from previous timestamps. This combination of spatial and temporal data allows the network to calculate occupancy flow, which describes the velocity of moving voxels.
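To illustrate what occupancy flow represents, here is a simple finite-difference sketch: it estimates velocity from how far a cluster of occupied voxels moved between two timestamps. This is not how the patent's network computes flow (the model predicts it from fused features); it is only our illustration of the quantity itself, and the function names are hypothetical.

```python
def centroid(voxels, voxel_size=0.33):
    """Mean position in metres of a cluster of (i, j, k) voxel indices.

    Positions are taken at voxel corners; the constant half-voxel offset to
    the voxel centre cancels out when two centroids are subtracted.
    """
    n = len(voxels)
    return tuple(voxel_size * sum(v[axis] for v in voxels) / n
                 for axis in range(3))

def flow(prev_voxels, curr_voxels, dt_s, voxel_size=0.33):
    """Velocity in m/s from the centroid shift between two timestamps."""
    p = centroid(prev_voxels, voxel_size)
    c = centroid(curr_voxels, voxel_size)
    return tuple((ci - pi) / dt_s for ci, pi in zip(c, p))

# The same two-voxel object, three voxels further along x after 0.1 s.
prev = [(10, 0, 0), (11, 0, 0)]
curr = [(13, 0, 0), (14, 0, 0)]
velocity = flow(prev, curr, dt_s=0.1)
```

Three 33 cm voxels in a tenth of a second works out to roughly 9.9 m/s along x, or about 36 km/h.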

Finally, FSD applies 3D semantic data to identify what the object actually is. It can distinguish whether a group of occupied voxels represents a moving car, a static building, or a street curb. The system is designed to prioritize certain semantic shapes. For instance, a moving vehicle near the ego vehicle will be analyzed much more thoroughly than a static building located far off the roadway.
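The prioritization described above could look something like the following sketch. The scoring formula, the tenfold boost for moving objects, and the class and field names are all our assumptions for illustration; the patent as reported here says only that nearby moving objects get more attention than distant static ones.

```python
from dataclasses import dataclass

@dataclass
class DetectedObject:
    label: str         # semantic class, e.g. "car" or "building"
    distance_m: float  # distance from the ego vehicle
    moving: bool       # whether occupancy flow shows motion

def priority(obj):
    """Hypothetical score: closer objects rank higher, moving ones higher still."""
    score = 1.0 / (1.0 + obj.distance_m)
    if obj.moving:
        score *= 10.0  # ASSUMPTION: arbitrary boost for moving objects
    return score

objects = [
    DetectedObject("building", distance_m=120.0, moving=False),
    DetectedObject("car", distance_m=8.0, moving=True),
    DetectedObject("curb", distance_m=2.0, moving=False),
]
ranked = sorted(objects, key=priority, reverse=True)
ranked_labels = [obj.label for obj in ranked]
```

With these example values the moving car ranks first, the nearby curb second, and the distant building last.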

Powering Vehicles and Optimus

All of this data is continuously aggregated into a queryable dataset. FSD can constantly query this dataset to receive occupancy statuses and make real-time navigational decisions. Additionally, this same dataset is used to generate the 3D environmental map displayed on the user interface inside the vehicle.
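A queryable dataset of this kind can be pictured as a lookup table keyed by voxel. The sketch below is our own simplified illustration, assuming a dictionary keyed by voxel indices and a small record per voxel; the class names, fields, and methods are hypothetical and not taken from the patent.

```python
import math
from dataclasses import dataclass

@dataclass(frozen=True)
class VoxelState:
    occupied: bool
    semantic: str = "unknown"
    velocity_mps: tuple = (0.0, 0.0, 0.0)

class OccupancyDataset:
    """Hypothetical store that a planner or the display could query."""

    def __init__(self, voxel_size=0.33):
        self.voxel_size = voxel_size
        self._voxels = {}

    def _key(self, x, y, z):
        s = self.voxel_size
        return (math.floor(x / s), math.floor(y / s), math.floor(z / s))

    def update(self, x, y, z, state):
        """Store the latest state for the voxel containing this point."""
        self._voxels[self._key(x, y, z)] = state

    def query(self, x, y, z):
        """Return the state of the voxel at this point; unknown space reads as free."""
        return self._voxels.get(self._key(x, y, z), VoxelState(occupied=False))

dataset = OccupancyDataset()
dataset.update(5.0, 0.2, 0.5, VoxelState(True, "car", (9.9, 0.0, 0.0)))
ahead = dataset.query(5.1, 0.1, 0.4)   # same voxel as the update above
empty = dataset.query(30.0, 0.0, 0.0)  # never updated
```

Treating unknown space as free is a simplification for the example; a real system would likely distinguish "free" from "not yet observed."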

While the patent is heavily focused on autonomous vehicles, it confirms that the underlying technology is highly adaptable. The document specifically notes that this exact same vision-based occupancy network can be utilized by a general-purpose, bipedal humanoid robot to navigate various terrains.

April 7, 2026

By Karan Singh

Tesla appears to be accelerating its autonomous ride-hailing ambitions. Observers recently spotted a fleet of approximately 60 Tesla Model Y vehicles at a parking lot in Phoenix, Arizona. A staged fleet of this size suggests that Phoenix is no longer just a testing environment but an active staging ground for a broader Robotaxi rollout.

Rear Camera Washers Confirmed

The most revealing detail about this newly spotted fleet is a hardware modification: every Model Y staged in the parking lot appears to have a dedicated rear camera washer. These vehicles lack the repeater camera washers seen on the Austin fleet, but the rear camera washer is still a Robotaxi-specific change that is not found on consumer vehicles.

In a fully autonomous system, sensor hygiene is critical. A passenger cannot be expected to step out of the vehicle to wipe off a dirty camera lens. By standardizing rear-camera sprayers across its Robotaxi-specific fleet, Tesla is demonstrating that these vehicles are explicitly configured for unsupervised operation under varying environmental conditions.

Scaling Up from Austin

Until now, Tesla has focused its Robotaxi pilot program on Austin, Texas, where a relatively small fleet provides both Supervised and Unsupervised rides within a slowly expanding geofence. The sudden appearance of 60 Robotaxi-ready Model Ys in Phoenix marks a significant expansion into one of the next major cities that Tesla has been targeting.

Deploying a prebuilt fleet of this size may indicate that Tesla has internalized the operational lessons learned in Texas and is ready to expand. Phoenix is expected to be one of the first major markets to come online during the first half of 2026, alongside other Sun Belt cities like Dallas, Houston, Miami, Orlando, Tampa, and Las Vegas. These regions share a common profile of favorable weather, straightforward road geometry, and exceptionally high ride-hailing demand.

Bridging the Gap to Cybercab

While Tesla is actively preparing for the imminent production of its purpose-built Cybercab, the Model Y continues to serve as the backbone of the current Robotaxi network. The fleet currently staged in Phoenix will likely serve a vital dual purpose in the coming months.

Just like the fleet in Austin, this fleet will gather localized mapping and routing data to help tune FSD and Tesla’s Robotaxi control network to Phoenix traffic patterns and to any unusually challenging intersections. That also includes gathering information on all the potential pick-up and drop-off points shown in the Robotaxi App.

Once the Robotaxi network is ready, Tesla can simply flip the switch and begin generating revenue immediately. As Cybercab production ramps up later this year and dedicated units become available at scale, these specialized Model Ys may transition to a support role or be redeployed to open up even more new markets across the country.

April 6, 2026

By Karan Singh

Elon Musk recently confirmed that the highly anticipated FSD v14.3 update is officially in the hands of employee testers and will be rolling out to early-access customers in the near future.

After a series of incremental FSD v14.2 patches focused on stability, the upcoming v14.3 release could be a big jump in FSD capabilities. This could include anything from intelligent parking features and Banish to controlling FSD with your voice.

Let’s take a look at some of the FSD features we’ve been waiting for that could be included in FSD v14.3.

Next-Level Reasoning and Pothole Avoidance

Musk recently updated the FSD v14 roadmap, noting that this specific set of updates is designed to make the vehicle feel almost sentient. While previous versions relied heavily on reacting to immediate obstacles, v14.3 is expected to introduce a massive upgrade in real-time reasoning. The neural network will be able to apply complex logic to edge cases and unpredictable urban environments on the fly.

This upgraded reasoning directly translates to a smoother, safer ride. In the v14.2 update, Tesla introduced major improvements to road debris avoidance. Version 14.3 could take this a step further by introducing advanced pothole detection and avoidance. The vehicle could recognize degraded road surfaces and smoothly adjust its path to protect your tires and suspension without erratic swerving.

In the video below, FSD changes lanes to avoid what was simply a shadow on the road, but this could easily have been a larger pothole.

Fsd 14.2.2.5 swerves for a shadow. I disengaged and reported the issue pic.twitter.com/7bse3vKymK

Elias Martinez (@EliasMartinez), March 20, 2026

Actually Smart Summon for Cybertruck

Tesla’s Actually Smart Summon feature has been a game-changer for retrieving vehicles from crowded parking lots, but the angular Cybertruck has notoriously been left out of the fun.

With the recent end of the NHTSA investigation into Actually Smart Summon, we expect the first version of Summon to finally roll out for Cybertruck owners. In addition, we’re hoping to see some improvements to Smart Summon’s logic based on improvements to Tesla’s Robotaxi FSD builds for low-speed maneuvering in tight spaces.

That means better reliability, smoother navigation around pedestrians, and more decisive routing in complex parking lots.

We’re hoping that these improvements arrive in FSD 14.3.

Banish and Smarter Parking

Perhaps the most-requested feature that could arrive in v14.3 is Banish. Serving as the ultimate evolution of Autopark, Banish would allow a driver to step out of the vehicle at the entrance of their destination. The car could then autonomously navigate the parking lot, locate a valid space, and park itself.

To support this, Tesla is likely overhauling its parking logic. Early versions of Autopark occasionally struggled with contextual spot selection, sometimes attempting to use handicap spaces or failing to pull into designated Supercharger stalls.

FSD v14.3 may bring improved parking spot selection, ensuring the vehicle intelligently identifies legally available spots.

While we have been waiting for Banish for years, we’ll likely see improvements to parking and parking spot selection before its eventual release.

Vehicle-to-Fleet Communications

One of the most exciting potential additions to FSD is Vehicle-to-Fleet Communication. This feature was originally announced for the v12.4 release but never materialized.

This technology would allow Teslas to share highly localized, real-time data with the rest of the fleet. If one Tesla encounters a severe pothole, a temporary road closure, or a sudden traffic hazard, it would instantly alert the rest of the fleet. Other Teslas approaching the same area would automatically receive this information and adjust their speed or routing before the hazard is even visible on their cameras.
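As a rough illustration of how hazard sharing like this could work, the sketch below keeps a shared list of reported hazards and lets an approaching vehicle ask for anything within a given radius. Tesla has not published how such a system would be built, so every name here, the 500-meter default radius, and the in-memory list standing in for a fleet service are assumptions. The distance calculation uses the standard haversine formula.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000  # mean Earth radius

@dataclass
class Hazard:
    kind: str   # e.g. "pothole" or "road_closure"
    lat: float  # degrees
    lon: float  # degrees

def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two lat/lon points."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))

class FleetHazardBoard:
    """Hypothetical shared board; a real system would be a networked service."""

    def __init__(self):
        self._hazards = []

    def report(self, hazard):
        """Called by the vehicle that encounters the hazard."""
        self._hazards.append(hazard)

    def nearby(self, lat, lon, radius_m=500.0):
        """Called by approaching vehicles to fetch hazards within radius_m."""
        return [h for h in self._hazards
                if haversine_m(lat, lon, h.lat, h.lon) <= radius_m]

board = FleetHazardBoard()
board.report(Hazard("pothole", 33.4484, -112.0740))
close_by = board.nearby(33.4490, -112.0740)    # well under 500 m away
far_away = board.nearby(33.5484, -112.0740)    # roughly 11 km away
```

A vehicle receiving a non-empty result could slow down or reroute before the hazard is visible on its own cameras, which is the behavior described above.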

Tesla is likely already employing a similar strategy for routing its Robotaxis, but seeing this logic come to the fleet at large would be another big step towards true Unsupervised autonomy.

Expanded Front Camera Cleaning

As Tesla pushes closer to unsupervised autonomy, keeping the vehicle’s cameras clean is critical. Last year, Tesla introduced an improved cleaning technique for the front-facing cameras, but it has been limited to the newer 2026+ Model Y variants.

We could see Tesla expand software support to additional vehicle variants.

While the focus of FSD 14.3 is on improved reasoning, we’re likely to see the introduction of other FSD features we’ve been waiting on, such as improved parking spot selection.

FSD v14.3 is shaping up to be one of the most important FSD updates in recent memory. We will be keeping a close eye on the early-access rollout that’s expected to happen over the next week or two.