This large, multisite randomized study has shown that AI prioritization of CXRs did not improve the speed of the lung cancer diagnostic pathway. This means that this element of AI functionality, which introduces additional complexity and cost to AI installation and may add time to clinical workflows, is not required to accelerate the lung cancer diagnostic pathway. A detailed health economics evaluation will be published separately, but costs are both considerable and avoidable, given the results reported in this study. Several other important findings from the secondary outcomes and the exploratory analyses are discussed below.

CXR, often the first test for patients with lung cancer referred for diagnosis, is the most frequently performed imaging investigation, with more than 7 million performed annually in England, including ~2.2 million CXRs referred from primary care alone20. Following either an abnormal CXR or presentation in a high-risk patient, the NOLCP mandates rapid progression to CT. Current (April 2025) median reporting times for CXRs referred from primary care (2 days) and waiting times for CT chest (15 days) do not meet the NOLCP standards (72 h from abnormal CXR to CT)20. The relatively low number of CT scanners per capita21, as well as a chronic shortage of consultant radiologists and radiographers and an ever-increasing workload, may explain the lack of compliance.

One potential strategy to optimize progression from CXR to CT and bring forward the diagnosis is to use an AI tool to prioritize CXRs with suspicious findings for urgent reporting and improve the accuracy of interpretation, thereby selecting patients most likely to benefit from a same-day CT scan. However, this approach needs careful evaluation, as AI might fail to increase accuracy or speed and instead create extra workload, for example, through FP findings or altered clinician behavior. Vigilance fatigue is of particular concern, that is, when the review of all CXRs flagged as abnormal either desensitizes the reader to potential subsequent FN CXRs labeled as normal by the AI or causes the reader to lose trust in the AI’s accuracy (the ‘cry wolf’ phenomenon)22. This is one reason why NICE has not recommended any AI products for CXR interpretation in England23.

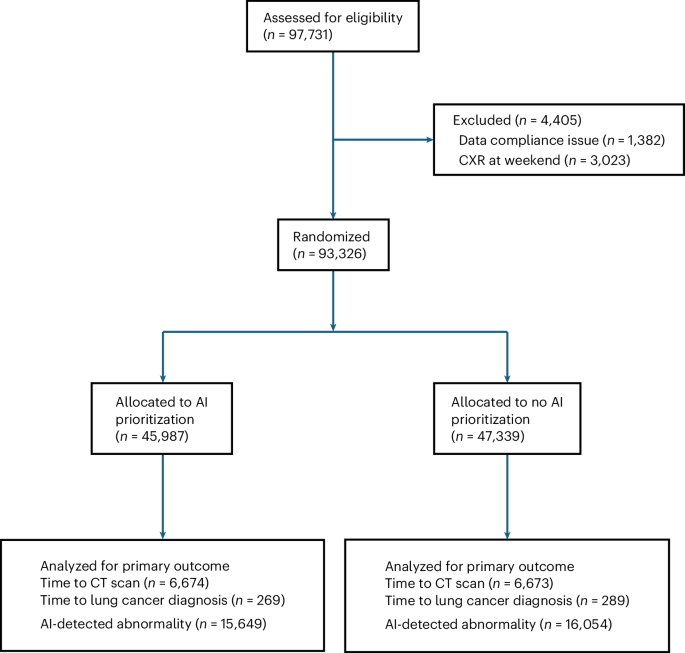

The LungIMPACT study has examined both the above elements of CXR use through its primary outcomes and several other aspects with the secondary outcomes, including analysis of discordances and the likelihood of a cancer diagnosis with the addition of AI. It has been shown conclusively that AI prioritization had no impact on time to CT or time to lung cancer diagnosis. In the secondary outcome analysis, further steps of the pathway were examined; the study has quantified the number of FPs and FNs and shown the number of lung cancers expected to be diagnosed (Tables 3 and 4). Exploratory analyses also showed differences in time to CT and time to lung cancer diagnosis across the different combinations of discordance (Table 5).

A significant reduction in the median time from CXR acquisition to report was observed, from 47 h to 34.1 h; however, no significant differences were detected in any of the timings measured as primary or secondary outcomes (Table 2). The lack of improvement with AI prioritization is likely because, although the time to CXR report was shortened, it was not sufficiently reduced in the prioritization arm to influence the clinical pathway in the same way as immediate radiographer triage did in our previous study13. This may, in turn, be explained by the limited capacity within Trusts to both report immediately and organize downstream tests and appointments, even though this is a recommendation in the NOLCP. However, this finding could not have been predicted with certainty because our previous study showed a marked effect on time to diagnosis under similar service constraints, albeit on a smaller scale. It could be argued that a pathway change should have been mandated in the prioritization arm to ensure all prioritized CXRs were reviewed by a radiologist before the patient left the department. However, this represents a pathway change that may have an impact on its own and has little to do with AI-based prioritization (see review of other studies below). Furthermore, as shown in Extended Data Fig. 2, immediate review of the CXR was permitted, according to local procedure, in both arms of the study. This ensured that AI prioritization was tested rather than the effect of primary pathway change.

A large number of discordance reviews were completed (26,505), representing 28.4% of the randomized CXRs and 94% of all discordances. Although results are presented for eight classifications, the ‘other’ category includes an additional nine findings (none of which were relevant to lung cancer). Expert radiology review was considered the gold standard. Compared with radiologists, AI FP findings comprised 11.6% of the total across all categories. Some of these will be from the same CXR (the ratio of FP plus FN findings to all reviewed CXRs was 33,910/28,261, or 1.2:1). However, feedback from reporters indicated that most of the FPs are rapidly dismissed, so the potential for extra workload will depend on the user becoming familiar with the strengths and weaknesses of the AI. An important finding from our study relates to the number of cancers subsequently diagnosed in the cohort with a positive AI finding that was dismissed by the radiologist or reporting radiographer and the apparent association with time to diagnosis. We are aware of only a few cases in which there was a definite error on the part of the reporter after review, and this merits a detailed evaluation of the identified cases to establish whether they can be appropriately managed, perhaps using a combination of baseline risk24 and CXR AI findings. It is also important to establish the reason for FNs. These were relatively few in number, with over half in the ‘other’ and cardiomegaly categories (3,366/5,777). However, for the nodule category, with only 343 AI FNs, 20 cancers were diagnosed, representing 5% of the cancers in that category (Table 3).
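The ratios quoted in this paragraph can be reproduced directly from the reported counts. The following is a minimal Python sketch; the variable names are illustrative and not taken from the study, and only counts stated in the text are used.

```python
# Counts as reported in the text; variable names are illustrative only.
fp_plus_fn_findings = 33_910        # AI FP plus FN findings
cxr_denominator = 28_261            # CXR denominator quoted alongside the ratio
fn_other_and_cardiomegaly = 3_366   # AI FNs in the 'other' and cardiomegaly categories
fn_total = 5_777                    # all AI FNs

# Discordant findings per CXR (reported as 1.2:1).
findings_per_cxr = fp_plus_fn_findings / cxr_denominator

# Share of FNs in the 'other' and cardiomegaly categories (reported as "over half").
fn_share = fn_other_and_cardiomegaly / fn_total

print(f"{findings_per_cxr:.2f}")  # 1.20
print(f"{fn_share:.1%}")          # 58.3%
```

A ratio above 1 is consistent with the statement that some discordant findings come from the same CXR.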

A major strength of the trial is its randomized controlled design, which does not require obtaining individual consent from participants. This meant minimal influence on the normal clinical pathway and more equitable inclusion of groups underrepresented in research. Information provided in the radiology departments indicated that participants could opt out of the study; however, only one participant did so. In addition, the trial was multicentre, encompassing NHS hospitals of different sizes and catchment demographics. Automated data acquisition followed by manual verification is another strength of the study, as is the independent analysis of the results, with no influence from either the AI developers or the investigators. Another major strength is the number of discordance reviews completed by expert radiologists and reporting radiographers. This has provided perhaps the largest reliable dataset on this aspect. Review/recall methodology is a form of radiology peer review, advocated as an effective method of quality assurance25,26,27,28,29, but it is seldom, if ever, done on this scale.

A strength of the trial is also the use of two clinically meaningful, real-world, primary outcomes: time to lung cancer diagnosis and time to CT. Both are highly relevant to the goal of achieving earlier diagnosis in lung cancer, but the former is more dependent on the many steps involved in the pathway. In addition, secondary outcomes provided insight into other potential impacts, including the number of lung cancer diagnoses, the stage at diagnosis and the number of urgent referrals, which in our previous work showed trends towards being more favorable in the immediate reporting arm, but with too few cancers to allow a statistically significant result (n = 49)13.

A limitation of the study is that the primary outcomes evaluated only the impact of AI prioritization, not the presence or absence of AI in the clinical workflow. This was necessary because of the study’s nonconsenting nature. Consenting would have been impractical and could itself have influenced the primary outcome through greater-than-normal scrutiny of imaging within the context of the busy NHS. Furthermore, the use of AI, rather than no use at all, could improve triage quality and potentially the speed of diagnosis. However, it would likely have less influence on CT requests unless the AI were highly accurate. By choosing time to CT as an additional primary outcome, we can be more confident that a comparison of AI use versus no AI would not show a meaningful difference.

A limitation is the use of a single AI product, which will inevitably have different performance characteristics from others. This issue is likely to persist as algorithms are updated. However, the fact that only prioritization was tested here means differences in AI accuracy had less effect on the coprimary outcomes than on the discordances.

A further limitation of the study was the difficulty in identifying the best way to measure time to CT. Including all CTs was necessary to ensure that any potential adverse effect of AI prioritization, such as ‘reassurance’ from a normal AI finding, was captured. However, we found that many patients having CXRs go on to have CTs that may have no relationship to the CXR, for example, due to subsequent respiratory assessment or emergency department attendance, even many days after the first referral. While this finding is of interest in itself, it may have diluted this primary outcome by adding many CTs that were not relevant to the hypothesis. This explains why the time to CT, for all downstream scans, was so long (indeed longer than the time to lung cancer diagnosis). We therefore had to complete restrictive sensitivity analyses using the code, where used, signifying that a CT was triggered and also by limiting the time after CXR to less than 14 days, having determined that these CTs were likely related to the initial CXR. This was the case even when the CXR was normal, but the risk remained high enough to warrant referral for subsequent CT. Despite this limitation, the results clearly show no difference in time from CXR to CT according to prioritization day across all analyses, each of which included a more than adequate number of observations (Table 3).
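The effect of these restrictions on a time-to-CT summary can be illustrated with a short Python sketch. This is not the study's analysis code: the `Episode` record, its field names, the `median_days_to_ct` helper and the example data are all hypothetical, and only the two restrictions described above (a CT-triggered code and a window of less than 14 days) are taken from the text.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import List, Optional


@dataclass
class Episode:
    """Hypothetical record linking one CXR to its first downstream CT, if any."""
    cxr_time: datetime
    ct_time: Optional[datetime] = None
    ct_triggered_by_cxr: bool = False  # assumed flag standing in for the 'CT triggered' code


def median_days_to_ct(
    episodes: List[Episode],
    max_days: Optional[float] = None,
    triggered_only: bool = False,
) -> Optional[float]:
    """Median CXR-to-CT interval in days, optionally restricted as in a sensitivity analysis."""
    intervals = []
    for e in episodes:
        if e.ct_time is None:
            continue  # no downstream CT recorded
        if triggered_only and not e.ct_triggered_by_cxr:
            continue  # keep only CTs coded as triggered by the CXR
        days = (e.ct_time - e.cxr_time) / timedelta(days=1)
        if days < 0:
            continue  # CT preceded the CXR, so it is not downstream
        if max_days is not None and days >= max_days:
            continue  # keep only CTs strictly inside the window
        intervals.append(days)
    return median(intervals) if intervals else None


# Invented example data, for illustration only.
t0 = datetime(2024, 1, 1, 9, 0)
episodes = [
    Episode(t0, t0 + timedelta(days=2), ct_triggered_by_cxr=True),
    Episode(t0, t0 + timedelta(days=10)),
    Episode(t0, t0 + timedelta(days=60)),  # likely unrelated to the CXR
    Episode(t0),                           # no CT at all
]

all_cts = median_days_to_ct(episodes)
within_14 = median_days_to_ct(episodes, max_days=14)
triggered = median_days_to_ct(episodes, triggered_only=True)
```

In this invented example, the single late CT pulls the unrestricted median up to 10 days, whereas the 14-day window gives 6 days and the triggered-only restriction gives 2 days, which mirrors the dilution described above.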

Another limitation relates to the discordance reviews, which have been reported according to differences between the AI flag classification and the final report. Because reporters had the AI available at the time, we cannot determine how many findings would be independently found by the AI or whether the human would have found something without AI assistance. However, the purpose of the discordance review was merely to confirm that the reports were indeed discordant, and this is what is presented. There were a small number of discordances in which the AI was correct and the reporter incorrect; these have not yet been fully quantified or analyzed. These cases, together with a detailed analysis of downstream outcomes, are the subject of a further project.

In England, there has been a phased implementation of lung cancer screening for people aged 55 to 74 at high risk of developing lung cancer. From Table 1 and Extended Data Table 1, the cancer rate per patient can be calculated, demonstrating that University College London Hospital, which has an established screening program, had a rate of 0.38%, whereas University Hospitals of Birmingham, which commenced screening in 2022, had a rate of 0.53%. However, the rate for University Hospitals of Leicester (without a program) and Nottingham University Hospitals (program started in 2023) was 0.7%. Our study is unable to determine whether the screening program is reducing the early-stage detection by CXR.

The health economics analysis is underway and considers the comparison made in the study, as well as focusing on the differences between using AI at all and not using AI, in a planned secondary analysis.

Research on the impact of AI prioritization of the CXR is very limited. A prospective study is currently underway in Glasgow, Scotland, assessing the impact of AI prioritization on time to CT among patients with lung cancer30; this is the only other randomized prospective study we are aware of.

The research on the impact of CXR AI on clinical outcomes and accuracy is also limited. Some information comes from company product specifications, which show favorable area under the curve (AUC) values across a variety of classifications31,32. In a retrospective study of 6,006 CXRs from a respiratory department, AI assistance improved radiologists’ detection performance for nodules/masses, consolidation and pneumothorax, increasing the AUC from 0.861 to 0.886 (P = 0.003; ref. 33). Another retrospective study using 2,568 CXRs acquired in a range of settings showed an AUC for radiologists of 0.71, which improved to 0.81 with AI assistance, but also noted a remarkable AUC of 0.96 with the AI model alone34. A further retrospective study of 563 emergency unit CXRs showed improvement in the accuracy of imaging diagnosis among nonradiology residents35. A small, single-site (n = 68 lung cancers), nonrandomized before-and-after 12-month service evaluation conducted in Scotland with another CXR AI algorithm could not attribute the small reduction in time to treatment (mean 51 versus 58 days) to the use of AI because multiple pathway changes were made (additional radiologist, administrative support, pathway navigator), and costs increased by £3.59 per CXR36. Another single-trust before-and-after comparison of AI found that the time from CXR to CT chest shortened from 6 to 3.6 days37. However, as with the Scottish service evaluation, there was extensive pathway redesign within the ‘after’ pathway, which the authors recognized as a significant limitation in attributing improvements to AI. Storey and colleagues also used a weak reference standard, namely lung cancer suspected on CT chest, rather than the robust reference standard of confirmed lung cancer diagnosis in the current study.

Although some of these studies show more favorable results for the classifiers shown in Table 3, they may not be directly comparable as the CXRs were acquired in a variety of settings, retrospectively selected and, in some, enriched to include specific findings34. In the present study, cases were unselected, the CXRs were requested in the context of primary care and the cases were much greater in number. In addition, we were able to show the number of downstream lung cancer diagnoses for each classification.

The LungIMPACT trial has shown that immediate AI prioritization had no impact on important clinical outcomes for patients with suspected lung cancer in the English NHS and that the potentially time-consuming and costly efforts needed to include this tool, as assessed, in clinical services are not currently required. This is likely to apply to the use of any CXR algorithm in this way, because the vast majority of cancers were detected where AI was abnormal and prioritization was therefore tested in a large number of cases. Even modest differences in accuracy are unlikely to change the primary outcome. Furthermore, this finding may extend to other settings where there is a relatively rapid review of images through the usual clinical pathway. Although the study applies directly to the English NHS, some findings may apply to other healthcare systems with established pathways. Because AI prioritization, although associated with a shortened time to CXR report, did not influence more important clinical outcomes, services would need to implement additional pathway changes to potentially replicate our previous findings. A recommendation could be that any AI-flagged abnormality prompts an immediate human review and, if confirmed, the implementation of a downstream bundle of investigations. The large-scale analysis of secondary outcomes shows the considerable potential burden of FP results, using radiologist and radiographer review as the gold standard, while also showing the considerable number of lung cancers diagnosed in this medium-to-high-risk group. This supports ongoing efforts to better manage these patients.